By not supporting TOSLINK, Nintendo has actually done a good thing. It's a bottlenecked standard that will be outdated soon, so it's better for everyone to avoid it, and Nintendo doesn't need to pay royalties for licensed formats either.

People with no tech experience seem to be praising licensed audio technologies such as Dolby. Well, that's good to have in stereo, but LPCM is the best thing. There is no need for any licensed stuff, since most of it is lossy and therefore inferior.

HDMI has the bandwidth as well as copy protection support.

All "Licensed Audio Logos" on Wii U game boxes are misleading and should not be there. That's a publisher mistake or ignorance. Instead, text stating the channel support should be printed, for example "Audio: LPCM 5.1ch" or "Audio: LPCM 2.0ch".

In the end, it's the game-specific audio file bitrates and codecs that dictate the quality you hear. Nintendo does its best to avoid bottlenecks; bad audio quality is the developer's fault. 7.1 is not supported, as we can see, but this should not be a big deal at all: quality is more important than channel count.

Even though Nintendo provides support for such throughput and you have a modern HDMI surround setup, if the game files are encoded as crappy 96 kbit/s .mp3, the audio quality will be shit no matter what you do on the external side. Blame the developer.

Since the OP is taking ages to update the thread, here is what I wanted to fix in the FAQ:

Q:

What if I use the optical output from my TV and hook that into my receiver/HTiB? Will I get surround then?

A: No. You will just get plain stereo.

This is not Nintendo's fault. It may change in the future, and it is up to TV manufacturers.

Here are also some clarifications on why TOSLINK is not as good as some think:

From lovely AVForums:

Toslink and optical do indeed have the bandwidth required for HD audio, but the S/PDIF specifications do not. The S/PDIF protocol and interface themselves do not comply with the requirements and this will not be updated. HDMI has been adopted for both HD audio and video. As far as HDCP goes then yes, S/PDIF doesn't support that either, but you can stream HD audio via multichannel component leads and that interface doesn't support HDCP. It is simply a fact that S/PDIF has ceased to be updated and will never be updated to support higher bandwidths. Why would they update it if the update would only be applicable to new devices with a revised interface? HDMI will already be present on such devices, and there would be no need for the revision or expansion of S/PDIF on them.

The problem is not the optical media, which has adequate bandwidth as is proven by the adoption of optical connectors in the computer industry for expensive systems, but the audio specification. The specification for S/PDIF does not mandate support for the bandwidth required for 8 channels of 24 bit, 192kHz audio (needs 36.86 Mbit/s), or the protocols. HDMI does. The result is that nobody implements an interface, nor could they (no specification ==> no standard ==> no interoperability ==> no work). Updating the spec is possible, but since everything would need to be replaced, it's simpler to mandate a new connector that makes it obvious.

Optical media does indeed have the bandwidth for full HDMI. As a result, HDMI over fibre is both specified and available.

TOSLink is a registered trademark of Toshiba (TOShiba-LINK), which is why I've discussed the standard optical link. Although TOSLink's bandwidth is adequate for HD audio, it is inadequate for the full HDMI (including video).
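For anyone who wants to check the 8-channel, 24-bit, 192 kHz figure from the quote, here is a quick sketch in Python. It only multiplies channels, bit depth and sample rate, so it is the raw LPCM payload and ignores any framing or protocol overhead. The function name is made up for this example.

```python
def lpcm_bitrate_bits_per_second(channels: int, bit_depth: int, sample_rate_hz: int) -> int:
    """Raw LPCM payload rate in bits per second (no framing overhead counted)."""
    return channels * bit_depth * sample_rate_hz


# The "HD audio" case from the quote: 8 channels, 24 bit, 192 kHz.
hd_audio = lpcm_bitrate_bits_per_second(channels=8, bit_depth=24, sample_rate_hz=192_000)

print(f"{hd_audio / 1_000_000:.2f} Mbit/s")      # megabits per second
print(f"{hd_audio / 8 / 1_000_000:.2f} MB/s")    # megabytes per second
```

That works out to about 36.86 megabits per second, which is roughly 4.6 megabytes per second, so mind the unit when comparing against link speeds.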

It's because of the S/PDIF socket/plug, not the actual TOSLINK cable, which would have had enough bandwidth for 5.1ch, but only just, and is not comparable to the fiber-grade speeds of other cables that exist.

This has to be a joke standard. Fiber optics is what TOSLINK is made of, and it's also what FTTH is made of, and FTTH is the future of ultra-high-speed internet. TOSLINK is old, throw it away.

So basically, what every one of you should do instead is go spam developers to write better audio code. Most developers don't give a shit about audio, and their audio engines are so crap that if they increase audio quality, the framerate goes down a lot. This will be a lot easier with the Wii U's audio DSP, and I just cannot wait to hear the juice; this has to be the best console ever for now. It's also up to sound designers to actually care enough not to over-compress the audio and to put high-bitrate Ogg files into the game.

A disc size of 25 GB will also help immensely. All those games that use only a fraction of that could sound a lot better. They have a lot of space, so why not fill it with great audio?
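To get a feel for how much audio that disc space could hold, here is a rough back-of-the-envelope sketch. It assumes 25 GB means 25 × 10^9 bytes and, unrealistically, that the whole disc is audio; the 16-bit, 48 kHz formats are just example settings, not anything confirmed for a particular game.

```python
DISC_BYTES = 25 * 10**9  # assumption: decimal gigabytes, entire disc used for audio


def hours_of_audio(bits_per_second: int, disc_bytes: int = DISC_BYTES) -> float:
    """How many hours of audio at a given bitrate fit in disc_bytes."""
    bytes_per_second = bits_per_second / 8
    return disc_bytes / bytes_per_second / 3600


# Example formats (illustrative choices only).
formats = {
    "LPCM 5.1, 16-bit, 48 kHz": 6 * 16 * 48_000,
    "LPCM 2.0, 16-bit, 48 kHz": 2 * 16 * 48_000,
    "MP3 at 96 kbit/s": 96_000,
}

for name, bitrate in formats.items():
    print(f"{name}: {hours_of_audio(bitrate):.1f} hours")
```

Even fully uncompressed 5.1 comes out at roughly 12 hours for a whole disc under these assumptions, so a game using a fraction of the disc has room for much better audio than 96 kbit/s MP3.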

Source quality is way more important, and it's the first thing you need to have before you worry about outputting it, so take your horses to what really matters.

Wii U already has a dedicated chip for audio processing. Of course Nintendo went for the cheapest option. It seems that with one model higher, Dolby Digital on-the-fly encoding would have been possible using that chip alone.

You're wrong. LPCM is already full-quality. Most of this thread's Nintendo bashing is invalid because it comes from a lack of understanding.

My issue was more on the video processing side, not audio. 320x240 sources look pretty bad at 1080p, so I was wondering if anyone had experience with that particular scaler.

What do you think of the Take 5.1s, Mejilan? I've heard some good things; what made you go with them?

You would want a better video scaler than the TV.

Well, the TV should display native 720p if that's what the Wii U outputs. I am not fond of upscaling of any kind, whether from the TV, from the console, or in software from the game itself (e.g. Black Ops 2).

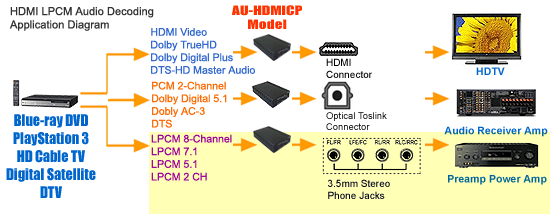

Yeah, that's because SPDIF doesn't support enough bandwidth for 5.1 LPCM audio.
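A small sketch of why that is, assuming the usual consumer S/PDIF layout where each frame carries two PCM subframes (one left, one right sample). The constant and function names are made up for the example, and 16-bit, 48 kHz is just a typical setting.

```python
SPDIF_PCM_CHANNELS = 2  # assumption: one consumer S/PDIF frame = two PCM subframes (L and R)


def lpcm_bitrate(channels: int, bit_depth: int = 16, sample_rate_hz: int = 48_000) -> int:
    """Raw LPCM payload rate in bits per second."""
    return channels * bit_depth * sample_rate_hz


def fits_as_lpcm_over_spdif(channels: int) -> bool:
    """True if every channel gets its own PCM slot in a two-subframe frame."""
    return channels <= SPDIF_PCM_CHANNELS


stereo = lpcm_bitrate(channels=2)
surround = lpcm_bitrate(channels=6)  # 5.1 = six discrete channels

print(f"2.0 LPCM: {stereo / 1_000_000:.3f} Mbit/s, fits: {fits_as_lpcm_over_spdif(2)}")
print(f"5.1 LPCM: {surround / 1_000_000:.3f} Mbit/s, fits: {fits_as_lpcm_over_spdif(6)}")
print(f"5.1 needs {surround // stereo}x the stereo payload")
```

So uncompressed 5.1 needs three times the payload of stereo at the same sample rate and bit depth, and there are only two PCM slots per frame to put it in. That's why surround over optical relies on a compressed bitstream, which the Wii U doesn't output.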