For what it's worth, a game with a really large internally rendered dynamic range (and FFXV has a *huge* and highly realistic dynamic range) will often show this kind of thing. A primarily SDR game that has been converted to HDR often will not.

So for a moment assume an SDR TV caps out at 100 nits of brightness (roughly right), whereas a high-end HDR TV can push 1000+ nits.

The game internally might be rendering parts of the image that really are that bright, way over 1000 nits; so with a good HDR TV, the game just sends that image 'raw' to the TV. If the TV can't reach the brightness the signal asks for, it'll have to either clip the bright parts or 'tonemap' them.

When you tonemap an image, you compress the brightest parts into the limited available range. So as a hypothetical example, if the TV can only display 1000 nits but gets sent a signal up to 2000 nits, it'll need to compress it. The 2000 nit (max) input becomes 1000 nits output (max), 1300 becomes roughly 900, and 800 stays 800. The top 1200 nits of the input (800 to 2000) is compressed into the top 200 nits of the output (800 to 1000).
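To make the hypothetical numbers concrete, here's a rough sketch of the two options a TV has. This is not what any real TV does (real curves are smooth and vendor-specific); the function names, the 800 nit knee and the straight-line compression above it are all assumptions for illustration.

```python
def clip(nits, display_peak=1000.0):
    """Hard clip: everything above the display peak becomes the peak."""
    return min(nits, display_peak)


def knee_tonemap(nits, display_peak=1000.0, source_peak=2000.0, knee=800.0):
    """Hypothetical tonemap: pass through below the knee, then squeeze
    the range [knee, source_peak] linearly into [knee, display_peak]."""
    if nits <= knee:
        return nits  # darker content is left untouched
    nits = min(nits, source_peak)  # nothing above the assumed source peak
    t = (nits - knee) / (source_peak - knee)  # 0..1 position in the highlights
    return knee + t * (display_peak - knee)


for level in (800, 1300, 2000):
    print(level, "clip:", clip(level), "tonemap:", round(knee_tonemap(level)))
```

With a straight line above the knee, 1300 lands at about 883 rather than exactly 900, which is close enough for the point being made. With plain clipping, 1300 and 2000 both come out as 1000, so the difference between them is gone.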

By doing this you can still see some detail in the extreme highlights and you avoid clipping, but it will look washed out and low contrast in the extreme brights.

Point is, the TV chooses what it does.

However, with an SDR TV the game knows the hard limit is always ~100 nits, so the *game* always performs a (much more aggressive) tonemap.

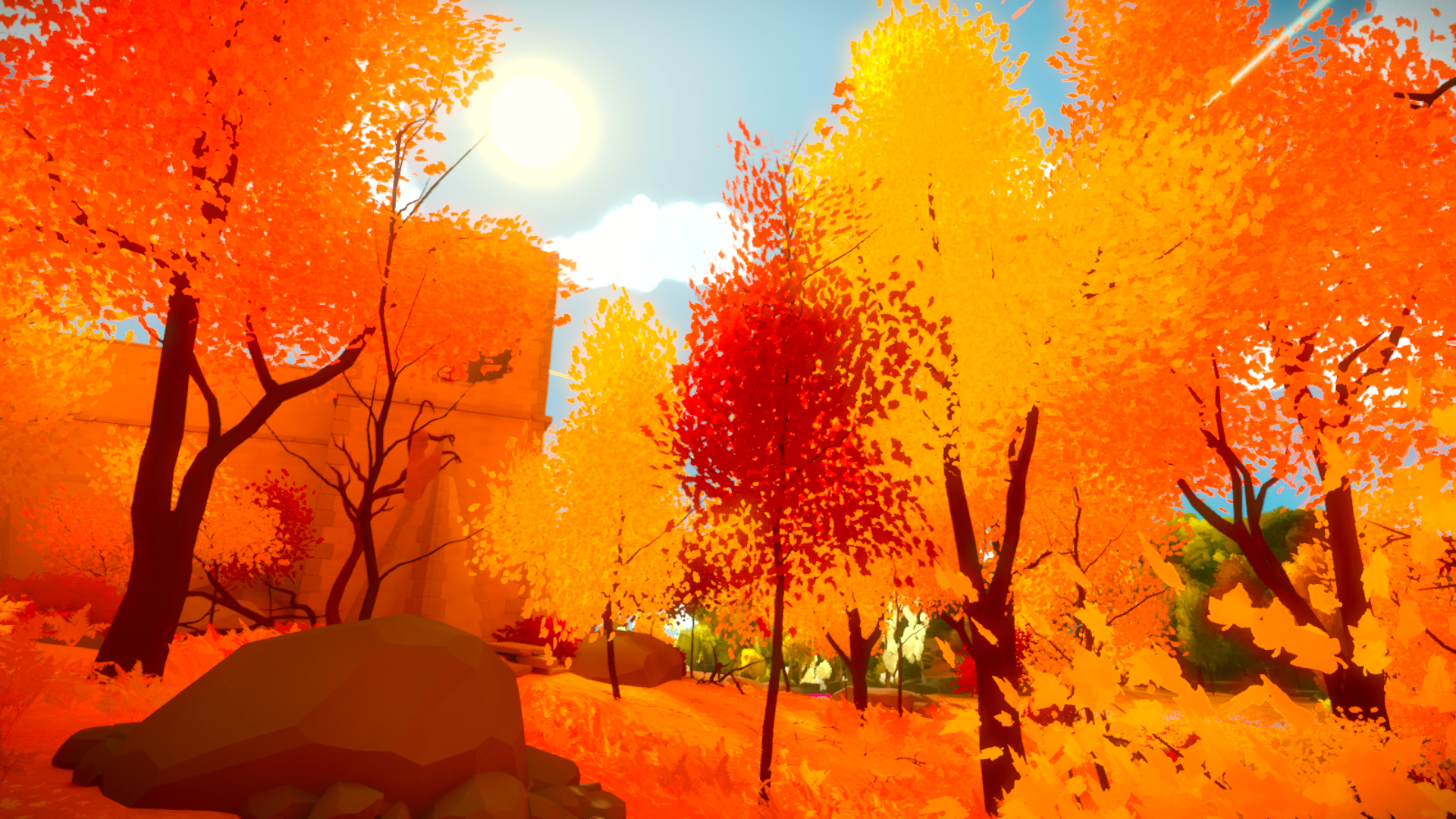

This is what you are seeing here: the SDR image is tonemapped by the game. The brights are all compressed into SDR's extremely limited range, so they lose contrast and saturation, but they *aren't* clipped, so they are still visible. The HDR capture is the opposite: you can't see anything in the sky even though the HDR source has information there, because it's all clipped to white and looks really bad.
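As a toy illustration of why the sky survives in the SDR shot but not in the clipped HDR capture, here's a small sketch. The sky brightness values are made up, and the SDR curve is the well-known extended Reinhard operator standing in for whatever FFXV actually uses (which I don't know); all names here are hypothetical.

```python
def hard_clip(nits, display_peak=1000.0):
    """What the clipped HDR capture effectively does to over-range values."""
    return min(nits, display_peak)


def sdr_tonemap(nits, sdr_peak=100.0, scene_peak=4000.0):
    """Extended Reinhard, used as a stand-in for the game's SDR tonemap.
    Maps scene_peak to sdr_peak and compresses everything below it."""
    x = nits / sdr_peak        # brightness relative to SDR white
    w = scene_peak / sdr_peak  # brightest scene value, same units
    y = x * (1.0 + x / (w * w)) / (1.0 + x)
    return y * sdr_peak


# Made-up brightness samples from a bright sky, in nits.
sky = [1500.0, 2500.0, 4000.0]

clipped = [hard_clip(v) for v in sky]
mapped = [sdr_tonemap(v) for v in sky]

print("clipped:", clipped)                          # every sample is identical
print("tonemapped:", [round(v, 1) for v in mapped])  # samples stay distinct
```

The clipped samples all end up at the same value, so there's no detail left to see. The tonemapped samples are squeezed very close together near 100 nits (hence the low contrast), but they're still different from each other.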

That's how a proper HDR implementation with excellent source dynamic range being sent to the TV in an unfiltered way *should* look.