G-SYNC - New nVidia monitor tech (continuously variable refresh; no tearing/stutter)

- Thread starter artist

I don't think it's been mentioned here yet, but those demos were running on fucking 760s...

That was to drive home the point that, as much as G-sync helps over 60 frames, it makes under 60 frames look just as good. This is important for things like Steambox that want to run affordable hardware and look reasonably good doing it.

When Carmack lights up like a Christmas tree at the possibilities, you have to give Nvidia credit.

So what's the upper limit of the refreshing? 120? 144?

144. With G-sync and 144, they said anything beyond that would be a total waste because it's under 2ms or something like that.

Oh. Not impressed tbh. This is nice for super high end dudes, I guess....?

You need to experience screen tech in person to possibly be impressed. Dunno, it seems aimed at mid to high end to me. The screen is not that expensive.

plagiarize

Banned

So what's the upper limit of the refreshing? 120? 144?

144 hz.

and... damn Dark... really? I thought you'd be all over this!

I like the idea but I use a TV for PC gaming. Furthermore, this seems to be limited to LCDs right now which makes it worthless to me.

"sure, the first rocket in space is okay i guess, but what i'm really interested in is going to the moon".

A lot of people don't realize how crazy big this is for quality.

They should read the Carmack and Sweeney quotes.

Tim Sweeney, creator of Epic’s industry-dominating Unreal Engine, called G-SYNC “the biggest leap forward in gaming monitors since we went from standard definition to high-def.” He added, “If you care about gaming, G-SYNC is going to make a huge difference in the experience.” The legendary John Carmack, architect of id Software’s engine, was similarly excited, saying “Once you play on a G-SYNC capable monitor, you’ll never go back.” Coming from a pioneer of the gaming industry, who’s also a bonafide rocket scientist, that’s high praise indeed.

plagiarize

Banned

I should clarify. When I said input lag will be similar to the input lag you experience with v-sync turned off... that would be for playing on a 144 hz display with v-sync turned off.

Because 1/144 of a second is less than 1/60th.
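To put numbers on that, here is a minimal sketch that converts a refresh rate into the time between refreshes. The helper name `refresh_interval_ms` is made up for illustration; the arithmetic is just 1000 ms divided by the rate.

```python
def refresh_interval_ms(hz: float) -> float:
    """Time between two consecutive refreshes, in milliseconds."""
    return 1000.0 / hz


# Compare the common fixed refresh rates mentioned in the thread.
for hz in (60, 120, 144):
    print(f"{hz} Hz -> {refresh_interval_ms(hz):.2f} ms per refresh")
```

At 144 Hz a finished frame waits at most about 6.94 ms for the next refresh, against about 16.67 ms at 60 Hz.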

and to put that into perspective for people not getting it, we've had high def monitors on PCs for a very very long time.

Future PhaZe

Member

Wow, this sounds cool, but my question is: will it work if I have an AMD card? 'Cause I just recently switched to AMD and don't feel like going back...

no

Maybe a dumb question: will this help make games running <60fps appear smoother?

Yes

Future PhaZe

Member

Maybe a dumb question: will this help make games running <60fps appear smoother?

yes

it's going to make that zone between 30fps and 60fps infinitely more tolerable

So there seems to be no chance tech like this comes to HTPC gamers who play on their big screens?

I recently started playing Skyrim on my PC. The game was stuttering big time, so I dloaded Dxtory to lock the frame rate at 60. That fixed the problem, but tearing was introduced, so I enabled VSync through RadeonPro. That added heavy input lag, so I enabled triple buffering to address that issue.

Since then the game is running pretty well, but it would be awesome to have this tech instead to ensure that I get the best results in all three areas. There's no way in the world I'll trade in my comfy couch experience by going back to monitors, so is it technically impossible for some third party to design similar tech that will work as an add-on (external, of course) to HDTVs?

http://www.geforce.com/whats-new/ar...evolutionary-ultra-smooth-stutter-free-gaming answers a lot of your questions. If not, post em and I'll answer if I can.

Maybe a dumb question: will this help make games running <60fps appear smoother?

Extremely so.

Can I buy the BenQ XL2411T right now without worrying about it being supported? (DIY kit)

I've missed a few pieces of info.

Can I just order my BenQ XL2411T now and buy the solution later and DIY?

It's only for a few Asus monitors at the moment. Maybe there will be some kits for other monitors in the future, but nothing is in place. AndyBNV answered my question in the main thread:

http://www.neogaf.com/forum/showpost.php?p=86539078&postcount=1183

Oh. Not impressed tbh. This is nice for super high end dudes, I guess....?

Actually it will improve the experience drastically for people whose systems can't hold a minimum of 60/120 FPS. This is extremely relevant for every gamer. You can then let your game run somewhere between 30 and 60 and get neither tearing nor stuttering. This is the biggest jump in quality since HD and imo way more impressive than 4k.

Maybe a dumb question: will this help make games running <60fps appear smoother?

When the monitor and card hold hands it makes the experience smooth and almost seamless. Frames per second matter far, far less.

Future PhaZe

Member

hm, 30 hz is the minimum with g-sync

wonder what happens if the frames dip below that

It's only for a few Asus monitors at the moment. Maybe there will be some kits for other monitors in the future, but nothing is in place.

I saw BenQ with Asus and two other manufacturers on that reveal pic, that's why I wondered about it.

This is something for the enthusiast PC gamer, right? It's not something that's going to make a massive difference for the average dude?

It's probably better for the average dude, assuming you're talking an average dude with the same eye for detail but a smaller wallet. Most of the excesses of enthusiast PC gaming are based on pegging 60 (or 120) in worst-case scenarios to avoid all of the equally bad solutions to framerate drops; this changes the ugly-ass jerkiness, input lag, and cascading wasted frames that you get on a midrange rig into a slight loss of temporal resolution.

I haven't gone green since my TNT2, because of that weakness in the midrange every time I've upgraded, but I'm pretty sure it's time now.

Future PhaZe

Member

Actually it will improve the experience drastically for people whose systems can't hold a minimum of 60/120 FPS. This is extremely relevant for every gamer. You can then let your game run somewhere between 30 and 60 and get neither tearing nor stuttering. This is the biggest jump in quality since HD and imo way more impressive than 4k.

I'd have to agree.

Can I buy the BenQ XL2411T right now without worrying about it being supported? (DIY kit)

No. At this time the DIY mod is only designed for the ASUS monitor. I have no info on it or another version being released for other monitors.

Relaxed Muscle

Member

IT'S FULL OF STARS

And I fixed my caps lock key.

When I calm down I will write a post explaining it and why it's the best thing in gaming display technology in a decade. Seriously.

Will you have the same reservations as with Mantle, it being proprietary and all that, or will the excitement wash away any concern?

gutterboy44

Member

Holy shit, this is amazing. I am glad I didn't pull the trigger on another 120hz panel yet. Will definitely be getting a monitor with this built in next year. Fuck yes! I hate fucking with v-sync and tearing is the god damn worst.

csquared587

Member

Oh wow, that Asus 144hz monitor is way more affordable than I thought it would be. I might pick that up after I recover from my next gen spending. I'm guessing this means anything from 30-144 fps will be tolerable. Sounds cool and might make me try to move up from 60hz.

djplaeskool

Member

This is some seriously sexy tech.

EatChildren

Currently polling second in Australia's federal election (first in the Gold Coast), this feral may one day be your Bogan King.

Made some images for a visual representation of tearing, lag/stutter, and what G-Sync does. There's probably some inaccuracies (I'm not overly knowledgeable on the tech), but it should at least give people an idea.

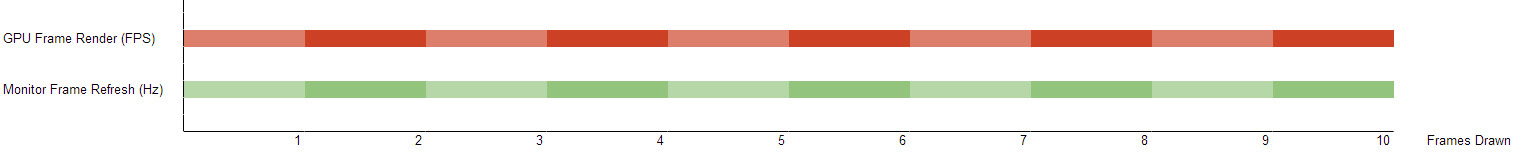

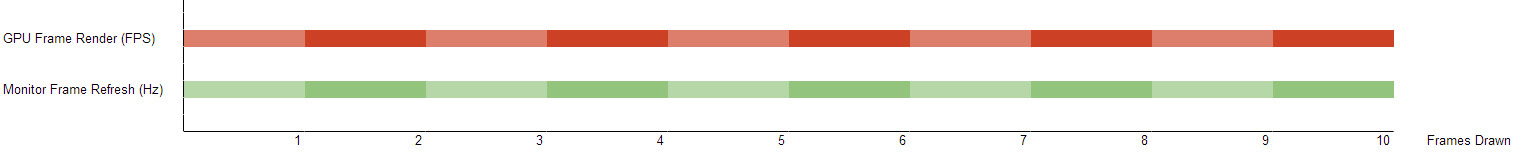

Image 1: Perfection.

In graphics rendering you have two forces at play. Firstly, your hardware/GPU is rendering each frame of the game. We call this framerate. And the other is your monitor/display, which is refreshing display frames. We call this the refresh rate, measured in hertz (Hz). Traditionally your monitor's Hz is locked. Do you have a 60Hz monitor? What about 120Hz? Or 144Hz? This number tells you how many times it forcibly refreshes itself every second.

And so, in a perfect world, for every single refresh of the monitor's frames the GPU would render a single frame of game data. They would work in perfect unison together.

But this doesn't happen, not unless you have insane hardware or lower specs. We see it on PC, we see it on consoles, but framerate fluctuates. Your GPU does not render a locked framerate most of the time. And even when it is hitting that smooth 60fps, something can happen in the game that drops it. Big explosion out of nowhere. Building crumbling. The hardware is stressed, and the framerate drops. It might even drop below the monitor's refresh rate.

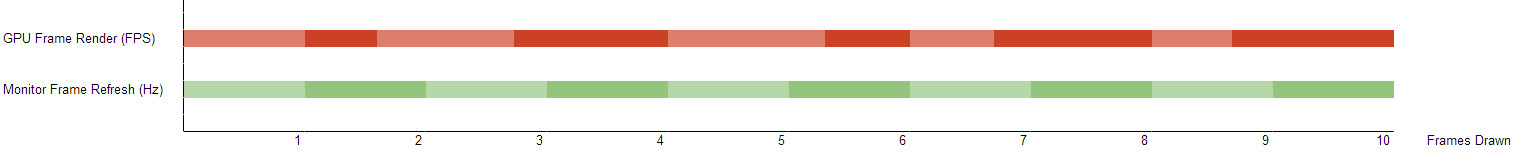

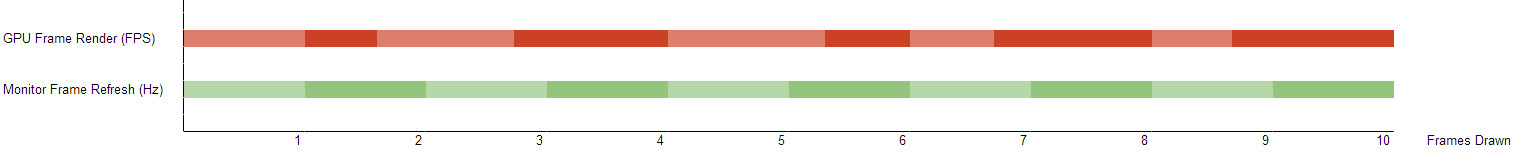

Image 2: Tearing

So what happens when our framerate is moving all over the place, but our monitor's refresh rate is locked? We get something called "tearing". Long and short of it, this is when the frames being rendered by the GPU are not in sync with the monitor's locked frame refresh rate. We get overlaps between the two. See image below.

This means that when the monitor tries to refresh a frame, sometimes the GPU has two frames of overlapped data. This is what causes that big "tear" horizontally through the screen of some games.
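A rough way to see where the tear lands, as a toy model rather than how any driver actually works: assume the monitor draws the screen top to bottom over one full refresh, and that without Vsync the GPU swaps in frame `k` the moment it is done, at `k / fps` seconds. The function name `tear_row` and the 1080-row screen are assumptions for illustration.

```python
from typing import Optional


def tear_row(frame_index: int, fps: int, hz: int = 60, rows: int = 1080) -> Optional[int]:
    """Screen row where the tear appears when frame `frame_index` is swapped in.

    Toy model: scanout takes the whole refresh interval (blanking is ignored)
    and frames arrive at a perfectly steady `fps`.
    """
    # Measured in refreshes, the swap happens at frame_index * hz / fps.
    # The fractional part of that is how far down the screen the scanout is.
    remainder = (frame_index * hz) % fps
    if remainder == 0:
        return None  # swap lands exactly on a refresh boundary: no tear
    return remainder * rows // fps


# A 45fps game on a 60Hz monitor with Vsync off.
for k in range(1, 5):
    print(f"frame {k}: tear at row {tear_row(k, fps=45)}")
```

In this model the 45fps-on-60Hz case tears a third of the way down, then two thirds of the way down, then not at all, and repeats. Real games have uneven frame times, so the tear wanders around instead.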

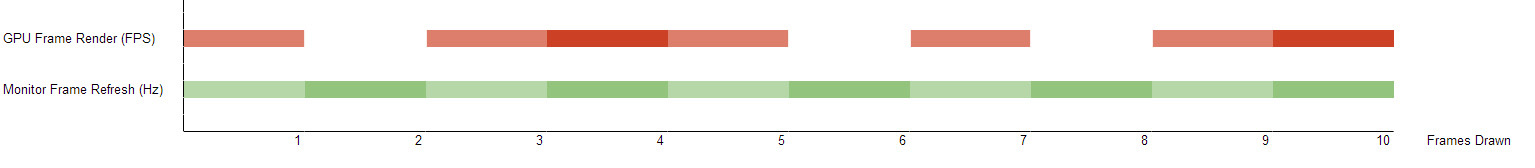

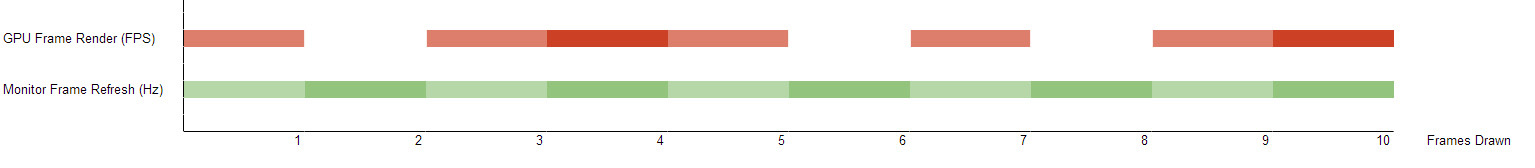

Image 3: Vsync Solution! Also Stutter/Lag

People fucking hate tearing, so a solution was found: Vsync. Vsync acknowledges the refresh rate of your monitor. 60Hz? 120Hz? And it says "I'm only going to send GPU rendered frames to the beat of that refresh rate!". Everything is synchronised! But this has another problem. As we just mentioned, games rarely run at locked framerates. So what happens when my 60fps game drops to 45fps, but my monitor is 60Hz, and I'm using Vsync?

Essentially, the GPU forces itself to 'wait' on each frame before the monitor refreshes. Remember, the monitor refresh rate is locked. It stays beating to the same rhythm, regardless of how fast or slow the GPU is spitting out frame data. In this case, our GPU is rendering frames slower than the monitor's Hz, but Vsync is forcing it to play catch up. If the monitor tries to draw a frame, but no new frame exists, it simply draws the last one again, doubling up for that refresh. Imagine this happening over and over in a game. This would give the impression of "stuttering". This also introduces input lag from peripherals, as the GPU is constantly trying to play catch-up to the monitor's refresh rate.
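The 45fps-on-60Hz case can be sketched in a few lines. This is a simplified model with assumptions of its own: frames arrive at a perfectly steady rate, and the GPU keeps rendering while it waits (closer to triple buffering than to plain double-buffered Vsync, where the GPU would stall). The name `vsync_hold_pattern` is made up for illustration.

```python
def vsync_hold_pattern(fps: int, hz: int, frames: int) -> list[int]:
    """For each frame, how many monitor refreshes it stays on screen under Vsync."""
    # Frame k is ready at k / fps seconds, but it can only appear on the first
    # refresh tick at or after that moment, which is ceil(k * hz / fps).
    # -(-a // b) is integer ceiling division, so no floating point is involved.
    ticks = [-(-k * hz // fps) for k in range(frames + 1)]
    # The hold time of a frame is the gap until the next frame takes over.
    return [later - earlier for earlier, later in zip(ticks, ticks[1:])]


print(vsync_hold_pattern(45, 60, 6))  # [2, 1, 1, 2, 1, 1]
print(vsync_hold_pattern(60, 60, 4))  # [1, 1, 1, 1]
```

At a locked 60fps every frame is shown for exactly one refresh. At 45fps one frame in three is held for two refreshes, and that uneven 2-1-1 rhythm is the stutter.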

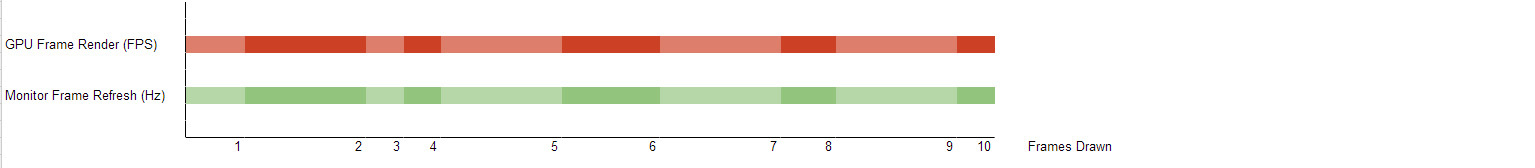

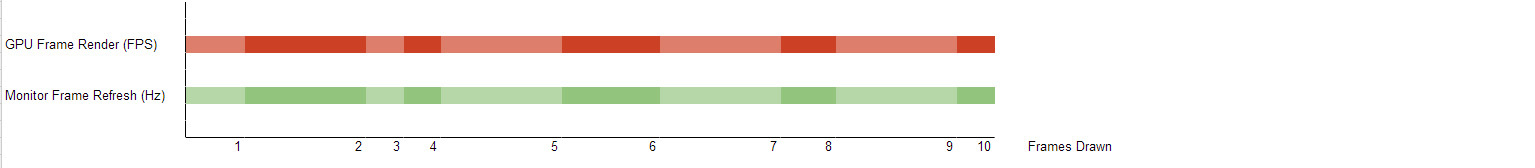

Image 4: G-Sync Solution

G-Sync is a hardware level solution inside your monitor that communicates directly with the GPU. Instead of Vsync, G-Sync says "why don't we change the monitor refresh rate too?".

So no matter how fast or slow the GPU is rendering frames, the monitor is never locked to a particular refresh rate. Not stuck at 60Hz, even if the GPU is stuck on 45fps. In this case, the monitor would change to 45Hz to match the framerate. And if the GPU suddenly boosts to 110fps? The monitor boosts to 110Hz too.

Every frame is drawn perfectly in sync with the monitor. The monitor doesn't ever have to play catch up to the GPU (tearing), nor does the GPU ever have to play catch up to the monitor (stutter/lag).
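As a toy model of that idea (not Nvidia's actual implementation), the monitor's refresh rate just follows the framerate inside the range the panel supports. The 30 and 144 bounds are the figures mentioned elsewhere in this thread; clamping outside that range is an assumption made only to keep the sketch simple, since what the hardware really does below the minimum isn't covered here.

```python
MIN_HZ = 30.0   # lower bound mentioned in the thread
MAX_HZ = 144.0  # upper bound mentioned in the thread


def gsync_refresh_hz(fps: float) -> float:
    """Refresh rate a variable-refresh monitor would run at for a given framerate."""
    # Inside the supported window the monitor simply matches the GPU.
    # Outside it we clamp to the nearest bound (an assumption, see above).
    return max(MIN_HZ, min(MAX_HZ, fps))


for fps in (45, 110, 200):
    print(f"GPU at {fps}fps -> monitor at {gsync_refresh_hz(fps):.0f}Hz")
```

Inside the window each frame is held for exactly as long as it took to render, so there is no doubled-up frame and no mid-scan swap.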

Narayan

Member

This is pretty fucking huge. Next monitor I get is definitely going to have this equipped.

This explicitly says DisplayPort only.

http://www.geforce.com/whats-new/ar...evolutionary-ultra-smooth-stutter-free-gaming answers a lot of your questions. If not, post em and I'll answer if I can.

Thus, for Triple Monitor (or Surround) you'll need Tri-SLI? Or DVI to DisplayPort adapters? I assume the latter.

Can similar technology ever make its way inside consumer TVs, for example some BRAVIA LCD that would work in conjunction with a PS4 in some special "mode"?

It's possible. I'm sure patents are flying around on this tech but the crux of G Sync is that you have purpose built cards and purpose built monitors walking hand in hand. There's no reason this tech can't filter down to consoles eventually.

This is huge for 4k gaming, because you can run @4k at less than 60fps and still have a great experience. It effectively moves the hardware goalposts forward a year or two.

dragonelite

Member

Take my tear-filled money, Nvidia. Just when you think team red is making a move, team green outdoes them..

Future PhaZe

Member

*checks FRAPS*

"wtf...I'm running at 35 fps...eh, who gives a shit"

IN MY VEINS

It's possible. I'm sure patents are flying around on this tech but the crux of G Sync is that you have purpose built cards and purpose built monitors walking hand in hand. There's no reason this tech can't filter down to consoles eventually.

This is huge for 4k gaming, because you can run @4k at less than 60fps and still have a great experience. It effectively moves the hardware goalposts forward a year or two.

yeah. this will be everywhere in a few years. probably the biggest quality of experience improvement for the price of the tech that we'll see for a long time.

i wonder how quickly they can miniaturise it for rift?

PeterVenkman

Member

This is fantastic. One of the reasons I was holding off on upgrading my monitor was that I couldn't run current/soon to be released games at a stable 60 frames at 1080p, let alone a higher resolution/fps. I cannot wait for this. Screen tearing is the bane of my existence.

EDIT:

nomis said: My 570 is too old :'(

So is mine - but this, to me, is worth an upgrade. I know some people that aren't bothered by screen tearing, stutter or fluctuating frame rates. It drives me crazy though.

Krusenstern

Banned

Only thing I need to know now Nvidia...

How much?

velociraptor

Junior Member

This sounds good as I hate both screen tearing and input lag.

Indeed. Only problem is I play all my games (including PC) on a 40" LCD TV.

Bargh.

Hopefully similar tech is developed in TVs in the near future.