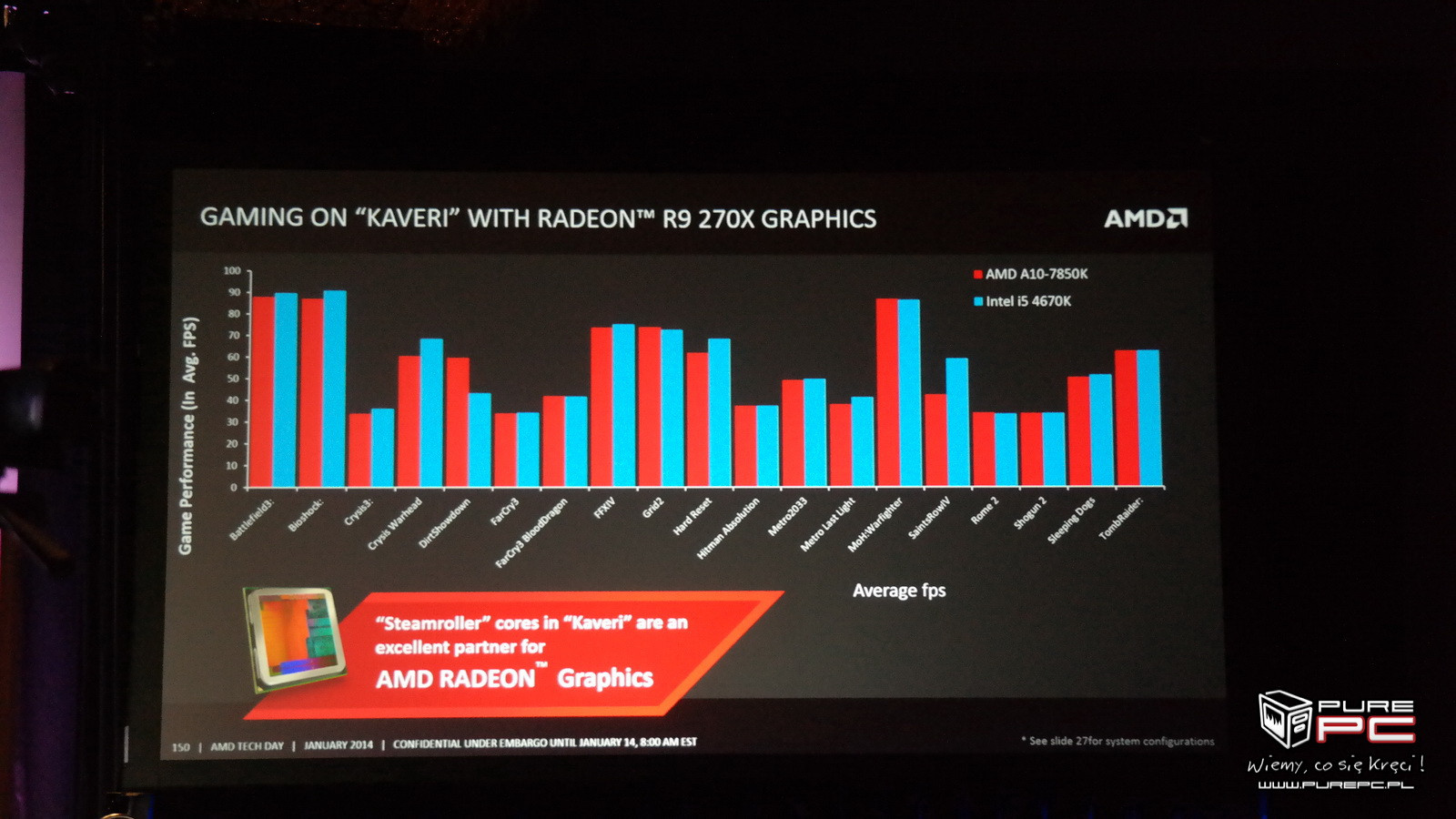

Nah, it doesn't have to be any obvious "cheating". If you carefully design the testing scenario and select the right CPU for the comparison, I can totally see 45% (or more!) happening. The real question is how much of that remains in a normal game scenario (and, for most of us, with a fast Intel CPU).

I'm shocked people believe all this, to be honest. Once you've been following PC gaming and hardware for long enough, you see these kinds of claims every couple of years.

Someone actually clued up will investigate and identify how Mantle does X, Y and Z completely incorrectly, causing buffer blah blah something or other and majorly bad AA or really shitty lighting in some situation, or something like that.

I could believe a proprietary API could get some benefits, but 45%? Overnight? Suuuuuuureeee

That's another thing, I expect the difference to get smaller at higher rendering resolutions.

In hard GPU-limited situations, it doesn't seem that Mantle will increase your fps.

Basically, I expect Mantle to be really advantageous at the low-end, particularly for APUs, since they are generally more CPU limited (and since you usually target lower res at that level), decent at the mid-end and less significant at the high-end/enthusiast level (where you generally use a really fast Intel CPU and target really high IQ). We'll see how wrong or right this expectation is once a set of independent benchmarks is out.
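To put rough numbers on that expectation, here is a toy model, not a benchmark. It assumes CPU and GPU work overlap completely, so the frame time is just whichever side is slower, and it assumes a lower-overhead API only shrinks the CPU side. The function names, the millisecond figures and the 0.5 scale factor are all made up for illustration; none of them come from AMD or from any measurement.

```python
def fps(cpu_ms, gpu_ms):
    """Frames per second when the slower of CPU and GPU sets the frame time."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def relative_gain(cpu_ms, gpu_ms, cpu_scale):
    """Fractional fps gain if only the CPU cost per frame is scaled by cpu_scale."""
    before = fps(cpu_ms, gpu_ms)
    after = fps(cpu_ms * cpu_scale, gpu_ms)
    return after / before - 1.0


# Hypothetical per-frame costs in milliseconds (illustrative only).
scenarios = {
    "low-end APU, low res (CPU-limited)": (20.0, 12.0),
    "fast CPU, high res/IQ (GPU-limited)": (6.0, 16.0),
}

for name, (cpu_ms, gpu_ms) in scenarios.items():
    gain = relative_gain(cpu_ms, gpu_ms, cpu_scale=0.5)
    print(f"{name}: {gain:.0%} more fps")
```

In this sketch the CPU-limited case gains a lot because the bottleneck moves from the CPU to the GPU, while the GPU-limited case gains nothing. Real games sit somewhere in between and vary from scene to scene, which is why the independent benchmarks matter.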

It won't run on an Nvidia card. If your question is "what if NV implemented Mantle support", then it's hard to say, since we still don't really know just how focused on GCN-type hardware Mantle is. And of course, for many reasons this is very unlikely to happen.

Would it still beat DirectX on an Nvidia card?