That's my point. Not only do we not know if he was even vetted (as far as we know), but we don't even know what that means.

Yet people are taking his info as gospel.

Would he get banned if he falsely claimed to be vetted? Just wondering.

Not with sustained clock speeds.

You have valid points re: architecture, although the real world results have yet to be seen. If RAM bandwidth is really only 25.6 GB/s, I hate to use strong words, but that's pathetic. Worse than Wii U w/ its eDRAM pool used efficiently. We'll see what they can do with tiling and a small pool of on-die SRAM perhaps. Bandwidth may very well be the bottleneck the poster on anandtech was speaking of. This is all rumor, of course.

What has he been right about before? What proof do we have he was actually "vetted?" And what's the extent of the "vetting" process?

10k's still around

From the source of the figure that I posted.

http://www.anandtech.com/show/8718/the-samsung-galaxy-note-4-exynos-review/6

I leave it up to the Mods to make that call. He's not banned and I'm sure they're reading this thread, so they must figure he's in a position to know things. We've seen folks banned for less and in short order. Now, whether what he knows is right is something else, but Emily has backed up information he's mentioned as well.

He has posted fairly frequently over the past few days and his posts have been sourced a lot, so if he is lying about going to the mods before posting this info we would know by now (a mod would tell us/ban him).

Now, it's true that we have no way to know if his info is legit, but mods typically require insiders to prove that they are in a position to know this info. Not that the info itself has to be accurate. So obviously all insider posts should be taken as such, with a grain of salt.

If we're taking him at his word simply based on him not being banned...

Forget this--I'm going shopping to pick up some Analytical hats for everyone to wear, because gosh darn do some people here need them

Considering RAM is the one thing Nintendo has never been shy about overdoing, I really don't think we should worry about it.

10k was also vetted or approved or whatever it was to some degree as well, as I recall...and whatever degree that was was enough for people to take him seriously too

10k has had previous info on here after having it approved by mods, only for it later to be confirmed fake.

The guy's absolutely crazy.

Because 10k literally gets DM'd by random Twitter people pretending to be insiders and then posts it as insider news.

Like I said, all insider rumors/reports are just rumors. We shouldn't take any of them as fact, vern's included. But why shouldn't we be able to discuss these rumors?

Like I said there's no reason to believe Nintendo will be using A57 on the old 20nm process, if the final retail unit uses A57 at all.

Ideaman had real info, he was just a little too optimistic, just like everyone else. I don't remember Ideaman being banned during WUST either.

I never said they can't be discussed; they certainly can be, in the sense that any number thrown out could be. What I warned against was taking them as gospel, which some seemed to do, based on their replies.

Actually, I have been wondering for a while now why this thread was even still open, considering the source of the information in the OP. Yet, the more that I think about it, we have numerous sources saying that Tegra X1 powered a version of the dev kits. Why should we expect a drastic change in the final product? Pascal is basically a shrunken down Maxwell, so that's fine. But to expect double the bandwidth? A different CPU architecture? Seems unrealistic this close to launch. Now, we have a couple of insiders here and Emily Rogers saying that this sounds accurate. I didn't want it to be true, but I'm willing to concede it just might be.

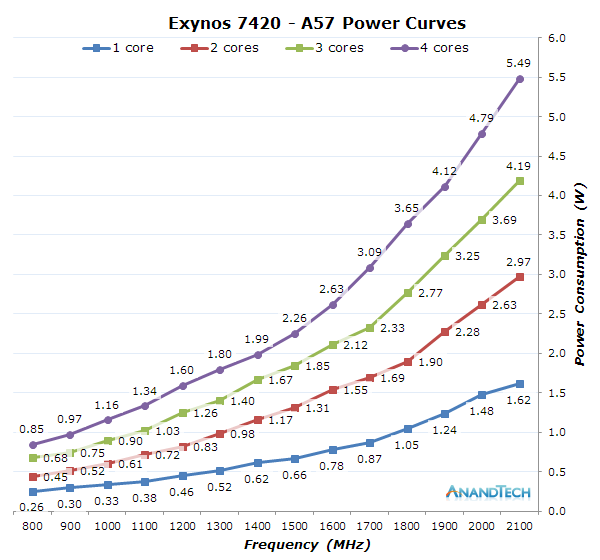

5W just for the CPU is already a lot for a handheld. A smart phone usually has a TDP of 3-4 watts for the entire SoC.

Calm down, man.

That is an entire SoC with 4 of its CPU cores maxed out at 2.1 GHz. Switch will also be much bigger than your standard smartphone.

Come again? I'm merely reminding some--such as yourself apparently--to be skeptical. If you took that as anything other than that, you might be in this a little too deep yourself

Yes and I knew these people were deluding themselves.

Have you been reading these threads? Yes, some people absolutely expect this thing to be in spitting distance of the X1/PS4 power-wise.

Is this a joke? Volta isn't gonna be out for a while. Surprised this isn't using graphene 3-dimensional neural array Kappa.

Did you know that the Switch is NOT a phone....

You know the small round icon with the arrow inside, that's next to the person you quoted, links back to the original quote, so there is no reason to make a link out of the entire quote? ;-)

Seriously guys, calm the fuck down.

If they do go with Pascal for the retail units, I wonder if hitting around 700 GFLOPS and upping the bandwidth could be possible, considering Parker is somewhere around 700 with higher bandwidth while reducing power consumption.

Here's even more gloom for you: That 1 TFLOP figure is almost surely fp16. Typical fp32 performance will likely be half that.
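For anyone who wants to check the fp16 vs fp32 arithmetic, here is a rough sketch. The core count (2 SMs of 128 cores each) and the 1 GHz clock are assumptions taken from publicly quoted Tegra X1 figures, not confirmed Switch specs, and `peak_gflops` is just an illustrative helper name.

```python
def peak_gflops(cuda_cores: int, clock_ghz: float, fp16_double_rate: bool = False) -> float:
    """Theoretical peak throughput in GFLOPS.

    Each core is counted as one fused multiply-add per cycle (2 FLOPs).
    With double-rate fp16, two half-precision values are packed per lane,
    which doubles the headline figure.
    """
    flops_per_core_per_cycle = 2  # one FMA = multiply + add
    rate = cuda_cores * flops_per_core_per_cycle * clock_ghz
    return rate * 2 if fp16_double_rate else rate


# Assumed values (public Tegra X1 numbers, NOT confirmed for Switch)
CORES = 2 * 128      # 2 SMs x 128 cores per SM
CLOCK_GHZ = 1.0      # max rated GPU clock, unlikely to be sustained

print("fp32:", peak_gflops(CORES, CLOCK_GHZ), "GFLOPS")
print("fp16:", peak_gflops(CORES, CLOCK_GHZ, fp16_double_rate=True), "GFLOPS")
```

So under those assumptions the "1 TFLOP" headline only appears with fp16 at full clock, and any lower sustained clock scales both numbers down proportionally.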

vern, who has apparently been vetted by mods, claimed so:

Is it really necessary to quote Matt's post on every page?

The real question is, will it be easy enough to port a game to Switch?

That was my interpretation as well up until Vern stepped in and lent credence to the specs in the OP of this thread. It's all rumor, as I keep saying. 50 GB/s would make much more sense to me. It would still be a potential bottleneck, but it would fit more reasonably within the context of the other specs.

I think he said he contacted the mods, they checked him out, but he wanted them to leak the info, but they didn't want to do it. If that is the case, then that would explain why they haven't banned him.

Assuming that the target for Switch games will be a chip at its maximum clock speeds is just setting yourself up for disappointment.

It's actually based on two well known insiders. There's no insider claiming it's a pain in the ass, like the specs in the OP would suggest.

They've literally provided no technical explanation, on how these specs will pull it off. The bandwidth alone is an issue.

Forgive me for not placing my faith in GAF insiders, who provide no substance.

Correct me if I'm wrong but what's been said is this is close to what we should expect. Not an exact spec and not even a claim that these were even the exact specs of any development kit at any point in time. Also going from A57 in a development kit to say A72 in retail certainly wouldn't break anything development wise so is possible if not expected.

There's no reason the product would be radically different. It would just be a minor performance boost. It would still be an Nvidia GPU with 2 SMs and the same shader architecture. It would still be 4 ARM cores with in-kernel switching for low power mode. It would still have the same RAM amount with the same latency, just potentially slightly higher bandwidth due to clock increases.

A72 over A57 was an incremental improvement. The core itself would be much smaller at 16nm, offer better performance per watt, and higher clocks. There would be no code changes required. For that matter, A57 on 16nm would offer an improvement over 20nm, just not quite as much. Pascal and Maxwell would be nearly identical at 16nm in a consumer configuration, the only difference being that Pascal has a few updates to color compression that would be advantageous to have in a mobile scenario.

The RAM bandwidth wouldn't necessarily need to change either. There is enough bandwidth with a 64-bit bus if they tweak the cache layout, perhaps just adding an L3 cache or a small pool of ESRAM on die to avoid idle clock cycles.
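To put numbers on the bus width point, here is a quick back-of-the-envelope sketch. The 3200 MT/s transfer rate is an assumption (a common LPDDR4 speed grade), not something confirmed for the final unit, and `peak_bandwidth_gb_s` is a made-up helper name.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, mega_transfers_per_sec: float) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    bandwidth = (bus width in bytes) x (transfers per second)
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mega_transfers_per_sec / 1000  # MB/s -> GB/s


LPDDR4_MT_S = 3200  # assumed speed grade, not a confirmed spec

for width in (64, 128):
    print(f"{width}-bit bus: {peak_bandwidth_gb_s(width, LPDDR4_MT_S):.1f} GB/s")
```

With that assumption, a 64-bit bus lands on the rumored 25.6 GB/s, and it takes a 128-bit bus (or faster memory) to get to roughly 50 GB/s. These are peak figures; real sustained bandwidth will be lower.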

When Emily Rogers says that the custom Tegra chip resembles the Tegra X1, it's because the transition from Maxwell to Pascal was largely to do with the die shrink, and not a major architectural shift. To someone who isn't combing through the very fine sand of this, they would appear to be very similar. My hope is that they went with A72 and Pascal, but if they simply die shrunk Maxwell and A57 to 16nm, the differences would be modest at best.

Is there any credible information on the power of Pascal Tegra chips? Googled around but didn't find much of anything. Lots of noise in my search terms not helping.

And yet this is where the "hybrid" nature of the thing comes into play. No sane person is expecting CPU/GPU to be running full clock in portable mode... but yes, they just might in docked mode.

I've been looking this up, but I haven't found any videos showing it off. I wasn't able to find many for the X1, either.

I'm expecting Pascal but not necessarily Cortex A72. Switching from one ARM uArch to the next doesn't seem like it would cause major problems, but it's still a different core and we don't know if Nintendo were willing to pay the licensing costs for the newer architecture. I hope someone leaks the final specs, so that we don't have to spend months arguing over die photos again.

RAM is something that can change late in the game, but at this point, we are really late in the game and this thing is going to be hitting the assembly lines soon. I believe Emily when she says 4 GB in the final unit, although it would be great if Nintendo upgraded to one of these faster 6 GB modules from Samsung.

As I've already said, CPU and memory performance (normalized to GPU bandwidth / resolution) have to be the same in mobile mode and docked mode, because you cannot scale down the things that run on the CPU as easily as you can scale down resolution, which scales roughly linearly with GPU performance.

They will have to find a sweet spot for CPU clock speeds that allows for usable battery life, and it will certainly not be the max rated clock speed.

Most speculation in this thread is ignoring that fact and is based on maximum clock speeds, which is unrealistic.

NVIDIA said: Built around NVIDIA's highest performing and most power-efficient Pascal GPU architecture and the next generation of NVIDIA's revolutionary Denver CPU architecture, Parker delivers up to 1.5 teraflops(1) of performance for deep learning-based self-driving AI cockpit systems.

There's no consumer device powered by a Pascal-based Tegra yet, so I don't know what video you expect to see.

We have Matt and OsirisBlack both indicating that PS4/XB1 ports shouldn't be a technical problem...

Seriously there is no quantity of posts from random jagoffs on the net that will amount to someone with actual knowledge of the situation. Having an idea of the RAM needed for the average multiplat game on switch is going to need more than the ability to know 8>4 or list your phone's specs. People are tripping on their way to jump to conclusions. Open insight from devs is a few months away at any rate. People can have fun with the last stretch of speculation I guess.

I am a computer scientist, so I appreciate rational arguments and evidence over arguments from authority.

What would that be?

It all depends on bandwidth and resolution. There's no way Switch could run console-quality ports at 720p, but if it had 50 GB/s memory bandwidth it should be able to run console titles at qHD resolution. The CPU would also be weaker than what's in the current consoles, so other cuts would have to be made there as well.
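For a rough sense of how much work dropping resolution saves, here is a small sketch comparing pixel counts. It assumes GPU and bandwidth cost scale roughly linearly with pixels drawn, which is only a first approximation, and `pixel_ratio` is an illustrative helper name.

```python
# Standard resolutions as (width, height)
RESOLUTIONS = {
    "qHD": (960, 540),
    "720p": (1280, 720),
    "1080p": (1920, 1080),
}


def pixel_ratio(lower: str, higher: str) -> float:
    """Fraction of pixels rendered at `lower` relative to `higher`."""
    lw, lh = RESOLUTIONS[lower]
    hw, hh = RESOLUTIONS[higher]
    return (lw * lh) / (hw * hh)


print(f"qHD vs 720p:   {pixel_ratio('qHD', '720p'):.4f}")
print(f"qHD vs 1080p:  {pixel_ratio('qHD', '1080p'):.4f}")
print(f"720p vs 1080p: {pixel_ratio('720p', '1080p'):.4f}")
```

Under that linear assumption, qHD is a bit over half the pixels of 720p and a quarter of 1080p, which is why it keeps coming up as the realistic target for ports.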

Problem is the dev kit spec was half of what you'd need for that level of qHD performance.

I don't necessarily care for the numbers, just what it can do in the real world. I just feel like Nintendo should be over this and release the specs and what the console can do. Hell, let Nvidia do it. They will do a better job than Nintendo at giving us the details.

It's not the entire SoC. Obviously, the GPU is not contributing during the benchmark.

Another reference, the Nvidia Shield Android TV with a Tegra X1 (20nm) is consuming 20W during gaming. The process node is different, but it also doesn't have a display.

People with vocational experience believing themselves to be a voice of authority on what is and isn't possible...

My opinion is merely that on a modern process there is no reason at all why a device of Switch's size would struggle to contain an A57 that can run at 2 GHz during gameplay. We're going to have to agree to disagree.

I thought the Dreamcast did it back then... man I'm out of touch.

ACHTUNG: Does anybody remember the "feature" that was supposedly included in the (false) rumored Polaris-based GPU? The fake dev insider who fed 10k his bullshit supposedly recognized the chip as "Polaris" because it had this "capping" ability to only render what was in the view of the player (and not render what was left/right/below/above the screen view).

What was this called again? It was something the PS4 and XBO did not have. Does the Tegra X1 have something similar?