ethomaz

Banned

That exactly that. Naw, Nvidia just put what, roughly 50% of the silicon area into RT & DLSS, let's not bother looking into it too much ...

AMD's Smart Access Memory though.. OH WOW, EXCITING TECH, and I can't wait to see their super resolution.

The age of brute forcing your way into rendering is almost over and many reviewers are stubbornly resisting.

Same with VRAM usage. With the paradigm shift in IO management on consoles and the DirectStorage API coming to PC soon, it's a very archaic view to think that VRAM might be a problem in the future, something that HWUB also tried to use as a boogeyman to recommend the AMD cards. (While they also say they don't think 4K is that common/important, cards aren't that hot for 4K.... bu but VRAM!?)

Sadly, these cards are ML beasts, and there's no real way to test that in games until DirectML starts appearing in them, which will most likely happen soon enough with Xbox Series X supported games.

A.I. Expert: RTX 3080 Reviews “Don’t Do It Justice” — TechNoodle

I have a unique perspective on the recent nVidia launch, as someone who spends 40 hours a week immersed in Artificial Intelligence research with accelerated computing, and what I’m seeing is that it hasn’t yet dawned on technology reporters just how much the situation is fundamentally changing with...

technoodle.tv

Let's just say, Nvidia did not triple the tensor ops of these cards compared to Turing only to run DLSS 2.

Nvidia designed the equivalent of a McLaren P1 for the Nürburgring, with straight lines (rasterization) and hard curves (RT & ML) for upcoming games, and then reviewers insist on bringing that McLaren P1 to a straight-line drag race... GG

Reviewers are outdated... they need to follow the new focus of the game development industry.

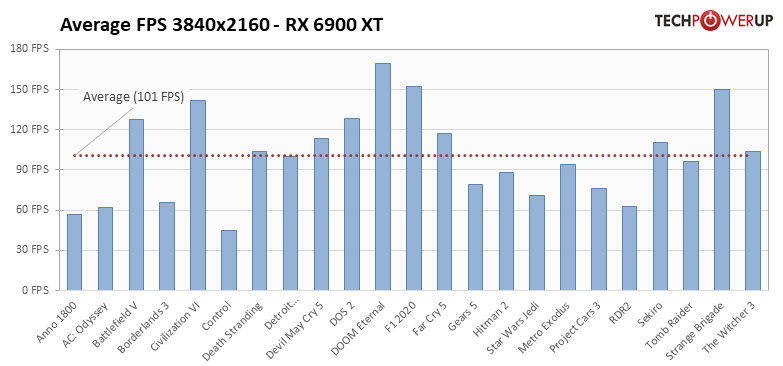

And neither AMD nor Nvidia is focusing on brute-force native 4K anymore, because the trade-off is not fair... you lose too much performance that could be used in other places to improve graphical fidelity and effects.
