You are incorrect. The blur reduction is the reduction in ghosting due to the G2G pixel transition time being reduced to near 4.2ms. Going from 16.6ms to 4.2ms transition times is a huge improvement. The closer you get to 0.0ms the less ghosting there is. At 0.0ms there would be no ghosting at all. This is how ULMB works. It simulates a 0.0ms G2G response time by strobing the backlight so that you don't see the pixel transitions happening.

You are confusing scan-out time with response time, and response time with image persistence.

They are all completely separate things.

Scan-out time is how long it takes for the display to draw a new frame - typically from the top to the bottom of the screen.

Response time is how long it takes for pixels to transition from one state to another.

Image persistence is how long an image is held on-screen.

- A 60Hz CRT has a scan-out time of 16.67ms, a response time of <0.1ms, and image persistence of around 0.5ms (varies depending on the phosphors used).

- Current OLED TVs have a scan-out time of 16.67ms, a response time of about 0.1ms, but image persistence of 16.67ms at 60Hz, since they are flicker-free displays.

- An average 60Hz LCD monitor will have a scan-out time of 16.67ms, a response time of maybe 12ms, and image persistence of 16.67ms.

- A 240Hz G-Sync display will have a scan-out time of 4.17ms, a response time of about 3ms, and image persistence of 16.67ms with a 60 FPS source, since it is also a flicker-free display.
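The scan-out and persistence figures in that list are just period arithmetic. Here is a minimal sketch, assuming scan-out takes one full refresh period and that a flicker-free (sample-and-hold) display holds each frame until the source delivers the next one. The function names are mine, purely for illustration.

```python
def scan_out_ms(refresh_hz: float) -> float:
    """Time to draw one frame, assuming scan-out fills one refresh period."""
    return 1000.0 / refresh_hz


def hold_persistence_ms(source_fps: float) -> float:
    """Persistence on a flicker-free display: each frame stays lit
    until the next source frame replaces it."""
    return 1000.0 / source_fps


# The 240Hz G-Sync case from the list, fed a 60 FPS source.
print(f"scan-out:    {scan_out_ms(240):.2f} ms")          # 4.17 ms
print(f"persistence: {hold_persistence_ms(60):.2f} ms")   # 16.67 ms
```

Note that the persistence depends only on the source frame rate here, not on how fast the panel refreshes or how fast its pixels respond.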

The primary cause of motion blur is image persistence rather than response time or scan-out time.

Response time may cause image smearing/ghosting, but not motion blur, unless it's really bad.

So the OLED, the G-Sync monitor, and the 'average' LCD will have similar amounts of motion blur, despite varying response times and scan-out times, because the image is still being held on-screen for 16.67ms.

The CRT will have significantly less motion blur than the OLED, despite scan-out and response time being similar, because the image is only held on-screen for a fraction of the time.
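To put rough numbers on that comparison, here is a small sketch using the common rule of thumb that perceived blur width is roughly persistence multiplied by the speed at which your eye is tracking the object. That formula and the 960 pixels/second test speed are my assumptions for illustration; the persistence values are the ones from the list above.

```python
def blur_width_px(persistence_ms: float, speed_px_per_s: float) -> float:
    """Approximate blur width for an eye-tracked moving object.
    Rule-of-thumb estimate only; ignores response time entirely."""
    return persistence_ms / 1000.0 * speed_px_per_s


SPEED = 960.0  # hypothetical scrolling speed in pixels per second

# Persistence values taken from the examples in the post.
persistence_ms = {
    "60Hz CRT": 0.5,
    "OLED TV at 60Hz": 16.67,
    "average 60Hz LCD": 16.67,
    "240Hz G-Sync at 60 FPS": 16.67,
}

blur = {name: blur_width_px(p, SPEED) for name, p in persistence_ms.items()}
for name, width in blur.items():
    print(f"{name:24s} ~{width:.1f} px of blur")
```

Under those assumptions the three flicker-free displays all land at about 16 pixels of blur, while the CRT is under half a pixel, even though their response times range from 0.1ms to 12ms.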

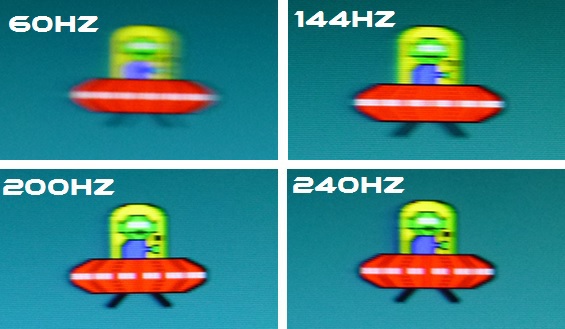

And this shows in real-world tests:

Motion blur largely covers up most of the difference in response time between OLED and LCD.

Not all of it, and it may be more visible on certain color transitions, but response time is largely not a factor with fast motion.

When you enable low-persistence backlight strobing (similar to ULMB) the motion blur is significantly reduced.

This makes the LCD have far less motion blur than the OLED TV, though it also makes its poor response times far more apparent with distinct after-images from the previous two frames now being visible.

This change did not come from faster response times or scan-out time, but from reduced image persistence by switching the backlight off, causing the screen to flicker, instead of holding the image on-screen until the next frame.

Now I will add that, at slower speeds, response time differences are likely to be more noticeable, but it just isn't much of a factor for motion blur with moderately fast motion.

Higher refresh rate LCDs do sometimes have marginally faster response times when driven at high refresh rates due to other factors, but it's really 1-2ms at most.

What you will mainly be seeing is the difference in response time between a TN panel with ~3ms pixel response and very well-tuned overdrive, compared to whatever your previous 60Hz display was, rather than it having anything to do with scan-out time.

The reason for that should be obvious: you need a much faster LCD panel to produce a 240Hz display than a 60Hz one.

If the average response time were higher than about 4ms (one refresh period at 240Hz), it would be blending frames together - which is what happened with the 200Hz (5ms) VA panels that had 11ms response times on average.
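A quick way to sanity-check that is to express the response time as a fraction of the refresh period. This is only a rough sketch with a helper name I made up; anything above 1.0 means a pixel transition is still in progress when the next frame arrives.

```python
def refresh_periods_spanned(response_ms: float, refresh_hz: float) -> float:
    """Average pixel response time expressed in refresh periods."""
    refresh_period_ms = 1000.0 / refresh_hz
    return response_ms / refresh_period_ms


# Figures from the post: a ~3ms TN panel at 240Hz, and the 200Hz VA
# panels that averaged 11ms.
tn_240 = refresh_periods_spanned(3.0, 240)
va_200 = refresh_periods_spanned(11.0, 200)

print(f"240Hz TN, 3ms:  {tn_240:.2f} refresh periods")   # 0.72
print(f"200Hz VA, 11ms: {va_200:.2f} refresh periods")   # 2.20
```

The TN panel finishes its transition within a single refresh, while the VA panel's transitions stretch across more than two, so consecutive frames get blended.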