ITT: Lacking reading comprehension, bullshots, stretched shots and emulator shots. GAF, I am disappoint.

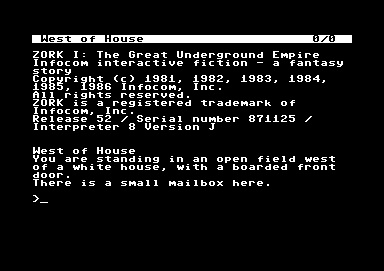

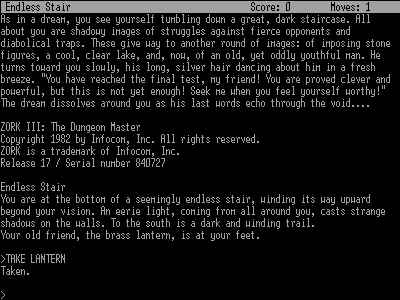

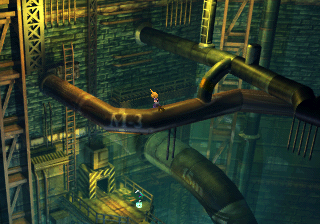

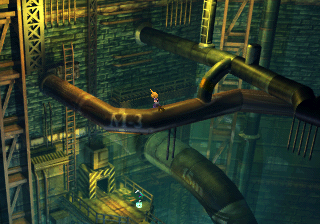

My answer: Final Fantasy VII to Final Fantasy VIII.

Of course I can't definitively prove these haven't been meddled with, but unlike some other posts in here they are at least all the same and, more importantly, native-res screenshots.
