I agree with this at some level, but you don't have to be an expert to see the various oddities and dips that seem to plague the XSX in a lot of head-to-head comparisons with the PS5. Sure, we can go back to how the GDK isn't mature enough, but Microsoft is a software company first and foremost, so that shouldn't be an issue. They've got backwards compatibility nailed, they've got Quick Resume, etc.

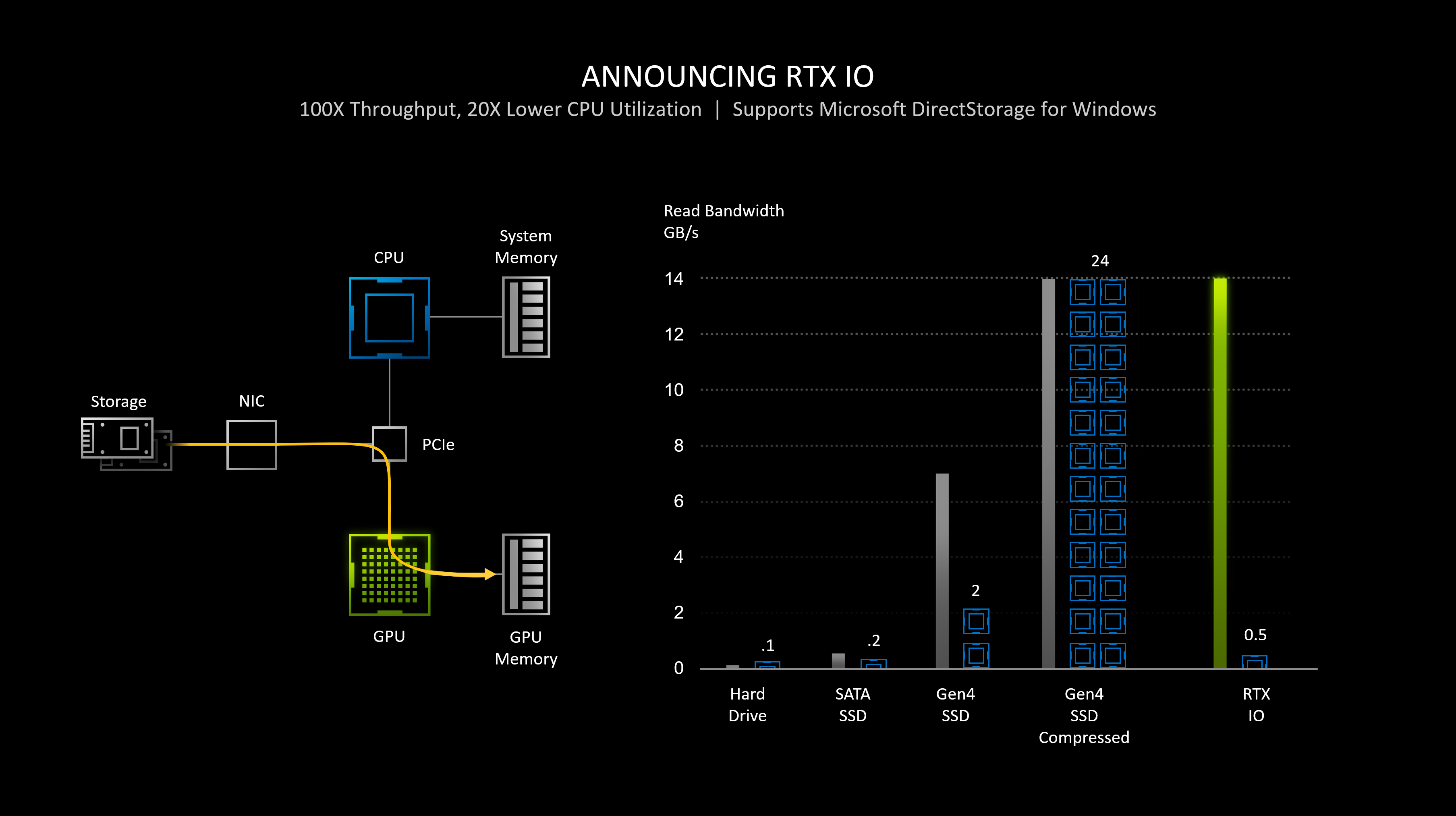

You effectively have high-speed access to 10GB of GDDR6, which is rated for 560GB/s.

The OS reserves 2.5GB of the slower pool.

This leaves games with 3.5GB of GDDR6 rated at 336GB/s.
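To make the arithmetic behind those numbers explicit, here's a rough sketch. The three pool sizes are the figures quoted above; the 6GB slow pool and 16GB total are just their sums, and the variable names are mine, not anything from Microsoft's documentation.

```python
# Figures quoted in the post; everything else is derived from them.
FAST_POOL_GB = 10.0            # rated at 560 GB/s
OS_RESERVED_GB = 2.5           # taken out of the slower pool
SLOW_POOL_FOR_GAMES_GB = 3.5   # rated at 336 GB/s

# Derived values (assumption: the OS reservation sits entirely in the slow pool).
slow_pool_gb = OS_RESERVED_GB + SLOW_POOL_FOR_GAMES_GB
total_gb = FAST_POOL_GB + slow_pool_gb
game_budget_gb = FAST_POOL_GB + SLOW_POOL_FOR_GAMES_GB
fast_share = FAST_POOL_GB / game_budget_gb

print(f"Slow pool: {slow_pool_gb} GB, total: {total_gb} GB")
print(f"Game-visible memory: {game_budget_gb} GB")
print(f"Share of game memory at full speed: {fast_share:.1%}")
```

So roughly a quarter of what a game can touch sits in the slower tier, which is the part the rest of this post is about.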

If you will, a thought experiment:

-Cold War, running on an RTX 3080, essentially allocates all GPU memory during gameplay.


-Cold War, running on an RTX 3090, essentially allocates 12.5-13.5GB during gameplay.


Back to the XSX/PS5

Assets, textures, etc. have to be loaded into VRAM.

-So, are they limiting themselves to only the 10GB of fast GDDR6? Or are they storing assets in the slower tier once the faster tier has been exhausted? The split memory architecture was done for one reason only: to marry cost savings with a 320-bit bus so that the APU could be fed with 560GB/s, albeit with only 10GB of GDDR6 accessible at that speed.
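For anyone wondering where the 560GB/s and 336GB/s figures come from, peak GDDR6 bandwidth is bus width times per-pin data rate, divided by 8 to get bytes. A quick sketch, assuming 14 Gbps per pin and a 192-bit effective width for the slower pool (neither number is stated above, so treat both as my assumptions):

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s: bits per transfer * Gbps per pin / 8."""
    return bus_width_bits * data_rate_gbps / 8


# Assumed values, not from the post: 14 Gbps GDDR6 and a 192-bit slow-pool width.
DATA_RATE_GBPS = 14
FAST_BUS_BITS = 320   # the 320-bit bus mentioned in the post
SLOW_BUS_BITS = 192   # assumption: the slower pool only spans part of the bus

fast = peak_bandwidth_gbs(FAST_BUS_BITS, DATA_RATE_GBPS)
slow = peak_bandwidth_gbs(SLOW_BUS_BITS, DATA_RATE_GBPS)
print(f"Fast pool: {fast:.0f} GB/s, slow pool: {slow:.0f} GB/s")
```

Under those assumptions the numbers line up with the rated figures, so the slower tier is what you get when only part of the bus is in play.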

Which system do you think is better suited for such a task? It's the same reason we don't see NVIDIA or AMD busting out split memory architectures on their graphics cards, because why would they?

To me, that's the only real thing that could explain it, along with maybe the TDP aspects of each system.

Oh man, if I had a dollar every time Xbox fanboys and Microsoft came up with an excuse, I'd be a millionaire by now.