Ascend

Member

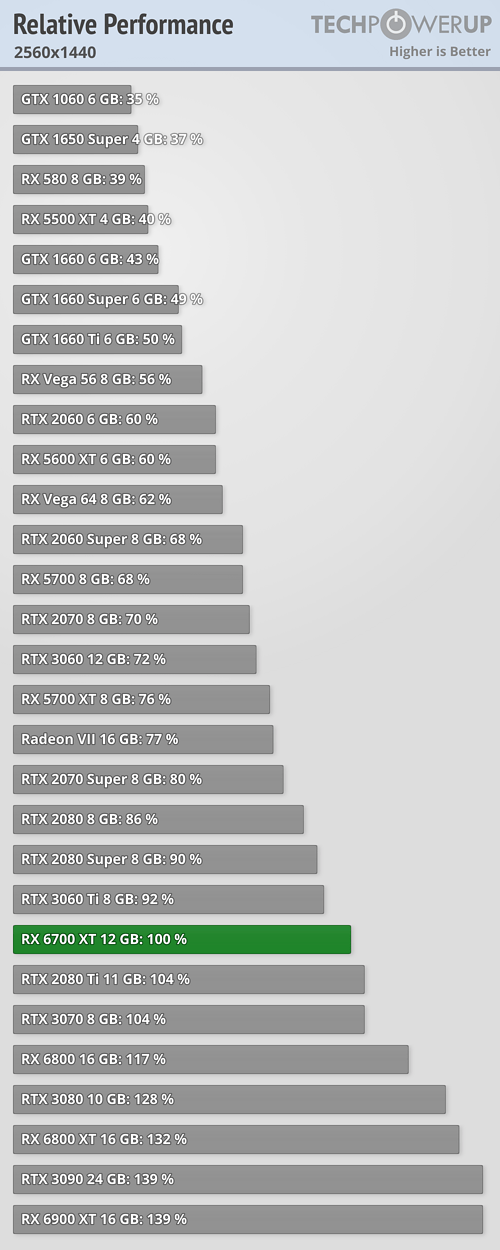

I wouldn't go that far, when you have a $1000 6900 XT = $650 RTX 3080.

Horrendous RT performance and 6 months later still nothing like DLSS from AMD.

Why did you cherry pick the 6900XT instead of the 6800XT?

Nope, it's neither of those.

It's not the driver, because under DX12 there isn't a kernel driver and the driver doesn't manage the hardware; it doesn't even have a clue what the hardware is doing -- the game engine manages the hardware. And it isn't relying on the CPU to do magical tasks, nor does it offload any extra work to the CPU.

As I said from the beginning, as we established a few comments earlier, and as proven by the PCGamesHardware.de tests, it's a matter of how a game engine works (chiefly, how it manages, packages and binds resources) -- the standard way, or in a way that's more ideal for GeForce. That's why in some games the 3090 is ahead of the 6900XT and in others not.

Trick question? Anyway because on average they're equally matched in regular titles. Not including RT obviously, where 3080 is on a whole other level.

Why did you cherry pick the 6900XT instead of the 6800XT?

That message made it all clearer to me. It's a software problem because some tasks are done in hardware by AMD but rely on the game engine on Nvidia cards. Those engines may or may not be efficient at performing those tasks. If that's the case, it's still a downside of Nvidia boards. Saying it's an engine/developer issue is the equivalent of saying it's the developer's fault if a given website doesn't run well on Internet Explorer 6 because its engine doesn't follow the W3C directives...

Aaaand we're back at killing the messenger.

They're testing downclocked CPUs now, because gamers downclock their CPUs to get performance now?

What do you suppose your resolution is, if you use 1080p with DLSS Quality? Or... 1440p with DLSS Balanced... Or... 4K with DLSS Performance...?

So downclocked CPU, got it.

Maybe the reason why nobody benches at 720p is because nobody has played at that resolution since the late '90s? There are 1080p benches as well in the picture I posted.

You can see at 1080p the 3080 avg is 137, Vega 64 is 60, so no, the V64 is not beating the 3090, well, unless you downclock your CPU I guess.

As the root cause, you are right.

This still has nothing to do with the CPU : ) ) ) ) ) )

And I never denied that it is. I even gave props to HUB and I'm subbed to them fyi, since they're one of the few tubers whose benches I can trust.

Quit with the goddamned excuses. This is not about AMD vs nVidia performance. This is about nVidia being more of a performance hog on the CPU.

What do you suppose your resolution is, if you use 1080p with DLSS Quality? Or... 1440p with DLSS Balanced... Or... 4K with DLSS Performance...?

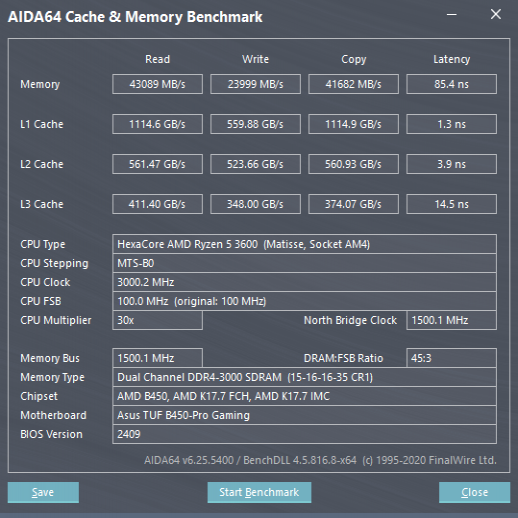

With that being the case, which CPU should I get for my b540 + GTX 1660 Super? I was planning an upgrade anyway, and this only affects low-end CPUs, right?

And I never denied that it is. I even gave props to HUB and I'm subbed to them fyi, since they're one of the few tubers whose benches I can trust.

I told you why it is different from AMD and DX11: you can still easily get above 60fps in DX12 on Nvidia, but of course Nvidia should look into it and work with devs to make sure super low-end CPUs get above 60fps, and for the most part they do.

But testing with a downclocked CPU [which no gamer ever does] is kind of pointless, no?

Sort of yes.

But we have to remember that this issue doesn't manifest, or only marginally manifests, in the situations where these GPUs are expected to be used, i.e. NOT at 720p low settings.

On higher resolutions and settings the GPU runs into other limitations before it runs into barriers on resource management by the engine.

I assume you mean B450? I'd say the 5600X... If you can find one.

It's not exactly pointless... I mean, the RTX 3080 and 3090 edge out the 6800 and 6900 at 4k, but that gap is diminished (if not reversed) at 1440p and 1080p. I think right now it's safe to assume that performance of the 3080 and 3090 would have been higher than AMD's cards at all resolutions, if it wasn't for this issue.

Granted, unless you're into high fps gaming, these cards are overkill for those resolutions. But it makes you wonder if they will regress more as games become more CPU heavy.

And considering DLSS renders at lower resolution, you could say that DLSS performance would have potentially been much better than it is right now, if this issue did not exist.
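To put rough numbers on the DLSS point, here is a small sketch of the internal render resolution per mode. It assumes the commonly cited DLSS 2 per-axis scale factors (Quality about 0.667, Balanced about 0.58, Performance 0.5); those factors and the helper name `dlss_render_resolution` are assumptions for illustration, not something stated in this thread.

```python
# Commonly cited DLSS 2 per-axis render scales (assumed, not from this thread).
DLSS_SCALE = {
    "quality": 2 / 3,
    "balanced": 0.58,
    "performance": 0.5,
}


def dlss_render_resolution(width, height, mode):
    """Return the approximate internal (pre-upscale) resolution for a DLSS mode."""
    scale = DLSS_SCALE[mode]
    return round(width * scale), round(height * scale)


if __name__ == "__main__":
    cases = [
        ((1920, 1080), "quality"),
        ((2560, 1440), "balanced"),
        ((3840, 2160), "performance"),
    ]
    for (w, h), mode in cases:
        rw, rh = dlss_render_resolution(w, h, mode)
        print(f"{w}x{h} DLSS {mode}: renders at about {rw}x{rh}")
```

Under those assumed factors, 1080p with DLSS Quality renders internally at roughly 1280x720, so low-resolution CPU-bound results are more relevant to DLSS users than they first appear.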

Well, we agree that the problem is way less aggressive than the AMD one on DX11. But I also get the "spirit" of the test. They simulated "extreme" situations just to show that the problem exists. If someone is in a scenario of having to play CPU bound games, or in a PC with limited CPU power, even for a brief period, the results of the test shows what to expect.

When is reBAR coming to Ampere big boys?

It's gonna be interesting to see how much perf you will be able to gain on Ampere big boys with reBAR.

Hey, I acknowledged HUB's results, what more do you want?

The Squirrel needs a CPU and Driver upgrade, his rig can't process the discussion.

End of the month supposedly. Most mobo manufacturers already have their BIOS available for download and flashing. Now we need a BIOS for the GPU and new drivers from Nvidia.

When is reBAR coming to Ampere big boys?

Well I agree, I guess. If you want to play on lower resolutions with a weaker CPU, you're better off with an AMD GPU in most of the AAA titles it seems.

It's just not a usual scenario I guess for a 3080 or 3090 owner so I don't know if the developers are going to focus on this more in the future.

Yeah, that. Thanks!

I assume you mean B450? I'd say the 5600X... If you can find one.

I have said it before but it is very applicable to people who buy a CPU, Ram and mobo that they keep for 10 years and do several GPU upgrades in that time.

Further as we leave the cross gen part of this console generation I would expect games to need more CPU horsepower to run well because 8c16t zen2 is such a giant step up from the Jaguar cores in the old consoles.

So while it might mainly manifest at 1080p medium now there is no guarantee it will stay that way.

Hey, I acknowledged HUB's results, what more do you want?

But testing with a downclocked CPU just to get a favourable result is bending a bit too low there.

Trick question? Anyway because on average they're equally matched in regular titles. Not including RT obviously, where 3080 is on a whole other level.

WRONG !

Are you joking? You literally wrote:

I wouldn't go that far, when you have a $1000 6900 xt = $650 rtx 3080 .

The reason you cherry picked the 6900 vs 3080 and ignored the 6800XT, which has the CORRECT PRICE POINT and PERFORMANCE for your comparison, is that in your mind it looks better for Nvidia if you compare an expensive product to your cheaper superior product. It's a completely, utterly transparent display of how a biased mind works.

The 6900XT is not equally matched to a 3080. It's on 3090 level unless you cherry pick your titles and forget that the same can be done for the 6900XT, where it wins by a lot.

That could very well be true.

On the other hand, those days when you could keep a CPU for 10 years and upgrade just the GPU are probably over anyway. It might have worked when Intel gave us +5% performance with every new CPU generation (and the Jaguar baseline was very low anyway), but right now AMD gives us +30% or whatever basically every other year.

So in other words, 8c 16t is not good enough for the new Nvidia GPUs?

WRONG !

Lookie here: https://www.techpowerup.com/review/amd-radeon-rx-6900-xt/35.html

RTX 3080 and 6900 XT are neck and neck minus RT.

- 7% faster at VULKAN than the 6900XT

- 2% slower at DX12 (2019-today) than the 6900XT

- 8% faster at old DX12 (2018 and older) than the 6900XT

- 50% more expensive.

4k in their new test suite.

WRONG !

Lookie here: https://www.techpowerup.com/review/amd-radeon-rx-6900-xt/35.html

RTX 3080 and 6900 XT are neck and neck minus RT.

Of all crazy takes, the "AMD is more expensive" is the most amazing.

I wouldn't go that far, when you have a $1000 6900 XT = $650 RTX 3080.

Horrendous RT performance and 6 months later still nothing like DLSS from AMD.

Pretty conclusive. Upgrades sometimes are downgrades if your CPU is old by today's standards.

Yeah, the testing for some games seemed weird. It's like yes, it's an issue in some eSports titles, but who is playing Cyberpunk with a 3080 at 1080p medium?

Again, it can still be a huge upgrade, depending on what you play and what settings you pick.

I had a 2700x and a 1080, and sold my gpu for a good price and managed to grab a 3070 at near msrp price.

These were my Cyberpunk benchmarks with the 1080

1920x1080 Preset Medium: 56.2

1920x1080 Preset Ultra: 40.3

These are my new benchmarks with the 3070

1920x1080 Preset Medium: 75.3

1920x1080 Preset Ultra: 72.2

1920x1080 Preset RT Medium: 53.2

1920x1080 preset RT medium + DLSS quality: 68.2

2560x1440 Preset Medium: 73.6

In short, this upgrade took me from getting 40 fps in ultra settings to getting 68 fps with ultra and rt medium settings.

Even at the more CPU-bound medium settings, I saw a roughly 34% increase, but the CPU clearly showed its limitations at 1080p medium (I won't be playing the game at 1080p medium, so it's not a huge problem). At 1440p medium, though, the bottleneck shifted to the GPU again and the game became GPU bound.
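For anyone who wants to check the uplift figures, here is a minimal sketch that computes the percentage gain from the Cyberpunk 1080p averages quoted above. The helper name `uplift_percent` is made up for illustration.

```python
def uplift_percent(old_fps, new_fps):
    """Percentage change in average fps going from old_fps to new_fps."""
    return (new_fps - old_fps) / old_fps * 100


# Cyberpunk 1080p averages quoted in the post above (GTX 1080 -> RTX 3070).
results = {
    "medium": (56.2, 75.3),
    "ultra": (40.3, 72.2),
}

if __name__ == "__main__":
    for preset, (old, new) in results.items():
        print(f"{preset}: {uplift_percent(old, new):.1f}% faster")
```

The gain at medium is much smaller than at ultra, which fits the idea that the CPU becomes the limit at lighter settings, though two data points from one system are only suggestive.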

In short, it is not the end of the world. Be reminded that Cyberpunk is a tough S-O-B when it comes to being CPU bound. Yes, it is also very GPU bound at the same time.

But here's a less GPU bound situation than Cyberpunk

In short, the 5600X made no meaningful difference compared to my 2700X at 1080p ultra. This is not even 1440p, which is what the 3070/3080 target.

Now, you might say that lowering the settings at 1080p to high might shift the bottleneck to CPU, and 5600x would probably get the upper hand.

But then again, I got 53-54 fps at ultra with my previous gtx 1080. Now i get 79, and that's huge. I might dial back the settings, push the resolution scale a bit for a much sharper and nicer image, and so on.

Again, use cases will be different. I can see where this topic is going, but according to the video, HBux made it seem like it makes no difference going from an RX 580 to a GTX 1080 with a 4790K. (Bear in mind that the 2700X actually has gaming performance near the 4790K and is considered a low-end Ryzen CPU by many people, and it is somewhat low-end at this point.)

Yet here I am, actually going from a 1080 to a 3070, and still getting great uplifts in performance at my own preferences of games and settings. Not even at 1440p, and that tells a lot.

Again, I managed to find the 3070 by pure luck, and managed to sell my 1080 for a hefty price, or I would have stuck with my 1080, since I'm also okay with more optimized/lower settings. But nonetheless, neither the 1080 nor the 580 could get the results the 3070 got in these two particular games.

Woah, RX 580 higher fps than 3080 on i7 4790K in Fortnite.

Yeah, WTF?

They should have tested DX11 too in Fortnite, I wonder how that would look.

It would show the AMD dx11 overhead kick in. But dx11 is not the way of the future. Current issues with dx12 are the ones that should be fixed.

Also, he tested dx11 in the video. You should watch it.

Yeah, WTF?

It doesn't invalidate the findings. DX12 is here to stay, so it must be addressed.

Yes, but in the case of Fortnite, the DX11 renderer is probably more efficient and performs better than DX12, so why would anyone use that if there is a choice?

Supposedly, they used some special modes in Fortnite, pro players or something like that.

The i7 4790K is still way more powerful than last-gen consoles, so it's surprising to see it CPU-bound in Fortnite.

so what's causing this extra nvidia overhead? shadowplay? ansel? gsync? shield?