LowParry

Member

Still rocking my i5 4670K. Should I upgrade?

We'll see new CPUs next year, which should bring a big jump in performance from Intel. Still, wait for Ryzen in March to see how that works out.

wait

hold off

Intel's typical MO would be to push the 6-cores upward.

Hopefully Ryzen isn't a bust, and will change that.

I don't think it's worth the price difference. Paying 30-40% more for less than 10% performance improvement in ideal scenarios. Often no difference at all. Better off putting that money toward a GPU, unless money is no object and you can get top end everything.
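To put rough numbers on that trade-off, here is a minimal sketch. The 35% premium is just the midpoint of the 30-40% range above and the 10% gain is the best case quoted; the function name is made up for illustration.

```python
def relative_value(price_premium, perf_gain):
    """Performance per dollar of the pricier CPU relative to the cheaper one (1.0 = equal)."""
    return (1.0 + perf_gain) / (1.0 + price_premium)


# Assumed inputs: 35% higher price (midpoint of 30-40%), 10% more performance (best case).
value = relative_value(price_premium=0.35, perf_gain=0.10)
print(f"Performance per dollar vs. the cheaper CPU: {value:.2f}x")
```

Anything below 1.0x means you get less performance per dollar from the more expensive part, which is the argument for putting the difference toward a GPU instead.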

Overclocked i5 is fine for most.

I regret buying an i5. To be fair, I did not plan on keeping my CPU for as long as I have, but I picked an i5-2500K back when it "didn't matter for games".

Now my i5 has fallen behind and has been bottlenecking games for the past year or two.

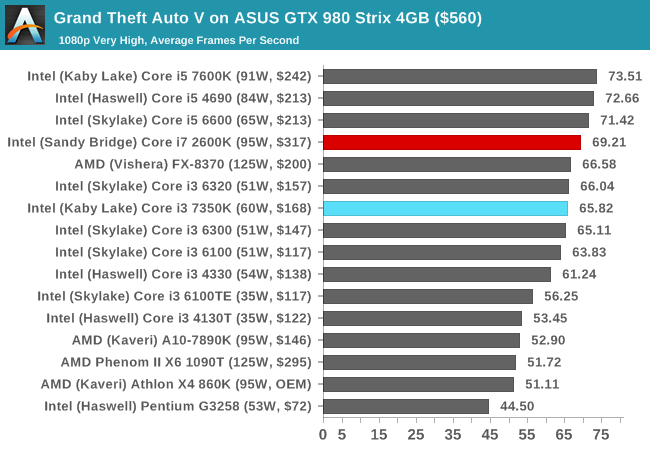

In contrast, the i7-2600K can actually beat an i5-7600K in some games now that they're making use of more than four threads.

These days it's pretty clear that you should buy an i7 and fast RAM if you want the best performance - especially if you care about minimum framerates more than averages.

That said, Ryzen is launching a couple of weeks from now and the leaks so far have been very promising.

It's looking like they will be far better value for money, with a 6c/12t CPU for a similar price as the 4c/4t i5-7600K and 8c/16t for a similar price as the 4c/8t i7-7700K - while offering better performance.

But we still need to wait for proper reviews instead of judging them based on one or two leaked benchmarks.

Even if they don't best Intel, they're still going to be very good value and there will finally be some competition in the CPU space again - which is good for everyone. (except Intel)

In one or two tests. We have no idea how it performs overall yet, and one of the tests is a bit concerning regarding memory latency.

I'm still expecting a 5GHz 7700K to be the best performer in most games, since most games do not take advantage of more than four threads - though many newer ones do now.

But if AMD can get 90% of the performance of the comparable Intel chips at these prices, it's going to have a significant impact - especially with how ridiculous Intel's pricing has been for their high end CPUs in recent years.

Aren't i7s better when playing games while other programs are open?

If you plan on recording games at all an i7 is essential.

Now this:

Is the kind of stuff that you hear far too often in PC upgrade / build threads.

Not worth it when you can spend the money saved by getting an i5 on a better GPU which has far more value and impact to your performance.

Not really sure where you're getting this from.

http://images.anandtech.com/graphs/graph11083/85559.png

HT is getting a lot of praise these days and the reasons for this are unclear to me. Sure, it helps sometimes, but it's not the same as having real cores in place of the virtual ones.

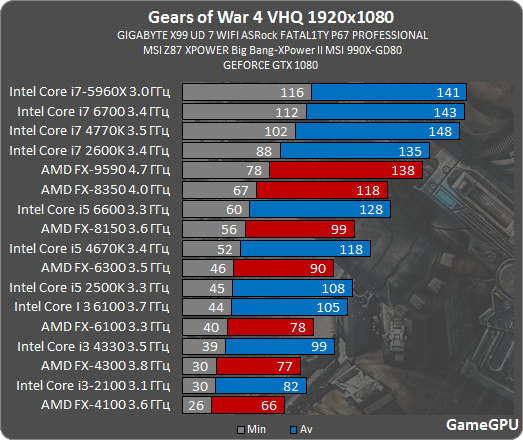

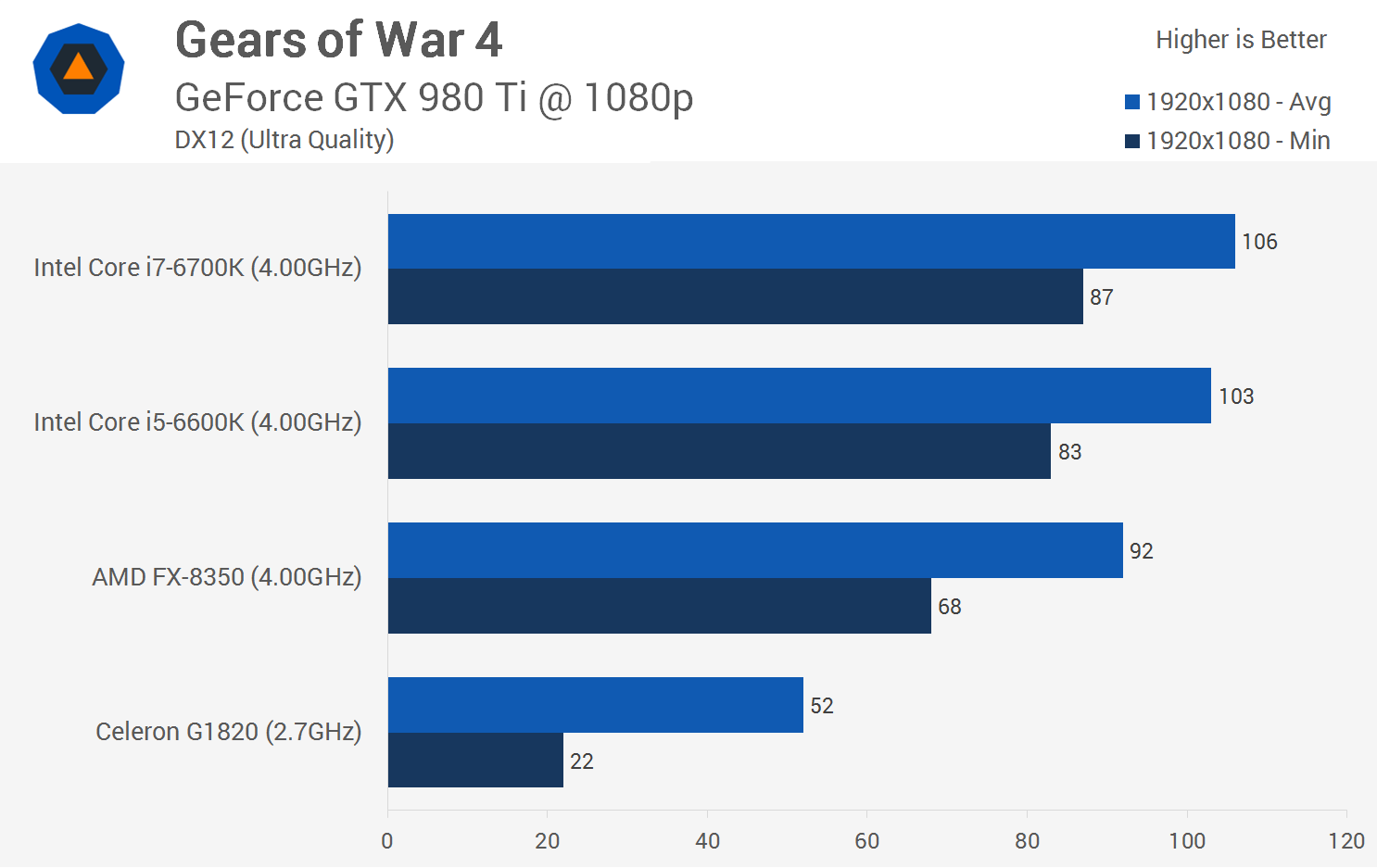

That Techspot Gears 4 test shows that you need a Titan X to get much benefit from the i7 and clock speed matters a lot. For a 980 Ti they are very close. So I guess ultimately GPU is the main limiting factor.

Too bad they didn't have tests at 1440p or 4K with the different CPUs, because to me that's where extra performance is starting to matter. I don't really care whether a game runs at 100 fps or 120 fps, since I can't really tell the difference at that point, but the difference between a stable 30+ fps at 4K and dropping below that would matter.

You don't need a Titan X. For Ultra settings in Gears of War 4, maybe, but you can be bottlenecked by the CPU with any GPU as long as you choose appropriate settings for it.

WD2 seems to be an example of a modern engine which scales well with CPU parallelism: http://gamegpu.com/images/stories/Test_GPU/Action/WATCH_DOGS_2/new/w3_proz.png

Will be interesting to see Ryzen benchmarks in that.

For gaming you won't see a difference between the i5 and i7. There have been some games that benefit (marginally) from an i7, but that list is short and those games are old.

Yes, I hope some reputable sites do frametime tests in CPU-limited scenarios right off the bat. That's what I'm most interested in. (Well, that and OC potential)

Yes, I'm really looking forward to seeing how Ryzen compares across many different games, since their CPU requirements can be so varied.

Some only care about single-core performance, some scale well up to four threads, some scale well beyond four threads, some are very reliant on memory bandwidth/latency etc.

Fewer and fewer games run better without HT. When I upgraded my PC a couple of years back, I benchmarked my games with it on, and with it off. I think only GTA4 gave me better results.

Basically this. People tend to say that HT is "free" but it's really not. Paying more than $10 for HT is mostly pointless from a gaming perspective, so when the choice is between an i5 and an i7 that costs 50% more, you should really go with the i5.

It is also true that some games are actually worse with HT than without it.

It would be extremely stupid to upgrade today, when AMD has new CPUs coming in a few weeks. Even if you might not be buying a CPU from them.

Anandtech don't know how to benchmark games.

Their tests are worse than useless - they're very misleading.

You have to use a high-end GPU and low resolutions in a CPU test to ensure that performance is not affected by the GPU in any way.

On the contrary, their benchmarks are actually very relevant to how most people will experience these games since only a handful of them will play games in 720p/low or on a TitanXP.
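Both sides of that argument fall out of a very simplified model in which the delivered frame rate is whatever the slower of the CPU and GPU can sustain. The sketch below assumes that model; every number in it is hypothetical and not a measurement of any real part.

```python
def delivered_fps(cpu_fps, gpu_fps):
    """Simplified bottleneck model: the slower stage sets the frame rate."""
    return min(cpu_fps, gpu_fps)


# Hypothetical figures for illustration only.
cpus = {"cpu_a": 90.0, "cpu_b": 120.0}  # frames/s each CPU could prepare
gpu_limits = {"1080p ultra": 70.0, "720p low": 250.0}  # frames/s the GPU could render

for setting, gpu_fps in gpu_limits.items():
    results = {name: delivered_fps(cpu_fps, gpu_fps) for name, cpu_fps in cpus.items()}
    print(setting, results)
```

At the GPU-limited setting both CPUs report the same number, which matches what most people would see in practice. At the low setting the GPU is out of the way and the gap between the CPUs becomes visible, which is the point of testing that way.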

Durante's example of the WD2 CPU benchmark shows that in a properly parallelized engine a modern i5 is ahead of an old i7. So, as I've said, there's only so much benefit HT may give you; virtual cores are just that - virtual cores.

I agree with this, which is why I think it's a shame that average FPS (and even minimum FPS) are still by far the most widespread metric.

I'm of the opinion that minimum framerates/0.1% frame times are critically important for gaming, and that averages really don't mean much for the overall gameplay experience.
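As a rough illustration of why an average can hide stutter, here is a small sketch that computes average FPS, minimum FPS, and a "low" figure from a list of frame times. The capture is made up, the function name is hypothetical, and benchmarking tools define their 1% / 0.1% lows in slightly different ways, so treat this as one possible definition rather than the standard one.

```python
def fps_metrics(frame_times_ms, low_fraction=0.01):
    """Average FPS, minimum FPS, and average FPS over the slowest `low_fraction` of frames."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    # Always look at one frame or more, even for very short captures.
    n_low = max(1, int(len(slowest_first) * low_fraction))
    low = slowest_first[:n_low]
    return {
        "average_fps": 1000.0 * len(frame_times_ms) / sum(frame_times_ms),
        "minimum_fps": 1000.0 / slowest_first[0],
        "low_fps": 1000.0 * len(low) / sum(low),
    }


# Made-up capture: 990 smooth frames at 10 ms plus 10 stutters at 50 ms.
sample = [10.0] * 990 + [50.0] * 10
print(fps_metrics(sample))
```

In this invented capture the average stays in the mid-90s while the slowest frames run at 20 fps, which is the kind of gap the average alone would never show.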

Check the results again, the i7-2600K is ahead of the i5-6600K when you look at minimum framerates.