captainclutch777

Member

Picked up the 10900KF for $330 from MC with an open-box Strix Z490 for $230. I'm a happy camper. The 5600X would be a bit better in single-core, but I figured the 10900KF would age much better with 4 extra cores, and I plan on closing the gap with a 5.2 GHz overclock and some 4400 MHz RAM. I also figure I can resell the 10900KF for around the same price within the next 2 years; it should hold its value well. Either way, it's good to have some real competition now!

Given what they cost at Micro Center these days, I'd think the best bet would be either a 10700K or a 10850K ($250 and $320 respectively).

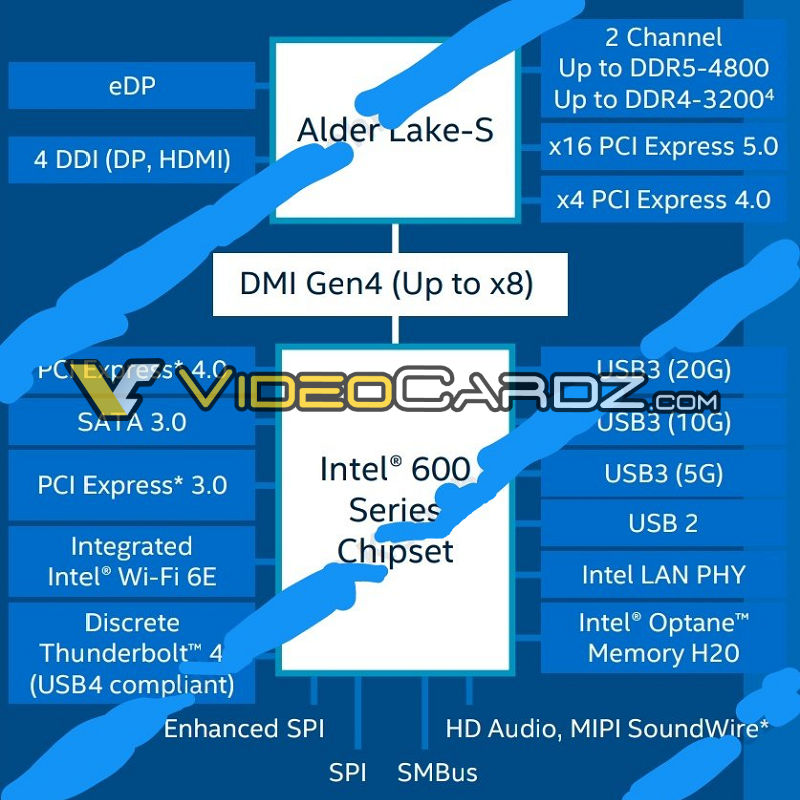

It does seem pointless to buy into Rocket Lake when you can get Comet Lake parts so cheap right now. Micro Center prices are insane. Hopefully Alder Lake picks up the pace and gives Intel some solid IPC gains that benefit games.
