nogoodnamesleft

Banned

Any estimate on when the next generation nvidia cards will be out to the public?

I'm confused by this: 16GB HBM2, 900GB/s bandwidth.

That's 4GB/s less than 16Gb GDDR5x, doesn't make sense.
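For anyone wanting to sanity-check bandwidth figures like these, here is a minimal sketch of the usual peak-bandwidth rule of thumb: bus width in bits times per-pin data rate, divided by 8 bits per byte. The two configurations are illustrative assumptions and not figures from the posts above: a 4096-bit HBM2 interface at roughly 1.75 Gbps per pin, and a 256-bit GDDR5X interface at 10 Gbps per pin. The function name is made up for this example.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Theoretical peak bandwidth in GB/s: pins * Gbit/s per pin / 8 bits per byte."""
    return bus_width_bits * gbps_per_pin / 8


# Assumed example configurations (not taken from the thread).
hbm2 = peak_bandwidth_gb_s(bus_width_bits=4096, gbps_per_pin=1.75)
gddr5x = peak_bandwidth_gb_s(bus_width_bits=256, gbps_per_pin=10.0)

print(f"HBM2   (4096-bit @ 1.75 Gbps): {hbm2:.0f} GB/s")
print(f"GDDR5X (256-bit  @ 10 Gbps):   {gddr5x:.0f} GB/s")
print(f"Ratio: {hbm2 / gddr5x:.1f}x")
```

Under those assumptions the wide HBM2 interface comes out well ahead despite the much lower per-pin rate, which is where the roughly 900GB/s figure comes from.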

Needed a significant increase in transistor count and die size to get there though.

Still, amazing power efficiency improvements.

I see.

Am I right in thinking (obviously just taking shots in the dark) that a 50% improvement would be a rough estimate of the performance gain of whatever the 1180 turns out to be over the 1080?

I'm curious if Volta has the potential for true 4K60 on a single card, or if that's another gen out past Volta yet.

50% improvement is pretty much expected on the same process by going with larger dies. However we still have no idea when and if gaming Volta will even launch.

We could extrapolate from Maxwell and Pascal?

Isn't Volta supposed to be the brand new architecture to replace Maxwell/Pascal? I actually used to think Pascal was a new architecture, but apparently it's just Maxwell with faster clocks and a few slight improvements. Wasn't Volta supposed to be the next big thing?

Also, how does memory bandwidth translate into gaming performance? For example, HBM(2) offers massive bandwidth increases relative to GDDR5, but what would be the actual result of this? Would you need to be playing at 4K or higher in order to see any tangible performance benefit?

Researchers from Arizona State University, Nvidia, the University of Texas, and the Barcelona Supercomputing Centre have published a paper (PDF) that looks at improving GPU performance using Multi-Chip-Module (MCM) GPUs. The team see MCM GPUs as one way to sidestep the deceleration of Moore's Law and the performance plateau predicted for single monolithic GPUs.

Transistor scaling cannot happen at historical rates anymore and chipmakers are staying with certain manufacturing processes longer but optimising performance in other ways. As "the performance curve of single monolithic GPUs will ultimately plateau," researchers are looking at how to make better performing GPUs from package-level integration of multiple GPU modules.

It is proposed that easily manufacturable basic GPU Modules (GPMs) are integrated on a package "using high bandwidth and power efficient signalling technologies," to create multi chip module GPU designs. To see if such a proposal is worthwhile and can bear fruit worth picking, the research team has been evaluating designs using Nvidia's in-house GPU simulator. Theoretical performance comparisons against multi-GPU solutions were also made.

MCM GPUs could do wonders for increasing the SM count and many GPU applications "scale very well with increasing number of SMs," observe the scientists. The research team looked at the possibilities of a 256 SMs MCM-GPU in the paper, and are pleased by its potential. Using the simpler GPM building blocks and advanced interconnects this 256 SM chip "achieves 45.5% speedup over the largest possible monolithic GPU with 128 SMs," assert the researchers.

Many of today's important GPU applications scale well with GPU compute capabilities and future progress in many fields such as exascale computing and artificial intelligence will depend on continued GPU performance growth. The greatest challenge towards building more powerful GPUs comes from reaching the end of transistor density scaling, combined with the inability to further grow the area of a single monolithic GPU die. In this paper we propose MCM-GPU, a novel GPU architecture that extends GPU performance scaling at a package level, beyond what is possible today. We do this by partitioning the GPU into easily manufacturable basic building blocks (GPMs), and by taking advantage of the advances in signaling technologies developed by the circuits community to connect GPMs on-package in an energy efficient manner. We discuss the details of the MCM-GPU architecture and show that our MCM-GPU design naturally lends itself to many of the historical observations that have been made in NUMA systems. We explore the interplay of hardware caches, CTA scheduling, and data placement in MCM-GPUs to optimize this architecture. We show that with these optimizations, a 256 SMs MCM-GPU achieves 45.5% speedup over the largest possible monolithic GPU with 128 SMs. Furthermore, it performs 26.8% better than an equally equipped discrete multi-GPU, and its performance is within 10% of that of a hypothetical monolithic GPU that cannot be built based on today's technology roadmap.

Nvidia evaluating multi chip module GPU designs

http://hexus.net/tech/news/graphics/107560-nvidia-evaluating-multi-chip-module-gpu-designs/
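To put the quoted percentages on one scale, here is a rough sketch that normalises everything to the 128 SM monolithic GPU. It reads "within 10%" as the MCM-GPU reaching at least 90% of the hypothetical monolithic 256 SM part, which is an assumption about the wording rather than something the abstract spells out. The variable names are made up for illustration.

```python
# Relative performance, normalised to the largest buildable monolithic GPU (128 SMs).
baseline_128sm = 1.0

# "45.5% speedup over the largest possible monolithic GPU with 128 SMs"
mcm_256sm = baseline_128sm * 1.455

# "performs 26.8% better than an equally equipped discrete multi-GPU"
multi_gpu_256sm = mcm_256sm / 1.268

# "within 10% of ... a hypothetical monolithic GPU" (assumed: MCM >= 0.90 * monolithic)
monolithic_256sm_upper = mcm_256sm / 0.90

# Share of the ideal 2x that doubling the SM count actually delivers.
scaling_efficiency = mcm_256sm / 2.0

print(f"Multi-GPU, 256 SMs:         {multi_gpu_256sm:.2f}x")
print(f"MCM-GPU, 256 SMs:           {mcm_256sm:.2f}x")
print(f"Monolithic 256 SMs (up to): {monolithic_256sm_upper:.2f}x")
print(f"MCM scaling efficiency:     {scaling_efficiency:.1%}")
```

On that reading, doubling the SM count on a package gets a bit under three quarters of the ideal 2x, with the discrete multi-GPU setup trailing noticeably.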

Interesting. Would this require them to effectively "solve" the problem of SLI support?

Seems this is the way forward for Nvidia in 2019+

Interesting. Would this require them to effectively "solve" the problem of SLI support?

no, not necessarily

Similar issues would necessarily arise with a multichip setup, no? Though I guess the solution they come up would not necessarily be generalizable to mGPU, or they might not bother doing so.

Any news on Xavier?

Well I was thinking of upgrading after Volta (I bought a 970 and 1070), so in terms of TFs for the 70 series (going by 70% increases each time)

Pascal - 6.5 TF

Volta - 11TF

After Volta - 18TF that's gotta be enough for 4k60 at that point.
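The extrapolation above is just compound growth, so it can be checked with a few lines. This is a minimal sketch using the poster's own inputs (6.5 TF for the Pascal 70-series card and 70% per generation); the function name is made up, and the 70% rate is the poster's guess, not a known figure.

```python
def project_tflops(base_tflops: float, growth: float, generations: int) -> list[float]:
    """Compound the base figure by `growth` once per generation (generation 0 is the base)."""
    return [base_tflops * (1 + growth) ** n for n in range(generations + 1)]


names = ["Pascal", "Volta", "After Volta"]
values = project_tflops(base_tflops=6.5, growth=0.70, generations=2)

for name, tflops in zip(names, values):
    print(f"{name}: {tflops:.1f} TF")
```

Compounding exactly gives slightly more than the rounded 11TF and 18TF quoted above, since the second step starts from 11.05 rather than 11.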

Just in time for 8k displays to hit the mainstream.

NVIDIA Gives Xavier Status Update & Announces TensorRT 3 at GTC China 2017 Keynote

Earlier today at a keynote presentation for their GPU Technology Conference (GTC) China 2017, NVIDIA's CEO Jen-Hsun Huang disclosed a few updated details of the upcoming Xavier ARM SoC. Xavier, as you may or may not recall with NVIDIA's current codename bingo, is the company's next-generation SoC.

Essentially the successor to the Tegra family, Xavier is planned to serve several markets. Chief among these is of course automotive, where NVIDIA has seen increasing success as of late.

In today's GTC China keynote, Jen-Hsun revealed updated sampling dates for the new SoC, stating that Xavier would begin sampling in Q1 2018 for select early development partners and Q3/Q4 2018 for the second wave of partners. This timeline actually represents a delay from the originally announced Q4 2017 sampling schedule, and in turn suggests that volume shipments are likely to be in 2019 rather than 2018.

So Nintendo is officially going with the hybrid approach going forward? I hope they do. Tech is always getting better, plus it's so convenient having a console and handheld in the one package.

Nintendo doesn't seem to have a fixed design philosophy other than blue ocean. They could go in a wildly different direction next gen.

Knowing Nintendo, that could definitely happen.

A new mobile SoC at 12nm+ or 10nm sounds very exciting.

Nvidia CEO says Moore's Law is dead and GPUs will replace CPUs

http://www.pcgamer.com/nvidia-ceo-says-moores-law-is-dead-and-gpus-will-replace-cpus/

No doubt Nvidia is concurrently working on at least two more GPU architectures beyond Volta, given the company's huge size and massive R&D budget. I mean, AMD has Navi for 7nm and beyond that "Next Gen" for 7nm+, so Nvidia is doing at least something similar if not more. The real question is, what will the architecture names be?

Fermi

Kepler

Maxwell

Pascal

Volta

?????

?????

When are these expected to hit retail in 2018?

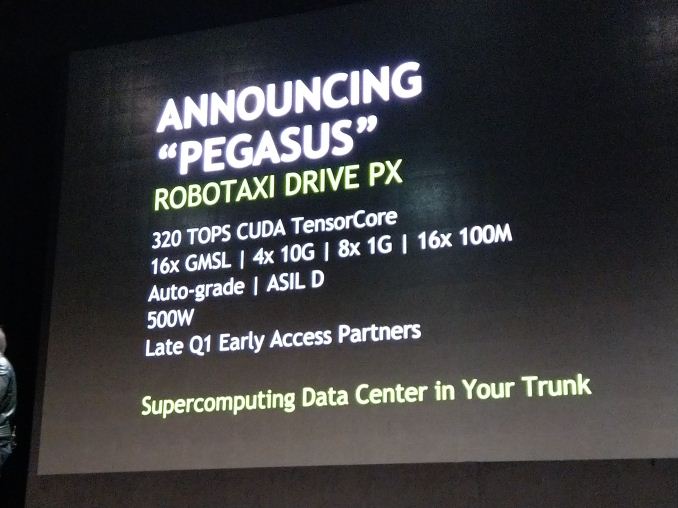

Not sure what you mean by "these" - Drive PX Pegasus, which was unveiled today? It will never hit retail, as it's a unit made specifically for automotive / taxi companies and will probably be sold via B2B channels only.

As for when this will happen - 1H18 is for selected partners and 2H18 for large scale availability.

Next GeForce lineup is expected to launch in Spring 2018 but it's anyone's guess on how these two are related.

Sorry, I should have specified. I meant the next gen GeForce GPUs.

A mate is building a gaming PC for 4k/60fps and I was wondering if he should hold off for a big leap in power if it's going to be available in the early part of next year. Money's no object to him, so I guess he should just wait until next Spring.

Time to throw my 1080 in the bin?