Texture quality isn't only dependent on VRAM. It's also dependent on texture fill rate, which is a function of TMU count and clock speed. An HD 7950, which can be found for $200-250, has 112 TMUs at 900 MHz vs. the PS4's 72 TMUs at 800 MHz and the X1's 48 TMUs at 853 MHz. A mid-level card absolutely demolishes the two consoles when it comes to texture fill rate, and that's before even considering how easy it is to overclock these things. My overclocked 7950 is currently sitting at twice the fill rate of the PS4.
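The fill-rate comparison in this post is just TMU count multiplied by core clock. Here is a minimal Python sketch of that arithmetic. It uses the figures exactly as the poster quoted them (the 900 MHz HD 7950 clock is the poster's number, and individual boards ship at different clocks). It assumes each TMU delivers one bilinear-filtered texel per clock, which is a theoretical peak and not sustained in-game throughput. The function names are illustrative only.

```python
# Peak texture fill rate = TMU count x core clock, assuming each TMU delivers
# one bilinear-filtered texel per clock (a theoretical peak, not sustained throughput).

# Figures as quoted in the post: (TMUs, core clock in MHz).
SPECS = {
    "HD 7950 (as quoted)": (112, 900),
    "PS4": (72, 800),
    "Xbox One": (48, 853),
}


def fill_rate_gtexels(tmus: int, clock_mhz: float) -> float:
    """Peak bilinear texture fill rate in gigatexels per second."""
    return tmus * clock_mhz / 1000.0


def clock_for_target(tmus: int, target_gtexels: float) -> float:
    """Core clock (MHz) a GPU with `tmus` TMUs would need to reach `target_gtexels`."""
    return target_gtexels * 1000.0 / tmus


if __name__ == "__main__":
    for name, (tmus, mhz) in SPECS.items():
        print(f"{name}: {fill_rate_gtexels(tmus, mhz):.1f} GTexel/s")
    ps4 = fill_rate_gtexels(*SPECS["PS4"])
    print(f"HD 7950 clock needed for 2x PS4: {clock_for_target(112, 2 * ps4):.0f} MHz")
```

With the quoted figures this works out to roughly 100.8, 57.6 and 40.9 GTexel/s. Doubling the PS4's figure would need the 7950's 112 TMUs running at roughly 1029 MHz.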


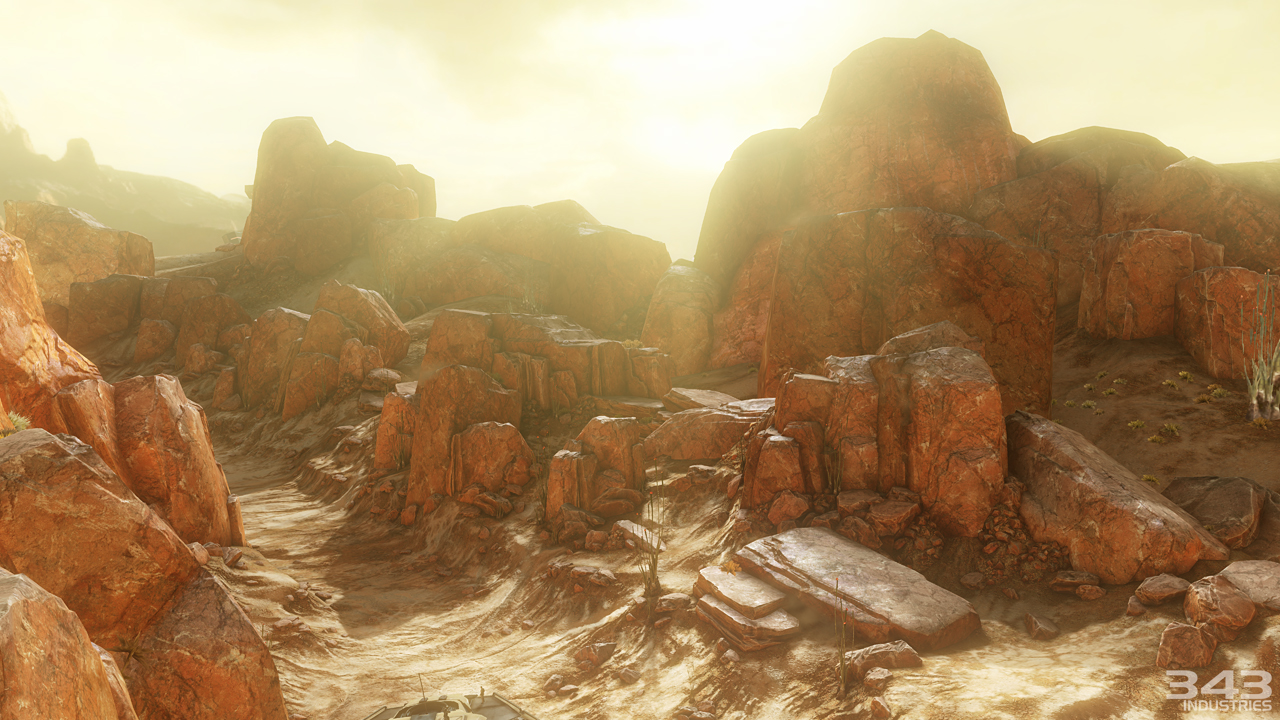

PC Call of Duty Ghosts using higher-res textures than next-gen console versions

- Thread starter x3sphere


I've got this weird feeling COD will look best on PS4.

If we're only talking consoles, definitely. Across all platforms, though, PC will again be the definitive version visually.

Stallion Free

Cock Encumbered

Textures aren't ALU-bound at all. "Flops" are meaningless. The most important factors are bandwidth and the amount of memory. The PS4 has more memory than most GPUs and more bandwidth than anything outside of top-end GPUs. It also has an abundance of ROPs and TMUs.

I think IW just sucks, to be honest. Every detail they reveal about this engine makes it seem worse.

You should let IW know so they can fix this error.

Textures aren't ALU-bound at all. "Flops" are meaningless. The most important factors are bandwidth and the amount of memory. The PS4 has more memory than most GPUs and more bandwidth than anything outside of top-end GPUs. It also has an abundance of ROPs and TMUs.

I think IW just sucks, to be honest. Every detail they reveal about this engine makes it seem worse.

You have absolutely no idea what you are talking about. Spouting out technical acronyms just makes you look like an idiot.

"Abundance of ROPs and TMUs" Hahaha. It's like you're trying very hard to appear smart.

This is a good excuse to get people to triple-upgrade: get it on the 360 because you can't wait worth a damn, get it upgraded to Xbox One when you get one, and then finish it off later down the road with the definitive PC version. Activision has business sense like no other. I always say they're at the top of the game.

NullPointer

Member

It really does.

SneakyStephan

Banned

People wanted to see the next generation of games at E3. What were the publishers supposed to show them, PS4 and XBO games?

Heyoooo

BigTnaples

Todd Howard's Secret GAF Account

It really isn't that clear, and the fault is not how close the camera is but how small the screenshot is. You underestimate the effect of a camera inches off the ground; even vanilla Skyrim textures look decent with a third-person camera.

edit:

Yeah, like this one. The resolution isn't intended to be on par with that of a first-person camera, even though they are more detailed.

This post shows a fundamental lack of knowledge and understanding in how textures work.

The texture work is better in any of those Uncharted gameplay shots than in the tech demo made by Infinity Ward, with the camera angle chosen by those in charge of the textures. End of story, this is not debatable, no matter how much you want to blame it on the camera (even though the CoD shot is from noclip mode...).

But okay, here are some first-person screens of some games on 360.

This is a closeup of a grenade from a grenade launcher flying through the air in Halo Reach.

Halo 3 from 2007. "If you can read this you're too close."

(I took all these shots myself, yes, in replay mode, which, no, does not affect texture art or resolution.)

Halo 4

Loses the detail-texture effect, but still great artistically.

All that on 512 MB of RAM in 2006-2012.

2013: new hardware, 8000 MB of RAM, new engine, screenshot from a tech demo made by the devs.

For my money, GHOSTS looks like a PS3 game running at 1080p with a few bells and whistles, which is probably exactly what it is, given its cross-gen nature.

It really doesn't.

Shadow Fall is a day-one graphical benchmark for this gen; as the gen progresses, it will be outshone many times over. Devs are no longer hindered to any great degree by tech, so the quality of a title is entirely down to resources: budget, time and the personnel on your team.

Why do those Halo 3 screenshots look aliasing-free? Unless my eyes are deceiving me.


Theater mode does that. In-game the jaggies can kill.

That explains it. I hate theater mode and other bullshit replay modes that don't represent the actual game.

NullPointer

Member

Theater mode does that. In-game the jaggies can kill.

Why do those Halo 3 screenshots look aliasing-free? Unless my eyes are deceiving me.

No way. Halo 3 ran at a very high resolution and featured unlimited amounts of texture filtering and anti-aliasing. Just like Uncharted and TLoU. Therefore, the posted shots of all these titles are entirely representative of said games, unlike the actual, real & honest framebuffer grab of Call of Duty.

cobragt4001

Banned

You should let IW know so they can fix this error.

LMAO

iamshadowlark

Banned

You should let IW know so they can fix this error.

I will, by not buying a title on their crappy, unimpressive engine.

Texture quality isn't only dependent on VRAM. It's also dependent on texture fill rate, which is a function of TMU count and clock speed. An HD 7950, which can be found for $200-250, has 112 TMUs at 900 MHz vs. the PS4's 72 TMUs at 800 MHz and the X1's 48 TMUs at 853 MHz. A mid-level card absolutely demolishes the two consoles when it comes to texture fill rate, and that's before even considering how easy it is to overclock these things. My overclocked 7950 is currently sitting at twice the fill rate of the PS4.

Well, TMUs only matter if there aren't enough of them. Since it's a console, the resolution is fixed, so you only need "enough"; beyond that point, extra TMUs add no value.
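To put a rough number on "enough", here is a hypothetical Python sketch. It compares the texel throughput a fixed 1080p60 target might need against the PS4's peak fill rate, using the quoted 72 TMUs at 800 MHz. The per-pixel workload is entirely assumed for illustration: samples per pixel, overdraw, and filtering cost are not measured values, and real demand varies widely per game and per frame. Treat it as a back-of-the-envelope estimate. The function names are illustrative only.

```python
# Hypothetical sketch: texel throughput a fixed-resolution target might demand,
# compared with a GPU's peak bilinear fill rate (TMUs x clock).
# Every per-pixel parameter used below is an illustrative ASSUMPTION.


def texel_demand_gtexels(width, height, fps, samples_per_pixel, overdraw, cycles_per_sample):
    """Approximate TMU cycles needed per second, in billions, for one render target."""
    pixels_per_second = width * height * fps
    return pixels_per_second * overdraw * samples_per_pixel * cycles_per_sample / 1e9


def peak_fill_gtexels(tmus, clock_mhz):
    """Peak bilinear fill rate in gigatexels per second."""
    return tmus * clock_mhz / 1000.0


if __name__ == "__main__":
    # Assumed workload: 1080p60, 8 texture samples per shaded pixel, 2x overdraw,
    # 2 TMU cycles per sample (e.g. trilinear filtering, which many GPUs run at
    # half the bilinear rate). All of these numbers are hypothetical.
    demand = texel_demand_gtexels(1920, 1080, 60, samples_per_pixel=8,
                                  overdraw=2.0, cycles_per_sample=2)
    ps4_peak = peak_fill_gtexels(72, 800)
    print(f"demand ~{demand:.1f} G/s vs PS4 peak {ps4_peak:.1f} G/s "
          f"({demand / ps4_peak:.0%} of peak)")
```

The result depends heavily on the assumed parameters. Heavy anisotropic filtering or many texture layers per pixel can multiply the demand several times over, so the sketch only shows how to frame the "enough" argument, not where the line actually falls.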

You have absolutely no idea what you are talking about. Spouting out technical acronyms just makes you look like an idiot.

"Abundance of ROPs and TMUs" Hahaha. It's like you're trying very hard to appear smart.

Wait, where was I wrong? Since my posts are so idiotic, it should be easy to spot. I'll wait.

Coffee Dog

Banned

Why do some people not understand that for the $400 premade box you're not going to be able to play games at graphically demanding settings?

It's the price you pay for convenience.


Wait, where was I wrong? Since my posts are so idiotic it should be easy to spot. I'll wait.

You defended the consoles, and so you are an idiot... I don't know, but what I know is that you should know by now that only PC enthusiasts have a clue about what is going on in the metal.

errrrr... I think.

Vulcano's assistant

Banned


Well, obviously the texture work is better; it's not like I said otherwise. And yeah, those Halo shots seem to have higher res than Uncharted, although they're less artistically competent. What are we not agreeing on, again?

edit: And sorry about the "you underestimate." I usually try to use language that won't offend people, but you know how these threads are; everyone seems to be on the defensive. It wasn't my intention. I blame the atmosphere of discussion.

Did DICE confirm they were targeting 60fps for the next-gen console versions of BF4? In that case I guess we just haven't seen what the console versions look like.

They have only confirmed the framerate so far, resolution seems to still be unknown.

SirMossyBloke

Member

Why do some people not understand that for the $400 premade box you're not going to be able to play games at graphically demanding settings?

It's the price you pay for convenience.

Because Ghosts doesn't look graphically demanding? I think that's the point of the arguing in this thread anyway.

Poetic.Injustice

Member

I'm surprised that we have yet to see console footage of these games. We're close to launch yet they're still using PC footage to showcase the games.

BigTnaples

Todd Howard's Secret GAF Account

No way. Halo 3 ran at a very high resolution and featured unlimited amounts of texture filtering and anti-aliasing. Just like Uncharted and TLoU. Therefore, the posted shots of all these titles are entirely representative of said games, unlike the actual, real & honest framebuffer grab of Call of Duty.

Not only is this explained in the post the shots were posted in, but I know you know ENOUGH about technology to know that replay mode in Halo 3 does not affect texture resolution and artwork. AF only affects texture clarity at a distance and at oblique angles, and AA has nothing to do with texture work. Lighting, geometry, textures and effects are all exactly the same, and since that is what we are comparing... yeah.

I never thought I would see a day when these textures were defended as acceptable for next gen hardware.

I literally shouldn't have to compare it to anything at all. It looks substantially worse than 80% of the current gen games we can all pop in and play right now.

Forgot how comparisons make fanboys come out of the woodwork.

JonathanPower

Member

Wait, where was I wrong? Since my posts are so idiotic it should be easy to spot. I'll wait.

You are not wrong. The PC version has bigger textures because it can run at resolutions greater than 1080p.
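The reasoning behind "bigger textures because of higher resolutions" can be sketched with a simplified mip-level estimate. The GPU picks a mip level roughly from how many texels land on each screen pixel, so a surface drawn with more pixels samples a more detailed mip. This Python sketch assumes a hypothetical 4096x4096 texture mapped once across a wall that spans half the screen height. It ignores anisotropic filtering and viewing angle; real hardware derives the level of detail from screen-space derivatives of the texture coordinates. The function name is illustrative only.

```python
import math


def approx_mip_level(texture_texels: int, screen_pixels: float) -> float:
    """Simplified LOD: log2 of texels per screen pixel along one axis,
    clamped at 0 (mip 0 = full-resolution texture)."""
    texels_per_pixel = texture_texels / screen_pixels
    return max(0.0, math.log2(texels_per_pixel))


if __name__ == "__main__":
    tex = 4096  # hypothetical 4096x4096 texture
    # Hypothetical wall spanning half the screen height at each output resolution.
    for label, height in (("1080p", 1080), ("1440p", 1440), ("2160p", 2160)):
        covered = height / 2
        lod = approx_mip_level(tex, covered)
        print(f"{label}: wall covers {covered:.0f} px -> mip ~{lod:.2f} "
              f"(~{tex / 2 ** lod:.0f} texels across)")
```

At 1080p the wall only covers about 540 pixels, so the sampled mip carries roughly 540 texels across and the top levels of the 4096 texture are never shown. At higher output resolutions the sampled mip moves closer to level 0.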

Why do some people not understand that for the $400 premade box you're not going to be able to play games at graphically demanding settings?

It's the price you pay for convenience.

All next-gen demos and launch games are destroying everything we have seen of COD: Ghosts so far.

I think it's normal to be unhappy about this. You would be unhappy too if you had common sense.

You are not wrong. The PC version has bigger textures because it can run at resolutions greater than 1080p.

This. As it should be. (First post wins again, but I think it wasn't totally intentional.)

Principate

Saint Titanfall

I'm surprised that we have yet to see console footage of these games. We're close to launch, yet they're still using PC footage to showcase the games.

Eh, probably because their console footage isn't all that impressive, so they're going for the bullshot effect.

cobragt4001

Banned

Did DICE confirm they were targeting 60fps for the next-gen console versions of BF4? In that case I guess we just haven't seen what the console versions look like.

60fps is confirmed but no one knows if 1080p will be the res for BF4 on next gen consoles.

BigTnaples

Todd Howard's Secret GAF Account

And in comes Dennis with some more common sense.

BigTnaples

Todd Howard's Secret GAF Account

Well, obviously the texture work is better; it's not like I said otherwise. And yeah, those Halo shots seem to have higher res than Uncharted, although they're less artistically competent. What are we not agreeing on, again?

edit: And sorry about the "you underestimate." I usually try to use language that won't offend people, but you know how these threads are; everyone seems to be on the defensive. It wasn't my intention. I blame the atmosphere of discussion.

No worries mate, easy to do here.

cobragt4001

Banned

Are those direct-feed pics? If not, your comparison is no good.

FullMetalx117

Member

This is a perfect case where people were like "ZOMG it has 8 GB GDDR5 RAM" but then forgot the GPU is at best a 7850, which is a mid-tier GPU. It could have 20 GB of GDDR5 RAM and it still wouldn't touch a 6 GB Titan.

BigTnaples

Todd Howard's Secret GAF Account

Next-gen is defined by textures, not by thinking outside the box. Meh.

Get out of here with this. Just because people who enjoy good graphics discuss them does not mean "Next Gen is defined by textures".

There are people like you at every single console launch, and it never gets less annoying.

I assure you, 8 GB of RAM allows for more "thinking outside of the box" than 512 MB ever did.

Graphics and gameplay are not mutually exclusive; we can and should ask for both. And we often get both. Which raises the question: why does this have to be clarified in 2013?

Surprisingly, any RAM will do for holding textures.

Umm, not on a PC. They don't have unified memory; the textures must be resident in VRAM.

Are those direct-feed pics? If not, your comparison is no good.

And then what? We're comparing texture work here, not IQ. Even if they're more filtered than normal, you should see they're much better than the Ghosts ones.

And I really think that if those TLOU screens are bullshots, so are the Ghosts ones.

Vulcano's assistant

Banned

Last time I saw pics of that dog, the "fur" was not very good. The hairs, although flat, better be discernible this time if what they say is true.

BigTnaples

Todd Howard's Secret GAF Account

This is a perfect case where people were like "ZOMG it has 8 GB GDDR5 RAM" but then forgot the GPU is at best a 7850, which is a mid-tier GPU. It could have 20 GB of GDDR5 RAM and it still wouldn't touch a 6 GB Titan.

Agreed. But should it not be able to touch a 6800 from 2006?

That is the point of the discussion.

This is a perfect case where people were like "ZOMG it has 8 GB GDDR5 RAM" but then forgot the GPU is at best a 7850, which is a mid-tier GPU. It could have 20 GB of GDDR5 RAM and it still wouldn't touch a 6 GB Titan.

Yeah, we already know that. And it doesn't prove shit.

Welcome to this mess, by the way.

Last time I saw pics of that dog, the "fur" was not very good. The hairs, although flat, had better be discernible this time if what they say is true.

This is a perfect case where people were like "ZOMG it has 8 GB GDDR5 RAM" but then forgot the GPU is at best a 7850, which is a mid-tier GPU. It could have 20 GB of GDDR5 RAM and it still wouldn't touch a 6 GB Titan.

You're also comparing a $400 console to a $1000 graphics card. People need to compare apples to apples. If you're willing to spend the dough of course you can build a PC that blows away Xbox One and PS4.

Axispowers

Member

What does this mean for titanfall?