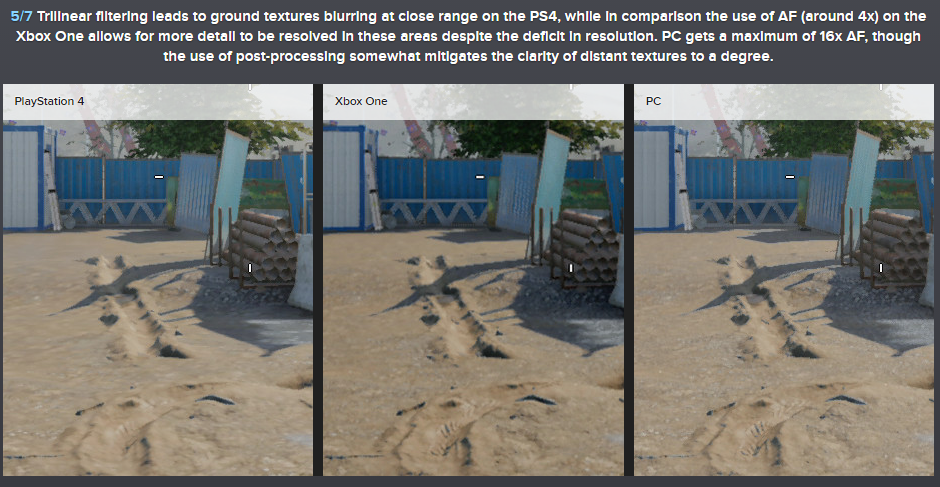

You see, that's what irritates me. It's not like these consoles can't handle high AF or will suffer from severe frame drops if the implementation is a tad higher. Ideally, I would like 16x AF (like Dark Souls II: SotfS), although I know that may not be possible for current-gen-only titles. But the fact of the matter is that history has shown an improvement in texture filtering doesn't have that much performance cost at all (see Dying Light and DmC Definitive Edition pre- and post-patch; they had no performance cost whatsoever when a higher AF method was implemented).

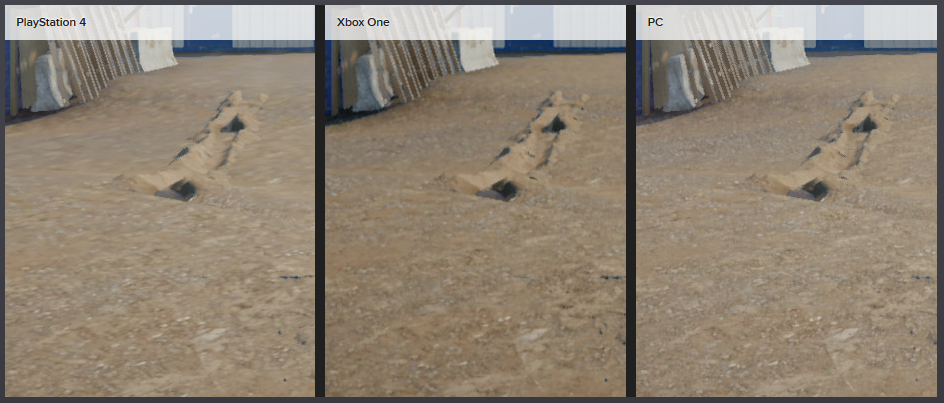

At the very least on current-gen titles, I expect 8x AF. I'm generally not sensitive to these issues (hell, I've never *ever* noticed screen tearing in any PS3/4 game I've played, I'm dead serious), yet poor texture filtering implementation ruins the IQ for many games. Take Destiny as an example of this: despite a nice art direction and aesthetic design, the textures at a distance are really blurry and it's quite off-putting. Many other games do this as well, like the recent CoD games. It's irritating to say the least, as many of the games that suffer from this on PS4 are quite good graphically to begin with. They just need that extra polish.

Sorry for the rant lol, but it's something that has been going on for over a year, and I expect it to at least be sorted out (I know Sony have included something in their SDK to make it more "obvious" to devs or something, but clearly that hasn't worked). I'd expect some QA testers or at least developers to notice: "Hey, this image looks a bit blurry at an angle or from a distance, maybe we can improve the texture filtering so our game can look more polished, since it has minimal [to no] performance cost".

Perhaps I'm missing the point or something really obvious, in which case I'd actually like to know lol. What do you think? Is it just sheer incompetence, or is it something that's not seen as important?