That goes without saying, because CUs don't scale linearly. For example, if you have 6 CUs, adding 2 more isn't going to give you the same per-CU performance as the initial 6; add another 2 and they won't give you the same per-CU performance as the previous 8, and so on. However, that doesn't mean there's no performance increase at all, which is what everyone is assuming. The same thing applies to GCN hardware on desktop GPUs. You're never getting 100% out of each additional CU that's added (this goes for Nvidia's CUDA cores as well), but you are getting a percentage increase in performance nevertheless. One thing we do know is that 12-14 CUs isn't the ceiling, or anywhere near it, for AMD's GCN architecture in terms of efficiency.
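
To put rough numbers on the diminishing-returns point, here's a minimal sketch that uses Amdahl's law as a stand-in model. The 0.95 parallel fraction is a made-up assumption for illustration, not a measured figure for GCN or any real GPU, and the function names are hypothetical.

```python
def speedup(cus, parallel_fraction=0.95):
    """Amdahl's-law speedup relative to a single CU.

    parallel_fraction is an assumed, illustrative value, not a
    measurement of any real workload or GPU.
    """
    return 1.0 / ((1.0 - parallel_fraction) + parallel_fraction / cus)


def marginal_gains(cu_counts, parallel_fraction=0.95):
    """Average speedup gained per added CU between consecutive counts."""
    gains = []
    for prev, curr in zip(cu_counts, cu_counts[1:]):
        delta = speedup(curr, parallel_fraction) - speedup(prev, parallel_fraction)
        gains.append(delta / (curr - prev))
    return gains


if __name__ == "__main__":
    counts = [6, 8, 10, 12, 14, 18]
    for n in counts:
        print(f"{n:2d} CUs -> {speedup(n):.2f}x")
    for (a, b), g in zip(zip(counts, counts[1:]), marginal_gains(counts)):
        print(f"{a:2d} -> {b:2d} CUs: +{g:.3f}x per added CU")
```

Under this toy model every step up in CU count still adds performance, but each added CU contributes less than the ones before it, which is the point being made above.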

A 14:4 split doesn't make sense, simply because it undermines the entire point of increasing the ACEs to 8 with 8 CLs.

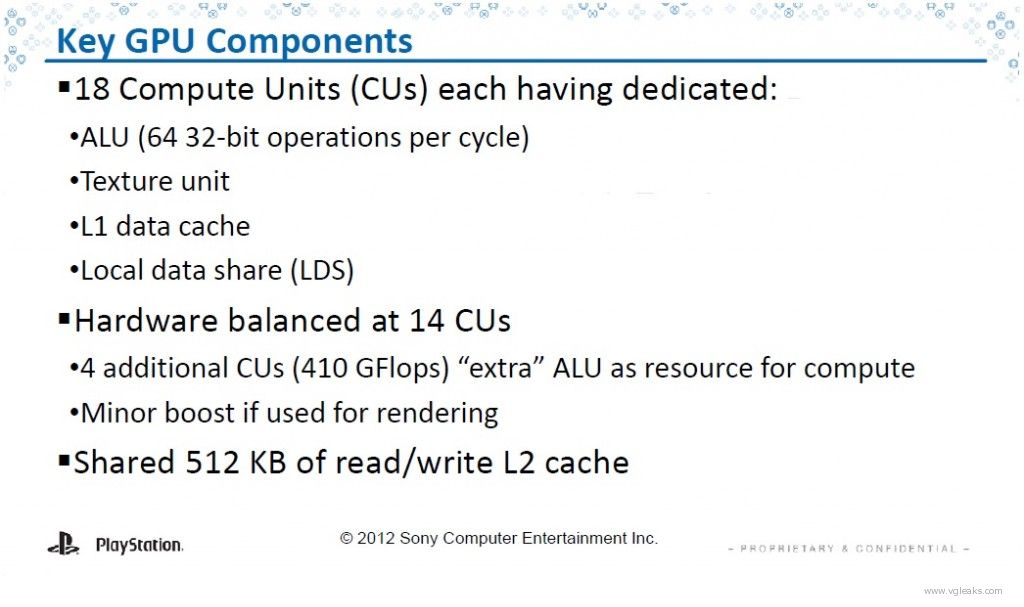

Gemüsepizza;84200703 said: The FLOPS performance doesn't change. And using 18 CUs for rendering is better than using 14 CUs for rendering. Like I said, Killzone uses most CUs for rendering and no compute for particles. But there is a possibility that, if you are using some of those CUs for things like particles instead of for normal rendering, it will look better.

Appreciate this stuff. Anywhere I can go for good reading/learning on it?