Coulomb_Barrier

It's becoming a joke, the cost of system building. DDR4 prices won't come down either. If it weren't for Ryzen and AM4 motherboards, building a good system right now would be prohibitively expensive for most.

PCGH showed a little teaser for next week (topic unknown so far).

But they tested a game where Nvidia is CPU-limited and the GTX 1060 through 1080 Ti are pretty much at the same level.

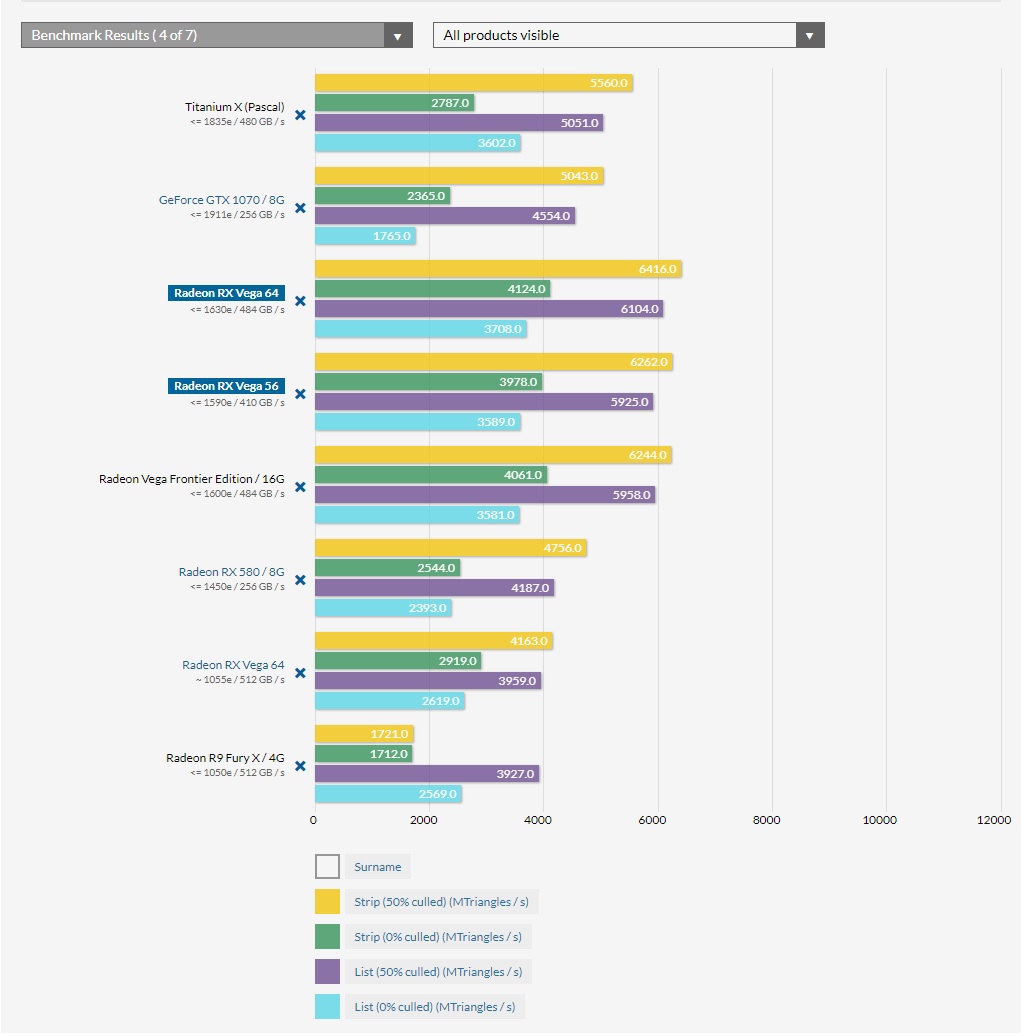

Interestingly enough, Vega 56 and Vega 64 are beating all the GeForces.

So in this CPU limited case AMD is in front, which is quite rare.

Hawaii (390X) might perform worse than expected, and the Fury X is just a bit faster than the 580, but Vega really pulls ahead there.

But increasing the resolution to UHD absolutely tanks Radeon performance. Phil from PCGH (blaidd on 3DCenter) says that the Vega 56 performs roughly at GTX 1060 level and the Vega 64 roughly at GTX 1070 level.

The game could be PLAYERUNKNOWN'S BATTLEGROUNDS.

https://www.forum-3dcenter.org/vbulletin/showthread.php?p=11465009#post11465009

https://www.phoronix.com/forums/for...opengl-proprietary-driver?p=970697#post970697

bridgman (AMD) said:

Draw Stream Binning Rasterizer is not being used in the open drivers yet. Enabling it would be mostly in the amdgpu kernel driver but optimizing performance with it would be mostly in radeonsi and game engines.

HBCC is not fully enabled yet, although we are using some of the foundation features like 4-level page tables and variable page size support (mixing 2MB and 4KB pages). On Linux we are looking at HBCC more for compute than for graphics, so SW implementation and exposed behaviour would be quite different from Windows where the focus is more on graphics. Most of the work for Linux would be in the amdgpu kernel driver.

Primitive Shader support - IIRC this is part of a larger NGG feature (next generation geometry). There has been some initial work done for primitive shader support IIRC but don't know if anything has been enabled yet. I believe the work would mostly be in radeonsi but haven't looked closely.

For both DSBR and NGG/PS I expect we will follow the Windows team's efforts, while I expect HBCC on Linux will get worked on independently of Windows efforts.

It's interesting to see how the Vega FE's performance compares to the Vega 64, though the clocks fluctuate quite a lot on both cards in this testing.

The Witcher 3 AMD RX Vega 64 Vs AMD Vega Frontier Edition Frame Rate Comparison (YouTube)

Apparently, there's a bug whereby the drivers report higher clock rates when overclocking but don't actually change the real clock rate.

The 64 is running one step higher on clocks and gets 1-2 more frames. This basically shows that there's nothing in the driver specifically to make the 64 faster than the FE.

The game driver for the FE is exactly the same as the Vega 64 driver... even the updates arrive close to each other, with the same fixes/features.

Similar results for both cards.

But the power draw and temperature do increase when people do this, in the reviews I've seen.

Edit: I've seen the article. It's not that Vega isn't overclocking; it's just not overclocking to the ridiculous clock speeds, like 2 GHz, that people think they're managing.

Just wanted to share what just happened.

Got a friend who works for Nvidia that came over to visit and play some games. While playing, I said "So...AMD...."

He then stopped playing, dropped his controller on his lap, looked at me in the eyes, and started laughing hysterically.

He said his reaction represents the general feeling Nvidia has about Vega right now.

Oh man....

Can you blame him? AMD is years late and their cards can only trade blows with the 1070 and 1080 while consuming more power. This pricing bait and switch isn't going to play out well either. Nvidia is literally sitting on Volta because they don't need to release it right now. Volta consumer graphics cards could be released in a couple of months if Nvidia wanted to do so, but they can stay with Pascal until 2018.

Vega isn't going to shake things up like Ryzen has.

That's not how it worked with the 980 Ti, which was sitting there waiting for the Fury X.

Product pipelines are already on a planned schedule years in advance. Nvidia doesn't have consumer Volta out because they simply aren't ready to release it. They will release it when they are ready.

So AMD sells Vega as:

- standalone card
- bundle 1 (deal with games)
- bundle 2 (another deal, I don't know with what)

As bundles are rather shitty, hardly anyone wants them.

AMD, however, officially stated that they will restock all the offerings.

Still, since the bundles are shitty, it is dirty play. The hidden Founders Edition-style pricing that nVidia easily got away with would have been "more open" and less damaging PR-wise.

But the reviewer drama is laughable. "Oh my god, we recommended a card at MSRP but it's nowhere to be found at MSRP 1-2 weeks after launch." So what?

That's not how it worked with 980Ti which was sitting there waiting for Fury X.

Their complaint isn't that no cards are available at MSRP, it's that apparently that initial MSRP is a lie and was only valid for one week (which was not disclosed to reviewers). So now the standard MSRP is apparently going to be $100 higher.

At least, that is my understanding of the issue. They talked about it on the latest WAN show.

980 Ti was not new technology; it was a cut-down Titan.

Volta is a whole different ball of wax at this stage in its development.

Well, the Vega release must have been the best day at Nvidia since AMD released the 7970 for $550 and it turned out they could counter it with the midrange GK104 die.

Whatever the case, the first Volta cards are not releasing for at least 6 months, likely longer. That is great news for AMD, actually.

Also, the 1080 Ti was released by Nvidia when it was ready. They didn't bother to wait for Vega either, and now we know no one should have waited for it.

It means Navi (7nm) will be a bit "less late".

How?

Not unless they sort out the pricing situation. No one will (or should) be buying $500+ Vega 56 or $600+ Vega 64.

I don't see any business value in keeping a product in your back pocket just to greet a competitor. Just release it and take the uncontested enthusiast dollars; humans are thirsty.

Nvidia almost assuredly had multiple 1080Ti launch windows planned, the problem is Vega was delayed so many times they couldn't afford to sit on it any longer.

Wait, the alleged price controversy was about Vega 64, wasn't it? Or is it expected to include Vega 56 as well?

Anyway, the air-cooled Vega 64 in Japan (not the bundled version) is $700. You can get a 1080 for less than $600.

The LC Vega is $850. You can get a 1080 Ti for $800.

I really don't know what to think. Why would ANYONE buy those cards?

I guess they can't pull off two Zens in a single year.

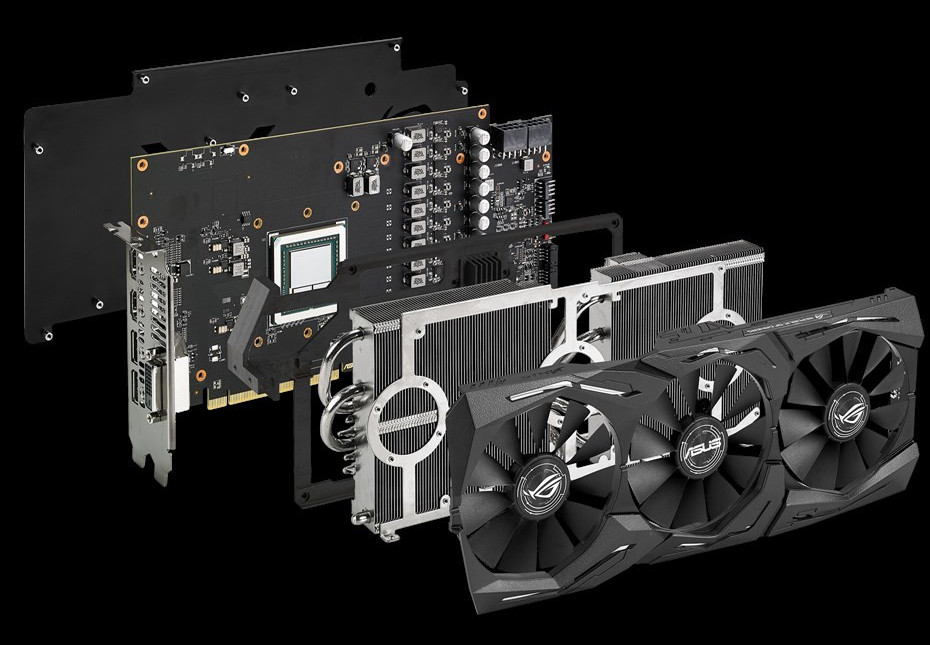

ASUS today introduced the Republic of Gamers (ROG) STRIX Radeon RX Vega 64 O8G graphics card, one of the first (and probably the first) custom-design RX Vega 64 cards to hit the market (model: ROG-STRIX-RXVEGA64-O8G-GAMING). The card combines a custom-design PCB by ASUS with the latest-generation DirectCU III cooling solution the company also deploys on its STRIX GTX 1080 Ti graphics card. The cooler features a direct-contact heat-pipe base; from it, the heat-pipes pass through two aluminium fin-stacks at either end, which are ventilated by a trio of 100 mm fans. The fans stay off when the GPU is idling. The cooler features RGB multi-color LED lighting along inserts on the cooler shroud, and an ROG logo on the back-plate.

Moving over to the sparsely populated PCB (thanks in part to AMD's move to HBM2), the card draws power from a pair of 8-pin PCIe power connectors, conditioning it for the GPU with a 13-phase VRM. The O8G variant features factory-overclocked speeds close to those of the RX Vega 64 Liquid Edition, although ASUS didn't specify them. There's a "non-O8G" variant that sticks to reference clock speeds, boosting to around 1495-1510 MHz. What ASUS is really selling here is better clock sustainability under load, lower noise, and zero idle noise, besides all the ROG STRIX bells and whistles. Like most ROG STRIX graphics cards from this generation, the card can also drive two 4-pin PWM case fans in sync with its own fans. ASUS also rolled out the ROG STRIX RX Vega 56, which features the exact same PCB and sticks to AMD reference speeds. The company didn't reveal pricing.

No, that's not how this is done. Vega won in 4 games in DX11, the 1070 won 2 in DX11, with a tie in Witcher 3... In DX12 and Vulkan, Vega won in all games.

Similar results for both cards.

The only issue I see is that neither card is aimed at 2160p (4K)... neither can hit the minimum target of 30 fps at this resolution.

Yes it will, just you wait. The Vega 56 has a pretty nice lead over the 1070, and that's due to increase much more... The highest-selling cards are not 1080 Tis and Titan XPs; they are 1080p cards like the RX 580/1060 and 1440p cards like the Vega 56/1070. With the lead the Vega 56 has over the 1070 at launch, it will be the card to beat.

This might be a bad fallout for AMD marketing.

The fallout from the deceptive pricing scheme for Vega continues. JayzTwoCents is done with AMD.

BTW, we can also expect prices for Polaris and Pascal to increase further. Samsung wants to concentrate a bit more on NAND flash and cut down GDDR production. They expect GDDR5 prices to go up by ~30%. Now, I don't know how expensive it is in the first place, but we can expect at least a slight price bump for GPUs, again.

What coupon is he talking about? He's now even thinking of abandoning his Threadripper build. A bit of an overreaction, tbh.

Basically, there's a behind-the-scenes thing where tech companies give retailers coupons/rebates for stuff, and Vega got a one-day coupon/rebate to boost sales. GamersNexus explains it better than I can: Market Development Fund & Hardware Companies.

Anyway, I think it's supply & demand. They released some blockchain drivers, pushing the Vega 56 to 30.8 MH/s in Ethereum mining, and to 32 MH/s with the memory overclocked to 1 GHz (Claymore 9.8, DAG 199), according to hardwareluxx.de.

I assume with some fine-tuning and under-volting and -clocking, the Vega 56 will be sold out for a very long time.

I agree that these are not 4k cards at the highest settings in newer games, but as it stands, even the Vega 64 and GTX 1080 are not... I think 1440p is the sweet spot for these cards...

Generally, I think people are overreacting because there are hardly any cards to buy at MSRP anyway... The 1070 and 1080 have been on the market for over a year; when was the last time you saw one at MSRP? How about the 1080 Ti?

Suggested Etail Price is simply AMD's recommendation for selling the cards online, and business-wise, I could see how they would encourage retailers to push the bundles, or that most of their stock would go towards the bundles they set. They sold through their stock on day one. They did say they delayed Vega to build up stock, but even then it seems they didn't have enough, not to mention that the card everybody wants to get their hands on launches on the 28th... So yes, I'm sure more standalone cards will become available soon enough. There are also custom AIB cards coming soon (64 and 56), so there will be plenty of stock soon enough.

I also think that a 56 should go for more than a 1070: it's a better compute card, it's a better gaming card, it has more advanced memory technology and architecture, and eventually we will see it stretch its lead significantly in games utilizing its unique architecture. It's also a higher-quality card than the NV cards... HBM is new and expensive and GDDR5 prices are going to rise; you can't blame AMD here, it's what they woke up to in the industry... Even RAM has gone up significantly since I built my system last year... In any case, even if prices go up $100 (not saying they will), you're still buying into a more affordable ecosystem with Vega and FreeSync as opposed to Nvidia and G-Sync.

I think people should let these cards come into stock, and at least let the AIB cards roll in; you will get the cards at reasonable prices, very close to the SEP/MSRP at least...

Free sync?

Yes, they are definitely solid 4k cards if you lower a few settings for 4k60, or 4k30 in some cases. I didn't want to think of 4k30, since so many PC gamers say they don't play at that frame rate... With FreeSync, though, 4k at 40-45 fps should be worth it on a Vega 56, I would imagine... I think a 1070 and especially a 56 are decent 4k cards, as they do 4k30 pretty much maxed and generally you can get 60 without sacrificing much; or just go with 4k30 for single-player games and 1440p/60 for multiplayer.

I had been doing just fine with one 1070 on a 4k monitor but picked up a second for mining. SLI, if well implemented, gets me 4k60 maxed, but that honestly doesn't look much better than tweaking settings and getting the same fps on a single card.

Benching games only on DX12 when a game has DX11 available as well is a bit shitty, as in most cases Nvidia cards perform better on DX11 than on DX12. So wouldn't the fairer comparison be to run Vega on DX12 and Nvidia on DX11? You are looking for the best performance out of the cards...

BF1 is the only game where Nvidia does better in DX11 than in DX12, but even then, AMD's DX12 performance still beats NV's DX11 results by a good bit, so the results would remain the same... I think overall, the results are fair and representative... Also, you will have to get used to more DX12 and Vulkan benchmarks in the future; devs are moving on as it is.

It doesn't make any sense to sit on something which could be making you money if it's released. This goes against every rational business decision imaginable. But some people really believe that literally everything related to Nvidia is a vast conspiracy so I'll leave them to their wild conspiracy theories.

Anyone have any insight into why AMD is so much faster than Nvidia at non-culled geometry?

The 1080 Ti had been selling since August 2016, as the Titan X (Pascal), at $1200.

The 1080 Ti released in March was basically a huge $500 price cut on this card from NV.

They released it at the point where all possible sales at $1200 were done. This is pretty clear, as there was (and still is) no other reason to even release the 1080 Ti.

They would likely have released it a lot earlier if AMD had launched Vega back in 2016 with significantly better performance than the 1080.

Any links?