LowEndTorque

Member

On the topic of power consumption, does anyone know roughly how much power a GTX 580M uses?

You said the average GPU uses four times as much power as a console GPU. The launch 360's GPU used 100W. So yeah, you are wrong.

Even so, CPUs have come a long way in efficiency. I see no reason why an optimized CPU couldn't free up room for a 125W GPU, which is very close to some current AMD offerings. I know you like to shit on consoles, but these next-gen boxes will be monsters even if it's middle-of-the-road tech.


Top-of-the-line PC GPUs these days use 300W. When the 360 was released, they used 100W. So a contemporary top-of-the-line GPU is completely out of the question.

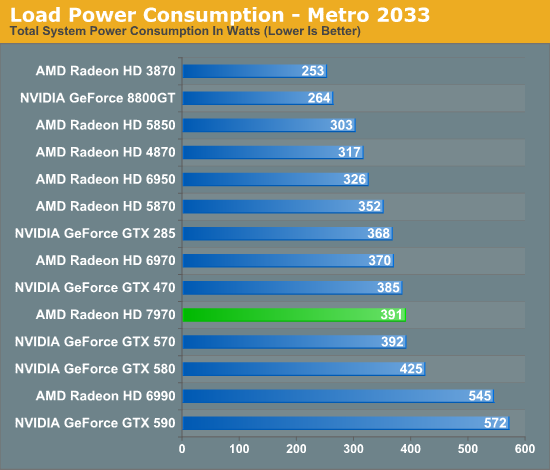

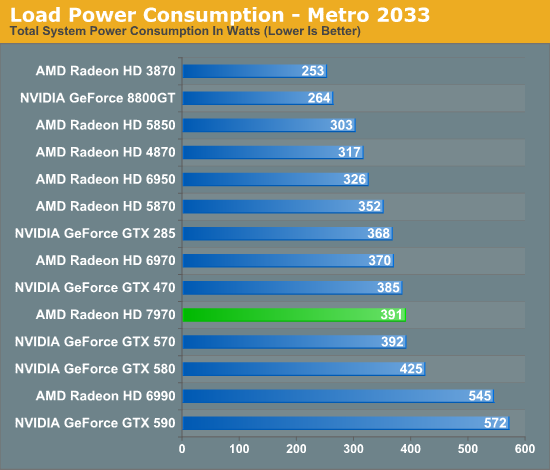

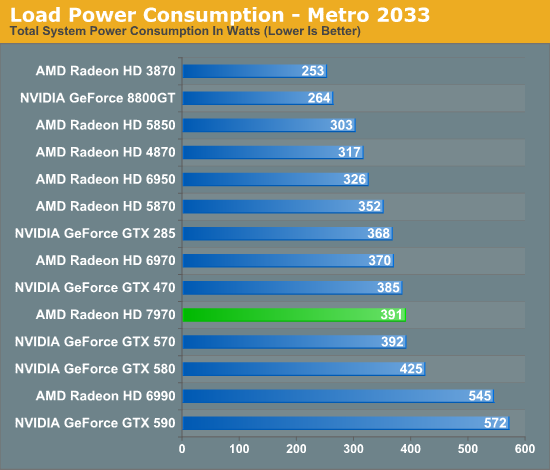

And if you look at the chart I supplied, you'll see that, on average, current GPUs are drawing those amounts of power under peak conditions. We can play this game now where you go and count how many are exceeding 400W, and then we can argue for hours and pages about whether that number is equivalent to "average", but I don't see much point, since my main argument has already been established: modern GPUs draw a lot of power and generate a lot of heat, so in their current forms they are not appropriate for consoles.

Also, I'm not sure who you are but it's nice that you've come to know and love me. I actually feel a bit warm and fuzzy.

Check the chart, it's on this page.

That would be awesome, but in a post-Kutaragi world I don't really see it happening.

Well to be fair, the OG PS1 probably pulled 10-20W, the OG PS2 40-50W, and the OG PS3 200W. Obviously the same has applied to case volume as well. If the new consoles are the same size (or smaller) and draw the same (or less) power, they will be bucking a 20+ year trend. I do agree that it's very unlikely they'll exceed the PS3's phat dimensions and PSU, but it's not entirely out of the question either. For example, I wouldn't be taken completely by surprise if one of them decided to go with a footprint more on the scale of cable/SAT DVR set-top boxes, or a slimline AVR, especially Sony.

The 7970 only uses 210W. The 7870 (a 6970 equivalent) is rumored to use only 120W.

Depends on the card and the software. 300W is about right for a 7970 running Furmark. GPU manufacturers hate that program. It adds about 50W over normal gaming.

I'm with this.

They can optimize for 60fps at 1080p, no problem, with something like that.

300 and 400W GPU figures? That's the entire PC's load. Cards that do 60fps at 1080p use under 140W. Much less is needed if it's targeted at a wattage, and even less than that because devs can optimize for a fixed GPU.

Now cut that in half again and you have a GPU that has adequate power draw for a console.

I should start bookmarking all these posts for the serious servings of crow when next-gen consoles release. No way does the most powerful next-gen console have a 60W GPU.

'Modern' can be a 6850 which can draw 120W, or it can be a GTX 580.

A trimmed and targeted 6850 would be a perfect fit for a newer console.

Newer consoles won't have a 200W GPU because it costs too much fucking money and makes no sense. With optimizations and a focus on yields/power draw, they could easily have a 65W 6850 equivalent that will handle 1080p at 60fps. If it's on 28nm, that's even easier, and the draw could be lower still.
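The "trimmed 6850" idea can be sanity-checked with the textbook first-order CMOS approximation that dynamic power scales roughly with clock frequency times voltage squared (P ∝ f·V²). This is a minimal sketch, assuming the ~120W 6850 figure quoted above and purely hypothetical clock and voltage reductions; it ignores static leakage, memory and board power, so it is a rough illustration, not a real power model.

```python
def scaled_dynamic_power(base_watts: float, clock_scale: float, voltage_scale: float) -> float:
    """Estimate dynamic power after scaling clock and voltage.

    First-order CMOS approximation: P ~ f * V^2. Leakage, memory and
    board power are ignored (simplifying assumption).
    """
    if clock_scale <= 0 or voltage_scale <= 0:
        raise ValueError("scale factors must be positive")
    return base_watts * clock_scale * voltage_scale ** 2


# Hypothetical settings: a ~120W 6850-class part with clocks cut 15% and voltage cut 20%.
estimate = scaled_dynamic_power(120.0, clock_scale=0.85, voltage_scale=0.80)
print(f"Estimated draw: {estimate:.1f} W")
```

Because voltage enters squared, modest voltage cuts do most of the work in getting near the 65W figure. Performance would fall roughly in line with the clock cut, so this only shows the power side of the trade, and whether a real chip stays stable at those settings is a separate question.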

I don't think anyone claimed that this won't be doable. But when the consoles are released, the 6970/580 performance level will be ~2 GPU generations out of date in high-end PC GPU terms.

I don't see a custom console GPU with roughly the performance of a 6970/580 as being such a big problem, regardless of the power consumption arguments that are being made here, but I guess we'll just have to wait and see =)

I too like to ignore things which contradict my preconceptions.

I don't think anyone claimed that this won't be doable. But when the consoles are released the 6970/580 performance level will be ~2 GPU generations out of date in high-end PC GPU terms.

What are you expecting? The entire console will probably draw 100-120W at launch. I don't see why people expect MORE than a 60W or so GPU, unless you're also expecting more five-ninety-nine US dollar price tags.

Besides just thermal characteristics you need to take into account that Sony and Microsoft's investors are probably really tired of loss-leader consoles that never quite make back their investment.

as i said before, the power draw and heat output of top-of-the-line chips in 2005 were far more muted than today. efficiency has scaled with time, but the ceiling has become that much higher.

Was the Xenos a "cutting-edge" GPU on release? What is stopping the next Xbox from having a GPU that is top of the line for 2013?

The 6870 is about the equivalent of the 560Ti.

The 560Ti pretty much already exists in notebooks in the form of the 580M.


Shouldn't you guys be comparing laptop gpus and not desktops?

I'm expecting a typical MS console: strong GPU, average CPU, a big-ass power brick and a draw of 150-200W. I have no clue what Sony will bring.

Yes. It's much easier to relate to a desktop GPU though and you have similar comparisons like what KJack posted below.

Shouldn't you guys be comparing laptop gpus and not desktops?

Right, just bring the clock speeds down and you have a 65W part, and optimization makes up for the rest to bring consoles to a doable 1080p at 60fps.

The 6850 and 6870 are already in notebooks too. They're called the 6970M (~95W) and 6990M (~100W).

The GTX 580M is a solid 100W under load, btw.


I'm confused as to why the 300W GPU powerhouses are being mentioned. That only makes sense if you think the next generation of consoles will be some kind of insane powerhouse that blows out today's PCs. I would think parity with a 6850 / GTX 460 would be a targeted goal.

I'm betting right now that it will take 150W of power and not equal the 6970.

edit: TDP of 90W? Well, that might shut me up. That would be an incredible feat, to more than double power efficiency within about a year's time. I'll remain skeptical.

It seems too good to be true, especially when the 7970 doesn't achieve anywhere near that efficiency. That quote is suggesting that you would get 80% of the 7970's performance for less than half of the power cost.

Question to the techies: how does not having to deal with PCIe, dual monitor ports, and other PC standards affect GPU size?

The discussion started because someone asked why no one expects the upcoming consoles' GPUs to be on par with high-end PC GPUs around the time of launch, even though the 360's GPU was (to some extent). And the answer to that is the disparity in power consumption between high-end PC GPUs then and now.

I'm confused as to why the 300W GPU powerhouses are being mentioned. That only makes sense if you think the next generation of consoles will be some kind of insane powerhouse that blows out today's PCs. I would think parity with a 6850 / GTX 460 would be a targeted goal.

Negligible.

Question to the techies: how does not having to deal with PCIe, dual monitor ports, and other PC standards affect GPU size?

very little. i'd say that 80% of a card's mass is purely to do with cooling, and even so, if you shoved it in a console-sized case it'd still fry.

Question to the techies: how does not having to deal with PCIe, dual monitor ports, and other PC standards affect GPU size?

The prediction from many people is that next-gen console launch games will shit on current games running on a high-end PC, and the power to do that has to come from somewhere.

Yes. It's much easier to relate to a desktop GPU though and you have similar comparisons like what KJack posted below.


Ok

The discussion started because someone asked why no one expects the upcoming consoles' GPUs to be on par with high-end PC GPUs around the time of launch, even though the 360's GPU was (to some extent). And the answer to that is the disparity in power consumption between high-end PC GPUs then and now.

I strongly disagree with that opinion.

The prediction from many people is that next-gen console launch games will shit on current games running on a high-end PC, and the power to do that has to come from somewhere.

The 360 was a US$299/399 console and it drew ~180W under load.

What are you expecting? The entire console will probably draw 100-120W at launch. I don't see why people expect MORE than a 60W or so GPU, unless you're also expecting more five-ninety-nine US dollar price tags.

Also software sales are down. New game designs need new hardware. FPS on rails is boring. Or how about a Zelda with a large world that isn't mostly air or water that you can explore.

Bright spots came from HD console software sales, which were up 9 percent in 2011

I guess I'm the only one expecting a relatively small increase, with performance somewhere in the GTX 260 to GTX 460 ballpark.

Maybe that just means I'll be surprised if we see something better.

A GTX460 would be a big upgrade compared to what we have now.

Yes they will. A closed platform with similar power to what PCs have today (maybe a little less) is going to blow away what PC games are doing right now, no doubt about it. But PC games will move forward as well, so console games may only have a slight edge for a little bit.

as i said before, the power draw and heat output of top-of-the-line chips in 2005 were far more muted than today. efficiency has scaled with time, but the ceiling has become that much higher.

also consider that the 360 was originally intended to be a total gaming powerhouse, a real dragster that frequently whirred and melted itself into oblivion. microsoft's next living room device will be nothing of the kind. it will be an ultra sleek and discreet jack-of-all-trades tv box that will seek to become the iphone of the living room, with gaming as an asset rather than a focus.

you will never see a console with the equivalent contemporary performance of a launch day xbox 360 again.

And you know this cause you work at Microsoft?

What makes you say something as dumb as the next Xbox not focusing on gaming as its prime feature? Baseless claims, from what I read.

It's not exactly an unsupported claim. Microsoft has been moving in that direction for a few years now.

If you expect the 360 successor to be as cutting-edge as the 360 was at the time, then expect the new console to be twice the size of the original 360. I just don't think that's going to happen.

Also, I believe we will see a 40 or 50MB eDRAM buffer in the next Xbox, which will make up for the VRAM speed differences.
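How much eDRAM a 1080p framebuffer actually needs is easy to estimate. This is a minimal sketch, assuming 4 bytes per sample for color (RGBA8) and 4 bytes per sample for depth/stencil (D24S8), a single render target, and no compression; engines using multiple render targets or HDR formats would need more.

```python
MIB = 1024 * 1024


def framebuffer_bytes(width: int, height: int, msaa_samples: int = 1,
                      color_bytes: int = 4, depth_bytes: int = 4) -> int:
    """Bytes for one color buffer plus one depth/stencil buffer, stored per sample."""
    return width * height * msaa_samples * (color_bytes + depth_bytes)


for samples in (1, 2, 4):
    size = framebuffer_bytes(1920, 1080, samples)
    print(f"1080p, {samples}x MSAA: {size / MIB:.1f} MiB")
```

Under these assumptions a plain 1080p target fits in about 16 MiB and 2x MSAA lands just under 32 MiB, while 4x MSAA would need roughly 63 MiB, which is the range the 24MB, 32MB and 40-50MB eDRAM figures in this thread are arguing over.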

Why? The 360 was drawing close to 200W at launch.

Want to see something cool?

Anand tested the 7970 on a system with these specs:

- Intel Core i7-3960X @ 4.3GHz
- EVGA X79 SLI
- Samsung 470 240GB SSD
- 16GB of DDR3-1867

With that complete system, the 7970 is only 100W off the mark of last-gen consoles.

Now remember, the CPU has a 1GHz overclock on it and is a 130W TDP part by itself at default speeds; that chip carries an 80W difference over the i5-2500K with the same hardware.

You also have a power-hungry motherboard with a ton of features that won't be required in a console.

I think they can easily get 7970 performance in a 200W box. 200W puts it right where the 360 was at launch.

How willing they are to do this depends on how close 22nm is to the console's launch. If the console hits in 2012 and 22nm is scheduled for 2013, or the console hits in 2013 and 22nm is scheduled for 2013, then I see no reason why MS wouldn't take advantage, even if initial chips are on 28nm.
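The 200W argument in this post is subtraction: start from the measured full-system draw and remove what a console wouldn't carry. A minimal sketch of that arithmetic follows; the 300W starting point is only implied by "100W off the mark" of a ~200W launch 360, the 80W CPU difference is the figure quoted in the post, and the 20W motherboard saving is a hypothetical placeholder, not a measurement.

```python
def trimmed_system_draw(measured_watts: float, savings_watts: dict[str, float]) -> float:
    """Subtract per-component savings (assumed values) from a measured system draw."""
    total_savings = sum(savings_watts.values())
    if total_savings > measured_watts:
        raise ValueError("savings exceed measured draw")
    return measured_watts - total_savings


measured = 200.0 + 100.0  # implied: ~200W launch 360 plus the "100W off the mark"
savings = {
    "cpu_vs_i5_2500k": 80.0,     # difference quoted in the post
    "leaner_motherboard": 20.0,  # hypothetical placeholder
}
print(f"Trimmed draw: {trimmed_system_draw(measured, savings):.0f} W")
```

This only moves numbers around; it says nothing about whether the remaining 7970-class GPU could be cooled inside a console-sized box, which is the objection raised in reply.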

They won't use 22/20nm, at least not at launch. Even assuming it's ready, yields likely won't be high enough yet. And there's no way you can magically cut down a 250W part and fit it in a closed box just because it's a closed box.

I think they can easily get 7970 performance in a 200W box. 200W puts it right where the 360 was at launch.


Bigger bus means more RAM chips, meaning more expensive boards, and that means it's hard to cost-cut later. We'll probably see 128-bit GDDR5, or XDR RAM. No reason to have a huge 50MB eDRAM pool though. Anything more than 32MB is overkill as a framebuffer. In fact, 24MB is probably all they would need.

I don't really see why we would need eDRAM. I don't really follow eDRAM technology, but the X360's eDRAM has transfer rates similar to the 7970's GDDR5 on a 384-bit bus.

I would love to have 4GB of GDDR5 shared across the whole console in next-gen systems.
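Both the "bigger bus means more RAM chips" point and the 384-bit bandwidth comparison come down to bus-width arithmetic. This is a minimal sketch, assuming each GDDR5 device provides a 32-bit interface (clamshell x16 mode ignored) and a per-pin data rate of 5.5 Gbps, the 7970's reference memory speed; the 128-bit case is the configuration predicted above, run at the same rate for comparison.

```python
GDDR5_DEVICE_WIDTH_BITS = 32  # assumption: x32 devices, no clamshell mode


def memory_chips(bus_width_bits: int) -> int:
    """Number of x32 memory devices needed to populate a bus."""
    if bus_width_bits % GDDR5_DEVICE_WIDTH_BITS:
        raise ValueError("bus width must be a multiple of the device width")
    return bus_width_bits // GDDR5_DEVICE_WIDTH_BITS


def bandwidth_gb_per_s(bus_width_bits: int, gbps_per_pin: float) -> float:
    """Peak bandwidth in GB/s: bytes moved per transfer across the bus times per-pin rate."""
    return bus_width_bits / 8 * gbps_per_pin


for bus in (384, 128):
    print(f"{bus}-bit: {memory_chips(bus)} chips, {bandwidth_gb_per_s(bus, 5.5):.0f} GB/s")
```

Three times the chips buys three times the bandwidth, and the board has to carry all of those devices for the life of the console, which is the cost-reduction problem described above.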
