We may finally get to see whether AMD's solution lives up to expectations and how well it can compete with DLSS.

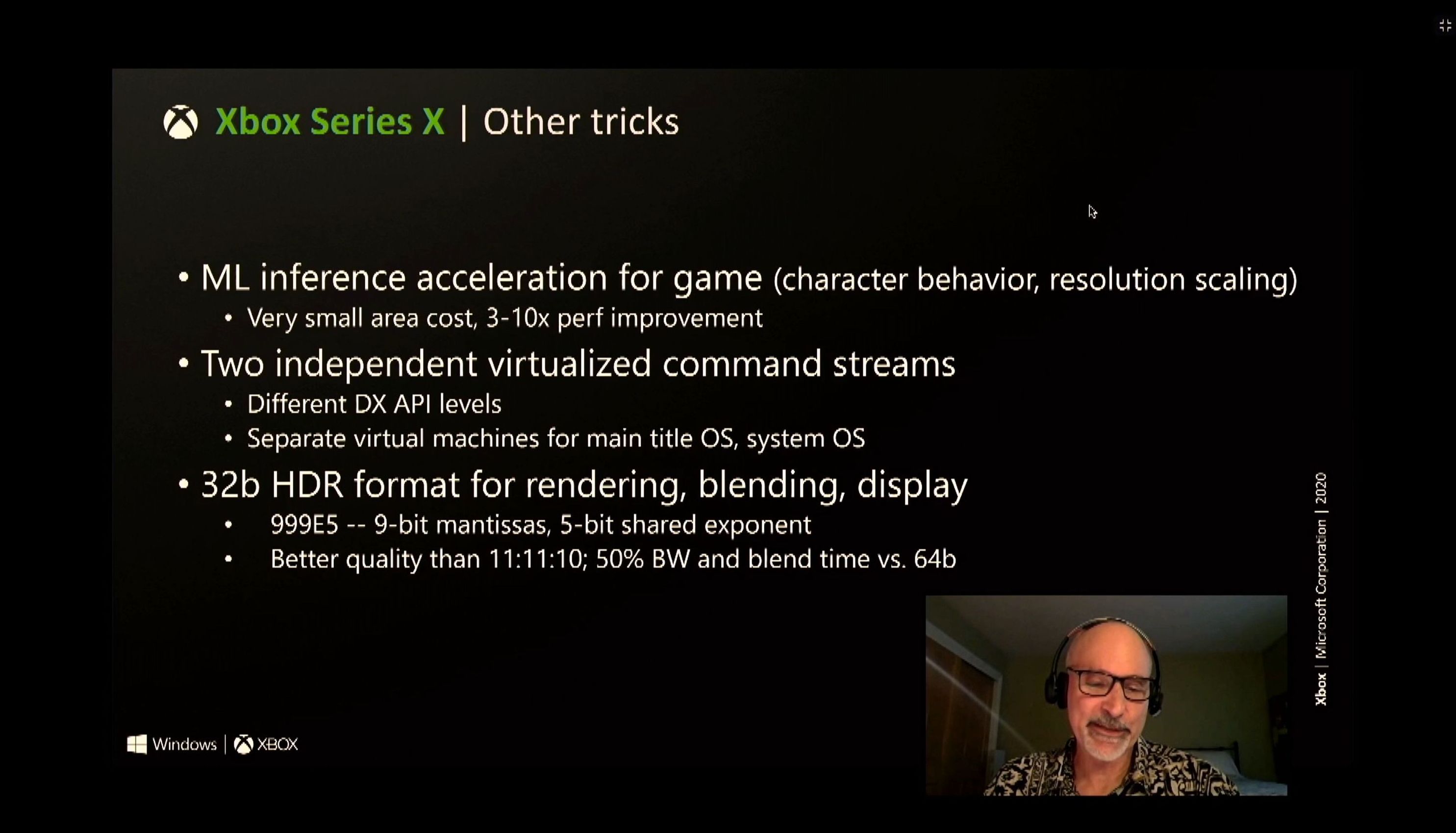

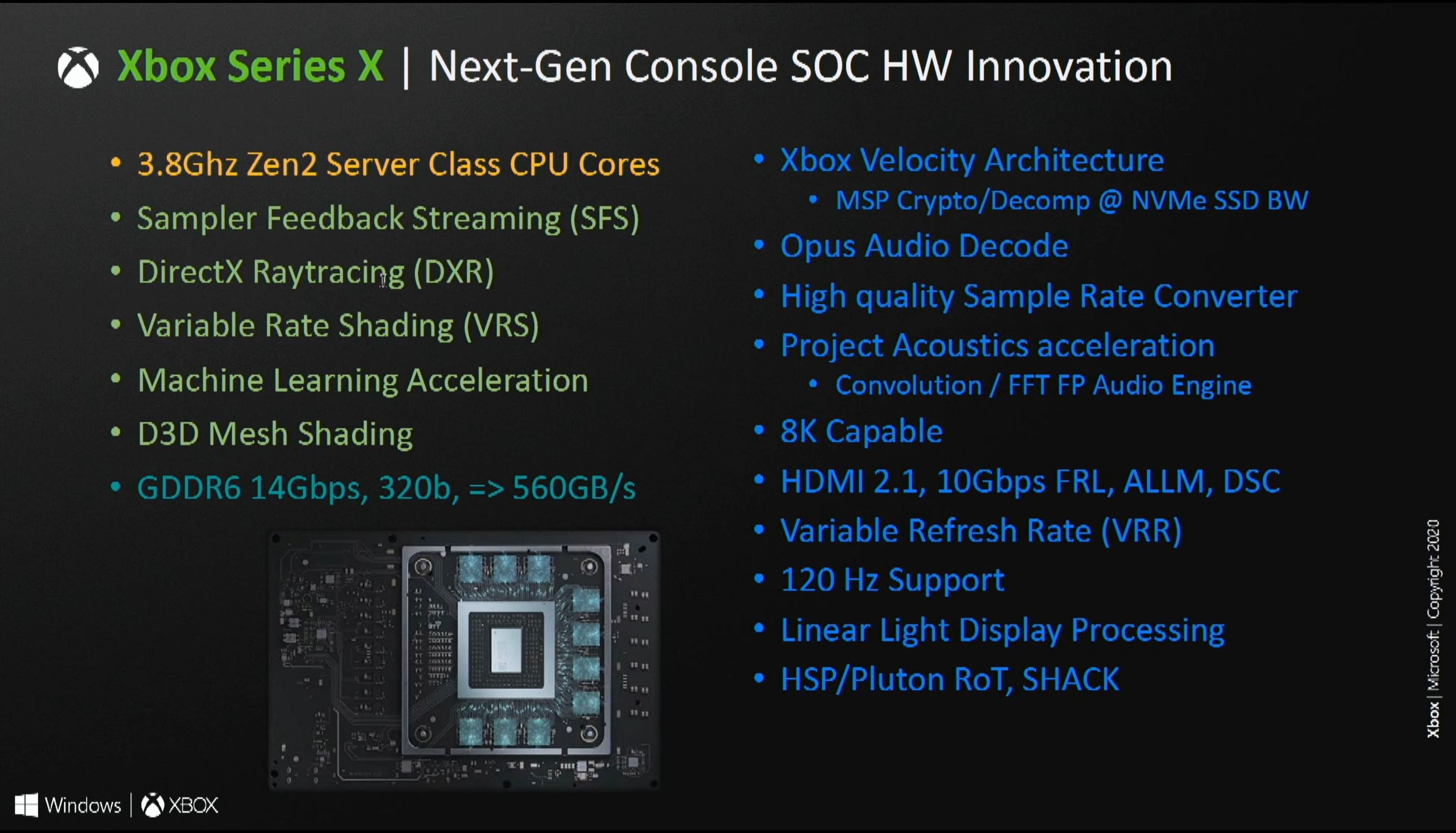

It would also be interesting to know whether the consoles will use it, and whether the Xbox Series X|S will be able to take advantage of the modifications made to the GPU's CUs to support machine learning.

videocardz.com


AMD FidelityFX Super Resolution technology may launch this spring - VideoCardz.com

AMD finally ready to launch its DLSS competitor? A new report puts a possible timeframe on the FidelityFX Super Resolution technology launch. It has been more than two years since NVIDIA introduced its Deep Learning Super Sampling technology. At first, it was mocked for how blurry the games...
