Here is the input lag study. It's part of a larger "G-sync 101" series of articles.

It was originally posted by Paragon in the G-sync thread, and I believe it deserves more recognition.

It's one of the most comprehensive studies of true in-game end-to-end (input-to-monitor) latency I've ever seen. The methodology is described in detail in the article, but basically it involves recording 1000 FPS video, using a specially modified input device that lights an LED on input, and then counting the frames (each of which is 1 ms at 1000 FPS) until a reaction occurs on screen.
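To make the frame-counting step concrete, here is a minimal Python sketch of the arithmetic. It is not the article's tooling: the function names and the sample frame numbers are made up for illustration, and it only assumes that you have noted the video frame where the LED lights up and the frame where the screen first reacts.

```python
from statistics import mean


def frames_to_ms(frame_count: int, camera_fps: int = 1000) -> float:
    """Convert a number of high-speed video frames into milliseconds."""
    if camera_fps <= 0:
        raise ValueError("camera_fps must be positive")
    return frame_count * 1000.0 / camera_fps


def input_lag_ms(led_frame: int, response_frame: int, camera_fps: int = 1000) -> float:
    """Lag between the LED lighting up and the first visible on-screen reaction."""
    if response_frame < led_frame:
        raise ValueError("the on-screen reaction cannot precede the input")
    return frames_to_ms(response_frame - led_frame, camera_fps)


def summarize(samples_ms):
    """Return the minimum, average and maximum of a list of lag samples."""
    return {"min": min(samples_ms), "avg": mean(samples_ms), "max": max(samples_ms)}


# Hypothetical (LED frame, reaction frame) pairs, NOT data from the study.
clicks = [(120, 155), (980, 1012), (2040, 2081)]
lags = [input_lag_ms(led, hit) for led, hit in clicks]
print(summarize(lags))
```

At 1000 FPS the conversion is trivial (one frame is one millisecond), but keeping `camera_fps` as a parameter means the same counting works with a slower camera, just at coarser resolution.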

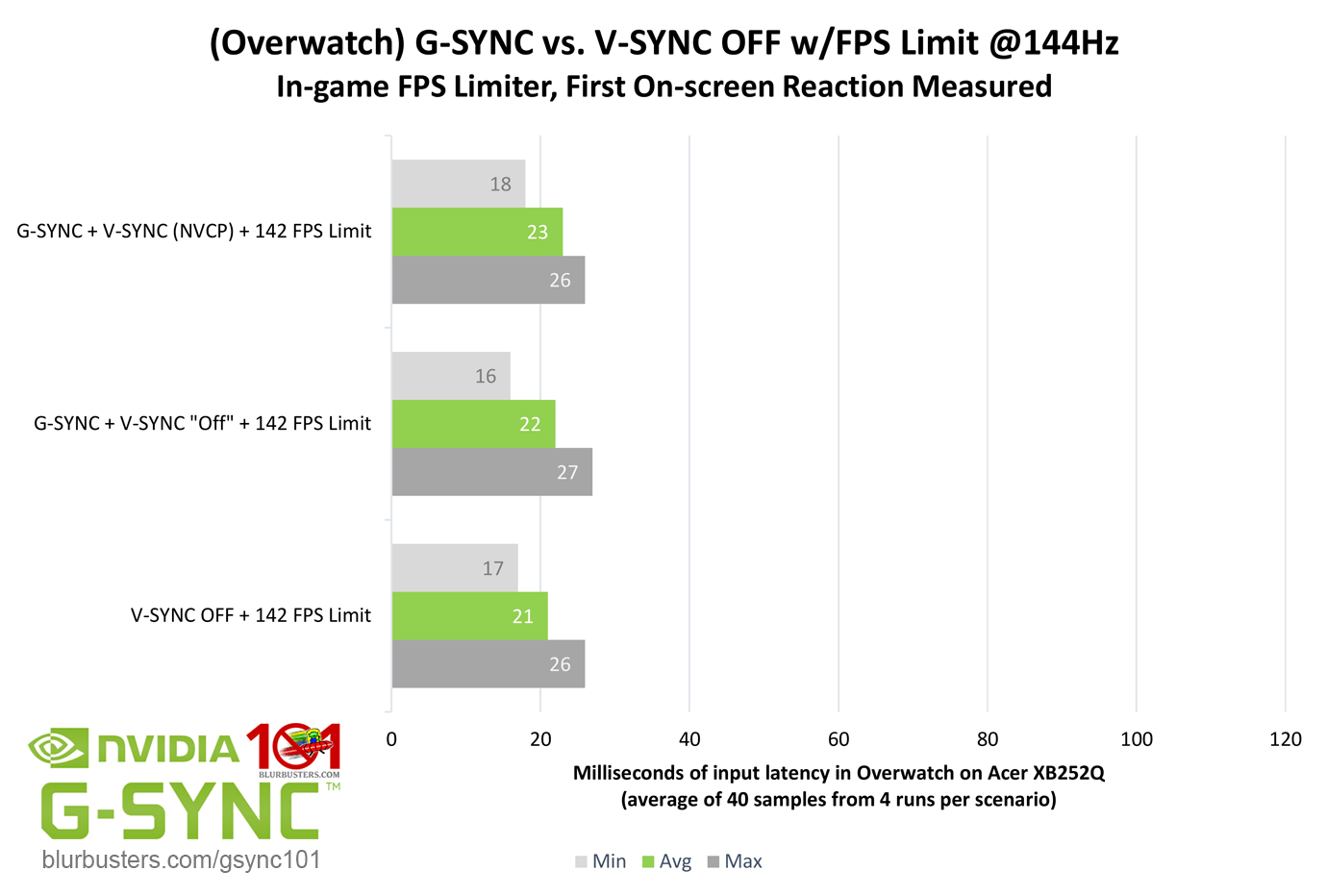

There is a lot of great data in the article, but what I found particularly valuable is this:

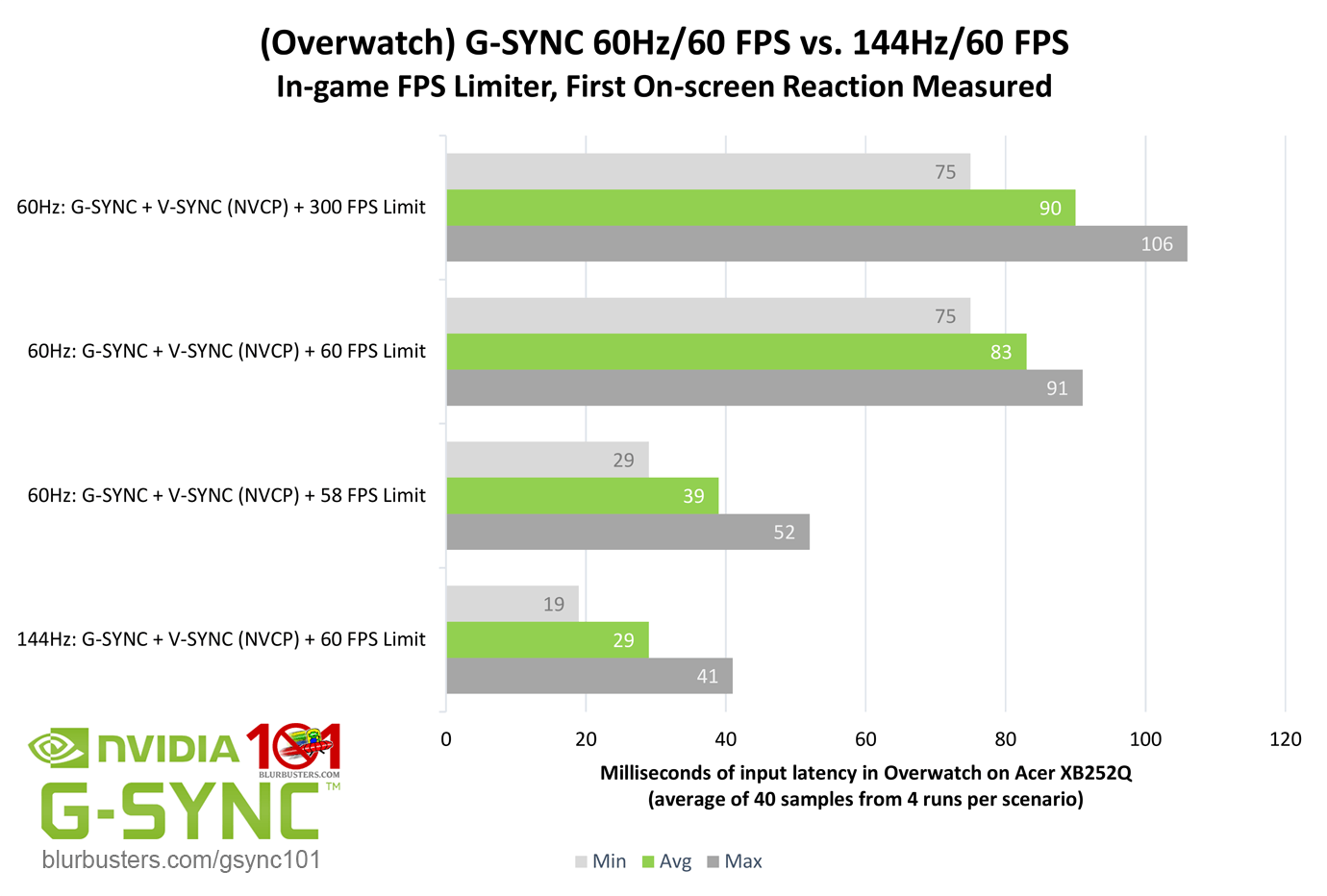

What it shows are two important facts:

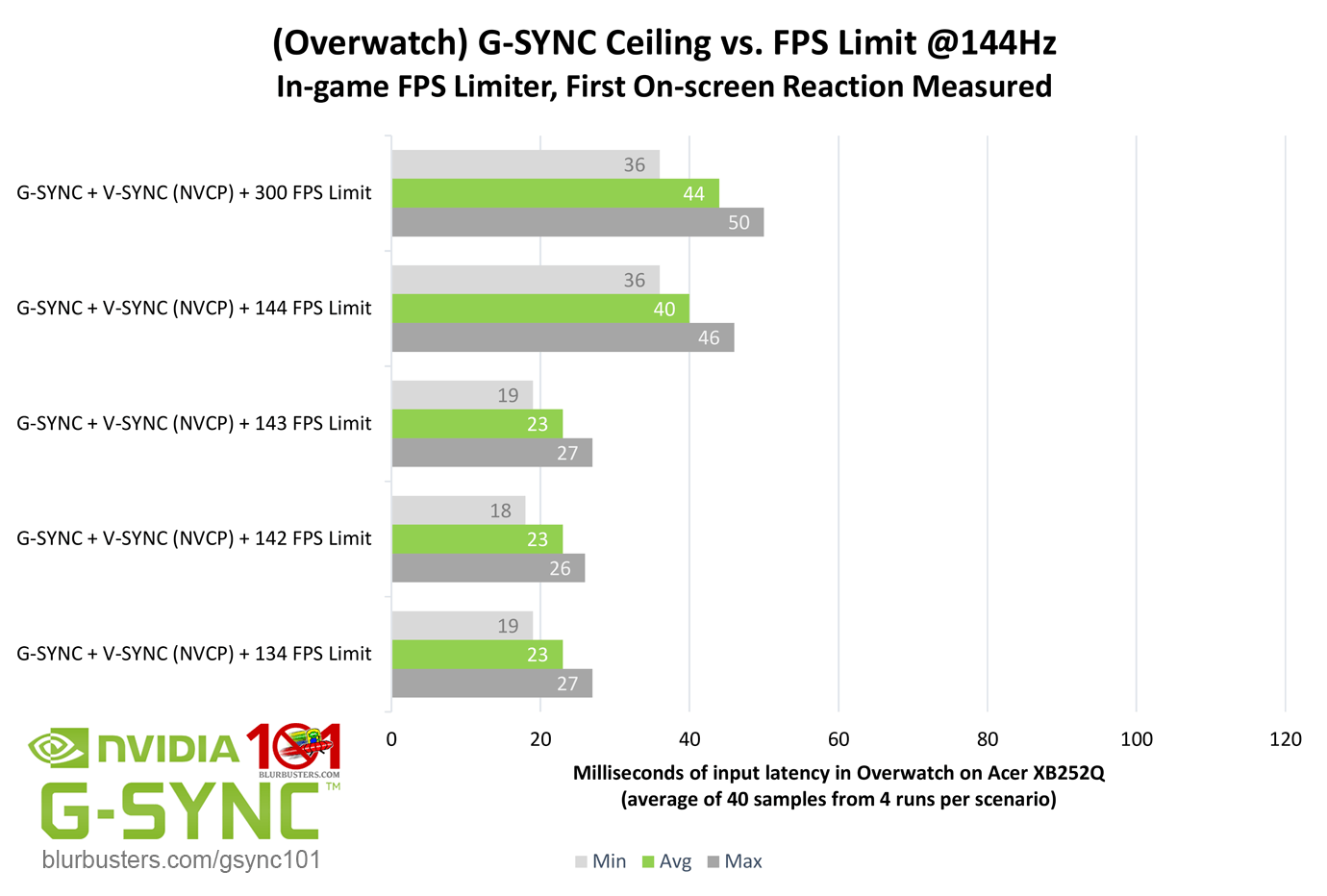

- that G-sync allows you to set a frame limit slightly below your refresh rate while maintaining a smooth picture, which can massively reduce your input lag

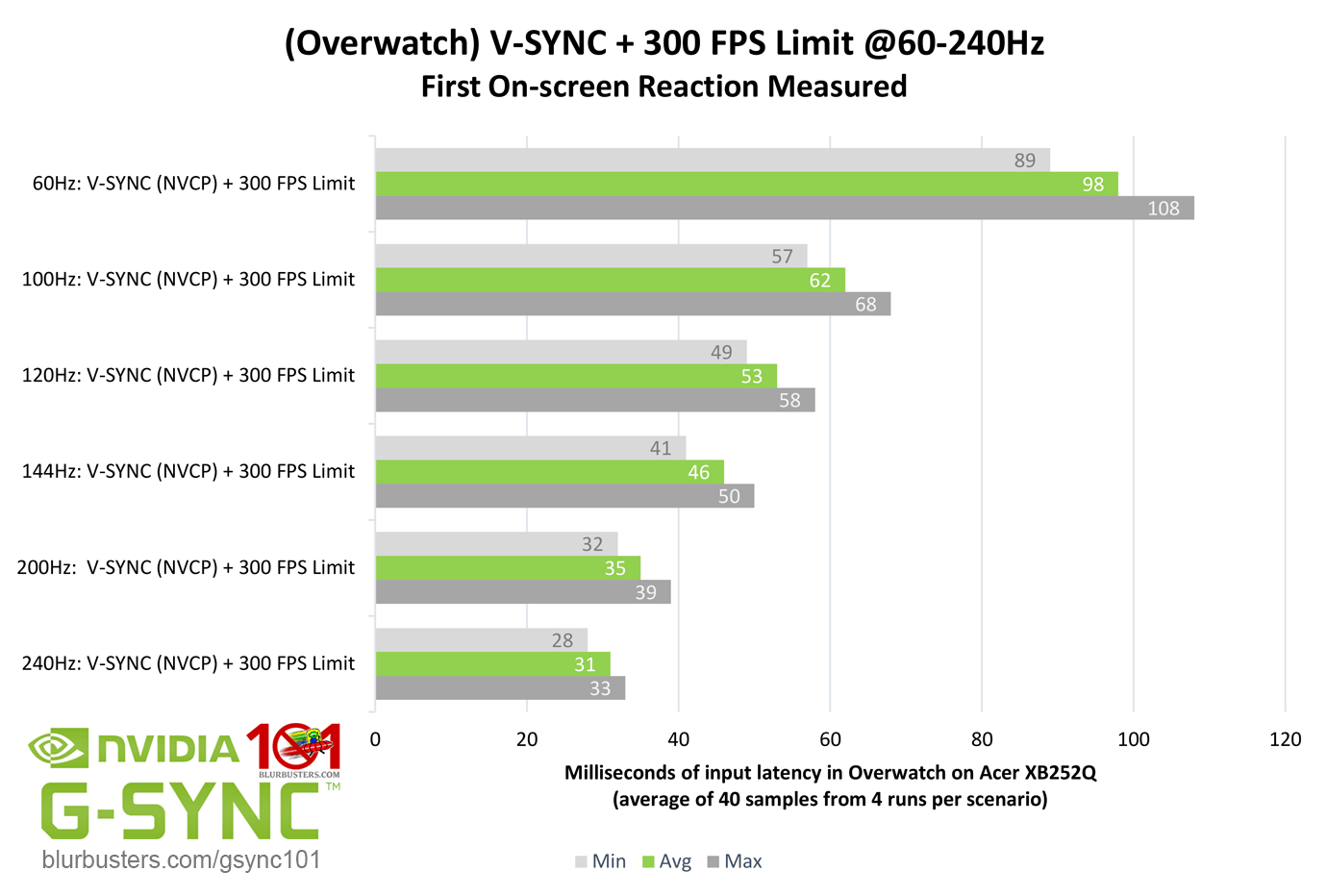

- that higher refresh rates improve your input behaviour considerably even if your FPS doesn't reach the refresh rate
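For a rough feel of the time scales behind these two points, the sketch below prints how long one refresh takes at a few refresh rates and what frame time a cap slightly below the refresh rate works out to. It is plain arithmetic, not measured data: the helper names are hypothetical, and the 3 FPS margin is just an assumed example of "slightly below".

```python
def refresh_interval_ms(refresh_hz: float) -> float:
    """Time between two display refreshes, in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh_hz must be positive")
    return 1000.0 / refresh_hz


def capped_fps(refresh_hz: float, margin_fps: float = 3.0) -> float:
    """Frame limit a little below the refresh rate (margin_fps is an assumed value)."""
    if not 0 < margin_fps < refresh_hz:
        raise ValueError("margin_fps must be between 0 and refresh_hz")
    return refresh_hz - margin_fps


for hz in (60, 144, 240):
    cap = capped_fps(hz)
    print(
        f"{hz} Hz: one refresh every {refresh_interval_ms(hz):.2f} ms, "
        f"cap at {cap:.0f} FPS = one frame every {1000.0 / cap:.2f} ms"
    )
```

The numbers only show how much shorter each refresh is at higher rates and how small the cost of the cap is in frame time; the actual input lag figures are in the article's charts.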

These are points I've argued for before, but it's good to have solid data backing up the theory. (Honestly, the difference in this particular game is more pronounced than I would have expected.)