DF: Quantum Break: Better on DirectX 11! Gameplay Frame-Rate Tests

- Thread starter Nachtfalke

Might have to triple dip.

You can run at native res in the Windows Store Version.

I'd guess this is equal or better.

Has the image quality been improved??

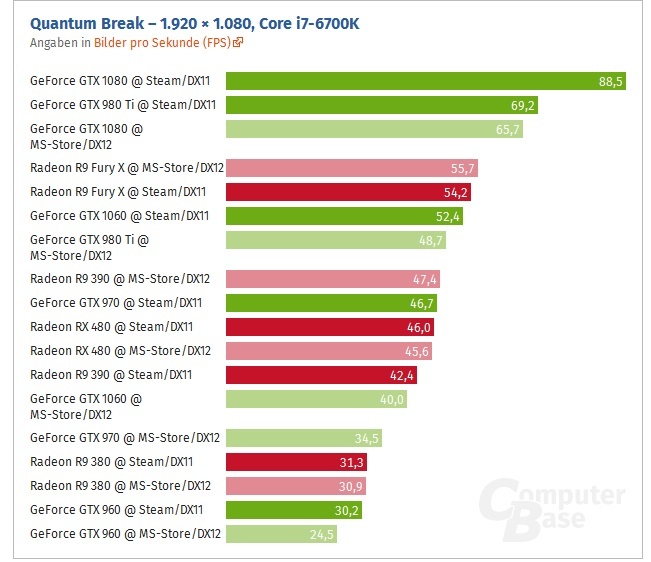

AMD keeps winning with DX12, AMD's DX12 performance is pretty much on par with Nvidia's DX11 performance. It's disappointing that AMD didn't get a boost with DX11 though.

I think this is a case where you can lay the blame on both the developer and Nvidia.

Is it? I see a 1060 beating the 480. I'd say the win goes to Nvidia.

Basically another shitty DX12 implementation. No advantage for AMD over DX11 and a decrease in performance for Nvidia.

Or UWP

LukasTaves

Member

I'm waiting to see how those clowns in the Performance Thread are going to try to continue to justify UWP performance now. smh

Unless you can prove the difference is due to it being UWP instead of DX11 vs DX12, I don't see how being UWP is relevant.

digitalrelic

Banned

Unless you can prove the difference is due to it being UWP instead of DX11 vs DX12, I don't see how being UWP is relevant.

I'm not blaming anything on UWP. I'm just simply stating that many people in the performance thread were trying to deem the performance as being acceptable for the last few months. These new benchmarks clearly show that that wasn't the case.

digitalrelic

Banned

Have you actually had a refund request denied, or are you just guessing?

I've not heard of anyone having a request denied.

I'm happy to report that I was able to get a refund just now from Microsoft support. It was like pulling teeth though. I started in chat, explained in detail the situation, they stated that they couldn't do anything to help me. I had to request a supervisor on three different occasions before they finally agreed.

They requested a number to call, and the supervisor called me about 5 minutes later. I brought her up to speed on the phone, she put me on hold for another 5 minutes or so, and told me that she could do a one-time refund as a courtesy to me.

So yeah, $63.29 refunded to my card, for my Quantum Break Win 10 copy that I purchased back in April.

dragonelite

Member

Or UWP

How? They are using the same API calls in Win32 and UWP for DX12, and probably DX11. Unless UWP has its own API?

Or UWP

Uhm, how?

KimonoNoNo

Member

I had no idea that the Steam version was Dx11, I just assumed it was the Win10 version with the UWP stuff stripped out.

DryvBy

Member

My deal with DX12 is that the games don't look much better. Actually in all my tests, they never look different. But they perform worse. I don't see why anyone would want to be a part of it.

My rig is an i7, 1080, 16GB, Windows 10. Tried Rise of the Tomb Raider, Hitman and something on UWP.

digitalrelic

Banned

It's still the early days of DX12. If performance is still lackluster a year from now, when games are starting to come out that were built from the ground up on DX12, then we can declare DX12 to be a bust. It's premature to make any judgments until then.

Aren't all UWP games like this? They got made for XB1 and then got ported to UWP. Can't get more "from the ground up" than that.

Rise of the Tomb Raider is noticeably better for me in DX12. In DX11 I have constant stutter and FPS dips to the high 20s, whereas DX12 doesn't hitch or stutter at all and only dips to the 40s. Then again, that's Nixxes, and not every studio is Nixxes. Similarly, Dolphin does run a bit faster in DX12 as well. But yeah, no DX12 title has been as dramatic of a leap as Vulkan was for DOOM; going from the High to the Ultra preset while giving me a higher framerate was a welcome addition.

digitalrelic

Banned

Aren't all UWP games like this? They got made for XB1 and then got ported to UWP. Can't get more "from the ground up" than that.

Umm, no.

My deal with DX12 is that the games don't look much better. Actually in all my tests, they never look different. But they perform worse. I don't see why anyone would want to be a part of it.

My rig is an i7, 1080, 16GB, Windows 10. Tried Rise of the Tomb Raider, Hitman and something on UWP.

They're not supposed to look any different. DX12 didn't bring new graphical effects like DX11 did. The performance issues should be fixed as devs get more used to it. It seems like consumers are losing patience on top of Windows 10 being hated, so DX12 will most likely end up like DX10 did and never receive proper support. Also, RoTR does in fact run better in DX12. The max FPS will be lower, but the framerate is more consistent overall, which is far more important.
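To make the point about consistency versus peak framerate concrete, here is a small Python sketch comparing two frame-time traces. The numbers are invented purely for illustration and are not measurements from Rise of the Tomb Raider or any other game; `summarize` is a hypothetical helper, not part of any benchmarking tool.

```python
from statistics import mean


def summarize(frame_times_ms):
    """Return (average FPS, worst-frame FPS) for a list of frame times in ms."""
    avg_fps = 1000.0 / mean(frame_times_ms)
    worst_fps = 1000.0 / max(frame_times_ms)
    return avg_fps, worst_fps


# Hypothetical traces, NOT real benchmark data.
spiky = [12.0] * 9 + [40.0]   # fast most of the time, with one long hitch
steady = [18.0] * 10          # slower on average, but no hitches

for name, trace in (("spiky", spiky), ("steady", steady)):
    avg_fps, worst_fps = summarize(trace)
    print(f"{name}: average {avg_fps:.1f} fps, worst frame {worst_fps:.1f} fps")
```

With these made-up numbers the spiky trace wins on average FPS, but its worst frame corresponds to 25 fps, while the steady trace never drops below roughly 55 fps. That is the sense in which a lower maximum with steadier frame times can feel better.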

I was specifically talking about the "from the ground up" statement. I mean there's no DX11 involved in UWP games, so my argument was that UWP games are basically built from the ground up in DX12. XB1 practically uses a custom version of DX12.

I know DX12 can give better performance with good developers. I assume you run AMD though? I bet if they ported DOOM from OpenGL to DX11 properly you would've also gotten a big boost.

Umm, no.

Great post. That clarifies your point very well.

nextgeneration

Member

Anyone with the windows 10 store version should be given a Steam key as well.

I can't believe I am stuck with the gimped version. If I had known it was coming to Steam I would never have purchased on the Win 10 store, as the whole thing was a nightmare.

Yes, so much this! This is really a huge fuck you to the people who have the win 10 version.

dragonelite

Member

Starting to wonder what the hell the point of DX12 is. Aside from forcing people to upgrade to windows 10.

Sneaky devs are just using it as a selling point right now. What they are probably doing is dx11 on dx12 porting.

DryvBy

Member

It's still the early days of DX12. If performance is still lackluster a year from now, when games are starting to come out that were built from the ground up on DX12, then we can declare DX12 to be a bust. It's premature to make any judgments until then.

Absolutely. I argued the same thing when DX10 came out. Until more games are built around it, there's no point.

How far into DX10's life was RE5? That's the only game I actually remember using it (apart from the first two Bioshocks, which used it for sexier water), and it made a pretty nice difference performance-wise too.

digitalrelic

Banned

Great post. That clarifies your point very well.

Sorry, it was just really confusing how completely incorrect you are. Making a game for XB1 has nothing to do with DX12.

How far into DX10's life was RE5? That's the only game I actually remember using it (apart from the first two Bioshocks, which used it for sexier water), and it made a pretty nice difference performance-wise too.

Wii U; it's DX10-capable. All the lighting and shading in Mario Kart and Captain Toad are probably not possible on 360.

I was specifically talking about the "from the ground up" statement. I mean there's no DX11 involved in UWP games, so my argument was that UWP games are basically built from the ground up in DX12.

Recore and Killer Instinct are UWP and DX11.

LelouchZero

Member

Recore and Killer Instinct are UWP and DX11.

Halo 5 Forge as well.

ecosse_011172

Junior Member

Bugger, I bought a UWP code but still haven't played it.

As I have a 970/3570K combo, this would have been much better.

I'm waiting for Steam versions from now on.

I'm glad to see performance is improved. I'll definitely pick the game up when it drops a bit in price - can't justify that spending right now. I feel a bit bad for the people who forked out full price on the Windows Store though. I'm still avoiding that disaster like the plague.

Caayn

Member

I was specifically talking about the "from the ground up" statement. I mean there's no DX11 involved in UWP games, so my argument was that UWP games are basically built from the ground up in DX12. XB1 practically uses a custom version of DX12.

I can go into Visual Studio right now and create a DX11 UWP project. Game engines are free to utilize DX11 in UWP as well. There are even a few games that utilize a DX11 renderer in UWP.

I'm happy to report that I was able to get a refund just now from Microsoft support. It was like pulling teeth though. I started in chat, explained in detail the situation, they stated that they couldn't do anything to help me. I had to request a supervisor on three different occasions before they finally agreed.

They requested a number to call, and the supervisor called me about 5 minutes later. I brought her up to speed on the phone, she put me on hold for another 5 minutes or so, and told me that she could do a one-time refund as a courtesy to me.

So yeah, $63.29 refunded to my card, for my Quantum Break Win 10 copy that I purchased back in April.

I will have to try this.

JohnnyFootball

GerAlt-Right. Ciriously.

Just tell me one thing:

I have a Geforce 1070.

Can I get 1080p (NO SCALING) and 60fps?

Yes, I am willing to turn down effects.

Or UWP

Wut?

Delusibeta

Banned

You should be able to, but at high settings, not ultra.

dr_rus

Member

Should be possible on medium settings, maybe even with some of them on high.

Starting to wonder what the hell the point of DX12 is. Aside from forcing people to upgrade to windows 10.

The point is to remove a large portion of the driver which basically checks up on the developers to make sure that they didn't write something which would result in a crash / error / low performance / etc. This part of the driver also manages all the resources and memory partitions, making sure that the proper data is in the proper place all the time.

This part of the driver is why the DX11 driver consumes so much CPU: it constantly assesses the code coming from the application for correctness, parses it to prepare resources, and tries to "guess" what the application may need in the near future. It's basically a very complex runtime code analyzer with predictions and deep knowledge of the h/w it's running on. Removing this part means that a lot of CPU can be freed up to do other stuff, either in graphics or in something else like AI.

Unfortunately this also means that developers must be the ones who implement the same resource management in their renderers (validation checks are removed completely, in the hope that developers won't ship a broken game which can't even launch).

And this isn't a simple thing when you consider that such an implementation should not only be tweaked for all possible PC configurations (RAM from 4GB to 32GB, VRAM from 2GB to 16GB, different PCIe speeds, different storage speeds, different background apps, etc.) but also be quite a bit different for different GPUs, not only from different vendors but even between different GPU families of one vendor.

So if a developer manages to implement all of this properly, they will get a lot more CPU headroom to play with, which may result in either more draw calls submitted (meaning more objects on the screen at any one time) or CPU used for advanced physics or AI simulation.

If a developer isn't able to implement this properly, the DX12 renderer will run worse than the DX11 one, where this is already implemented by the IHVs in their drivers. While it will still free up additional CPU resources, this will happen at the cost of worse GPU usage, which is hardly a good trade to make as most games are GPU-limited on PC.

With QB it's pretty apparent that Remedy used the console codebase for the DX12 renderer, ignoring the fact that it's a bad fit for all h/w beyond AMD's GCN GPUs. Moving the game to DX11 thus resulted in BIG gains on NV h/w (and Intel's probably, if anyone's willing to check), since the inefficient part of the DX12 renderer for NV h/w was substituted with the highly efficient DX11 driver implementation from NV.
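The trade-off described above can be sketched as a toy model in Python, assuming the frame time is simply the slower of CPU submission and GPU work. Every number and name here (`frame_time_ms`, `cpu_us_per_draw`, `gpu_slowdown`) is a made-up assumption for illustration; none of it is measured from Quantum Break or taken from any real driver.

```python
def frame_time_ms(draw_calls, cpu_us_per_draw, gpu_ms, gpu_slowdown):
    """Toy model: the frame takes as long as the slower of CPU and GPU.

    cpu_us_per_draw: CPU cost of submitting one draw call, in microseconds.
    gpu_slowdown:    1.0 means the GPU is fed as well as a tuned driver would
                     feed it; 1.25 means the GPU work takes 25% longer.
    """
    cpu_ms = draw_calls * cpu_us_per_draw / 1000.0
    return max(cpu_ms, gpu_ms * gpu_slowdown)


GPU_MS = 14.0  # hypothetical GPU work per frame when well managed

# Hypothetical renderer configurations: (CPU cost per draw, GPU slowdown).
renderers = {
    "DX11 (driver manages resources)": (2.0, 1.0),
    "DX12, weak resource management": (0.5, 1.25),
    "DX12, good resource management": (0.5, 1.0),
}

for draw_calls in (5000, 20000):
    print(f"{draw_calls} draw calls per frame:")
    for name, (cpu_us, slowdown) in renderers.items():
        ms = frame_time_ms(draw_calls, cpu_us, GPU_MS, slowdown)
        print(f"  {name}: {ms:.1f} ms ({1000.0 / ms:.0f} fps)")
```

With these invented inputs, at 5000 draw calls every variant is GPU-limited, so the weak DX12 path is simply slower (17.5 ms against 14 ms) and the good one only ties DX11. At 20000 draw calls the DX11 path becomes CPU-limited at 40 ms while the good DX12 path stays at 14 ms, which is the "more draw calls" benefit the post mentions.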

I'm happy to report that I was able to get a refund just now from Microsoft support. It was like pulling teeth though. I started in chat, explained in detail the situation, they stated that they couldn't do anything to help me. I had to request a supervisor on three different occasions before they finally agreed.

They requested a number to call, and the supervisor called me about 5 minutes later. I brought her up to speed on the phone, she put me on hold for another 5 minutes or so, and told me that she could do a one-time refund as a courtesy to me.

So yeah, $63.29 refunded to my card, for my Quantum Break Win 10 copy that I purchased back in April.

Glad to hear it!

Don't judge dx12 and its potential by this one game. I thought things would be easier than this for competent devs though. Take the XBO development environment then add cool PC specific optimization and features. What decent developer doesn't want the power to make hardware sing? I guess that's a responsibility some would rather not have.

This was my first purchase on the windows store.

Needless to say I feel scammed and will never use it ever again.

Yeah, I ain't ever buying anything from the Windows Store again. I just tried to get a refund, but the supervisor only offered me a $15 store credit. I said they could keep it.

SapientWolf

Trucker Sexologist

Starting to wonder what the hell the point of DX12 is. Aside from forcing people to upgrade to windows 10.

In theory, it allows for better optimizations on the PC by giving developers direct access to the hardware. But you're not going to see the benefits in a quick DX11->DX12 port.

One exciting prospect is the reduction of engine input lag.

TheSpoiler

Member

My FX4300 is handling this at Medium presets, but man this heat is rising tho...

rokkerkory

Member

Is this really dx12's fault?

DemonCleaner

Member

good read

What are the chances for engines like UE4 and others to implement this so developers don't have to deal with it themselves for every game anew?

Interesting read... I'm not an expert on this stuff, but to me, this complete removal of validation checks, as you put it, doesn't sound good, and honestly, it leaves me more than a bit skeptical about the proper utilization of DX12 if we're left at the "mercy" of developers who have to manually account for so many variables. You make DX12 sound like a "one step forward, two steps backwards" solution: there's potential to free up the CPU, but making use of it sounds like a lot of work.

Based on your post, wouldn't the best solution be some kind of a middle ground, where this DX11 "code analyzer" part is streamlined and simplified, but not entirely scrapped?