Intel calls end to Moore's Law

Not only is Moore's Law coming to an end in practical terms, in that chip speeds can be expected to stall, but performance is actually likely to roll back, at least in the early years of semi-quantum-based chip production, with power consumption taking priority over what has been the fundamental impetus behind the development of computers for the last fifty years.

What does this have to do with gaming?

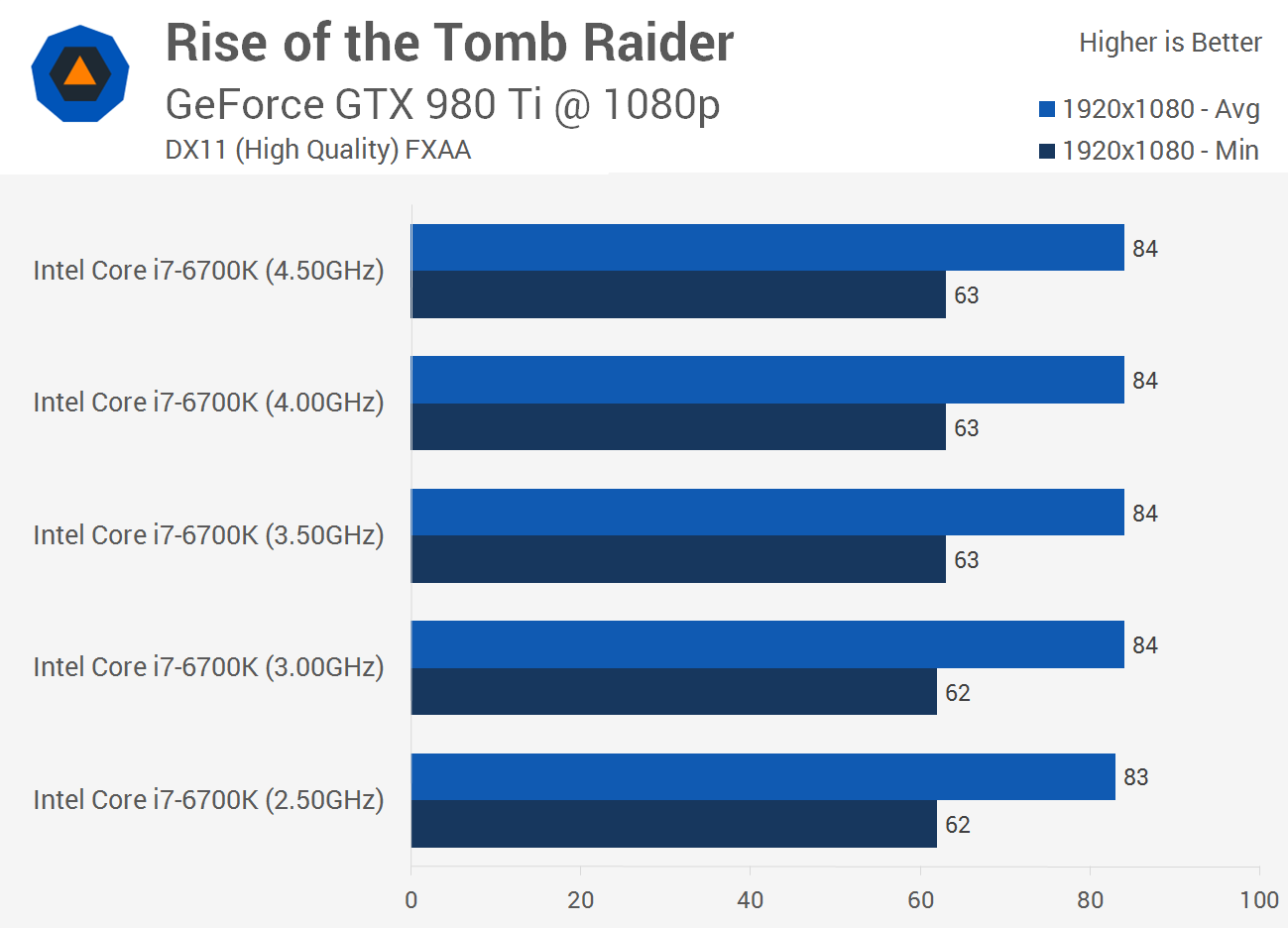

- Your 2600K or 5820K might last a very long time =/

- No point waiting for next CPU gen for your gaming rig unless you want future tech like Optane

I feel like that article is pretty bad, in that I'm not sure I agree with his conclusions from the Intel CEO's quotes at all.

What I got from what he was saying was:

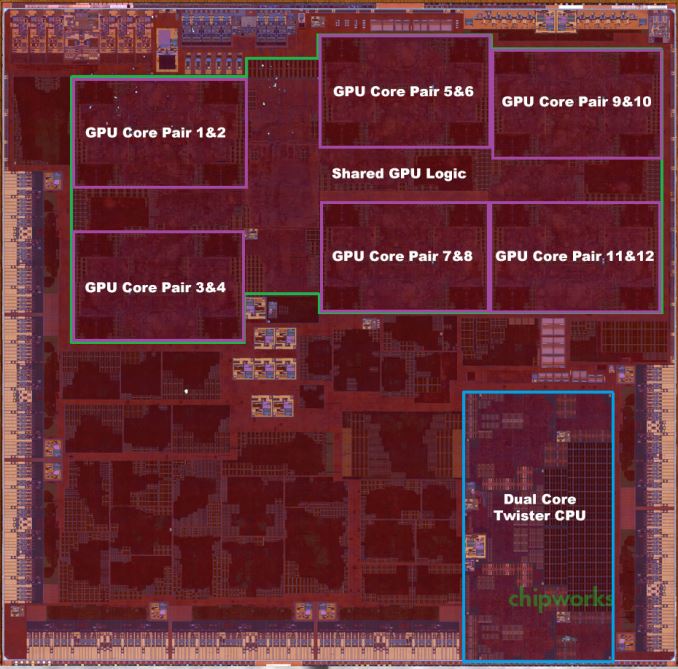

- Conventional semiconductor research into performance gains by increasing the density of the logic fabric will soon hit both shrinkage and heat-dissipation walls (something 3D XPoint, aka 3D stacking, will likely not solve...)

- Medium-to-long term strategy is to migrate to altogether separate fundamental technologies (quantum tunnel transistors, aka one-small-step-towards-full-blown-quantum-computers, and spintronics)

- Said technologies are maturing, with spintronics likely commercially viable in the next 18 months

- As part of this migration, <clock-speed + transistor-count> will no longer be a true measure of computational performance that can be compared with current-gen tech

- Some new set of metrics will be established, so naturally Moore's Law will end (since it's strictly about transistor counts, with clock speed the figure people usually associate with it) and Moore's Law 2.0 (or some other more appropriate name) will replace it

- Computational performance will still continue to increase though, with a likely exponential jump somewhere along the technology paradigm shift

I suspect the decrease in clock speeds when moving over to quantum-tunnel or magnetic-tunnel transistors will be more than made up for by higher raw computational power, and the lower power requirements will eventually translate into higher densities and computational leaps in the mobile computing space.