The human eye has a response-time of 30hz

lmao

I know, right?! But then he kind of made me uncertain. He explained it like this: the human eye has a response time of 30Hz, so anything perfectly in sync with that would look completely smooth (given motion blur and so forth), but we perceive higher framerates better because there's more information, which means a smaller chance of your eye getting out of sync with the video.

He's been teasing me for a while for being a bit PC master race, and he told me this when I remarked on DayZ looking really choppy on his PC.

I'm kind of stumped. I want to prove him wrong, but I can't seem to find any good sources that disprove him.

He also says it's just a placebo effect from becoming an elitist who blindly believes NeoGAF's words... It kind of pissed me off... I tried to explain how higher framerates lead to better response time and smoother gameplay, but he just mocked me.
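To put rough numbers on the response-time point, here is a minimal sketch of the delay added by frame pacing alone. It assumes a hypothetical, very simple pipeline: an input is only picked up when the next frame starts, and the result reaches the screen one frame later. Real games add render queues, display processing and other delays, so these are lower bounds, not measured latency, and the function names are made up for illustration.

```python
def frame_time_ms(fps: float) -> float:
    """Duration of one frame in milliseconds."""
    return 1000.0 / fps


def frame_pacing_latency_ms(fps: float) -> tuple[float, float]:
    """Best/worst-case delay from frame pacing alone.

    Assumption (hypothetical simple pipeline): an input waits up to one
    frame to be sampled, then takes one more frame to reach the screen.
    """
    ft = frame_time_ms(fps)
    best = ft        # input lands just before a frame starts: only the render frame
    worst = 2 * ft   # input lands just after a frame starts: waits a full frame, then renders
    return best, worst


for fps in (30, 60, 120):
    best, worst = frame_pacing_latency_ms(fps)
    print(f"{fps:>3} fps: {best:.1f} to {worst:.1f} ms")
```

Under this model, going from 30 to 60fps halves the frame-pacing part of the delay, which is one reason a game can feel more responsive even when the picture looks only slightly different.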

It's not actually different for video. 120Hz just doubles each frame for 60fps content, quadruples it for 30fps content, or repeats it 5x for 24fps content. If you're seeing "smooth" video, that's frame interpolation, which is disgusting and wrong.

(talking about film/video)

But then, if it's a plasma, that's handled differently: plasmas are really something like 600Hz, if it's even measurable. Pioneer FTW
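The repeat counts for a 120Hz panel are just the refresh rate divided by the content frame rate. A minimal sketch, assuming a plain sample-and-hold display with no interpolation; the function name is made up for illustration:

```python
REFRESH_HZ = 120


def repeats_per_frame(content_fps: int, refresh_hz: int = REFRESH_HZ) -> int:
    """How many refreshes each source frame is held for.

    Raises ValueError when the rates don't divide evenly, because then
    frames can't all be shown for the same number of refreshes.
    """
    if refresh_hz % content_fps:
        raise ValueError(f"{content_fps} fps does not divide {refresh_hz} Hz evenly")
    return refresh_hz // content_fps


for fps in (60, 30, 24):
    print(f"{fps} fps on {REFRESH_HZ} Hz: each frame shown {repeats_per_frame(fps)}x")
```

Every source frame is shown the same number of times, so no new motion information is created. Anything that looks smoother than the source has to come from interpolation.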

This isn't true.

Even without interpolation, a 120Hz display shows movies and TV noticeably better, because 120 is an exact multiple of 24: every film frame is shown exactly five times, so you don't suffer the uneven 3:2 pulldown a 60Hz display needs. And if they were watching actual native 120fps content, the smoothness is leaps and bounds better and clearly noticeable.
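A simplified model of the pulldown difference: for each 24fps film frame, count how many display refreshes it stays on screen. This sketch assumes progressive refreshes and rounds each frame's start time up to the next refresh. Real telecine works on interlaced fields, so treat it as an illustration of the cadence, not a broadcast-accurate implementation.

```python
def cadence(content_fps: int, refresh_hz: int, n_frames: int) -> list[int]:
    """Refreshes each of the first n_frames source frames stays on screen.

    Source frame k starts at refresh ceil(k * refresh_hz / content_fps),
    computed with integer math, so uneven ratios give an alternating pattern.
    """
    starts = [-(-k * refresh_hz // content_fps) for k in range(n_frames + 1)]
    return [b - a for a, b in zip(starts, starts[1:])]


print(cadence(24, 60, 4))   # [3, 2, 3, 2]: 3:2 pulldown, frames held unequally (judder)
print(cadence(24, 120, 4))  # [5, 5, 5, 5]: every frame held equally long
```

The alternating 3-then-2 hold times on a 60Hz display are what shows up as judder on slow pans; at 120Hz every frame gets the same hold time.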

Even motion interpolation looks really fantastic when it's done well. If you have a premium TV set, the days of crappy, ghosted interpolation are behind us. If you have a decent 120Hz TV set and a great PC, you also have options like SmoothVideoProject, which adds motion interpolation to videos you watch in specific video players. With the power of a beefy PC behind it, you can get fantastic results.
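One way to see where "ghosting" can come from: a naive in-between frame that simply averages its two neighbours shows a moving object twice at half brightness, while motion-compensated interpolation moves the object halfway along its path. This is a toy 1-D model with made-up helper names. It does not describe how SmoothVideoProject or any particular TV estimates motion; real interpolators compute per-block motion vectors and handle occlusions.

```python
def render(width: int, pos: int) -> list[float]:
    """A 1-D 'frame': one bright pixel at pos on a black background."""
    frame = [0.0] * width
    frame[pos] = 1.0
    return frame


def blend(a: list[float], b: list[float]) -> list[float]:
    """Naive in-between frame: average the two frames pixel by pixel."""
    return [(x + y) / 2 for x, y in zip(a, b)]


def estimate_motion(a: list[float], b: list[float]) -> int:
    """Toy motion estimate: how far the brightest pixel moved between frames."""
    return b.index(max(b)) - a.index(max(a))


def motion_compensated(a: list[float], b: list[float]) -> list[float]:
    """In-between frame made by shifting frame a half the estimated distance."""
    half = estimate_motion(a, b) // 2
    mid = [0.0] * len(a)
    for i, value in enumerate(a):
        if 0 <= i + half < len(mid):
            mid[i + half] = value
    return mid


a, b = render(10, 2), render(10, 6)
print(blend(a, b))               # two half-bright copies at 2 and 6: a ghost
print(motion_compensated(a, b))  # one full-bright pixel at 4: halfway along the path
```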

I was watching an episode of Hannibal with my SO with interpolation on, and they didn't know about it. After we got through the first scene, my SO commented, "Wow, I love how this show is shot. Did you notice how magnificent and smooth the pans were? Everything looks so lifelike and real. It felt like you could really be pulled into the concert hall." That was coming from someone who had previously said they didn't like the "smoothing" on cheap TVs we'd seen in stores.

I remember reading that most people can't tell the difference above 72 frames a second.

Am I pulling this out my arse or is there any truth to that?

Something that's pretty neat for discussion is taking the person's point at face value rather than assuming a worst-case scenario.

A "120 FPS screen" probably means a gaming monitor that actually displays a series of ~8.3ms frames.
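The ~8.3ms figure is just 1000 ms divided by 120. A quick sketch for comparing a few other rates, including the 72 fps threshold and the 1/220th of a second flash that come up elsewhere in this thread:

```python
def frame_time_ms(fps: float) -> float:
    """Milliseconds each frame stays on screen at a given frame rate."""
    return 1000.0 / fps


for fps in (24, 30, 60, 72, 120, 220):
    print(f"{fps:>3} fps -> {frame_time_ms(fps):5.2f} ms per frame")
```

Frame time is inversely proportional to frame rate, so each doubling halves it: 30 to 60fps saves about 16.7 ms per frame, while 60 to 120fps saves only about 8.3 ms more.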

No no, I'm talking about 120 FPS gaming on a 120Hz panel. Seems likely that is what the person you quoted was talking about.

120Hz for gaming would do 60fps very well. I'm more talking about native 24/30 video (which was stated in my post a couple of times).

Why such a debate? Just look at the science:

http://www.100fps.com/how_many_frames_can_humans_see.htm

No.

So? If you can identify an object that was flashed for 1/220th of a second, does that not mean you can see 220fps?

Made a WebM version: http://a.pomf.se/pxvezy.webm

I'm wondering if it's more a case of 'feeling' the difference than 'seeing' it?

Take that 30vs60fps website: I can see a slight difference between the two comparisons, but I wouldn't say it's massive. Yet when I'm actually playing a game at 30fps and then lower some settings to get a locked 60fps, I can see and feel the difference massively, even though I struggle to see a big difference in the videos on the 30vs60fps site... odd.

I have a friend who believes that 60 FPS is only a marketing trick by the console manufacturers to sell new hardware, since the graphics themselves aren't "that much better" this time around.

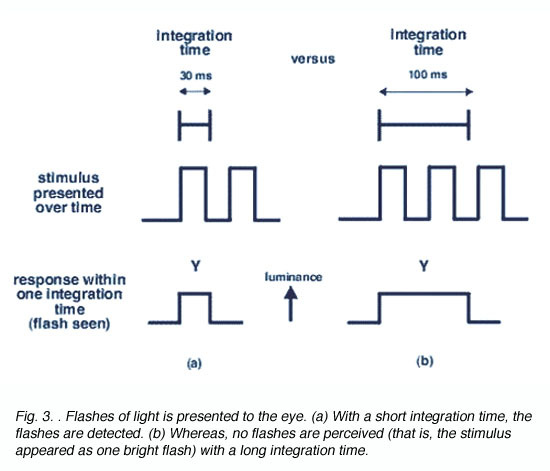

One thing that confuses the issue is that perceptually smoother motion at higher frame rates does not mean a person can separately perceive each individual frame. If we're asking how fast successive frames can come before our brain blends them together, it's going to be a pretty low number.

Sorta, kinda. It depends on the plasma. There were 600 focus field drive ones, and now they go even higher: 2500 FFD on the GT50, VT50, ST60, etc., and 3000 FFD on the VT60 and ZT60.

They basically work like this: http://paytherant.tumblr.com/post/20057368974/panasonic-2012-plasma-2500hz-ffd-explanation-attempt

That's why plasmas are so much better at motion resolution than LCD TVs typically are. 60 FPS games are just smooth as silk on my ST60, and there's pretty much no motion blur at all. It's just wonderful to see them in motion. Sadly, 30 FPS games still have a lot of it.
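A common rule of thumb for why sample-and-hold LCDs smear motion more than displays that flash each image briefly: when your eye tracks a moving object, a frame that stays lit for its whole duration gets smeared across your retina by roughly speed × hold time. This sketch assumes a made-up pan speed and an illustrative 4 ms pulse (not a measured plasma value). It ignores pixel response time and says nothing specific about how any plasma's field drive actually works.

```python
def smear_px(speed_px_per_s: float, persistence_ms: float) -> float:
    """Approximate eye-tracking smear: how far a tracked object moves
    across the retina while one frame stays lit."""
    return speed_px_per_s * persistence_ms / 1000.0


SPEED = 960.0  # hypothetical pan speed in pixels per second

for fps in (30, 60):
    hold_ms = 1000.0 / fps  # full-persistence hold: the frame stays lit until the next one
    print(f"{fps} fps, full hold: ~{smear_px(SPEED, hold_ms):.0f} px of smear")

print(f"short 4 ms pulse: ~{smear_px(SPEED, 4.0):.0f} px of smear")
```

Under this model, doubling the frame rate halves the smear of a full-hold display, and shortening how long each image stays lit reduces it further.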

The brain probably prefers to blend frames together in the first place, unless other considerations dictate that it's not sensible to do so at that moment. Those are exactly the type of decisions our brain constantly makes about the input it gets. Our eyes send so much visual data that it's impossible to interpret all of it as-is all the time, so we're always making assumptions that let us simplify and reduce the amount of information, and we only simplify less when necessary.

That's why there's a clear difference between asking "what is the minimum threshold required for the brain to accept a discrete signal as a continuous one?", and "what is the shortest temporal interval the brain can perceive when specifically required to do just that?".

Slap your friend.

My eyes are 24fps and 144p.

Can't tell the difference, can ya?!