Poll: RTX 3000, Big Navi, PlayStation 5, or Xbox Series X?

- Thread starter BluRayHiDef

Salmon Snake

Member

I'll get a PS5 for sure, but maybe this gen I have to get a PC on the side.

Bernd Lauert

Banned

So? It's still unclear how high the PC requirements for pure (not cross gen) next gen games will be. These numbers mean shit.

Cards coming out around the release of new consoles have always been outdated more quickly than the ones released later.

It will always depend on what you need. If you wanna play on settings similar to the two consoles, you're gonna need a PC that's maybe slightly better. Which means that even the 3070 will be more than enough.

Jack Videogames

Gold Member

Ampere first, PS5 later

Black_Stride

do not tempt fate do not contrain Wonder Woman's thighs do not do not

Rumors only mention a card notably faster than the 3070, not the 3080 (although it might be close to the 3080).

Not even faster than the RTX 3080?

It better be cheaper than the RTX 3070 then.....or come with some serious perks that displace RTX I/O, DLSS and RTX.

turtlepowa

Banned

RTX 3000 is very tempting, but i think i'll get the One X and later for 200 bucks the PS5.

Soulblighter31

Banned

The circle jerk on Ree over Nvidia's new cards is disgusting!

The casuals on there don't even understand what they are looking at, i.e. thinking a 3080 is '2X the performance of a 2080 Ti', which I've seen on there!!

No, the rasterization performance looks exactly as MLID and others leaked:

3080 is 'only' 25% faster than the 2080 Ti, according to DF numbers. It's using Samsung's inferior 8nm process. It only has 10 GB. This leaves AMD a very good chance to beat it.

How did you come up with 25% faster than the 2080 Ti, when the 3080 had areas where it reached 205% over the 2080? The 2080 Ti is only around 20-25% faster than the 2080. The 3080 destroys the 2080 Ti by a bigger margin than we expected the 3090 to.

And it mops the floor with the PS5 in a way that I didn't even dream of. The 500 dollar 3070 wipes the floor with the PS5. That means a 300 dollar 3060 will be equal to or better than a PS5. This makes building a PC of comparable power at close to the same price as the consoles a reality.

kruis

Exposing the sinister cartel of retailers who allow companies to pay for advertising space.

I went for PS5 + Ampere, but I'm only going to buy the PS5 this year. That PS5 is going to last an entire console generation, but Ampere will be top of the bill only two years at most.

Next year Intel will introduce systems with support for PCIE 5.0 and DDR5. AMD will follow in 2022. For me that's the perfect time to upgrade my PC. By then it should also be clearer how much VRAM memory next gen games really need.

hard_boiled

Neophyte

My PC has a 1070, I'll run that for the next several generations if I want to play anything on it.

Coulomb_Barrier

Member

How did you come up with 25% faster than the 2080 Ti, when the 3080 had areas where it reached 205% over the 2080? The 2080 Ti is only around 20-25% faster than the 2080. The 3080 destroys the 2080 Ti by a bigger margin than we expected the 3090 to.

And it mops the floor with the PS5 in a way that I didn't even dream of. The 500 dollar 3070 wipes the floor with the PS5. That means a 300 dollar 3060 will be equal to or better than a PS5. This makes building a PC of comparable power at close to the same price as the consoles a reality.

You should wait for benchmarks to see real perf metrics. No chance in hell a 3080 is close to 205% faster than a 2080 on average, that's fantasy stuff

Stop worrying about the PS5, it's not competing directly with a gaming PC. The Series X and its modest 12 TFLOPS is. You either play all those PC games on XSX at lower settings or you get a next gen card.

This is why it's smart that Sony focused on games, I/O, SSD and games again instead of fighting a losing battle over hardware and GPUs.

Ellery

Member

100% going with the PS5, because that is where the games that I want to play are and the new controller looks absolutely amazing.

There is a chance I am considering the RTX 3080, but my graphics card is still decent and third party games with slightly prettier visuals and Xbox Game Pass games are not my priority and the RTX 3080 wouldn't open any doors for me.

I do really like PC hardware a lot and would love to slot that card in, but that is more of a materialistic weakness of mine and doesn't correlate as much with pure gaming enjoyment since the additional performance mostly goes to overly taxed Ultra settings.

GenericUser

Member

Team Big Navi/Geforce + PS5

BluRayHiDef

Banned

100% going with the PS5, because that is where the games that I want to play are and the new controller looks absolutely amazing.

There is a chance I am considering the RTX 3080, but my graphics card is still decent and third party games with slightly prettier visuals and Xbox Game Pass games are not my priority and the RTX 3080 wouldn't open any doors for me.

I do really like PC hardware a lot and would love to slot that card in, but that is more of a materialistic weakness of mine and doesn't correlate as much with pure gaming enjoyment since the additional performance mostly goes to overly taxed Ultra settings.

Imagine The Last of Us Part II, which looks fantastic on the PlayStation 4 Pro, running on a 3090 at native 4K. I would love to see that.

Armorian

Banned

My PC has a 1070, I'll run that for the next several generations if I want to play anything on it.

4 years later the newest xx70 card still has the same amount of memory.

Soulblighter31

Banned

You should wait for benchmarks to see real perf metrics. No chance in hell a 3080 is close to 205% faster than a 2080 on average, that's fantasy stuff

Stop worrying about the PS5, it's not competing directly with a gaming PC. The Series X and its modest 12 TFLOPS is. You either play all those PC games on XSX at lower settings or you get a next gen card.

This is why it's smart that Sony focused on games, I/O, SSD and games again instead of fighting a losing battle over hardware and GPUs.

I mean, read more carefully what I said. Not 205% faster on average. There were scenes in Doom Eternal where it reached 205% over the 2080. On average, it seems to be some 90%. In some games it's 70%, in some 80%. Either way, it's an enormous lead over both the 2080 and the 2080 Ti. Just enormous. Until reviews hit, DF had their video where these leads were shown in percentages. It's just as good as a benchmark with average framerates, better even, because the difference in percentage is how you measure it, not counting frames out of context.

hard_boiled

Neophyte

Imagine The Last of Us Part II, which looks fantastic on the PlayStation 4 Pro, running on a 3090 at native 4K. I would love to see that.

Ellery

Member

Imagine The Last of Us Part II, which looks fantastic on the PlayStation 4 Pro, running on a 3090 at native 4K. I would love to see that.

Yeah me too. I would love to see any Naughty Dog game on PC if I am honest.

Or even better would be a Naughty Dog exclusive PC game where they squeeze every last drop out of high end hardware, but that will forever be a dream.

El Pistolero

Banned

PS5...

Coulomb_Barrier

Member

I mean, read more carefully what I said. Not 205% faster on average. There were scenes in Doom Eternal where it reached 205% over the 2080. On average, it seems to be some 90%. In some games it's 70%, in some 80%. Either way, it's an enormous lead over both the 2080 and the 2080 Ti. Just enormous. Until reviews hit, DF had their video where these leads were shown in percentages. It's just as good as a benchmark with average framerates, better even, because the difference in percentage is how you measure it, not counting frames out of context.

It really isn't that useful, as this was likely a paid marketing deal between DF and Nvidia using hand-picked titles. For real perf metrics I use TPU (they usually bench 12 games) or Computerbase.de.

llien

Member

Not even faster than the RTX 3080?

It better be cheaper than the RTX 3070 then..

Lisa wants to tell you two words, told my senses, one starts with f and another with o.

Seriously, why would the much faster card need to be cheaper?

serious perks that displace RTX I/O, DLSS and RTX.

RTRT is supported (whoever is adored by those RT effects in WoW and about a dozen of games)

"RTX IO"... is misleading and quite akin to what has been done in consoles.

So it likely went AMD implements it => Microsoft (hey, games gotta run on Windowzwz) creates corresponding API => Microsoft tells Huang to move it, move it. I still wonder what needs to be there in hardware for SSD to be able to send data straight to GPU, perhaps so far it's about decompression alone.

As for DLSS, I guess, it's there mainly to show that DF is full of shit, for Huang to claim 3090 is a 8k GPU and for other ways of misleading customers.

Anyway, at least XSeX AMD chip does support AI inference (although it wasn't meant to be mainly used to upscale images)

replicant-

Member

3080 and PS5 100% guaranteed at launch. I do like my consoles so chances are I will pick up an XSX at launch too.

Sethbacca

Member

MS has nothing on the horizon, and the biggest news in PC gaming is 3-4 year old Sony games. I have a gaming PC but see no need to upgrade it anytime soon as there aren't any killer apps on the horizon. PS5 is where it will be at for a while until PC devs start utilizing this new hardware. Seems a waste to invest in this early generation pc shit when devs won't be using it for a while. Might as well wait for 2nd or 3rd gen of the tech.

Black_Stride

do not tempt fate do not contrain Wonder Woman's thighs do not do not

Lisa wants to tell you two words, told my senses, one starts with f and another with o.

Seriously, why would the much faster card need to be cheaper?

RTRT is supported (whoever is adored by those RT effects in WoW and about a dozen of games)

"RTX IO"... is misleading and quite akin to what has been done in consoles.

So it likely went AMD implements it => Microsoft (hey, games gotta run on Windowzwz) creates corresponding API => Microsoft tells Huang to move it, move it. I still wonder what needs to be there in hardware for SSD to be able to send data straight to GPU, perhaps so far it's about decompression alone.

As for DLSS, I guess, it's there mainly to show that DF is full of shit, for Huang to claim 3090 is a 8k GPU and for other ways of misleading customers.

Anyway, at least XSeX AMD chip does support AI inference (although it wasn't meant to be mainly used to upscale images)

Is this what having a stroke while typing is like?

AMD haven't shown us their GPU decompression; when they do, I'll put a tick on it.

Their big Navi would need to be price competitive to get me to move from CUDA/RTX features.

DLSS is mainly to show that DF is full of shit......hahahaha.

Okay buddy.

Seems a waste to invest in this early generation pc shit when devs won't be using it for a while. Might as well wait for 2nd or 3rd gen of the tech.

You mean like not buying 1st generation RT GPUs....and buying 2nd generation RT GPUs......as in Ampere RTX 30 GPUs?

Sethbacca

Member

Is this what having a stroke while typing is like?

AMD haven't shown us their GPU decompression; when they do, I'll put a tick on it.

Their big Navi would need to be price competitive to get me to move from CUDA/RTX features.

DLSS is mainly to show that DF is full of shit......hahahaha.

Okay buddy.

You mean like not buying 1st generation RT GPUs....and buying 2nd generation RT GPUs......as in Ampere RTX 30 GPUs?

I'm talking more about the I/O tech in the RTX30, the RT tech has clearly matured. I'm guessing 2nd gen of this I/O tech will put it more in line with PS5 speeds and have actual games that actually use it available at that point.

KungFucius

King Snowflake

Ampere (if I can get one) then maybe PS5 ( If I can get Ampere and then get PS5)

llien

Member

Is this what having a stroke while typing is like?

Dude, what's wrong with you?

Don't want to have a civil conversation, just move on.

Jeez.

Hotty Botty

Member

PS5 is all I need.

Type_Raver

Member

Since there was no Nintendo Switch 2 option, I had to vote for the next option - Ampere and PS5.

But it would definitely be Ampere and Switch 2 (pro or whatever its called).

MasterCornholio

Member

I need to do more research first, but I'm thinking about getting a PS5 first, and then when the 3070 drops in price, that's when I'll upgrade my PC.

Black_Stride

do not tempt fate do not contrain Wonder Woman's thighs do not do not

I'm talking more about the I/O tech in the RTX30, the RT tech has clearly matured. I'm guessing 2nd gen of this I/O tech will put it more in line with PS5 speeds and have actual games that actually use it available at that point.

You think devs aren't going to use DX12U DirectStorage?

If DirectStorage has minimal hit on GPU/CPU and SSD speeds keep increasing....PCIE5 DirectStorage speeds will easily be 28+GB/s.

Microsoft supporting DirectStorage in the DX API makes it almost a given that the adoption rate will be quite high.

I take it you mean the compression ratios getting better or something?

Dude, what's wrong with you?

Don't want to have a civil conversation, just move on.

Jeez.

I replied to pretty much everything you typed.

You decided not to bother reading anything else except my attempt at describing how scattered your post was.

But if you don't want to have a civil conversation, just move on.

Sethbacca

Member

You think devs arent going to use DX12U DirectStorage?

If DirectStorage has minimal hit on GPU/CPU and SSD speeds keep increasing....PCIE5 DirectStorage speeds will easily be 28+GB/s.

Microsoft supporting DirectStorage in the DX API makes it almost a given that the adoption rate will be quite high.

I take it you mean the compression ratios getting better or something?

Yeah, basically it's gonna take a bit for the games to appear to begin with, and the next iterations will have better compression ratios. Might as well wait really.

hard_boiled

Neophyte

I'm waiting for AMD to reveal Big Navi and I will be buying Big Navi.

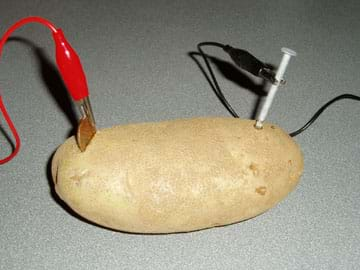

call the cops, I don't care

Here is your Big Navi sir.

captainraincoat

Banned

i just bought a 2080ti 2 months ago...point and laugh at me

Ulysses 31

Member

3090 before anything else, rest is still wait and see for me.

Ulysses 31

Member

i just bought a 2080ti 2 months ago...point and laugh at me

2080ti is still a beast and it also depends what you upgraded from and what price.