Asset streaming is the future. After what they saw in Unreal Engine 5, every engine developer and their dog is going to focus on exactly that once GPU performance is past a certain threshold.

Even the Metro dev team, 4A Games, who were among the first developers to implement raytracing on PC and now have a plethora of RT features in their engine, are saying asset streaming is the future and that they'll need to keep up with it:

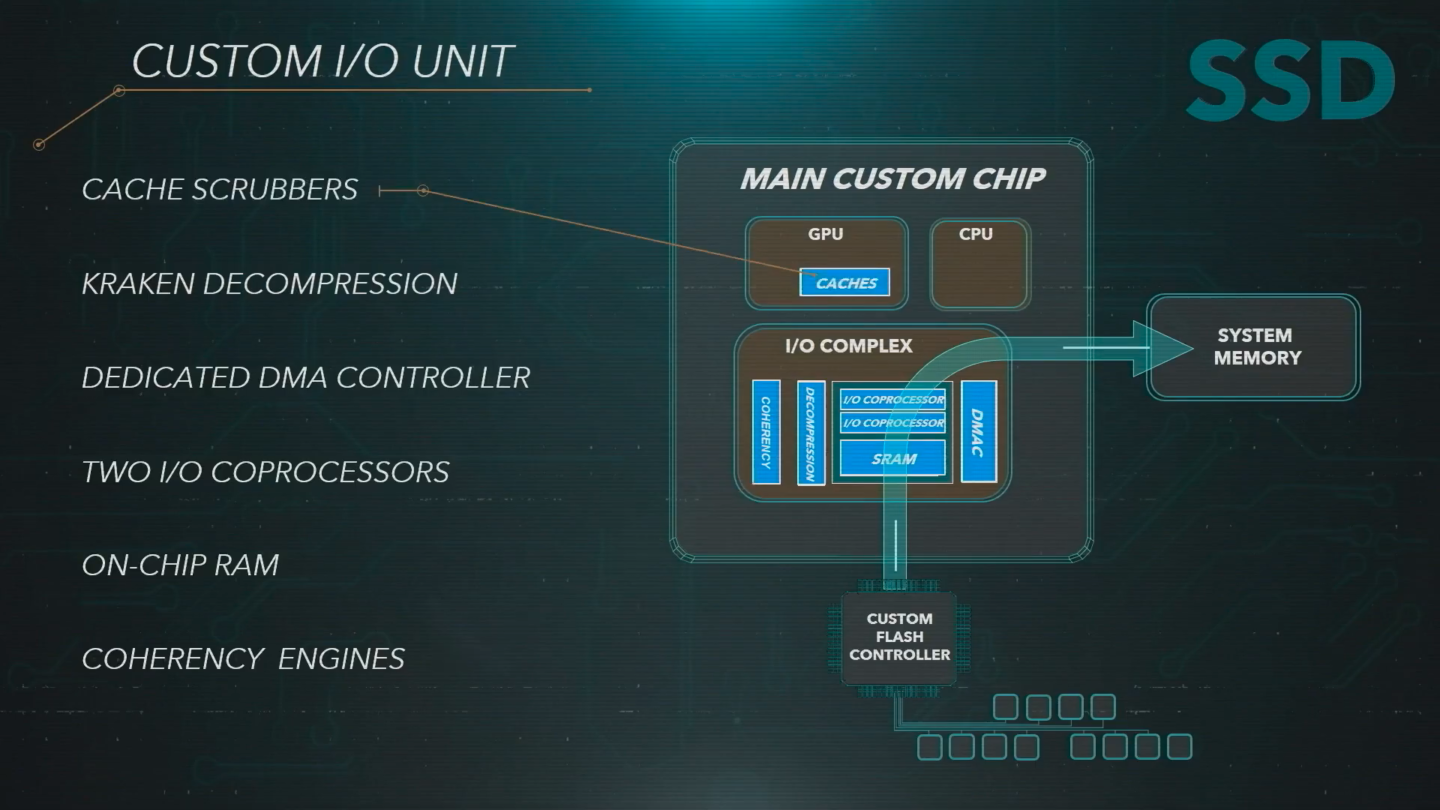

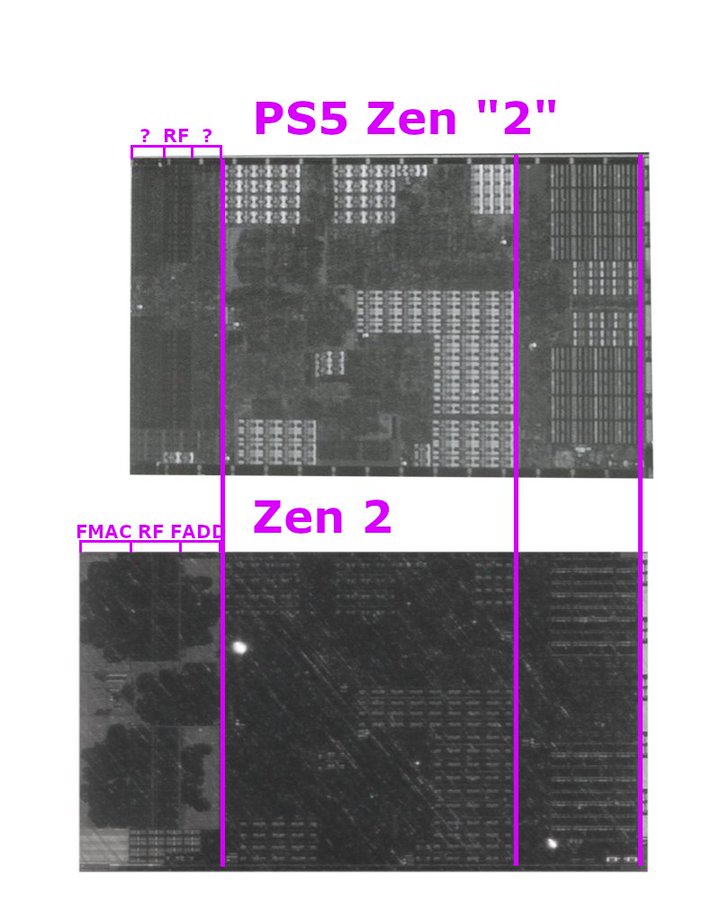

I would really like to see a more in-depth analysis of the I/O block on the PS5's SoC. How big is that ESRAM? And how big is the Kraken decompressor, which is capable of up to 22GB/s output?

That could also tell us how soon, and at what cost, we could put something similar on PC hardware.

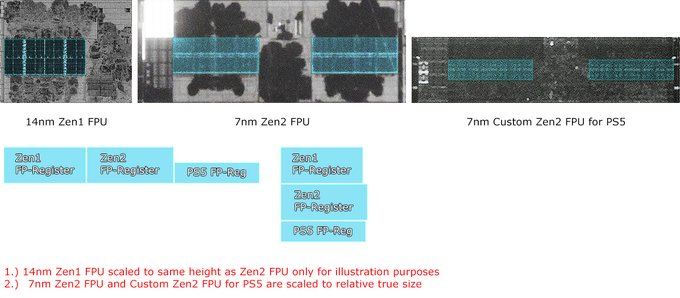

At this point I think analysing the I/O might be more important than measuring FPU sizes, but I guess it's also a lot harder to discern within the pictures.

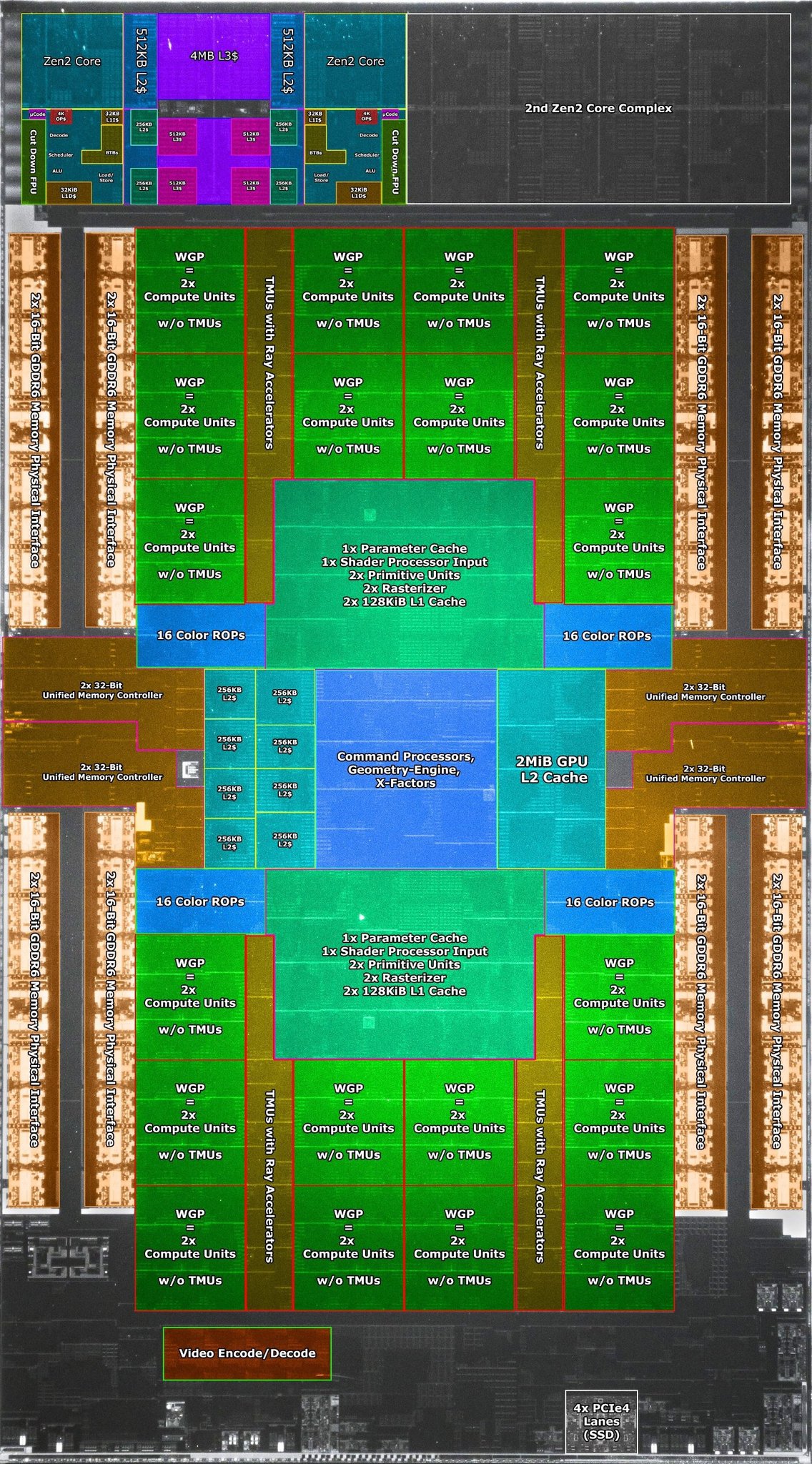

Having full RDNA2 ISA compatibility for DX12 Ultimate features on the Series X is important for Microsoft because of their strategy of simultaneous DX12 PC <-> Xbox game releases.

It's not important for Sony, who have their own sandboxed and fully controlled environment. They aren't dependent on DX12's reference process for foveated rendering, geometry culling and others.

I.e. they're not dependent on what amounts to a consortium where Microsoft, AMD, nvidia, Intel and others have their say on how to process these features (and where nvidia probably pulls a lot more weight than the others).

It was AMD who had to adapt their hardware to Microsoft's DX12 specs for Navi2x and the Series SoCs, not the other way around.

Just like RDNA1 before it, RDNA2 is defined by a range of versions for the WGP, ROP, Geometry Engine, etc. It's not defined by the features it supports in DX12 Ultimate. Some games won't even use DX12, like the ones on the id Tech and Source engines.

Besides, AMD is so relaxed about their naming conventions that at some point they even gave the Vega name to what amounts to a Polaris chip glued to an HBM chip.