Romulus

Member

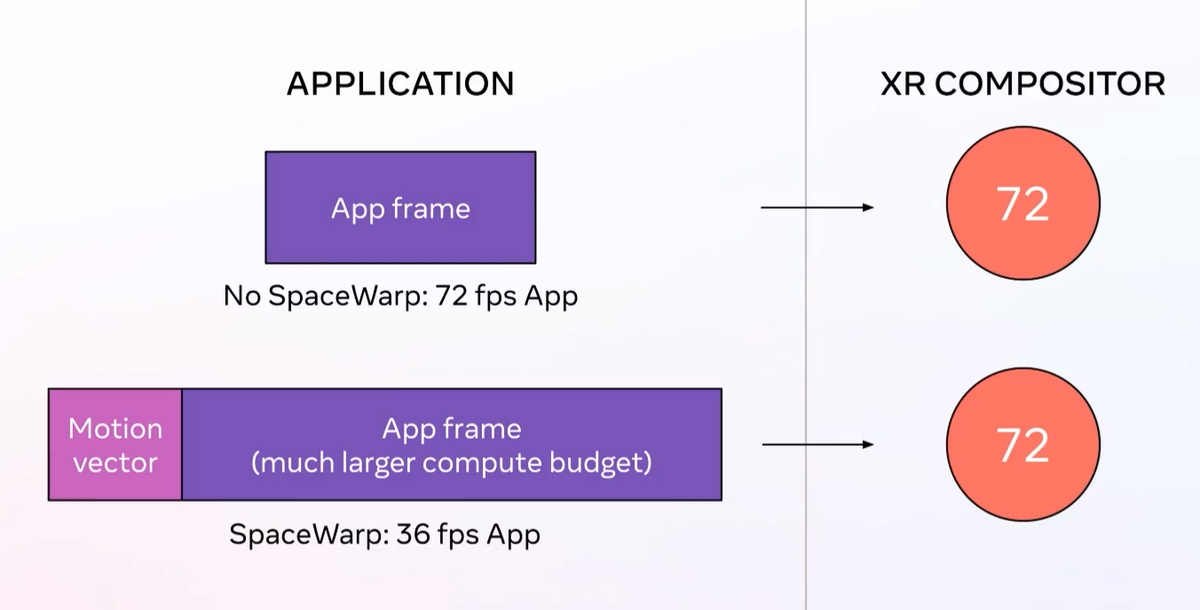

ASW can give apps roughly 70% more power to work with compared to rendering at full framerate. That’s the kind of jump usually seen between hardware generations. It could lead to noticeably higher fidelity graphics or even new games that wouldn’t otherwise be possible on standalone VR.

Because apps using ASW render at half the display refresh rate, they will run at 36 FPS on Quest 1, while on Quest 2 developers can choose between 36 FPS, 45 FPS, or 60 FPS by changing the refresh rate mode.
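To make the arithmetic concrete, here is a rough sketch of how those numbers fall out. It assumes the refresh rate modes are 72 Hz on Quest 1 and 72/90/120 Hz on Quest 2 (inferred from the FPS figures quoted above), and the function names are just made up for illustration, not anything from the Oculus SDK.

```python
# Assumed refresh rate modes in Hz, inferred from the quoted FPS figures.
REFRESH_MODES_HZ = {
    "Quest 1": [72],
    "Quest 2": [72, 90, 120],
}


def asw_render_fps(refresh_hz: float) -> float:
    """With ASW the app renders every other frame, so half the refresh rate."""
    return refresh_hz / 2


def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame at the given frame rate."""
    return 1000.0 / fps


if __name__ == "__main__":
    for headset, modes in REFRESH_MODES_HZ.items():
        for hz in modes:
            fps = asw_render_fps(hz)
            print(
                f"{headset} @ {hz} Hz: render at {fps:.0f} FPS, "
                f"budget {frame_budget_ms(hz):.1f} ms -> {frame_budget_ms(fps):.1f} ms"
            )
```

Halving the frame rate doubles the raw frame time, so 100% extra would be the ceiling. The roughly 70% figure quoted above is lower, presumably because generating the synthetic frames has a cost of its own.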

Application SpaceWarp Can Give Quest Apps 70% More Performance

Quest headsets have a new feature called Application SpaceWarp, letting apps run at half framerate by generating every other frame synthetically. This article was originally published November 4. It has been updated to reflect ASW’s release on November 11. Application SpaceWarp is enabled...

Very interesting for Quest, but also for future standalone headsets as they become more powerful.