Radeon HD 7900 Launch set for December 22nd, 2011 - R1000 | Tahiti | GCN

- Thread starter artist

Is there a video of this demo for us non-owners? Looks pretty.

http://developer.amd.com/samples/demos/pages/AMDRadeonHD7900SeriesGraphicsReal-TimeDemos.aspx

Should be soon, but it looks like they screwed up the hosting as usual.

how does that 7970 direct cu 2 compare to 2 6950 direct cu 2's?

I really wanna go back to 1 card instead of 2, and I think I would only have to put in 50 euro to get the 7970 if I sell my two 6950s.

Performance is comparable, but considering how much easier it is to OC one gpu rather than two I wouldn't be surprised if a 7970 can with ease beat two 6950. Then you also get rid of all the dual gpu headaches and for only 50 euros I'd do it in a heartbeat.

May I ask how you can manage to only lose 50 in the trade?

Well, the card costs 270 euro here in Holland, and someone gave me 450 for both.

So I'm going to sell them Sunday and wait for the 7970.

will it arrive within 2 weeks?

Ah nice deal! Yeah definitely go for the 7970 over cf 6950.

Ooooh Leo tech demo looks nice.

http://www.youtube.com/watch?v=4gIq-XD5uA8

EDIT: Dave Baumann with AMD confirmed that it works on 5XXX/6XXX series cards as well, but obviously it will run like shit compared to the 7970 with the features implemented.

Leo runs good for a tech demo, I get 35-40 FPS at 2560x1600.

Took a vid here http://www.youtube.com/watch?v=x5EqmgqhjW4

longdi

Banned

Runs smooth on a 6970 at 1920x1200. It uses up to 1.5GB of video memory, the highest I've seen.

interesting. I'm trying this on a 2GB 6950 at 2560 x 1440 and I get 9 to 10 fps.

does anyone know how to change the screen res of the demo, if at all? I tried changing desktop resolution before launching it but nothing. it defaults to native res.

edit. nvm, it's in the sushi config file. max 20fps at 1600 x 900, lol. avg around 15 or so

LabouredSubterfuge

Member

Interesting piece on the Leo tech demo on Anandtech regarding anti-aliasing:

Anandtech Article said: In a traditional (forward) renderer, the rendering process is rather straightforward and geometry data is preserved until the frame is done rendering. And while this normally is all well and good, the one big pitfall of a forward renderer is that complex lighting is very expensive to run because you don’t know precisely which lights will hit which geometry, resulting in the lighting equivalent of overdraw where objects are rendered multiple times to handle all of the lights.

In deferred rendering however, the rendering process is modified, most fundamentally by breaking it down into several additional parts and using an additional intermediate buffer (the G-Buffer) to store the results. Ultimately through deferred rendering it’s possible to decouple lighting from geometry such that the lighting isn’t handled until after the geometry is thrown away, which reduces the amount of time spent calculating lighting as only the visible scene is lit.

The downside to this however is that in its most basic implementation deferred rendering makes MSAA impossible (since the geometry has been thrown out), and it’s difficult to efficiently light complex materials. The MSAA problem in particular can be solved by modifying the algorithm to save the geometry data for later use (a deferred geometry pass), but the consequence is that MSAA implemented in such a manner is more expensive than usual both due to the amount of memory the saved geometry consumes and the extra work required to perform the extra sampling.

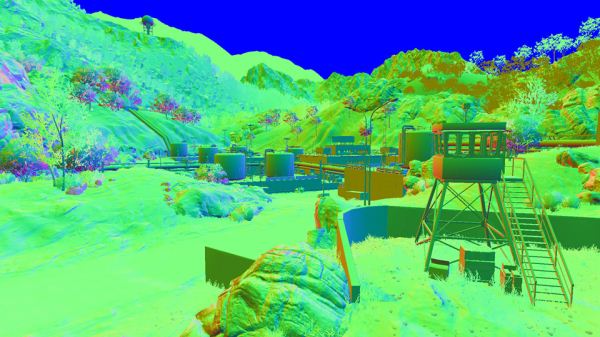

(Battlefield 3 G-Buffer)

For this reason developers have quickly been adopting post-process AA methods, primarily NVIDIA’s Fast Approximate Anti-Aliasing (FXAA). Similar in execution to AMD’s driver-based Morphological Anti-Aliasing, FXAA works on the fully rendered image and attempts to look for aliasing and blur it out. The results generally aren’t as good as MSAA (and especially not SSAA), but it’s very quick to implement (it’s just a shader program) and has a very small performance hit. Compared to the difficulty of implementing MSAA on a deferred renderer, this is faster and cheaper, and it’s why MSAA support for DX10+ games is anything but universal.

(AMD's Leo tech demo)

But what if there was a way to have a forward renderer with performance similar to that of a deferred renderer? That’s what AMD is proposing with one of their key tech demos for the 7000 series: Leo. Leo showcases AMD’s solution to the forward rendering lighting performance problem, which is to use a compute shader to implement light culling such that the compute shader identifies the tiles that any specific light will hit ahead of time, and then using that information only the relevant lights are computed on any given tile. The overhead for lighting is still greater than pure deferred rendering (there’s still some unnecessary lighting going on), but as proposed by AMD, it should make complex lighting cheap enough that it can be done in a forward renderer.

As AMD puts it, the advantages are twofold. The first advantage of course is that MSAA (and SSAA) compatibility is maintained, as this is still a forward render; the use of the compute shader doesn’t have any impact on the AA process. The second advantage relates to lighting itself: as we mentioned previously, deferred rendering doesn’t work well with complex materials. On the other hand forward rendering handles complex materials well, it just wasn’t fast enough until now.

Leo in turn executes on both of these concepts. Anti-aliasing is of course well represented through the use of 4x MSAA, but so are complex materials. AMD’s theme for Leo is stop motion animation, so a number of different material types are directly lit, including fabric, plastic, cardboard, and skin. The total of these parts may not be the most jaw-dropping thing you’ve ever seen, but the fact that it’s being done in a forward renderer is amazingly impressive. And if this means we can have good lighting and excellent support for real anti-aliasing, we’re all for it.
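To make the light-culling idea from the article a bit more concrete, here is a rough CPU-side sketch in Python. It is not AMD's implementation (Leo does this in a compute shader); the tile size, the `(x, y, radius)` screen-space light format, and the function names `cull_lights` and `lights_for_pixel` are all assumptions made up for illustration. It only shows the general shape: build a per-tile light list up front, then shade each pixel with just the lights listed for its tile.

```python
from collections import defaultdict

TILE = 16  # tile edge in pixels (assumed; the article does not give a size)


def cull_lights(lights, width, height, tile=TILE):
    """Map each screen tile to the indices of lights that may touch it.

    `lights` is a list of (x, y, radius) circles in screen space, a
    simplification of projecting a 3D light volume onto the screen.
    """
    tiles_x = (width + tile - 1) // tile
    tiles_y = (height + tile - 1) // tile
    per_tile = defaultdict(list)
    for i, (x, y, r) in enumerate(lights):
        # Conservative test: use the circle's bounding box, clamped to the
        # screen. Some tiles get lights that never reach them, which matches
        # the article's note that some unnecessary lighting remains.
        tx0 = max(int((x - r) // tile), 0)
        tx1 = min(int((x + r) // tile), tiles_x - 1)
        ty0 = max(int((y - r) // tile), 0)
        ty1 = min(int((y + r) // tile), tiles_y - 1)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                per_tile[(tx, ty)].append(i)
    return per_tile


def lights_for_pixel(per_tile, px, py, tile=TILE):
    """Return the light indices a forward shader would loop over at (px, py)."""
    return per_tile.get((px // tile, py // tile), [])


if __name__ == "__main__":
    demo_lights = [(8, 8, 4), (100, 100, 20)]
    tiles = cull_lights(demo_lights, 128, 128)
    print(lights_for_pixel(tiles, 5, 5))      # lights affecting the top-left tile
    print(lights_for_pixel(tiles, 60, 10))    # a tile no light reaches
```

Because the geometry is still shaded in a normal forward pass, nothing here interferes with MSAA; the culling step only shortens the list of lights each pixel has to evaluate.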

Runs smooth on a 6970 at 1920x1200. It uses up to 1.5GB of video memory, the highest I've seen.

Get like 1FPM on 6780x2 unless I lower the resolution from 1080p to 720p, then I get about 30-50fps

Memory hog!

A nice demo, better than their usual monkey and toad demos...

LabouredSubterfuge

Member

I know that having all that done real time is impressive tech wise but knowing you can get the same results by faking a lot of it makes it look blah. Samaritan looks better.

That's not the point. The point is you could now reasonably do Samaritan (if Epic were so inclined to drastically change their plans for UE3.9) in a more efficient manner with better visual quality. For example, Epic were advertising the fact that they were doing MSAA on their DX11 pipeline which makes one think they're taking the brute force approach with it.

I know that having all that done real time is impressive tech wise but knowing you can get the same results by faking a lot of it makes it look blah. Samaritan looks better.

Yeah, I'm more interested in how developers will put some of these technologies to good use than this demo. Quick question, can you 'free cam' this demo in realtime like past ATI/AMD demos, or is it restricted to watching the reel?

Get like 1FPM on 6780x2 unless I lower the resolution from 1080p to 720p, then I get about 30-50fps

Memory hog!

A nice demo, better than their usual monkey and toad demos...

Well it's obviously a memory hog because they are adding geometry redundancy by creating the buffer

Even in a UMA scenario, it's unavoidable unless they were to build flags in individual or "key" vertices, essentially adding an extra bit/byte of data per vertex and therefore reducing the ridiculous redundancy

Not sure why they thought it would be easier to do it their way

Unless I'm misunderstanding what is going on in the renderer, that is

Lactose_Intolerant

Member

I know that having all that done real time is impressive tech wise but knowing you can get the same results by faking a lot of it makes it look blah. Samaritan looks better.

Wasn't Samaritan being run on 3 Nvidia cards too? I honestly don't understand some complaints.

LiquidMetal14

hide your water-based mammals

Very impressive demo indeed.

I don't know if this has been asked before, but is there any news on when the 1.5GB version of the 7970 will be released? I mean, 3GB is nice but not worth the price.

Expect the 7950 SKUs to come with 1.5GB.

Oh right, fair enough. I could have sworn I saw 7970 1.5GB versions. Ah well, hopefully the performance of the 7950 is worth it. Currently got a 6850 and unsure if it's worth an upgrade or if I should wait for Nvidia's new cards.

http://www.newegg.com/Product/Produ...-1&isNodeId=1&Description=radeon+7950&x=0&y=0

Seems like $449 will be the SRP and 3GB will be standard, at least in the first batch.

900MHz

Reviews are out: http://hardocp.com/article/2012/01/30/amd_radeon_hd_7950_video_card_review/1

We managed to achieve a highest stable GPU overclock at 1050MHz, which is 250MHz over the stock frequency, an increase of 31%. We managed to get the memory up to 1500MHz, which is also 250MHz over stock, or an increase of 20%. We had to have the fan set manually to run at 50% which kept it cool enough to allow this. These are not the highest values allowable in Overdrive, but these are close. We definitely feel that with some Voltage tweaking and custom cooling we should be able to achieve 1.1GHz. For now, with the stock cooler and Voltages 1050MHz/6GHz is the final stable overclock.

As you can see, the overclock has made a significant difference in performance in every game. The overclocked HD 7950 is 26% faster than it was at stock frequencies in Batman. In BF3 the overclocked Radeon HD 7950 is also 26% faster than it was at stock frequencies. In Deus Ex the overclock yielded a 23% performance increase.

Our testing is clear, this Radeon HD 7950 overclocked well, and provided a significant performance improvement. Of course, ours is a reference video card, and we can't say that all retail cards will overclock similar to ours. As we evaluate more HD 7950 cards we will see how those overclock, especially with Voltage tweaking and custom cooling. This at least shows the potential that overclocking can have with the Radeon HD 7950.
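For anyone who wants to check the percentages in the [H] quote, a quick sketch of the arithmetic. The stock clocks are not stated directly; they are inferred here from "250MHz over stock" (1050 - 250 and 1500 - 250), and the helper name `pct_gain` is just made up for this example. The last loop is only a rough indicator, since memory was overclocked too and the gain is not purely from core clock.

```python
def pct_gain(stock_mhz, oc_mhz):
    """Percentage increase going from the stock clock to the overclock."""
    return (oc_mhz - stock_mhz) / stock_mhz * 100


# Stock clocks inferred from the review's "250MHz over stock" wording.
core_gain = pct_gain(1050 - 250, 1050)
mem_gain = pct_gain(1500 - 250, 1500)
print(f"core: +{core_gain:.2f}%  memory: +{mem_gain:.2f}%")

# Reported performance gains from the review, in percent.
reported = {"Batman": 26, "BF3": 26, "Deus Ex": 23}
for game, perf_gain in reported.items():
    # Fraction of the core clock increase that showed up as performance.
    print(f"{game}: {perf_gain / core_gain:.2f} of the core clock gain")
```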

If we see the price drop to $399.99 or below in 60 days, we will change the award to a GOLD (From Silver).

:lol Putting the pressure on AMD now.

PCPer review: http://pcper.com/reviews/Graphics-Cards/AMD-Radeon-HD-7950-3GB-Graphics-Card-Review

Ryan (PCPer) addresses the pricing concern:

At $100 less than the Radeon HD 7970 3GB, the HD 7950 3GB actually seems like a pretty good buy; you can overclock the performance enough to ALMOST reach the reference performance of the more expensive card. Compared to the GeForce GTX 580 1.5GB average selling price of $499, the HD 7950 again looks like a great card considering it outperforms the NVIDIA option. I can't shake the feeling that AMD is doing a disservice to itself in the long run by keeping prices this high though I understand WHY they are doing it.

The fact is that AMD is likely still capacity constrained by the 28nm process at TSMC and pricing any of these cards lower might actually HURT the company's reputation. If the HD 7950 3GB were being released today for $349 I think just about every sane gamer on the planet would want one but if AMD doesn't have the inventory to cater to that many buyers, what is the point of offering it at that price? It would cause anger and resentment from the very audience they are trying to cater to so instead we see higher prices that allow AMD to make a bit more profit and temper demand as well until capacity might soon catch up.

What if the SRP is $349 and, due to demand, the retailers price it at $400+?

No one, absolutely no one, should be even contemplating, let alone going out, to buy the 7900 series until GK104/GTX 660 Ti arrives in April.

The temporary pleasure will not be worth the pain in the wallet 2 months later.

As many GAFFers have already said, they'll enjoy the 7900 series for a few months, sell it, and go for the cheaper GK104 if it's truly worth it for them.

Skel1ingt0n

I can't *believe* these lazy developers keep making file sizes so damn large. Btw, how does technology work?

If the 7950 drops to $350, I'll definitely sell my GTX570 and upgrade for ~$100.

The 7950s aren't worth the price Newegg is charging for them ($459+). If you are going to spend that much on a GPU no reason not to go for the flagship which is less than $100 more...

Bought my 7970 on launch day and have been enjoying it a lot. It delivers the performance I need at 2560x1600, no other single GPU does. If Nvidia releases a product that blows away the 7970 I will of course sell mine and buy it, somehow I doubt that will happen though.

BoobPhysics101

Banned

Skipping the 7970 and keeping my 2.5 GB GTX 570 for a bit longer.

From Anand's 7950 review:

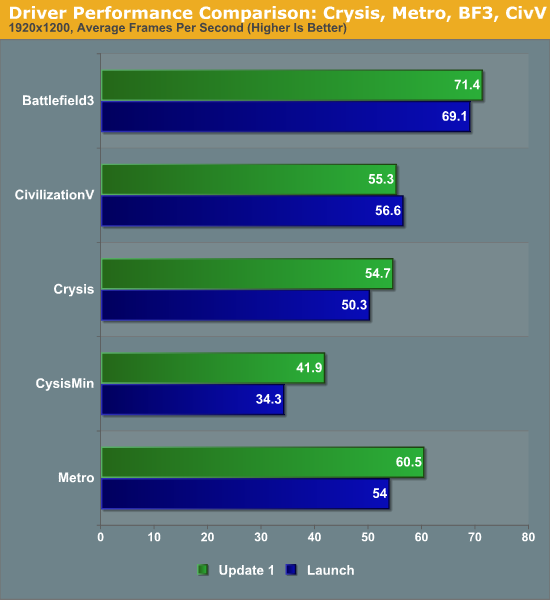

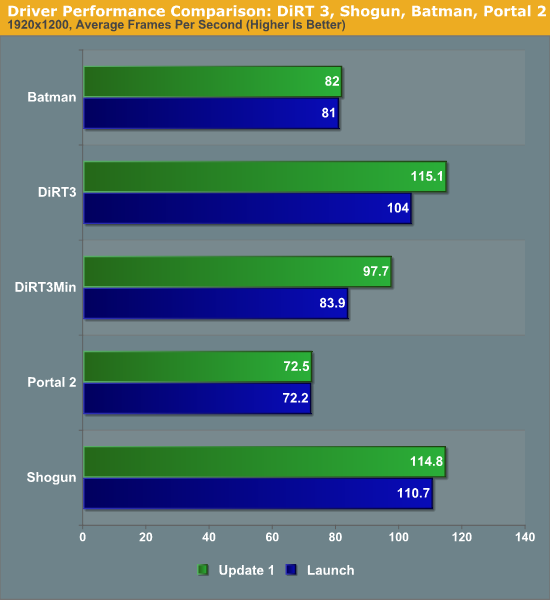

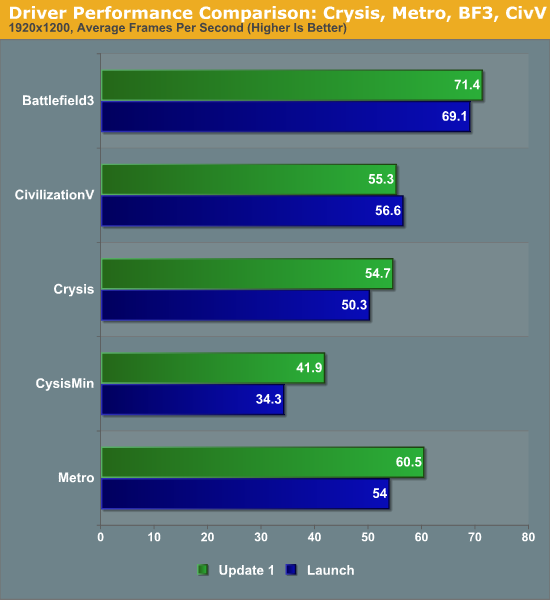

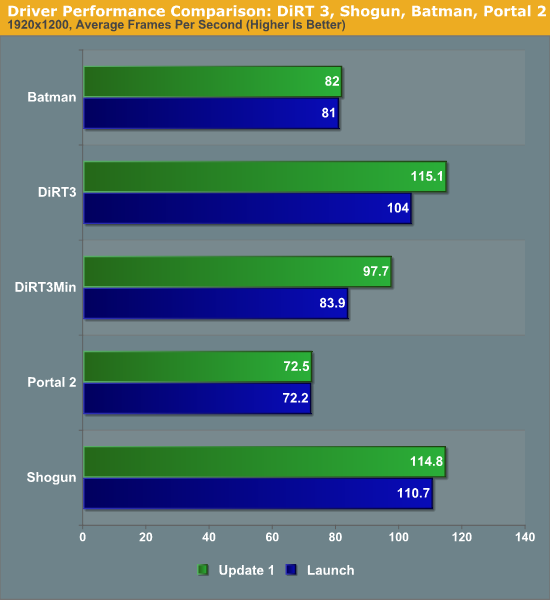

As to be expected, at this point in time AMD is mostly focusing on improving performance on a game-by-game basis to deal with games that didn't immediately adapt to the GCN architecture well, while the fact that they seem to be targeting common benchmarks first is likely intentional. Crysis: Warhead is the biggest winner here as minimum framerates in particular are greatly improved; we're seeing a 22% improvement at 1920, while at 2560 there's still an 11% improvement. Metro:2033 and DiRT 3 also picked up 10% or more in performance versus the release drivers, while Battlefield 3 has seen a much smaller 2%-3% improvement. Everything else in our suite is virtually unchanged, as it looks like AMD has not targeted any of those games at this time.

As one would expect, as a result of these improvements the performance lead of the 7970 versus the GTX 580 has widened. The average lead for the 7970 is now 19% at 1920 and 26% at 2560, with the lead approaching 40% in games like Metro that specifically benefited from this update. At this point the only game the 7970 still seems to have trouble pulling well ahead of the GTX 580 is Battlefield 3, where the lead is only 8%.

I don't know if this has been asked before, but is there any news on when the 1.5GB version of the 7970 will be released? I mean, 3GB is nice but not worth the price.

Given my 580 runs out of vram in the odd game now at 1080p I'd say it would take a brave man to spend a lot of money on a 1.5GB card currently.

I think it's actually priced well right now given the competition. It's cheaper than the GTX 580 and clearly offers better performance and then blows it away when overclocked. If you're doing a new build now, you'd be crazy not to get one. If you can wait, the 79xx series has room to drop for sure when the Nvidia cards come out. I hope for Nvidia's sake that it really is a lot more powerful, because AMD has a lot of flexing room both in clock speeds and in pricing. Not to mention the fab process will be a lot more mature yielding more cards.

I'm very surprised how well it scales clock to clock versus the 7970.

Yup, clock for clock the 7950 is only 4% slower. So the disabled CUs (the cut-down shader count) are not such a big deal.

It's just exactly where it should be.

This was a new arch and new process, why is it slotting in the price bracket everyone expected? Zzzzzzz

Slayer-33

Liverpool-2

specialguy

Banned

The reviews of the 7950 make it look like it is a pretty good card, but I'm still waiting to see what Kepler brings. I'd probably instabuy the 7950 if it was $100 cheaper though.

Yeah, the best that could have been hoped for was probably $50 cheaper on both 7970 and 7950 (399/499) and that would have been nice. As is the pricing is understandable and justifiable, but not particularly exciting.

Still waiting for the lower priced stuff here. Wish we knew more about that roadmap.

Odd that the 7950 is only down 4% when clocked equally. Guess shaders aren't the bottleneck, but then what is?
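To put a number on that: a small sketch comparing the shader cut to the observed gap. The compute unit counts (32 on the 7970, 28 on the 7950) are not from this thread; they are taken from AMD's published specs and should be treated as an assumption here. The 4% figure is the clock-for-clock difference quoted above.

```python
# Compute unit counts per AMD's published specs (assumption, not from the thread).
CU_7970 = 32
CU_7950 = 28

# Share of shader hardware the 7950 gives up relative to the 7970.
shader_deficit_pct = (1 - CU_7950 / CU_7970) * 100

# Clock-for-clock performance gap quoted in the thread.
observed_gap_pct = 4.0

print(f"shader deficit: {shader_deficit_pct:.1f}%")
print(f"observed gap:   {observed_gap_pct:.1f}%")
# If performance scaled purely with shader count, the two numbers would match.
print(f"gap as a share of the deficit: {observed_gap_pct / shader_deficit_pct:.2f}")
```

If the gap tracked the shader count exactly the last figure would be 1.0; anything well below that suggests something other than raw shader throughput is limiting these tests, which is the question the post above is asking.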