Where is this from, though? I've been following Navi for as long as I can remember, and only recently did I see Digital Foundry claim that Navi is just GCN.

But I can't find anything that backs that up. As far as I know (lately I haven't been following Navi much), all that's known is that it will have next-gen memory (I reckon that's GDDR6 or HBM3).

And that it shows up in a Linux driver from AMD, but beyond that I haven't come across anything that remotely indicates it will be GCN-based at all (it makes sense in a lot of ways, but is it confirmed?).

They should be able to add CUs. MS did it with the Xbox (based off an RX 480/RX 580 I think, which has 36 CUs): it has 40+4, with 4 disabled.

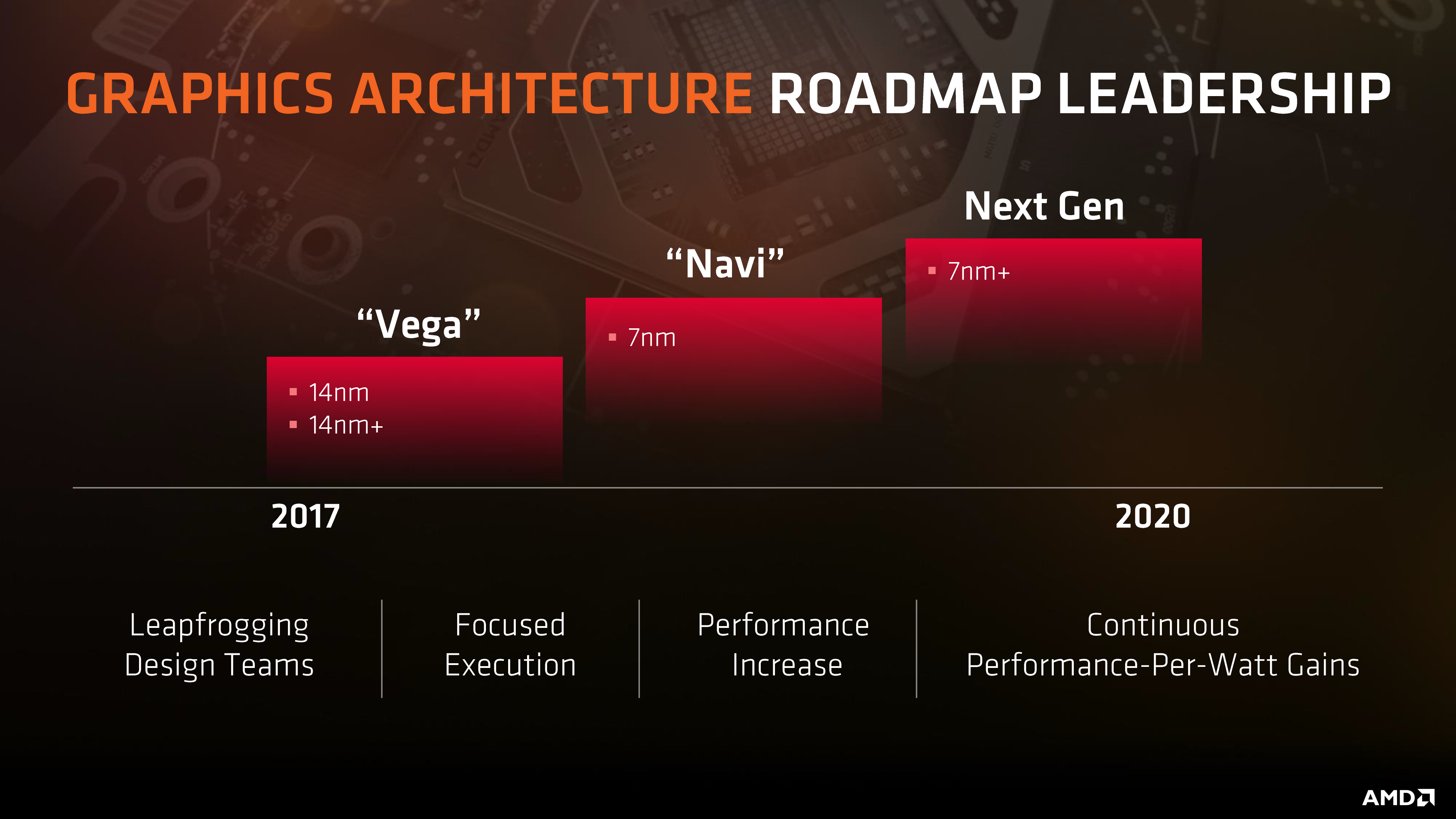

It's more reading between the lines, tbh. They've been on GCN since 2012, and a substantial move off it is exactly the kind of thing they'd be playing up in their very extended hype cycles. Right now, all they seem to be promising for it is 7nm, dies connected via Infinity Fabric, and whatever "next-gen memory" turns out to be. The architecture after Navi, also named "Next Gen" (sigh), is the earliest point at which most people expect a true move off GCN.

"Performance improvements", scalability (multi-die), faster memory, "focused execution": that's not exactly screaming a ground-up move off GCN. The earliest expectation seems to be "Next Gen", after Navi.

AMD working on GCN successor after Navi

https://hardforum.com/threads/amd-working-on-next-gen-gpu-after-navi-for-2020-2021.1954109/

"Navi Might Be Final GCN GPU from AMD"

https://www.overclockersclub.com/news/41365/

I do wonder if the lack of Navi info is connected to the PS5. Could it be almost exactly the PS5's chip (which will end up as a PC GPU later), so that AMD revealing any info would give the game away?

The other possibility:

"Navi may be the last GCN architecture as RTG is looking forward to a non-GCN future.

Work on Navi has been scaled back as RTG plans a brand new architecture or at least drastically different enough to be considered non-GCN.

A major goal is to significantly reduce power consumption.

Of course, if this new non-GCN architecture is not released on schedule, we may be looking at some stopgap GCN architectures."

"...development for this next architecture began prior to Raja Koduri's departure from the Radeon Technologies Group, but development has been increasing enough to produce more chatter under RTG's new leadership. Everything is speculation currently, but it seems AMD's intent is to make the leap to the new macro architecture as significant a performance jump as it was from TeraScale to GCN, or greater. Navi-based GPUs are expected to launch in 2019, likely early in the year, putting this GCN-successor in 2020 or 2021, assuming everything goes as planned and hoped."

Additional features have been scaled back from Navi so that NextGen can be a larger leap from all GCN architectures. In the meantime, Navi gets multi-die, faster memory, and 7nm.

Now, it may be that Sony takes us all by surprise by not using 7nm chips and releasing the PS5 way before anyone expected. But while they're certainly capable of some braindead business decisions (this has been proven time and again), surely they're not that stupid?

Once again, in Cerny we trust; he made incredibly level-headed decisions in designing the PS4's hardware.