vg247-PS4: new kits shipping now, AMD A10 used as base, final version next summer

- Thread starter Globox_82

Gemüsepizza

Member

Playstation 3 device? ^^

This seems to indicate you would/might be able to turn any flat, tablet-like surface into a virtual touchpad, perhaps by placing specific strips on it. Like making your own AR cards for Vita.

This seems to be a product: a tablet for the PlayStation.

If you've ever used AR cards on the vita or PS3 you will see how similar this is to that, especially the last passage/page you quoted

it's a PlayStation EyePad

http://worldwide.espacenet.com/publ...30213&DB=worldwide.espacenet.com&locale=en_EP

OK my mistake, didn't realise the cameras were actually on the device. Note to self, read patents carefully first before commenting.

Still having difficulty understanding the second page though. Does the unit have a screen or not? If so why would you not just make it touch screen instead of using cameras to judge swipes and positioning?

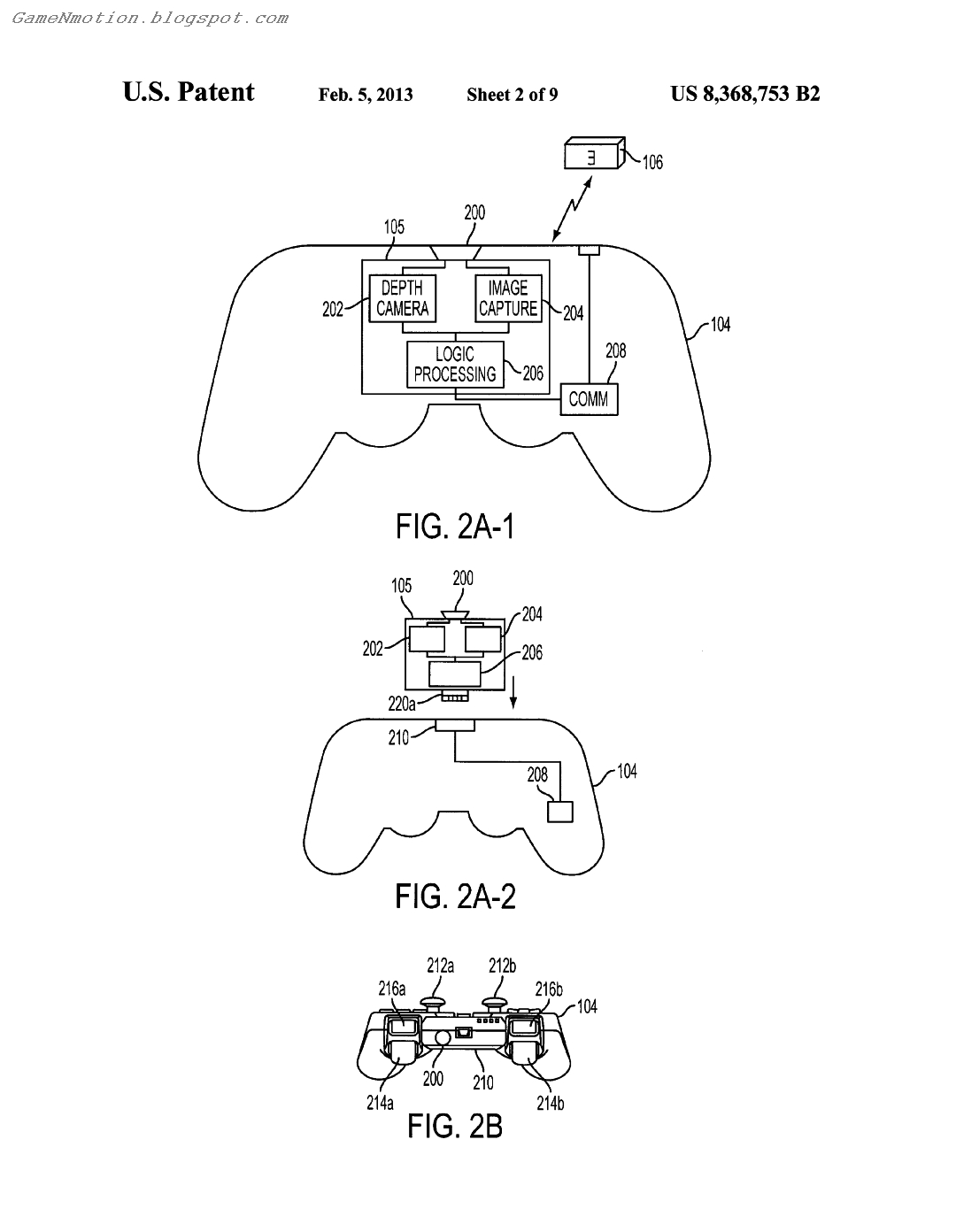

Touchpad which leads me to believe that this is the DS4 controller.

& I hope I'm right because that would mean that you can place the controller on your table & it will be able to track your hands in 3D & it will also be used for 3D scanning of objects into games & it can also scan your face.

Won't it have to be rather... huge to facilitate decent scanning of objects like hands, or soda cans as in the patent? For that reason I doubt it's the DS4. Most likely an added peripheral.

A further theory/speculation - it is the pad which can be utilized with the "break-apart" DS4 controller

The cameras could have wide-angle lenses, & also if you read it they talk about rotating the object to get a full scan if it's too big.

I've finally read all of it (had some time to burn at work). The device seems to range from being a simple (if not more robust) AR marker with controls attached, to having capabilities such as a touchscreen, 3D facial and skeletal scanning and even its own built in co-processor. The latter features obviously being more expensive and difficult to implement.

Research appears to be quite detailed based on the patent so it remains to be seen what features the device ships with - if or when it does. Co-processors, active stereoscopic cameras and touchscreens could potentially all lead to large power consumption issues

The 'EyePad' thing is just a tongue in cheek reference to iPad...

I think the patent is just the product of brainstorming that might have been linked to PS4's controller (with the touchpad and all). With the rumours we have, I don't think its key idea - the depth volume for finger/object tracking around the touchpad - has made it into the final product.

Would be great to mess around with functionality like accurate hand and finger tracking and, more importantly, mapping though. I could think of quite a few accessibility options it opens up. After all, the leap motion is supposedly doing it already - how well it does that remains to be seen but the reviews seem promising.

jeff_rigby

Banned

This might explain the Sony one-camera depth sensing (via reflected IR intensity) patent and the two-camera depth sensing (via parallax) patents. Both may be used next generation with different accessories: one-camera depth sensing being cheaper, with less overhead, in smaller, cheaper accessories and perhaps in a new camera for the PS3 Playstation Eye; two cameras in the PS4 Playstation Eye, which likely uses...

Razgreez said: Co-processors, active stereoscopic cameras and touchscreens could potentially all lead to large power consumption issues

Good point, and after a few minutes' thought: these features are in handhelds operating on batteries and draw little power, in part because of accelerator hardware (co-processors), which I expect in the PS4 and Xbox 720.

In any case I expect all this is to support casual and Augmented Reality.

Gotta watch this video on the PS4 Press Conference; Do's and Don'ts

Maybe? & maybe not!

Eyedentity

PlayStation Eye

Remember the HMZ Sony demoed with the camera mounted on it?

http://kotaku.com/5945181/ive-seen-the-future-of-virtual-reality-and-it-is-terrifying

That's one area where you really need stereo cameras as opposed to mono + depth sensing.

Just thought about something!

Sony sells cameras to most of the smartphone makers, & they sell TVs, movies, music, games & entertainment products. All of this would benefit from having a better control interface, & the EyePad could be just an example of how this control interface can be inserted into a product. So it could be anything from a smartphone with 2 3D cameras on the side of the screen, or a TV remote with the cameras beside the touchpad, to the PlayStation 4 controller with the cameras beside the touchpad.

PlayStation 4 would be the best way to get their control interface into homes & make other companies see it as something that they would want in their products.

jeff_rigby

Banned

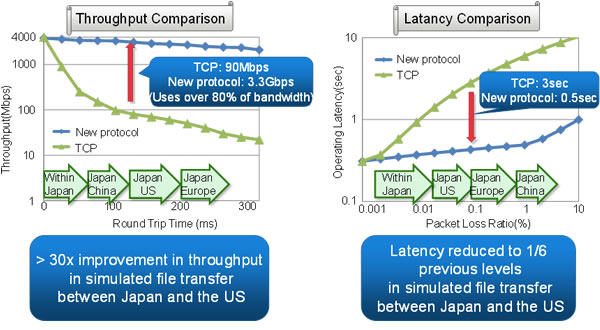

In Theory: why cloud gaming is coming home

PS4 to serve handhelds in the home Gaikai-style, and to be served via Sony's Gaikai server.

Having failed to find a sustainable business model in its original form, cloud gaming technology is coming home. By the end of the year, several major manufacturers will be offering players the ability to stream gameplay from PCs to mobile devices and set-top boxes in the home - with next-gen consoles perfectly positioned to follow suit.

Nvidia has already revealed its plans with the intriguing Project Shield announcement at CES last month. Integrating a state-of-the-art mobile processor in the form of Tegra 4, Shield not only allows for Android gaming on the move but also connects via WiFi to a GeForce "Kepler" GTX-equipped PC, allowing for any game to be streamed over a home network onto the handheld. Valve is set to follow suit with its entry-level Steambox, which in concept sounds very similar indeed to the OnLive microconsole - a low-power device designed for media streaming and equipped with interfaces for gaming controllers.

AuthenticM

Member

Do you think Sony will do something similar to Smartglass ? I mean, they already have their own cellphones running Android (I don't know if they have tablets though). I could see them making such an application for Android and iOS. If the controller really has a touchscreen, maybe they could do something with that.

jeff_rigby

Banned

My guess also.

Gaikai (PSTV): The complete back catalogue of PS games from PS1/PS2/PSP/PS3/Vita/PSMobile, streamable to ANY device that is connected to the internet.

Your ID is locked to the new controller and NOT the console.

PSTV would be integrated into every Bravia TV too and any TV that bought the licensing agreement.

In fact I believe that IS the biggest announcement we will get on WED.

Streaming a game Gaikai-style in a home network from PS4 to handheld over a Gigabit network, WiFi Direct or even plain WiFi will have much less latency than from a Gaikai server hundreds of miles from the IP server head end. Depending on the type of game, some can be served from a remote Gaikai server farm and some can't; those that can't could be served locally in the home by a PS4.

Sony last week updated "Home" to 1.75 and moved personal data from the PS3 in the home to a "Home" server. This I believe is the first step in moving "Home" to a Gaikai server model. Instead of moving each Home area's assets to be locally generated in your PS3, they will be generated in a GAIKAI server and streamed as video to Smart TVs, Networked blu-ray players and handhelds. This, I think, is to be one of the announcements on the 20th.

The GAIKAI video streaming will be via HTML5 DASH. The PS3 and other Sony CE platforms should get a major firmware update to the browser to support this. Either a separate app or browser with W3C game controller standards will be used. The PS3 is overdue for a browser update.

Sony with the above will announce their new stores, apps, Home and revamped and expanded Playstation Network. Some of the PS4 features may be announced that support the above Gaikai game streaming in the home and other streaming services to handhelds and CE platforms.

With the same software stack for the above, RVU can be supported and it's supposed to be here NOW.

Flip the above with the PS4 as the set top box supporting GAIKAI/Playstation Network/PSTV instead of the DVR cablebox. So two boxes in the home network supporting all these new features (PS4 & Cable DVR/RVU). The rumor for the Xbox 720 is that it's one box that does it all (Cable DVR and server to handhelds).

EDIT: Just found this on SemiAccurate by MTd2 on solving latency for GaiKai over networks.

seattle6418

Member

Home 1.75 has us moving local stored personal data to the server. This likely means Home will soon be Gaikai served which also means it can expand to Handhelds and Smart TVs.

With this there should be no load times to move from site to site in Home.

I expect Gaikai to be a big part of the Playstation announcements on the 20th.

Makes sense. Home was a good idea poorly executed due to PS3 limitations. If it's through Gaikai, then it could really be cool, especially if I can use it from other devices like my smartphone.

Gah, I hope they revamp the art style first. No revamp, no announcement !

Graphics Horse

Member

That controller pic shows the lens on the back next to the usb port so it's a different idea, but I think there could be something to this Leap Motion theory simply because the pad looks the same size and shape and glassy looking. You'd be able to put the pad down in front of you and do intricate kinecty finger movements above it.

That's something that they talked about in the EyePad patent.

& I really hope they have the cameras in the controller because the scanning stuff sounds pretty cool & being that you can move it around means you can go 3D Scanner Crazy in your house.

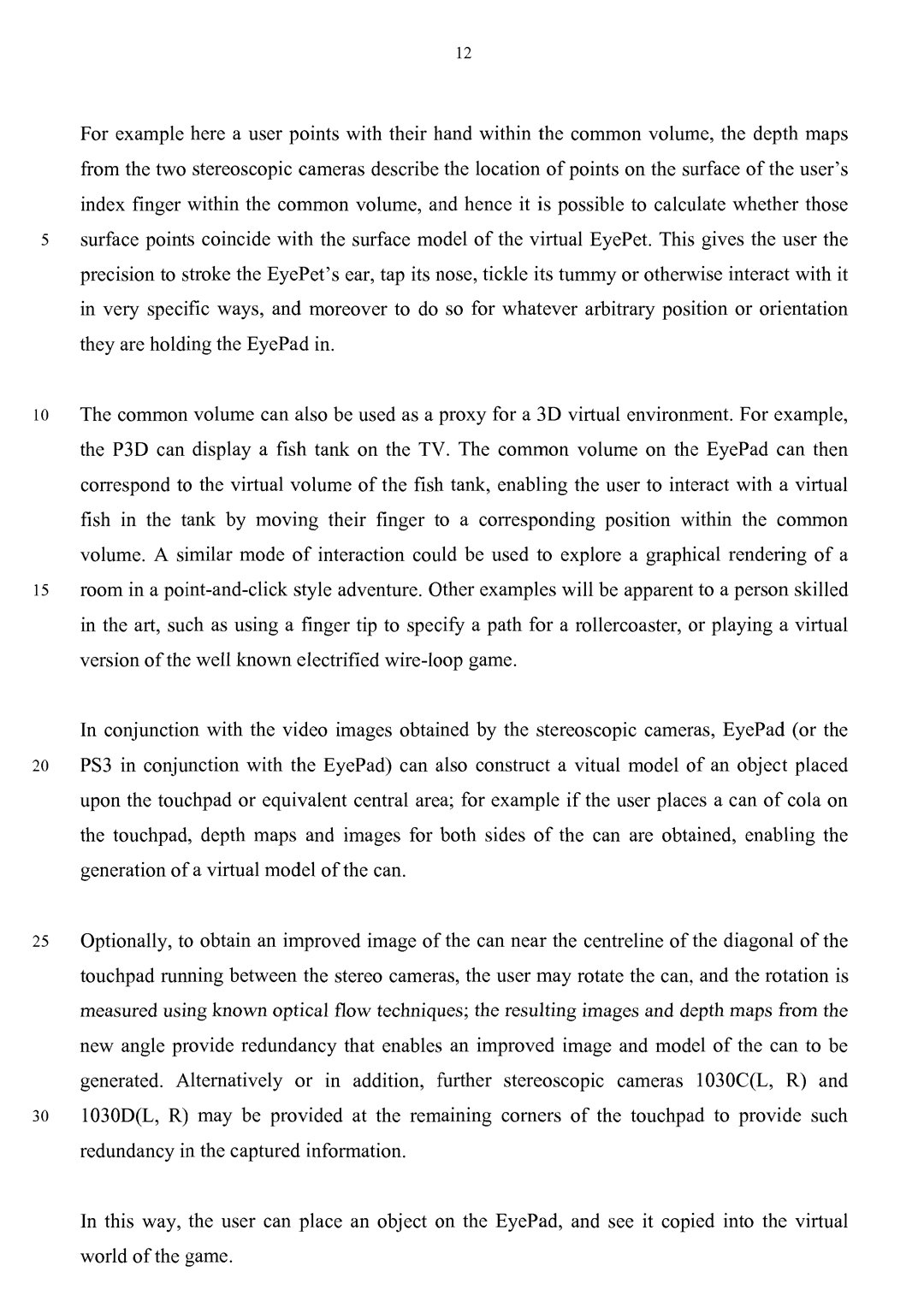

[0074] In addition to this AR marker functionality, as noted above the stereoscopic views of the common volume located above the touch panel (or equivalent surface area) of the EyePad provide depth maps for any real object that is positioned within the common volume, such as the user's hand. From these depth maps and the known positions of the cameras on the EyePad, it is possible to construct a 3D model or estimate of the user's hand with respect to the location and orientation of the EyePad and hence also with respect to the location and orientation of the EyePet (or other virtual entities) that are interacting with the EyePad. The 3D model of the EyePet and the 3D model of the user's hand can thus occupy a common virtual space, enabling very precise interaction between them.
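As a rough illustration of how a stereo pair can yield the depth maps described in [0074]: for two parallel, calibrated cameras, depth is commonly recovered from disparity as Z = f * B / d. The quoted patent text does not say which method the EyePad uses, so the function name, the pinhole-camera model and the sample numbers below are all assumptions, not anything taken from the patent.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth (metres) of a point seen by two parallel pinhole cameras.

    focal_px     -- focal length in pixels (assumed identical for both cameras)
    baseline_m   -- distance between the two camera centres, in metres
    disparity_px -- horizontal pixel shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_px * baseline_m / disparity_px


# Hypothetical numbers: 700 px focal length, cameras 10 cm apart, 35 px disparity.
z = depth_from_disparity(700.0, 0.10, 35.0)
print(f"estimated depth: {z:.2f} m")
```

Larger disparity means the point is closer, which is why a small common volume right above the pad is the easy case for this kind of setup.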

[0075] For example, where a user points with their hand within the common volume, the depth maps from the two stereoscopic cameras describe the location of points on the surface of the user's index finger within the common volume, and hence it is possible to calculate whether those surface points coincide with the surface model of the virtual EyePet. This gives the user the precision to stroke the EyePet's ear, tap its nose, tickle its tummy or otherwise interact with it in very specific ways, and moreover to do so for whatever arbitrary position or orientation they are holding the EyePad in.
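The "do the finger's surface points coincide with the virtual surface" check in [0075] can be sketched with a deliberately simplified stand-in: here the EyePet's nose is approximated as a sphere, and a finger point counts as touching if it lies within a small tolerance of that sphere's surface. The sphere model, the function name and the tolerance value are assumptions for illustration only; a real surface model would be a mesh.

```python
import math


def touching_points(points, center, radius, tolerance=0.005):
    """Return the 3D points that lie within `tolerance` of a sphere's surface.

    points    -- iterable of (x, y, z) finger-surface points, in metres
    center    -- (x, y, z) centre of the hypothetical spherical body part
    radius    -- sphere radius in metres
    tolerance -- how close to the surface counts as contact
    """
    hits = []
    for p in points:
        distance_to_center = math.dist(p, center)
        if abs(distance_to_center - radius) <= tolerance:
            hits.append(p)
    return hits


finger = [(0.0, 0.0, 0.05), (0.0, 0.0, 0.20)]
contact = touching_points(finger, center=(0.0, 0.0, 0.0), radius=0.05)
print("nose tapped" if contact else "no contact")
```

Because both the finger points and the virtual model are expressed relative to the pad, the same test works however the pad is held.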

[0076] The common volume can also be used as a proxy for a 3D virtual environment. For example, the PS3 can display a fish tank on the TV. The common volume on the EyePad can then correspond to the virtual volume of the fish tank, enabling the user to interact with a virtual fish in the tank by moving their finger to a corresponding position within the common volume. A similar mode of interaction could be used to explore a graphical rendering of a room in a point-and-click style adventure. Other examples will be apparent to a person skilled in the art, such as using a finger tip to specify a path for a rollercoaster, or playing a virtual version of the well known electrified wire-loop game.
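The fish tank idea in [0076] boils down to mapping a position inside the real common volume to the corresponding position inside a virtual volume. A minimal sketch, assuming both volumes are axis-aligned boxes and the mapping is a plain per-axis linear rescale (the patent excerpt does not specify the mapping, and all names and dimensions below are made up):

```python
def map_to_virtual(point, real_min, real_max, virt_min, virt_max):
    """Linearly rescale a point from the real box into the virtual box, axis by axis."""
    mapped = []
    for x, r_lo, r_hi, v_lo, v_hi in zip(point, real_min, real_max, virt_min, virt_max):
        fraction = (x - r_lo) / (r_hi - r_lo)   # 0.0 at one face, 1.0 at the opposite face
        mapped.append(v_lo + fraction * (v_hi - v_lo))
    return tuple(mapped)


# Hypothetical sizes: a 10 cm cube above the pad, a 100 x 50 x 40 unit fish tank.
finger_tip = (0.05, 0.05, 0.05)
tank_pos = map_to_virtual(finger_tip,
                          real_min=(0.0, 0.0, 0.0), real_max=(0.1, 0.1, 0.1),
                          virt_min=(0.0, 0.0, 0.0), virt_max=(100.0, 50.0, 40.0))
print(tank_pos)
```

A finger in the middle of the common volume lands in the middle of the tank; the same function would serve for pointing into a rendered room.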

[0077] In conjunction with the video images obtained by the stereoscopic cameras, EyePad (or the PS3 in conjunction with the EyePad) can also construct a virtual model of an object placed upon the touchpad or equivalent central area; for example if the user places a can of cola on the touchpad, depth maps and images for both sides of the can are obtained, enabling the generation of a virtual model of the can.

[0078] Optionally, to obtain an improved image of the can near the centreline of the diagonal of the touchpad running between the stereo cameras, the user may rotate the can, and the rotation is measured using known optical flow techniques; the resulting images and depth maps from the new angle provide redundancy that enables an improved image and model of the can to be generated. Alternatively or in addition, further stereoscopic cameras 1030C(L, R) and 1030D(L, R) may be provided at the remaining corners of the touchpad to provide such redundancy in the captured information.

[0079] In this way, the user can place an object on the EyePad, and see it copied into the virtual world of the game.

[0080] In a similar manner, the user can put their face within the common volume in order to import their own face onto an in-game character or other avatar. Where the common volume is smaller than the user's face, again an optical flow technique can be used to build multiple partial models of the user's face as it is passed through the common volume, and to assemble these partial models into a full model of the face. This technique can be used more generally to sample larger objects, relating the accumulated depth maps and images to each other using a combination of optical flow and the motion detection of the EyePad to create a final model of the object.

jeff_rigby

Banned

Cable TV giants might be plotting cloud gaming service to take on Xbox, PlayStation

Neogaf thread already started on this subject

Point of this is that in mid 2013 everything apparently is starting: Cable TV RVU Tru2way DVR boxes (DLNA + DTCP-IP), Silicon Dust cablecard digital tuners that serve via DLNA + DTCP-IP, ATSC 2.0 and XTV, and cable companies, according to the articles, will be offering cloud gaming. Sony and Microsoft need their platforms shipping earlier than the traditional end of year season.

jeff_rigby

Banned

PS4 PDF from Sony with overview and specs

GDDR5 used... that makes me and multiple professionals I've cited wrong. That they needed GDDR5 bandwidth is still correct, but cost and power are still issues. Maybe they played it safe (not knowing in 2010 if TSVs and interposer would be ready) and GDDR5 will be a temporary solution till an early refresh next year. 176 GB/s can be attained with a 256-bit interface at a 5.5 Gbps transfer rate. You can't stack GDDR5 more than two high due to heat, and with two high you can wirebond top memory to bottom so no TSVs are needed. 8 GBytes is a lot of GDDR5 chips, which is why prevailing guesses eliminated 8 GByte GDDR5 as a possibility.
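The 176 GB/s figure checks out as simple arithmetic: peak bandwidth is bus width times per-pin data rate, divided by 8 to go from bits to bytes. A quick sketch (the function name is just for illustration):

```python
def peak_bandwidth_gb_s(bus_width_bits: int, rate_gbps_per_pin: float) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    bus_width_bits    -- total width of the memory interface in bits
    rate_gbps_per_pin -- data rate of each pin in gigabits per second
    """
    return bus_width_bits * rate_gbps_per_pin / 8  # 8 bits per byte


print(peak_bandwidth_gb_s(256, 5.5))  # 176.0
```

This is the theoretical peak only; sustained bandwidth in practice is lower.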

No mention of XTV/RVU or Google TV features as speculated in the Xbox 720 leak. GDDR5 is troubling for these features (too much power used) unless there is an SoC with its own memory to handle that.

Best info on PS4 here: http://www.neogaf.com/forum/showthread.php?t=514512

Graphics Horse

Member

Looks like there was nothing to that mini EyePad idea now the trackpad is no longer glossy.

There is always the new Wonderbook or EyePet accessory that will come down the road.

would have been nice to have it built into the controller though.

jeff_rigby

Banned

Some interesting developments:

Super DAE arrested and a big article on Kotaku...the leaks were real and bigger than reported.

This Microsoft patent on Web-Browser Based Desktop And Application Remoting Solution ties, I think, to Office 365 showing up on Samsung smart TVs. It's cloud-served Office productivity tools showing up on consumer Smart TVs. Special applications are not needed if a browser that supports video is available (W3C standards are including multiple new input devices). The change is from the tiled approach, only updating parts of the screen that change, to the entire screen being updated at video frame rates using video. New codecs, more powerful hardware and faster internet connections are changing remote desktop, allowing cloud desktop and gaming. OnLive first did this and got into trouble with Microsoft.

There are two parts to the above patent, one being the Web Browser based desktop which I speculated might be coming for the PS3 in 2010. The software stack to support XTV can support a Browser Desktop and is the idea behind Chrome OS, Gnome Mobile, Android and PS Mobile (Gnome Mono): a limited number of cross-platform native libraries supporting a browser/virtual engine to allow cross-platform applications. The original XTV (Xtended TV using browser and Java for apps) standard uses Java for applications, and in addition the JavaScript engine should be part of the standard when ATSC 2.0 reaches candidate status in spring 2013. Notice the W3C (World Wide Web Consortium) is creating web browser standards that are being used to support APIs for the entire CE industry. This is the reason I've been stressing HTML5 and web browsers in the PS3.

Voice recognition standards: There have been Voice recognition APIs in W3C since 2004 and updated Oct 2012. Microsoft is adopting the W3C standards as the basis for their Speech recognition.

Gesture Recognition Standards: Gesture recognition and the W3C starts with device orientation and shaking (acceleration detection) of handheld phones. It continues into W3C specs for gamepad and gesture recognition using a gamepad.

http://www.academia.edu/175436/iGesture_A_General_Gesture_Recognition_Framework

Top end Samsung Smart TVs have voice and gesture control, built-in Skype camera, browser and apps. In addition they have an upgrade path (Evolution Kit) that allows for updated features and performance via a built-in module bay. <= Reread

If Microsoft and Sony want to capture the living room they will need to compete with Samsung and others as to features. In my opinion, the design of the PS4 and Xbox 720 take this into account and both will have Voice and Gesture recognition.

Game consoles can not be updated or they break compatibility. Sony and Microsoft have to build in performance (8 Gbyte GDDR5 memory) to equal what they might need 5 years down the road when even CE platforms have 8 GB of cheap 200 GB/sec memory using WideIO stacked DRAM.

CE platforms like the Samsung TV I cited above already have low power support for XTV and the Evolution port allows them to support future more powerful applications that take more performance and memory but in the near future cheaper and using little more power than is currently needed in a CE TV.

The PS4 and Xbox 720 because they are consoles that can't have updated performance must provide both low power (XTV, RVU, always on, voice activation, serving handhelds) and more memory and higher performance at the same time NOW.

Within two years a refresh to both can probably provide both low power and performance without having two systems in one. This was the basis for my stating the PS4 would have Wide IO stacked DRAM, Jaguar processor, Mobile 8000M GPU and accelerators and be based on Kabini or Pennar tablet designs. I thought this was possible (low power AND performance design at the same time) in 2013, as did Yole. Contrary to Charlie's (SemiAccurate) first post on the PS4 and the Sony CTO & SVP Technology platform, there are probably no TSVs and interposer in the PS4 as announced (needed to support, as Charlie said in his PS4 article, "expect stacked memory and lots of it"). So many things in Charlie's articles have proven true, like "lots of memory", just not stacked as we all expected.

Many have been stating that 2014 would have been a better launch date for next generation but the Wii U, ATSC 2.0 and the cable industry (RVU and Tru2way) all launching in 2013 probably had a hand in the 2013 launch of PS4 and Xbox 720. Sony and the Blu-ray 4K standard looks like it will be 2014 only because of the drive standard for 4 layer/100Gbyte support is stalled. HEVC (h.265) called the 4K blu-ray codec by some has already been published.

Edit to be clear:

If Sony is using 8 GB GDDR5 for main RAM then they must have two systems in the PS4, a low power and a performance SoC all in one. The low power system can not use GDDR5 memory or the USB3 port, and this is likely the reason for the separate Kinect/Eye port. Google TV platforms pull 8 watts average and a PS3 pulls 61 watts minimum. A PS4 with GDDR5 memory, even clocked lower with a Jaguar CPU and only 4 CUs active, is going to pull more than 25 watts and likely more than 45 watts at idle or streaming, mainly because of the GDDR5 memory (45 watts is the current max for IPTV streaming and that is likely to drop). A Temash with DDR3 and 2 CUs pulls between 5-15 watts, and with Wide IO 176 GB/sec DRAM a few watts more; add to this 5 watts for the Zero power GPU mode. There will be power mode regulations that impact the PS4 and Xbox 720 designs.

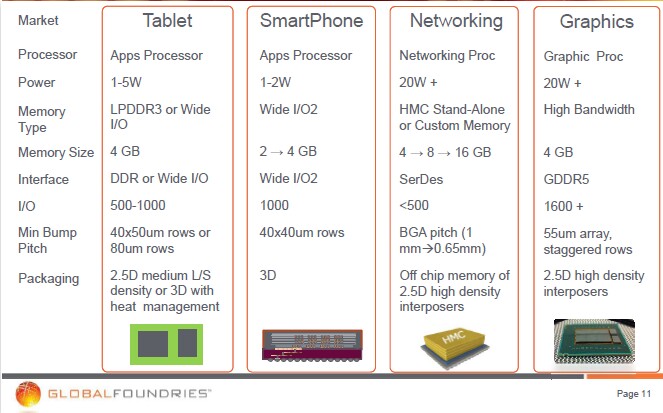

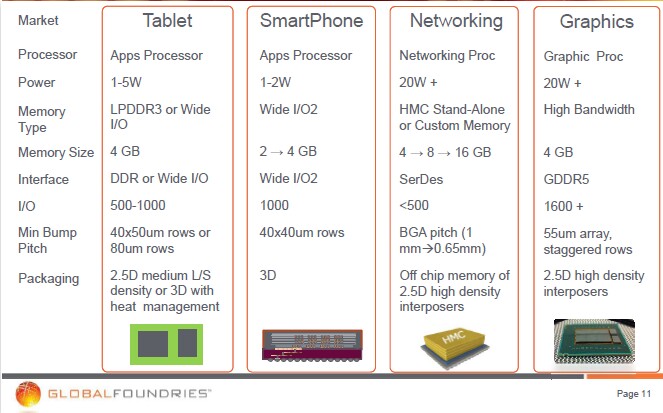

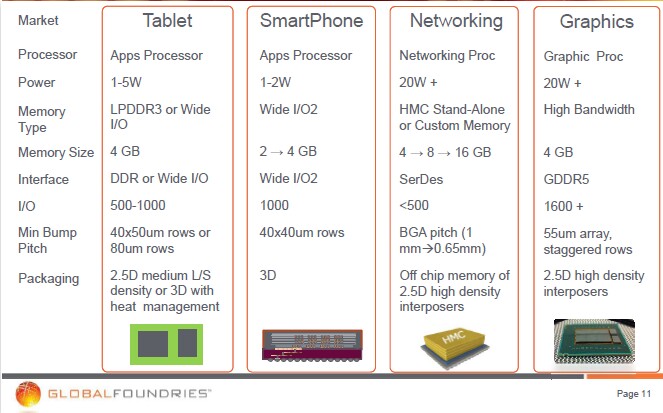

Edit: ALL use wide memory interfaces, including an unexpected GDDR5 with 1600 connections. Wide IO GDDR5 running at lower clock speeds on interposer, far right? Is that the future for AMD Graphics and could we be talking PS4 on interposer with GDDR5? That might change everything.

http://www.theregister.co.uk/2013/01/15/chrome_adopts_web_speech_api/ said: The API is a W3C initiative that makes it possible for browsers to tune into audio input, or even record sound.

If you're brave enough to run the Chrome 25 beta, available here, Google's demo of a voice-driven email composer shows off the new feature nicely. Google says the API also works in the Chrome Android beta.

Once the feature makes it into production versions of Chrome, things could get mighty interesting. A voice-enabled Android browser would take the mobile OS's voice-recognition capabilities beyond its current Voice Actions abilities. It will also take Android past Apple's flawed speech Siri recognition – which is, in your correspondent's experience, rather less useful than the voice-driven search in Google's iOS search app. A speechified version of Chrome on iOS with speech baked in would put the cat among the pigeons.

Speech in Chrome is not Google's only post-WIMPs experiment. One worth looking at is the collection of games created to promote global men's health charity Movember. The games use a PC's camera to control an on-screen mustache, with changes of your head position and wiggles of your upper lip replacing more conventional game controllers.

Google's adoption of the Web Speech API comes on top of numerous gesture-recognition efforts, including Intel's recent release of an SDK for its perceptual computing toolkit, Kinect for PC, and gesture-driven interfaces in all manner of Smart TVs. The frequency of announcements of this ilk signal that the industry is moving beyond the WIMPs interface. Google's addition of speech to Chrome will offer another post-WIMPs method of interaction and do so in a crucial class of application.

Top end Samsung Smart TVs have Voice and gesture control, built in Skype camera, Browser and Apps. In addition they have a upgrade path (Evolution Kit) that allows for updated features and performance via a built in module bay. <= Reread

If Microsoft and Sony want to capture the living room, they will need to compete with Samsung and others on features. In my opinion, the designs of the PS4 and Xbox 720 take this into account and both will have voice and gesture recognition.

Game consoles cannot have their hardware upgraded without breaking compatibility. Sony and Microsoft have to build in performance now (8 GB of GDDR5 memory) to match what they might need 5 years down the road, when even CE platforms have 8 GB of cheap 200 GB/sec memory using Wide IO stacked DRAM.

CE platforms like the Samsung TV I cited above already have low power support for XTV, and the Evolution port lets them support future, more demanding applications that need more performance and memory. In the near future that extra hardware will be cheaper and will use little more power than a CE TV needs today.

Because the PS4 and Xbox 720 are consoles whose performance can't be upgraded later, they must provide both low power operation (XTV, RVU, always on, voice activation, serving handhelds) and more memory and higher performance at the same time, NOW.

Within two years a refresh of both can probably provide low power and performance without having two systems in one. This was the basis for my stating the PS4 would have Wide IO stacked DRAM, a Jaguar processor, a Mobile 8000M GPU and accelerators, and be based on Kabini or Pennar tablet designs. I thought this (a low power AND performance design at the same time) was possible in 2013, as did Yole. Contrary to Charlie's (SemiAccurate) first post on the PS4 and to the Sony CTO & SVP Technology Platform, there are probably no TSVs or interposer in the PS4 as announced; those would be needed to support what Charlie described in his PS4 article as "expect stacked memory and lots of it". So many things in Charlie's articles have proven true, like "lots of memory", just not stacked as we all expected.

Many have been stating that 2014 would have been a better launch date for the next generation, but the Wii U, ATSC 2.0 and the cable industry (RVU and Tru2way) all launching in 2013 probably had a hand in the 2013 launch of the PS4 and Xbox 720. For Sony, the Blu-ray 4K standard looks like it will be 2014, only because the drive standard for 4 layer/100 GB support is stalled. HEVC (H.265), called the 4K Blu-ray codec by some, has already been published.

Edit to be clear:

If Sony is using 8 GB GDDR5 for main RAM then they must have two systems in the PS4, a low power and a performance SoC all in one. The low power system cannot use GDDR5 memory or the USB3 port, and this is likely the reason for the separate Kinect/Eye port. Google TV platforms pull 8 watts on average and a PS3 pulls 61 watts minimum. A PS4 with GDDR5 memory, even clocked lower, with a Jaguar CPU and only 4 CUs active, is going to pull more than 25 watts and likely more than 45 watts at idle or while streaming, mainly because of the GDDR5 memory (45 watts is the current max for IPTV streaming and that is likely to drop). A Temash with DDR3 and 2 CUs pulls between 5 and 15 watts, and with Wide IO 176 GB/sec DRAM a few watts more; add to this 5 watts for the zero power GPU mode. There will be power mode regulations that impact the PS4 and Xbox 720 designs.
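To make the arithmetic in that paragraph concrete, here is a rough tally of the low power side using only the figures quoted above. The one invented number is `WIDE_IO_EXTRA_W`, a stand-in for "a few watts more"; treat it as an assumption, not a measurement.

```python
# Rough idle/streaming power tally built from the estimates in the post.
# All values are the poster's estimates, not measurements.
TEMASH_W = (5.0, 15.0)     # Temash + DDR3 + 2 CUs, low/high estimate (watts)
ZERO_POWER_GPU_W = 5.0     # extra for the zero power GPU mode
WIDE_IO_EXTRA_W = 3.0      # ASSUMPTION: stand-in for "a few watts more"
IPTV_CAP_W = 45.0          # quoted current max for IPTV streaming
GOOGLE_TV_AVG_W = 8.0      # quoted Google TV average


def low_power_budget(base=TEMASH_W, wide_io=WIDE_IO_EXTRA_W, gpu=ZERO_POWER_GPU_W):
    """Return the (low, high) watt estimate for the low power subsystem."""
    low, high = base
    return (low + wide_io + gpu, high + wide_io + gpu)


def fits_cap(budget, cap=IPTV_CAP_W):
    """True if even the high end of the estimate stays under the cap."""
    return budget[1] <= cap


if __name__ == "__main__":
    budget = low_power_budget()
    print(f"low power estimate: {budget[0]:.0f}-{budget[1]:.0f} W")
    print(f"under the {IPTV_CAP_W:.0f} W IPTV cap: {fits_cap(budget)}")
    print(f"under the {GOOGLE_TV_AVG_W:.0f} W Google TV average: "
          f"{fits_cap(budget, GOOGLE_TV_AVG_W)}")
```

With these inputs the low power path stays under the 45 watt streaming cap but is still above the 8 watt Google TV average.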

Edit: ALL use wide memory interfaces, including an unexpected GDDR5 with 1600 connections. Wide IO GDDR5 running at lower clock speeds on interposer, far right???? Is that the future for AMD graphics, and could we be talking PS4 on interposer with GDDR5? That might change everything.

Very interesting read summarizing PS4 architectural choices (sorry if old, I've not seen it):

http://www.bradfordtaylor.com/insert-blank-press-start/ps4-vs-the-great-discord/

Hey Jeff, I assume you missed the reveal that the PS4 has an onboard ARM CPU, for OS and/or low power tasks.

http://www.eurogamer.net/articles/df-hardware-spec-analysis-playstation-4

DF said: There was also talk of a new processing module in the PS4 hardware designed to handle tasks like background downloading. Our sources suggest a low-power ARM core designed to handle "standby" tasks along these lines, while the console also saves the current gameplay state when the system is closed down, meaning instant access to the last game you played when you power up again.

DF said: There are a number of new ideas we love about the PlayStation 4, revealed for the first time last night. A low-power ARM processor manages PS4 while it's on standby, and freeze-frames current gameplay in memory for instant-on gaming when you power-up.

jeff_rigby

Banned

Thanks for the cites...I do understand that there is probably a complete low power system in addition to a performance system in the PS4. If Sony is going to support something like Google TV, as speculated by Microsoft in their leaked Xbox 720 PowerPoint, then a low power system (less than 10 watts) with most of the Google TV external box features is needed (including 8 GB memory). How are they going to accomplish that? Also when, as rumored, Sony releases an "other OS" for the PS4, is it going to include support for all hardware or only parts of it?

ARM IP is cheap, and complete Android SoC systems built on ARM are only about $15 (USB dongle). The issue is not going to be the cost of the ARM components (including RAM, or can GDDR5 be used for both?) but interfacing them with AMD hardware and sharing the ports. Part of that is OS complexity not previously seen: multiple CPUs and accelerator families requiring different native languages and using different bus/handshaking schemes. This is what HSAIL/HSA is supposed to support; I just have a problem visualizing the OS doing this.

So two RAM pools both 8 GB in size (LPDDR3 and GDDR5) with plans to quickly move to Wide IO stacked or Stacked GDDR5 as seen in the following GF future products (far right). Or is the background low power system using a small amount of RAM and meant only to support a few modes with a fast wake of the rest of the system to support other features? What does Sony consider to be acceptable power use?

Wide IO GDDR5 running at a much lower clock speed and able to support low power modes, as in the following paper co-authored by AMD:

Energy-efficient GPU Design with Reconfigurable In-package Graphics Memory Published June 2012 wide IO GDDR5 memory on Interposer

bigboss370

Member

Very interesting read summarizing PS4 architectural choices (sorry if old, I've not seen it):

http://www.bradfordtaylor.com/insert-blank-press-start/ps4-vs-the-great-discord/

Nice find, I hadn't seen this yet. It's a great article.

jeff_rigby

Banned

Jeff I am curious, what are your thoughts on the PS4 as a gaming machine?

Think of me as an informed armchair quarterback with no inside track. I understand systems and can follow schematics, flow charts and timing charts but do not design or program on these systems. I'm 61 and was involved in programming in the 80s with two partners and developed 4 commercial products, of which one was bought by EA (Music Construction Set for the ST) and one was distributed in Europe (Revolver); this was before GPUs. As such my opinion on gaming performance is worthless, other than that I agree with the very good article cited above your post.

I look at the PS4 as a complete system building on the PS3 features and Google TV (XTV, RVU, Tru2way, ATSC 2.0). Sony's Feb 20th reveal covered only about 1/4 or less of the features coming in the PS4. This is speculation based on other CE platforms like Samsung's Smart TV and on knowing what's coming in 2013 that can make or break Sony and Microsoft if they want to control the living room.

Google TV like features include services (TV guides and databases) that Sony and Microsoft can charge for. There are going to be lots of free features and tiers of features that have monthly fees.

An example of Sony's forward thinking on features is that the Vita OS includes handwriting script to text, which hasn't been used yet. The Vita, with the latest firmware update, now has an accelerated webkit2 browser, but the PS3 is still waiting for the same update to its webkit2 browser. Why? My guess is there is more coming with the browser update due to RVU, XTV and the apps that ATSC 2.0 will support.

jeff_rigby

Banned

DTCP-IP was supposed to come with firmware update 3.0, and the PS3 Slim was released without Linux. With firmware 3.21, Other OS Linux support was removed from the PS3 and DTCP-IP was implemented. The PlayStation Blog stated this was to have a more secure system. androvsky found a pre-firmware 3.0 post that predicted most of the firmware 3.0 features and also claimed DTCP-IP was coming with firmware 3.0.....it didn't arrive until firmware 3.21 a few months later.

The reason for Other OS Linux removal was likely as stated (Security) but for streaming IPTV security (On-line and in the home) which was the thrust of firmware 3.0 - 3.50. Firmware 3.50 - 4.31 and higher is for the HTML5 webkit2 browser as webkit is a work in progress. Still missing is DASH HTML5 IPTV streaming with Google/Microsoft/Netflix sponsored DRM using Microsoft's Playready DRM which Sony has already announced they will be using. This likely signals a change in the DRM and Player currently being used for adaptive streaming. Somewhere near or with PS3 firmware 3.5, AVM+ (Adobe Flash Free for non-commercial use) was implemented and the PS3 started using Flash adaptive streaming with the same standards seen in Flash server 3.5.

Note: There was a discussion on BY3D about the adaptive IPTV player being used by Sony and one of my finds was AVM+ (free from Adobe for non commercial use and supported by Mozilla) and the Flash server 3.5 standards matched Sony statements about soon to come features in EU IPTV apps (Confirmed in the PS3 about menu under settings). Lots of criticism on BY3D about this and I then found the Sony SNAP site that was using Gstreamer and assumed that was the adaptive player being used; this may still happen but was 3 years premature (Gstreamer is the standard player in Firefox, Opera, GTKwebkit, Sony SNAP and in multiple Sony TVs).

DTCP-IP is used to encrypt IPTV streams from a DLNA server in the home to the PS3 and other platforms. RVU is a standard which requires a slightly modified version of DLNA + DTCP-IP + (bitmapped or vector graphics commands) for the UI, and it is now accepted as part of DLNA. RVU (remote View) allows control of cable DVR boxes and streaming the content in the box or using the tuners for live play. Plans are to use DLNA for Tru2way, and RVU with a DVR box or with cable box 2-way communication with a head end box that may just contain tuners and no recording ability, and/or to support "clear" unencrypted cable and OTA RVU. SiliconDust has a tuner that can accept a cable card and serve DLNA-RVU for both OTA and cable. Speculation is it should work with the PS3 now, or soon with a PS3 DLNA upgrade.

The PS4 is going to have an ARM CPU to support "TrustZone", and it appears the codec accelerators in the PS4 are designed to work with the background ARM CPU, which I assume is part of TrustZone. DTCP-IP should be more secure, and Linux should not be a security issue for the PS4.

Accelerated Webkit (GPU) compositing is now supported on the Vita but the PS3 webkit has not been updated to support GPU acceleration. (Both the Vita and PS3 use the same GTKwebkit2 APIs and got webkit updates at nearly the same time until the latest Vita browser update.)

Windows Vista and, I think, the Microsoft Windows versions after Vista use the GPU as a choke point for DRM security, as all video has to go through the GPU. This is the reason for a number of issues: 1) Microsoft stating that WebGL, which gives access to the GPU, is a security issue, 2) open source Linux GPU driver releases are slow or non-existent, and as a result Linux is considered not secure for DRM if there is an open source GPU driver, 3) Sony did not give access to the GPU for PS3 Other OS Linux for security reasons, and to this point the PS3 has no GPU acceleration support for the Webkit2 browser.

So Linux support from the above could come to the PS4, but Sony needs to find a way to secure IPTV streaming in all its forms on the PS3 that doesn't require the GPU as a choke point and/or ensure WebGL and webkit GPU acceleration is secure. This is all speculation on my part, and the cite I found seems to indicate that Sony has found a way to secure IPTV streaming on the PS3. This has likely been a major project for Sony since the PS3 was hacked. At the present time all secure IPTV streaming requires a reboot to ensure a clean PS3 and full RUN support by the application rather than relying 100% on the PS3 software stack.

Insiders from Sony say they have introduced a customized kernel version rather than using the basic kernel to support this feature. This customized kernel may support specific versions of Linux only as a part of beta testing. Subsequently Sony will enable all version support after successful completion of beta testing.

But this time Sony is confident that they won't block this feature, and that they have an alternative to block the security threats.

An inside source also says Sony's firmware upgrade during the release of PlayStation 4 will re-enable the other OS support in PlayStation 3 as well. So it's good news for PlayStation 3 owners too after suffering for a couple of years. Moreover it's believed to be a gamble to boost PlayStation 4 sales.

If RVU support is coming for the PS3 and Webkit is to get accelerated (GPU) compositing then Sony likely has an answer that would allow Other OS Linux for the PS3.

Job posting and posts indicating the PS3 and other platforms will be using WebMAF and Playready DRM.

PS3 (likely not a production firmware) supporting RVU in 2010

Job postings "Heavy single page HTML5 media apps"

More job postings for HTML5 apps using OpenGL or similar

If you look at the PlayStation Store using HTML5 and the PS4 screenshots of the social features, they appear similar. My speculation in late 2010 was that the PS3 would eventually have a browser (HTML5) desktop, and of course future game consoles like the PS4 would too. To this point the PS3 only partially supports a browser desktop, and the last part, OpenGL ES support for the XMB to support Cairo (it currently uses XML with OpenVG, which is a subset of Cairo that does not require OpenGL ES), is still to come, likely with webkit GPU acceleration. RVU uses HTML5 for the menu UI, and XTV will likely use HTML5 and Java, or Java and XHTML 4.01 (without JavaScript, using XML supported by OpenVG).

HDMI CEC may be used to control the Tru2way cable box connected to the Xbox 720 or PS4 HDMI IN port. Via RVU over the home network the control is via a new DLNA standard.

http://forum.beyond3d.com/showpost.php?p=1716237&postcount=968 said: This is the HDMI CEC standard: http://en.wikipedia.org/wiki/HDMI#CEC

The most interesting parts IMO are the tuner controls; I guess that means that the Xbox ought to be able to tell the cable device to switch to X channel. There is also one touch play, one touch record, volume, timed record etc. I would say that an Xbox with inline HDMI (HDMI pass-thru) ought to be able to tell the cable box to record X program, or switch to Y channel at a set time and record X program, and directly play any stored information on the device. I don't think there are too many cable companies/satellite companies, so as a standard it ought to be workable with the most common dozen or so devices.

Same applies to the PS4.
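For anyone wondering what "telling the cable box to switch channel" looks like on the wire, here is a minimal sketch of how CEC messages are laid out: a header byte (4-bit initiator, 4-bit destination), an opcode, then operands. The opcode and address values are from my reading of the CEC spec and should be checked against it; the helper names (`cec_frame`, `one_touch_play`, `channel_up`) are made up for illustration, and nothing here talks to real hardware.

```python
from enum import IntEnum


class LogicalAddress(IntEnum):
    # 4-bit CEC logical addresses (subset)
    TV = 0x0
    RECORDING_1 = 0x1
    TUNER_1 = 0x3
    PLAYBACK_1 = 0x4
    BROADCAST = 0xF


class Opcode(IntEnum):
    # CEC opcodes (subset)
    IMAGE_VIEW_ON = 0x04
    STANDBY = 0x36
    USER_CONTROL_PRESSED = 0x44
    ACTIVE_SOURCE = 0x82


UI_CHANNEL_UP = 0x30  # UI command code carried by USER_CONTROL_PRESSED


def cec_frame(initiator, destination, opcode, operands=b""):
    """Build the raw bytes of one CEC message (hypothetical helper)."""
    for addr in (initiator, destination):
        if not 0 <= int(addr) <= 0xF:
            raise ValueError("logical addresses are 4 bits (0-15)")
    header = (int(initiator) << 4) | int(destination)
    return bytes([header, int(opcode)]) + bytes(operands)


def one_touch_play(initiator, physical_address):
    """One Touch Play: wake the TV, then announce ourselves as the source."""
    return [
        cec_frame(initiator, LogicalAddress.TV, Opcode.IMAGE_VIEW_ON),
        cec_frame(initiator, LogicalAddress.BROADCAST, Opcode.ACTIVE_SOURCE,
                  physical_address.to_bytes(2, "big")),
    ]


def channel_up(initiator, tuner):
    """Ask a tuner (e.g. a cable box) to step up one channel."""
    return cec_frame(initiator, tuner, Opcode.USER_CONTROL_PRESSED,
                     [UI_CHANNEL_UP])


if __name__ == "__main__":
    for frame in one_touch_play(LogicalAddress.PLAYBACK_1, 0x1000):
        print(frame.hex(" "))
    print(channel_up(LogicalAddress.PLAYBACK_1, LogicalAddress.TUNER_1).hex(" "))
```

A console sitting inline on HDMI would send frames like these to the cable box; whether a given box honors them depends on which CEC features the vendor implemented.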

__________________

Version 2.0

The HDMI Forum is working on the HDMI 2.0 specification.[151][152] In a 2012 CES press release HDMI Licensing, LLC stated that the expected release date for the next version of HDMI was the second half of 2012 and that important improvements needed for HDMI include increased bandwidth to allow for higher resolutions and broader video timing support.[153] Longer term goals for HDMI include better support for mobile devices and improved control functions.[153]

On January 8, 2013, HDMI Licensing, LLC announced that the next HDMI version is being worked on by the 83 members of the HDMI Forum and that it is expected to be released in the first half of 2013.[12][13][14]

Based on HDMI Forum meetings it is expected that HDMI 2.0 will increase the maximum TMDS per channel throughput from 3.4 Gbit/s to 6 Gbit/s which would allow a maximum total TMDS throughput of 18 Gbit/s.[154][155] This will allow HDMI 2.0 to support 4K resolution at 60 frames per second (fps).[154] Other features that are expected for HDMI 2.0 include support for 4:2:0 chroma subsampling, support for 25 fps 3D formats, improved 3D capability, support for more than 8 channels of audio, support for the HE-AAC and DRA audio standards, dynamic auto lip-sync, and additional CEC functions.[154] The Sony PlayStation 4 will utilize this standard.
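The bandwidth numbers in that quote can be sanity-checked with a few lines. This sketch assumes three TMDS data channels, 8b/10b TMDS coding, 24 bits per pixel, and the usual 4400 x 2250 total raster (active plus blanking) for 3840 x 2160; those timing totals are not from the quote and are my assumption.

```python
TMDS_CHANNELS = 3  # HDMI carries video on three TMDS data channels


def total_tmds_gbps(per_channel_gbps):
    """Total TMDS throughput for a given per-channel rate."""
    return TMDS_CHANNELS * per_channel_gbps


def required_tmds_gbps(h_total, v_total, fps, bits_per_pixel=24):
    """TMDS rate needed for a video mode, including blanking and 8b/10b overhead."""
    pixel_clock_hz = h_total * v_total * fps
    data_bps = pixel_clock_hz * bits_per_pixel
    return data_bps * 10 / 8 / 1e9


def fits(required_gbps, per_channel_gbps):
    """True if the mode fits within the link's total TMDS throughput."""
    return required_gbps <= total_tmds_gbps(per_channel_gbps)


if __name__ == "__main__":
    uhd60 = required_tmds_gbps(4400, 2250, 60)  # ASSUMED raster totals
    uhd30 = required_tmds_gbps(4400, 2250, 30)
    print(f"4K60 needs {uhd60:.2f} Gbit/s, 4K30 needs {uhd30:.2f} Gbit/s")
    print(f"3.4 Gbit/s per channel: 4K30 {fits(uhd30, 3.4)}, 4K60 {fits(uhd60, 3.4)}")
    print(f"6.0 Gbit/s per channel: 4K60 {fits(uhd60, 6.0)}")
```

Under those assumptions 4K at 60 fps needs about 17.82 Gbit/s, which is why the jump from 3.4 to 6 Gbit/s per channel (18 Gbit/s total) is what makes it possible.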

Provided an implementation for Tru2Way APIs for HDMI CEC messages. This was in 2009, and plans at that time were to support CEC on Tru2way boxes. Panasonic and Comcast are working to integrate HDMI-CEC technology with tru2way enabled set-top boxes.

Samsung Tru2way set top boxes for Cable and Satellite without CEC.

Cisco Tru2way set top box with CEC support. So some are supporting CEC over HDMI.

Turn your TV into a smart TV with a $100 USB stick that plugs into the HDMI and USB port on a TV.

Product INFO sheets started listing Tru2way boxes Oct-Nov 2012.

OpenCable OCAP 1.2.2 Reference Implementation Release Candidate-F Release Notes 9/2012 mention testing with the PS3

Linux build environment for OCAP. Includes Cairo-Pango, XML2, jpeg, Java and more, i.e. it's an XHTML + Java implementation that is already in the PS3 (except for Pango).

jeff_rigby said: ...EVERY SINGLE THING HE SAYS...

Jeff, I love your posts.

I have no bloody idea what any of them ever mean but I still believe them, every single word of them!

jeff_rigby

Banned

RVU Ported to Sony PS3 & Bravia TVs, February 05, 2013. (Will be ported.)

The advent of RVU soft clients not only on new Sony BRAVIA TVs but also on the estimated 16 million active connected PlayStation 3s in the US represents a significant expansion in the number of TVs that can use an RVU software client.

Strategically, the relatively fast porting of the RVU client to new platforms emphasizes a key advantage of simple, remotely-rendered remote user interface (RUI) standards such as RVU: moving complexity from the client to the server (Picture based Menu) makes it easier to port the client to a range of hardware platforms.

The other class of remote UI being discussed in the industry is one based on HTML5, which would rely more heavily on the local graphics and rendering capabilities in the clients. HTML5-based remote UIs are being discussed by a wide range of service providers and pay-TV software companies. It is too early to foresee how RVU and HTML5 will propagate through the market, whether one standard will supersede the other, or whether any degree of cross-compatibility will be achieved between them.

jeff_rigby

Banned

ARM Trustzone in the PS4:

http://infocenter.arm.com/help/index.jsp?topic=/com.arm.doc.prd29-genc-009492c/ch06s03s02.html said: The security of the system is achieved by partitioning all of the SoC's hardware and software resources so that they exist in one of two worlds - the Secure world for the security subsystem, and the Normal world for everything else. Hardware logic present in the TrustZone-enabled AMBA3 AXI bus fabric ensures that no Secure world resources can be accessed by the Normal world components, enabling a strong security perimeter to be built between the two. A design that places the sensitive resources in the Secure world, and implements robust software running on the secure processor cores, can protect almost any asset against many of the possible attacks, including those which are normally difficult to secure, such as passwords entered using a keyboard or touch-screen.

I.E. There are two SoCs in one, with an ARM low power TrustZone separate from the high performance game console: an ARM system for secure, low power IPTV and x86/GPU for gaming/performance. Some fabric must exist to manage threads across the ARM bus and x86 bus, as they are different and incompatible...this is why in some use cases we can have two systems in one.

The second aspect of the TrustZone hardware architecture is the extensions that have been implemented in some of the ARM processor cores. These additions enable a single physical processor core to safely and efficiently execute code from both the Normal world and the Secure world in a time-sliced fashion. This removes the need for a dedicated security processor core, which saves silicon area and power, and allows high performance security software to run alongside the Normal world operating environment.

Which means the ARM TrustZone processor can also be used for some of the work in the "real world" game console side.
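As a toy illustration of the two ideas in those ARM quotes (the bus fabric refusing Normal world access to Secure resources, and one core time-slicing between worlds), here is a small model. It is only a sketch of the concept, not how the hardware or any Sony/AMD design works, and the resource names (`drm_keys`, `video_decoder`, `game_ram`) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class World(Enum):
    NORMAL = "normal"
    SECURE = "secure"


@dataclass(frozen=True)
class Resource:
    name: str
    secure: bool  # True if the resource lives in the Secure world


class BusFabric:
    """Toy stand-in for the bus logic that checks every access."""

    def __init__(self, resources):
        self._resources = {r.name: r for r in resources}

    def access(self, world, name):
        resource = self._resources[name]
        if resource.secure and world is not World.SECURE:
            raise PermissionError(f"Normal world may not touch {name}")
        return f"{world.value} world accessed {name}"


class Core:
    """One physical core that is time-sliced between the two worlds."""

    def __init__(self, fabric):
        self.fabric = fabric
        self.world = World.NORMAL

    def switch(self, world):
        # Real hardware switches through a secure monitor; here it is a flag.
        self.world = world

    def read(self, name):
        return self.fabric.access(self.world, name)


if __name__ == "__main__":
    fabric = BusFabric([
        Resource("drm_keys", secure=True),       # hypothetical names
        Resource("video_decoder", secure=True),
        Resource("game_ram", secure=False),
    ])
    core = Core(fabric)
    print(core.read("game_ram"))
    try:
        core.read("drm_keys")
    except PermissionError as err:
        print("blocked:", err)
    core.switch(World.SECURE)
    print(core.read("drm_keys"))
```

The same core can do ordinary work and then switch worlds to handle the protected stream, which is the point of the "time-sliced" remark in the second quote.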

Three posts so far describing this and no one has questions? BY3D is still weeks behind NeoGAF in understanding the system. This applies to the Xbox 720 also!

The Cortex A5 used by AMD as a TrustZone is a package with up to 4 CPUs with instruction and data cache and a system bus.

http://www.arm.com/products/processors/cortex-a/cortex-a5.php said:The ARM Cortex-A5 processor is the smallest, lowest cost and very energy efficient applications processor capable of delivering the internet to the widest possible range of devices: from low-cost entry-level smartphones, feature phones and smart mobile devices, to pervasive embedded, consumer and industrial devices.

http://en.wikipedia.org/wiki/Advanced_Microcontroller_Bus_Architecture said:The Advanced Microcontroller Bus Architecture (AMBA) is used as the on-chip bus in system-on-a-chip (SoC) designs. This is useful for major project in VLSI stream. Since its inception, the scope of AMBA has gone far beyond microcontroller devices, and is now widely used on a range of ASIC and SoC parts including applications processors used in modern portable mobile devices like smartphones.

The AMBA protocol is an open standard, on-chip interconnect specification for the connection and management of functional blocks in a System-on-Chip (SoC). It facilitates right-first-time development of multi-processor designs with large numbers of controllers and peripherals.

Advanced eXtensible Interface (AXI)

AXI, the third generation of AMBA interface defined in the AMBA 3 specification, is targeted at high performance, high clock frequency system designs and includes features which make it very suitable for high speed sub-micrometer interconnect:

AMBA products

A family of synthesizable intellectual property (IP) cores AMBA Products licensable from ARM Limited that implement a digital highway in a SoC for the efficient moving and storing of data using the AMBA protocol specifications. The AMBA family includes AMBA Network Interconnect (NIC-301), SDRAM and FLASH memory controllers (DMC-34x, SMC-35x), DMA controllers (DMA-230, DMA-330), level 2 cache controllers (L2C-310), etc.

Some manufacturers utilize AMBA buses for non-ARM designs. As an example Infineon uses an AMBA bus for the ADM5120 SoC based on the MIPS architecture.

Search for Google TV & Arm Cortex A5 gives this: 06 tv ip box,Brand new 2012,ARM Cortex-A5 android Google tv

android TV Box, 1.2GHz ARM Cortex A5, tv box up to

jeff_rigby

Banned

@Jeff,

I'm really trying to follow your greatly documented news and analyses, but I - and I believe I speak for many more - have no clue what you want to say. So, what does that all mean in terms of real life applications / user cases?

Something like Google TV is coming to both consoles and will use an ARM processor/bus and accelerators so that they can get really low power (under 10 watts) and secure IPTV. How much is running on the ARM bus is unknown. How they are going to integrate the ARM and x86 buses and CPU code is unknown. The OS just got interesting <grin>.

As to real life applications/use cases, I think I have gone over this. Take Google TV features + cloud + RVU-DLNA+DTCP-IP + XTV + ATSC 2.0 + OCAP applications + HTML5 + HTML5 browser desktop + voice and gesture recognition + Skype + IPTV and augmented reality, add some imagination, and think "own the living room".

phosphor112

Banned

Can the OS run on the ARM processor as well (at the same time), in the end freeing up the Jag CPUs for games?

BlockheadBrown

Member

This would all be very nice. Let's hope it happens, Jeff!

jeff_rigby

Banned

Can the OS run on the ARM processor as well (at the same time) in the end freeing up the Jag CPU's for games?

Likely the ARM processor is handling the Bluetooth for the Sixaxis controller; maybe the Kinect-like gesture and voice control runs via accelerators connected to the ARM bus, who knows? If it makes sense for security or low power, then I'd guess the ARM low-power subsystem is being used.

jeff_rigby

Banned

@Jeff, thanks - this I can understand

For those that don't understand anything beyond Google TV-like features: all the initials are about STANDARDS that require a software stack that starts with a "networked" Blu-ray player that has an HTML5 browser and supports DLNA with DTCP-IP security (think PS3 as a "networked" Blu-ray player). Think of a PS4 as a PS3 on steroids with a more secure DRM called "TrustZone" that allows for low-power IPTV, so Google TV is practical.

Think no waiting for applications to load, more memory, and a start from the software standards the PS3 has been getting in updates since 2010, with more still to come this year. From what I can gather it's 95% in place, but ATSC 2.0 won't get Candidate status till about March of this year. RVU is also reaching Candidate status, DASH IPTV with DRM sponsored by Google/Microsoft/Netflix is also nearing Candidate status, and HEVC (H.265) has been published. These standards build on the existing software stack, leveraging HTML5 and native libraries supporting the WebKit browser as well as DLNA.

Search for Google TV & Arm Cortex A5 gives this: 06 tv ip box,Brand new 2012,ARM Cortex-A5 android Google tv

android TV Box, 1.2GHz ARM Cortex A5, tv box up to

Mini Google TV Box Android 4.0 Cortex A5 Wifi All-in-One Entertainment Box for HD Media Player Comp... $48.00

Features

2.4G wireless keyboard and mouse: Support, 2.4G air flying squirrel: Support.

Architecture: HD Video Decoder (1080P@60Fps); HD Video Encoder (1080P@30Fps); Five Layers for Display and Graphic Process Unit; PMU (Power Manage Unit); HDMI 1.4; Standard HDMI Interface.

Audio: Music Player MP3, WMA, WAV, OGG, AAC, FLAC, 3GP.

Color: Black.

Core Type: TCC8925.

DC-IN: USE Micro USB.

DLNA: DIGITAL LIVING NETWORK ALLIANCE.

Files Manager: R.E. Manager.

Game of Android: APK of Android.

HTML5: Support.

Input Method: Android Keyboard.

Input Method2: 2.4G Mouse.

Item size: 84*24*15mm.

Language: Support Multi Languages.

Main Frequency: 1.0 Ghz (MAX).

Memory & Flash & POWER: Memory: DDR3: 512M(16bit), Nand Flash: Internal 4G Flash, Power consumption: Main Unit + 2.4G sender(Mouse) less than 700mA@5V.

Net weight: 150g.

Online stream playback: Youtube & YOUKU.

Operation System: OS: Android4.0(Later), Linux Kernel: 2.6.35.7+.

Others: 3D GPU inside (Mali-400).

Package Include: 1 x Android Mini PC, 1 x USB cable, 1 x User manual.

Picture Viewer: Gallery.

Pre-Load Applications: Adobe Flash Player: V11.1 or Later.

TXT editor: IREAD.

Type: CORTEX-A5 (45nm CMOS).

USB Socket: Micro USB(OTG)&USB-4P (HOST).

Video: Movie Player MPEG2, MPEG4, AVI, WMV, MKV, MOV, RM, RMVB.

Web Browser: Browser.

WIFI Mobile DISPLAY: Support wifi connect with Mobile display.

WiFi: IEEE 802.11 b/g/n(AR6103).

WPS: Wireless Provisioning Services.
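For anyone checking the math: the listing's power rating of "less than 700mA@5V" is where a 3.5 watt figure comes from (P = V × I). Here is a minimal sketch of that arithmetic in Python. The PS3 numbers (61 watts idle, 90 watts as the upper bound for IPTV streaming) are simply the figures quoted in this thread, not measurements, and the variable and function names are made up for illustration.

```python
# Power draw from the listing's rating: "less than 700mA@5V".
# All inputs are figures quoted in the thread, not measurements.

def watts(volts: float, amps: float) -> float:
    """Electrical power: P = V * I."""
    return volts * amps

box_w = watts(5.0, 0.700)   # upper bound for the Android box plus 2.4G sender
ps3_idle_w = 61.0           # PS3 idle figure quoted in the thread
ps3_stream_w = 90.0         # PS3 IPTV streaming upper bound quoted in the thread

idle_ratio = ps3_idle_w / box_w
stream_ratio = ps3_stream_w / box_w

print(f"Android box: at most {box_w:.1f} W")
print(f"PS3 idle draws roughly {idle_ratio:.1f}x as much")
print(f"PS3 streaming draws up to roughly {stream_ratio:.1f}x as much")
```

Since 700 mA is stated as an upper bound, the real gap could be larger than these ratios suggest.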

Compare this (3.5 watts) with the PS3 using 61 watts idle and less than 90 watts IPTV streaming.

Mali-400 MP

The world's first OpenGL® ES 2.0 conformant multi-core GPU provides 2D and 3D acceleration with performance scalable up to 1080p resolutions, while maintaining ARM® leadership on power and bandwidth efficiency.

With support for 2D vector graphics through OpenVG 1.1 and 3D graphics through OpenGL ES 1.1 and 2.0, the Mali-400 MP provides a complete graphics acceleration platform, based on open standards.

Scalable from 1 to 4 cores, the Mali-400 MP enables a wide range of different use cases, from mobile user interfaces up to smartbooks, HDTV & mobile gaming, to be addressed with a single IP. One single driver stack for all multi-core configurations simplifies application porting, system integration and maintenance. Multicore scheduling and performance scaling are fully handled within the graphics system, with no special considerations required from the application developer.

The provision of an industry standard AMBA® AXI interface makes integration of Mali-400 MP into system-on-chip designs straight-forward, and also provides a well-defined interface for connecting to other bus architectures. ARM is in the unique position to provide an optimized compute platform that uses ARM Cortex processors, Mali GPU and ARM CoreLink CCI-400 technologies. This heterogeneous approach means that a range of applications is more efficiently processed when shared between the CPU and the GPU. This makes full use of the inherent capabilities of each system component to achieve the best possible balance of power and performance.

AMD Strengthens Security Solutions through Technology Partnership with ARM