NoirVisage

Banned

wonderful thread.

It appears that it's possible to have 2 gigs of Wide I/O inside the SOC now, but 4 gigs would have to wait and/or be more expensive. The following needs to be understood completely: the quad channel DDR3 or DDR4 "don't think about it" point. It's not going to be in a future design for the same reason the PS3 Cell @ 40nm can't be easily scaled to 32nm: the XDR interface is too large, just like 4 DDR channels in an AMD Fusion would be. The next process node shrink would have issues, and that should be part of Sony's and Microsoft's long range plans. A custom memory interface is an absolute MUST!

Microsoft's lawyers confirmed it was real. It's old but it's not fake. This is the plan they wanted to take back in 2010. And I've read a B3D rumor that the Xbox next gen spec is finalized. Undoubtedly an upgrade from this paper, well, hopefully.

I think they had a different game plan than Sony. MS really had to think ahead with the hw, since they are attempting to do a ton more things than play games and movies. Sony was waiting up until last year and now to start looking at what chips are on the market and what they can build for a price, like vita. Cramming, it takes a year to design a console. And then production and QA takes several months. Supposedly PS4 design spec is still up in the air, I wonder if they do have two completely different systems - one mid range and one high end ? Not PS3 redesign but two possible versions of next gen. But some claim to know the latest SDKs now have 4GB GDDR5. Only 2GB in total against a possible 8GB MS system would be a big weakness. Sony should be finalizing soon, if they want to launch in 2013.

http://www.amdzone.com/phpbb3/viewtopic.php?f=532&t=139005&start=50#p218132 said: DDR4 is not much faster than DDR3 ... it all depends on latencies (timings). Also DDR4 tends to consume more relative power for the higher "speeds" ... better would be for AMD to launch a LR (load reduced) non-registered non-ECC high speed standard!... just a crazy idea!... but since AMD is now in the DRAM business also, it could do better with what is already there.

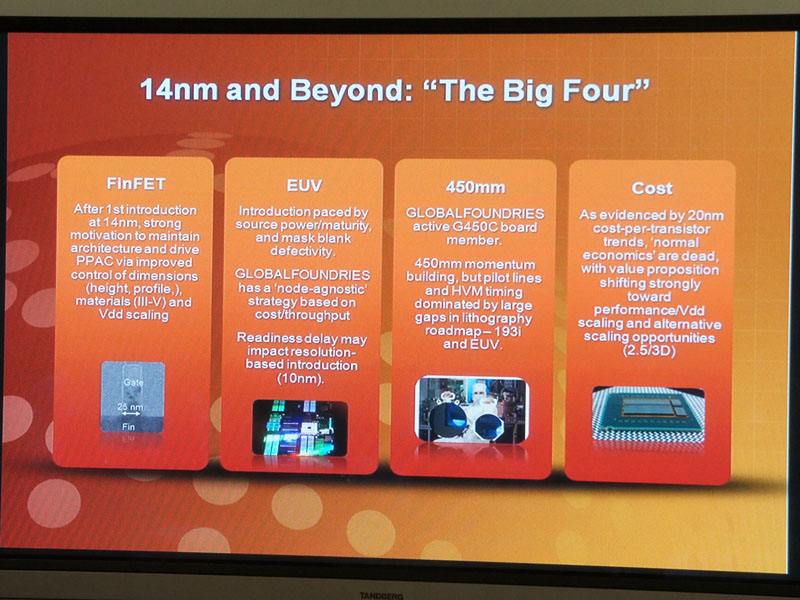

About a quad channel APU, just DON'T think about it... DRAM DIMM interfaces are absolutely HUGE and scale terribly bad with smaller process nodes ... the necessary lane layout would mean a much larger chip than necessary, perhaps curtailing the possibility of ULV 17W bins. Also it defeats the purpose for mobile, entry to mainstream desktop markets...

The better way to deal with the memory bandwidth problem of APUs is to have TSV eDRAM... IBM could help in the design ( i think AMD already has a license for the macro designs) and also the Wide I/O DRAM interface of which consortium AMD is part, all fits like a charm.

So after Kaveri we can have an APU with 512MB to 1GB of TSV DRAM on the package, no POPs no interposers, cooling solutions already exist, so the "execution" parts don't have to lose much (if anything).

heck! it can also have an interposer on package, like Haswell will have, with 512MB to 1GB of DRAM

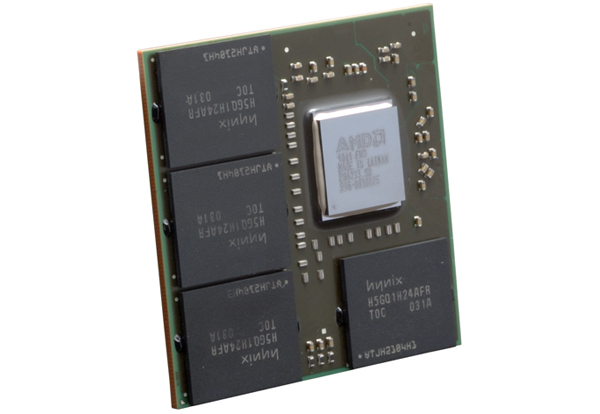

Heck, this is nothing new, it was around well before any Haswell, only it could be much better... and instead of a Radeon it will be an APU on that package... and by the time it is necessary it can have 2 DRAM chips instead of 4, because TSV is starting to be big among DRAM IDMs, and those chips can have up to 8 stacked dies (4Gbit/die each means 4GB/chip, or 8GB total for 2 DRAM chips on package), meaning 1 to 2GB total will be relatively inexpensive... heck! 4 DRAM dies + 1 controller (an LR type) and it will be 2GB in one single chip (with up to 128bit interface on the Wide I/O standard)... for the GPGPU on the APU!..
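The stacked-die numbers in the quote above are easy to sanity check. Here is a minimal sketch of the arithmetic; the function name and parameters are made up for illustration, and it only converts gigabits per die into gigabytes per package:

```python
def stack_capacity_gb(dies_per_chip: int, gbit_per_die: int, chips: int = 1) -> float:
    """Total capacity in gigabytes for `chips` stacked DRAM packages."""
    total_gbit = dies_per_chip * gbit_per_die * chips
    return total_gbit / 8  # 8 bits per byte


if __name__ == "__main__":
    print(stack_capacity_gb(8, 4))     # one 8-high stack of 4Gbit dies
    print(stack_capacity_gb(8, 4, 2))  # two such stacks on the package
    print(stack_capacity_gb(4, 4))     # one 4-high stack of 4Gbit dies
```

That lines up with the figures in the quote: 4GB per 8-high chip, 8GB for two of them, and 2GB for a single 4-die stack.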

and what happened when you woke up?

Sigh....try to keep up.

After I asked my question, steviep said there is not 12 GBs of DDR4 in the devkit. So I asked him which one is it, is there not 12GBs of ram or is there 12 GBs of GDDR5 or something. He then smugly/strangely replied with something about there is no way you are getting 12GBs in a standard built mid-range pc, which is irrelevant and doesn't provide any clarity at all. As I explained dev-kits are a different beast entirely.

And you are way off-base about PCs. For one, GDDR isn't commercially available to consumers, and secondly, if you want to get technical, you can easily stick two 4GB cards in a high end PC to give you 8GBs or more if you desire. If you want 12GB, Nvidia does have special cards but they're more for professionals. So um yeah, there are high-end PCs with 8GBs of GDDR5.

I probably shouldn't have asked stevie for info anyway cause welll........you see his tag

Well,

GDDR5 is not really costly at all. I have the chart with component breakdown from the AMD briefing and can post it if you want. On amount of chips, AMD/Nvidia/Intel all have products with 8GBs GDDR5 on PCI-E sized cards coming late 2012/early 2013, and while I wouldn't personally bet on 8GB being the final number, for a Q3/4 2013 console it is hardly the unicorn you make it out to be. I'd say 6GBs GDDR5 with a 2GB pool of DDR is a perfectly plausible estimate, especially given the rather modest specs the document describes.

Geesh, Micron is making custom and semi-custom memory for next generation game consoles; will someone please post this on B3D and read and understand it here!!!!

Ryoku said: Those are high-end dual-GPU cards, sir. Those are incredibly power-hungry and produce a lot of heat. Oh yeah, they're also expensive as shit. So yeah, there are high-end PCs with 8GB of GDDR5 RAM. I think I forgot to make it clear earlier that I was talking about the vast majority of high-end PCs. Those Nvidia cards you are mentioning aren't even built for gaming, so I don't know why you brought those up. Yes, there are 8GB GDDR5 in really high-end PCs. Do you think that makes it any more likely that the devkits, let alone the actual console itself, will have 12/8GB GDDR5?

StevieP's response wasn't necessarily "smug". I took it as more of "You clearly don't see this in even a standard mid-range PC, why would you think it'll be there in the devkit for a console?" <--- I don't want to put words into his mouth, and I'd appreciate if he could clear it up.

GDDR5 has a density of 256MB per chip. In order to get your grand total of 8GB GDDR5, you would need 32 chips on a single board. Even with 6GB of GDDR5, that's 24 chips. With twice the density, that's 16 chips and 12 chips, respectively, which is manageable, I assume (certainly more so than 24 or 32). DDR3 fits that bill with 512MB chips.
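For anyone who wants to check the chip counts above, here is a minimal sketch. The helper name is hypothetical; the 256MB and 512MB per-chip densities are the ones quoted in the post, not independently sourced figures:

```python
import math


def chips_needed(total_gb: float, mb_per_chip: int) -> int:
    """Number of memory chips required to reach total_gb at a given per-chip density."""
    return math.ceil(total_gb * 1024 / mb_per_chip)


if __name__ == "__main__":
    for total_gb in (8, 6):
        for density_mb in (256, 512):
            count = chips_needed(total_gb, density_mb)
            print(f"{total_gb}GB at {density_mb}MB/chip -> {count} chips")
```

Doubling the per-chip density halves the chip count, which is the whole argument about board space.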

Don't get me wrong--I still expect there to be a certain amount of GDDR5 in there--just that the majority will be DDR3 or DDR4 due to a very likely high-footprint OS and background tasks (we're talking about a media hub, right?).

Please provide the chart if that isn't a hassle.

Regarding the chip count..... Those PCI-E sized cards have nothing on them besides the GPU itself. Console PCBs have much more than just a GPU to worry about.

Sony Invents Optical Communication Glasses for Work & Play

I guess glasses are going to be the big thing next gen for Sony, MS & Google.

jeff_rigby?

Isn't he the guy that used to prophesy about the PS3/4, and it turned out he was crazy?

Tom Hanks is always awesome.

2009 prototype

He was dismissing it on Twitter. Must be pretty embarrassed now.

I already have to wear glasses, so this idea can go to hell for all I care. I damn sure don't want gaming glasses, lol.

Yeah, Microsoft actively trying to remove the doc must have been the reason why he wrote that article. But still, this whole thing is so funny.

I doubt he's embarrassed. Most of the article has him still trying to disprove it, albeit now it's dismissive of Microsoft's plans rather than showing it up as a poor man's 50+ page fake.

Don't read too much into that. The doc is old.

I really don't know anything about hardware specs, so are these specs in the document good for next gen or is it just an incremental step?

Going by what's coming first;

Read the document. What are the most important things I should take out of it?

Feel free to giggle at my tag all you'd like. The kits are literally similar to standard PCs, even if your source is only web leaks. The type of memory you'd find in amounts of 12GB (or 8GB) in PCs at the moment is?

Or, to put it in different terms: 8gb of gddr5 ain't happening. Ryoku has more correctly conveyed what I was trying to say but was too lazy to type due to crappy smartphone touch typing.

Is there any indication of upcoming 28nm shrink for PS3 gpu/cpu?

My opinion is the Oban SOC that started production Dec 2011 is both the Xbox361 and PS3.5 SOC and it's at 32 nm with 1 gig of Wide I/O stacked RAM in the SOC.

This is my opinion and hindsight based on the Digitimes rumor, Xbox Code10 project, Xbox361 in the Xbox 720 powerpoint as well as Microsoft-sony.com and sony-microsoft.com. Also when you start to look at how an HDMI pass-thru and standby mode/low power mode Xbox 360 and PS3 could be made, it's obvious, at least to me. You either do a massive redesign or use existing AMD building blocks and enough PPC and SPUs to make emulation possible for both on the same SOC.

One chip for both consoles, with enough horsepower to emulate both at the same levels as the original consoles [they would probably not want to piss off 60+mil userbases from both camps by providing games that perform way better on these redesigns...]?

I'm skeptical.

bgassassin said: I think Oban has a better chance of being for Wii U than PS360 remodels.

Very possible. Oban is a Japanese name for a blank oblong gold coin and that would work for WiiU. In any case what I described is coming this holiday season and the news reports have Oban being made for Microsoft, not the Wii.

Graphics Horse said: That's politer than how I'd have put it.

If you look into what would be required to produce a refreshed PS3 or Xbox360 with HDMI pass-thru and low power modes it rapidly becomes evident that using AMD building blocks and emulating a PS3 or Xbox 360 is the more practical course. Key here is stacked memory and AMD building blocks plus IBM TSV substrate.

A bit OT:

The more PC-like these component configurations appear to be, the more I begin to wonder: why bother purchasing any of these next gen consoles, as currently slated, when a powerful PC should logically be able to emulate them with somewhat greater ease than ever before?

Yup.

And it's going to be a PS3.5 not PS3. Check out discussions on a PS3.5. It has to have more than 512 meg for the emulation overhead so most likely 2 512 meg Wide I/O stacked memory packages are in the SOC. Anything not used by emulation can be used by Sony for additional OS features like Cross game chat.

The question is what will Sony support given they don't want to fragment the PS3 user base. According to Digitimes it has a Kinect interface for the PS3.5 and of course the Xbox 361.

http://kotaku.com/5817874/report-the-playstation-4-will-have-kinect+style-motion-controls

The same month this rumor came out, Microsoft reserved the domain names sony-microsoft.com and microsoft-sony.com.

I find it very hard to believe most of what you say, I don't know why as it's very detailed and to the point, but you are telling me there is going to be a PS3.5 with Kinect? This late into the generation?

Where do you get your information from?

DieH@rd said: This oban however will have stacked ram on it, and that will not be cheap to implement.

It's more expensive in small quantities as it's currently CUSTOM memory, but in quantity it's cheaper, faster, uses less power and produces less heat. Micron is making CUSTOM and semi-custom memory for game consoles. With both Sony and Microsoft using it there is an economy of scale.

The only thing that can somehow make me believe in this oban x361/ps3.5 rumor is that interview with a Sony exec, in which he stated that the company is prepared to spend around 1 billion for chip R&D.

I don't see how a PS4 APU [with more or less standard design, one newish CPU and GPU smacked together] can be worth so much money. This oban however will have stacked ram on it, and that will not be cheap to implement.

Will Epic be satisfied with 4x-8x power of 360? I think not. Is that even 2.5 teraflops that they want as a minimum?

Now you are asking questions I can't answer. Game side would have to have upscaling to either 720P or 1080P to allow for easy windows and overlay. That might not be noticed by the average gamer. I'd expect better Kinect (more accurate) and better voice recognition (more accurate), and most likely accessories will be cheaper for the Xbox 361 than for Xbox 360.

A lot of speculation and guesswork maybe; don't get me wrong, you could be very right, but one has to question where you are gathering all this information from.

I'm hoping you're wrong to be honest, as I'd prefer the next gen much sooner and these machines would likely push it back.

So, with the 361, what will it have over the 360? What features will HDMI pass thru give us and will I be playing 360 games with better graphics?

They recently said 1 teraflop is the minimum for full featured UE4.

Considering all the hints of a 1 teraflop machine by insiders on Neogaf and beyond3d, it's probably 4x. 8x 360 is actually more than 2.5 teraflops and is very optimistic.
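What "4x" or "8x a 360" works out to in teraflops depends entirely on which baseline you pick. A rough sketch follows; the baseline numbers are assumptions, not from this thread: about 240 GFLOPS is the figure often quoted for the 360's GPU, and about 115 GFLOPS for its CPU. Treat both as placeholders and plug in your own.

```python
# ASSUMED baselines (commonly quoted peak figures, not taken from this thread).
ASSUMED_GPU_GFLOPS = 240
ASSUMED_CPU_GFLOPS = 115


def scaled_tflops(multiplier: float, baseline_gflops: float) -> float:
    """Teraflops for a machine `multiplier` times faster than the baseline."""
    return multiplier * baseline_gflops / 1000


if __name__ == "__main__":
    gpu_only = ASSUMED_GPU_GFLOPS
    whole_system = ASSUMED_GPU_GFLOPS + ASSUMED_CPU_GFLOPS
    for mult in (4, 8):
        print(f"{mult}x GPU only:  {scaled_tflops(mult, gpu_only):.2f} TFLOPS")
        print(f"{mult}x CPU + GPU: {scaled_tflops(mult, whole_system):.2f} TFLOPS")
```

Under those assumed baselines, 4x the GPU alone lands right around 1 teraflop, while 8x only clears 2.5 teraflops if you count CPU and GPU together.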
