So there is a common misconception here on GAF (and probably elsewhere too) that the previous generation of consoles were some sort of supercomputers or powerhouses compared to their PC brethren, so I put together some simple performance numbers, for the GPUs specifically.

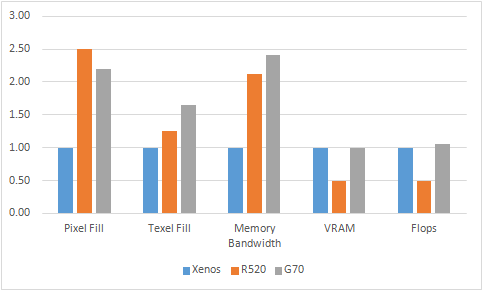

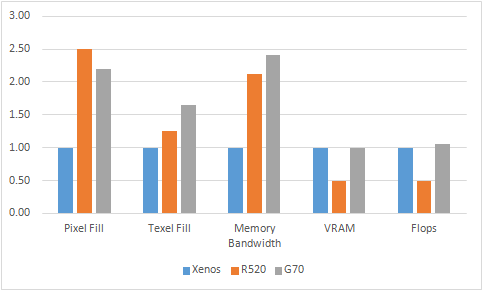

Keep in mind that this is a basic comparison and isn’t comprehensive. Also keep in mind that, because of architectural differences, the comparison can never be 100% like-for-like. With that out of the way, this is how the Xenos compared to a PC GPU from the same period. The graphs are normalized with Xenos as the baseline.
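If you want to redo the normalization with your own numbers, here is a minimal sketch of what "normalized with X as a baseline" means. The function name and the GPU labels are my own placeholders, and the values are made-up round numbers, not real specs for any GPU.

```python
def normalize_to_baseline(metrics, baseline):
    """Divide every GPU's value by the baseline GPU's value."""
    base = metrics[baseline]
    if base == 0:
        raise ValueError("baseline value must be non-zero")
    return {gpu: value / base for gpu, value in metrics.items()}


# Placeholder values for illustration only, NOT real specs.
example = {"baseline_gpu": 100.0, "other_gpu": 150.0}
normalized = normalize_to_baseline(example, "baseline_gpu")
print(normalized)
```

The baseline always comes out as 1.0, so every other bar in the graph reads directly as "this many times the baseline".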

So really, the Xenos wasn’t some monster. Sure, it had the Unified Shader Architecture and 10MB of EDRAM, but it wasn’t some technological leap in raw processing power. Keep in mind that for G70, I calculated the flops based on Nvidia’s documentation and not the BS figure of 400GFlops quoted for the RSX/G71.

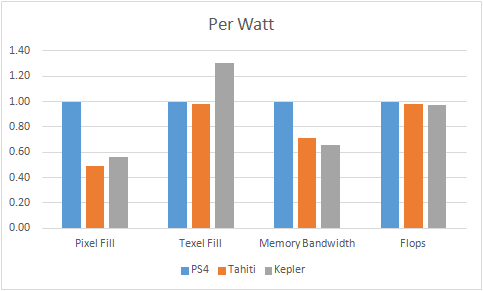

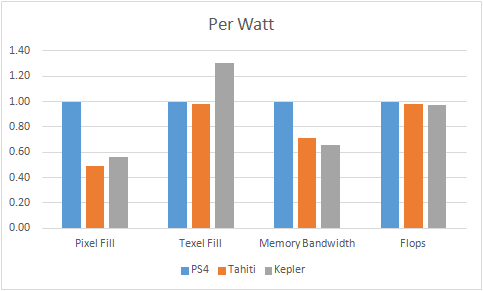

Now let’s take a look at the PS4 GPU. Again, the graphs are normalized with the PS4 GPU as the baseline.

So the overall picture isn’t much different from the previous generation. The two major areas where it’s lacking are texel fill rate and overall flops. Despite flops being a more usable metric now than in the previous cycle, the Titan isn’t really 2.5x as fast.

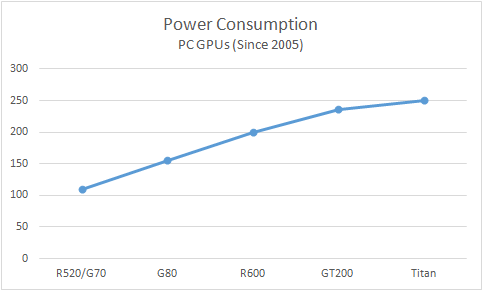

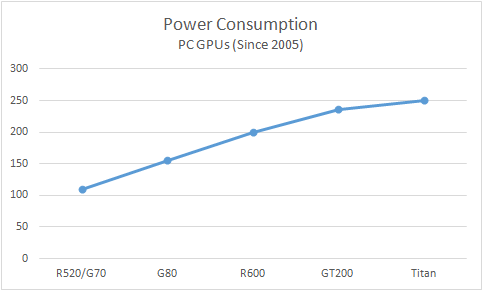

This comparison is purely in a vacuum. Let’s look at the TDP of PC GPUs since 2005.

(For the sake of simplicity, I haven’t charted every GPU. This is just to illustrate that the power consumption of PC GPUs has grown significantly over the years.)

In simple words, it’s a 2.5x increase since 2005. This is not counting anomalies such as the GT200 and some other GPUs that were closer to the 300W mark. Even though there aren’t accurate TDP numbers for Xenos/RSX out there, for the sake of discussion let’s consider them to be 75W. A similar increase would mean a roughly 190W console GPU!
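For anyone checking the arithmetic, here it is spelled out. Both inputs are the figures from this post (the assumed 75W for Xenos/RSX and the 2.5x growth read off the chart); the constant and function names are just mine.

```python
# Both inputs come from the post: 75 W is an ASSUMED figure for Xenos/RSX,
# and 2.5x is the approximate growth in PC GPU TDP since 2005.
ASSUMED_2005_CONSOLE_GPU_TDP_W = 75.0
PC_TDP_GROWTH_SINCE_2005 = 2.5


def scaled_tdp(base_watts, growth):
    """Project a TDP forward by applying the same growth factor."""
    return base_watts * growth


projected = scaled_tdp(ASSUMED_2005_CONSOLE_GPU_TDP_W, PC_TDP_GROWTH_SINCE_2005)
print(f"{projected:.1f} W")  # 187.5 W, i.e. roughly 190 W
```

Since the 75W starting point is a guess, the result is only a ballpark, not a measured number.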

But we know that things like that (an open and expanding standard), which work for PCs, don’t work in the console segment. So the consoles are severely limited by their TDP budgets. So let’s break down the same comparison, but with a per-watt metric.

Tables turned on the PC GPUs? Not really. This view only holds if the PC GPUs had similar TDP constraints, but it still gives you an idea of how Microsoft/Sony are maximizing raw power within their TDP budgets. It also highlights how inefficient Xenos was (from a TDP perspective), mainly because at that time there was more room in the TDP budget. Just look at which two GPUs are the top performers in the performance/watt category: http://www.techpowerup.com/reviews/NVIDIA/GeForce_GTX_Titan/28.html
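The per-watt view is the same normalization with one extra step: divide each metric by the GPU's TDP first, then normalize to the baseline. A rough sketch, assuming you have a raw metric and a TDP for each GPU; the names are hypothetical and the values are made-up placeholders, not real specs.

```python
def per_watt_normalized(metrics, tdp_watts, baseline):
    """Return each GPU's metric-per-watt relative to the baseline GPU."""
    per_watt = {gpu: metrics[gpu] / tdp_watts[gpu] for gpu in metrics}
    base = per_watt[baseline]
    return {gpu: value / base for gpu, value in per_watt.items()}


# Placeholder values for illustration only, NOT real specs.
raw_metric = {"console_like": 100.0, "pc_like": 250.0}
tdp = {"console_like": 50.0, "pc_like": 250.0}

relative = per_watt_normalized(raw_metric, tdp, "console_like")
print(relative)
```

In this made-up case the PC-like part is 2.5x as fast in raw terms but only half as good per watt, which is the kind of flip the per-watt graphs show.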

I’d also like to ask people who scoff at the 18CU GPU in the PS4: what GPU do you realistically think Microsoft/Sony should have gone with instead?

Feel free to criticize and add your own comparisons.
