mansoor1980

Gold Member

I remember DF mentioning it quite a few times.

Only at the very beginning of the generation, in a few select games. This was not a general trend at all.

This doesn't make it less stupid.

Because they were always benchmarks in previous generations.

Man, that 18 seconds is a far cry from how I understood Cerny's presentation...

(barely any difference, from what I see, both locked to 30fps???):

Yes. I can see faster graphics memory bandwidth, sustained system performance and 30% more compute units along with a full RDNA 2.0 feature set. All of this on top of 2 more TFLOPS.

Can you read past TF?

AMD GCN, RDNA, RDNA2 are all modular.

Mmm... not sure if I follow. RDNA1 doesn't have RT-accelerated hardware. You can dedicate the shader cores to compute intersections and so on, but the performance is horrible. NVIDIA enabled raytracing on the 1000 series and I believe not even the 1080 Ti could produce acceptable results. On RDNA2 it is part of the CUs. The TMUs are part of the CUs as well.

Why are people pretending like poorly-optimized cross gen ports are benchmarks for system performance?

Despite its 7% higher clocks the XB1 was still at a huge disadvantage. 16 ROPs on XB1 vs 32 on PS4, fewer ACEs, night and day difference in bandwidth etc. There was just no advantage at all on the GPU side for XB1.

From your logic an RTX 2080 would be faster than a 2080 Ti due to its faster clocks. This is obviously not the case. I also remember the Xbox One's higher clocks vs PS4 and everybody knows how that turned out... LOL

AMD GCN, RDNA, RDNA2 are all modular.

You can choose which version of each module you want to have... or which version of the CUs you want... you can combine CU version 1.5 with ROPs version 1.3 for example (RDNA is supposed to start at version 2.0).

What I don't know is whether RT is really a separate module or bundled with another one.

AMD is not really clear about whether it is part of the TMU module or not.

IMO if RT is its own module, then it makes it easy for AMD to insert or remove RT for each GPU they launch in the future.

Just as a side note, we do not know the number of ACEs for PS5's GPU yet.

Despite its 7% higher clocks the XB1 was still at a huge disadvantage. 16 ROPs on XB1 vs 32 on PS4, fewer ACEs, night and day difference in bandwidth etc. There was just no advantage at all on the GPU side for XB1.

PS5 and XSX GPU differences are nowhere near like that. Both have the same 64 ROPs and the same number of ACEs. Computational power is higher on SX, but elsewhere the PS5 GPU has other advantages.

And the games' performance shows that.

This wasn't the case between PS4 & XB1.

Stuff that happens on only one console in particular games, like tearing in some place, a missing option in the menus, VRR support at the console level, some 5fps dip that only happens the first time you run a stage, the implementation of some effect etc., may be fixed with patches in the next few weeks.

Once again... launch hardware on launch titles... there will most likely be a huge patch shortly

don’t know why people are getting worked up over results that are going to change

Yet the PS5 is outperforming XSX consistently.

Yes. I can see faster graphics memory bandwidth, sustained system performance and 30% more compute units along with a full RDNA 2.0 feature set. All of this on top of 2 more TFLOPS.

Didn't they go with 4 ACEs for PS4 Pro? I'm assuming they retained the same number of ACEs for PS5..

Just as a side note, we do not know the number of ACE's for PS5's GPU yet.

I believe the RT module is a somewhat customized TMU but I get what you are saying.

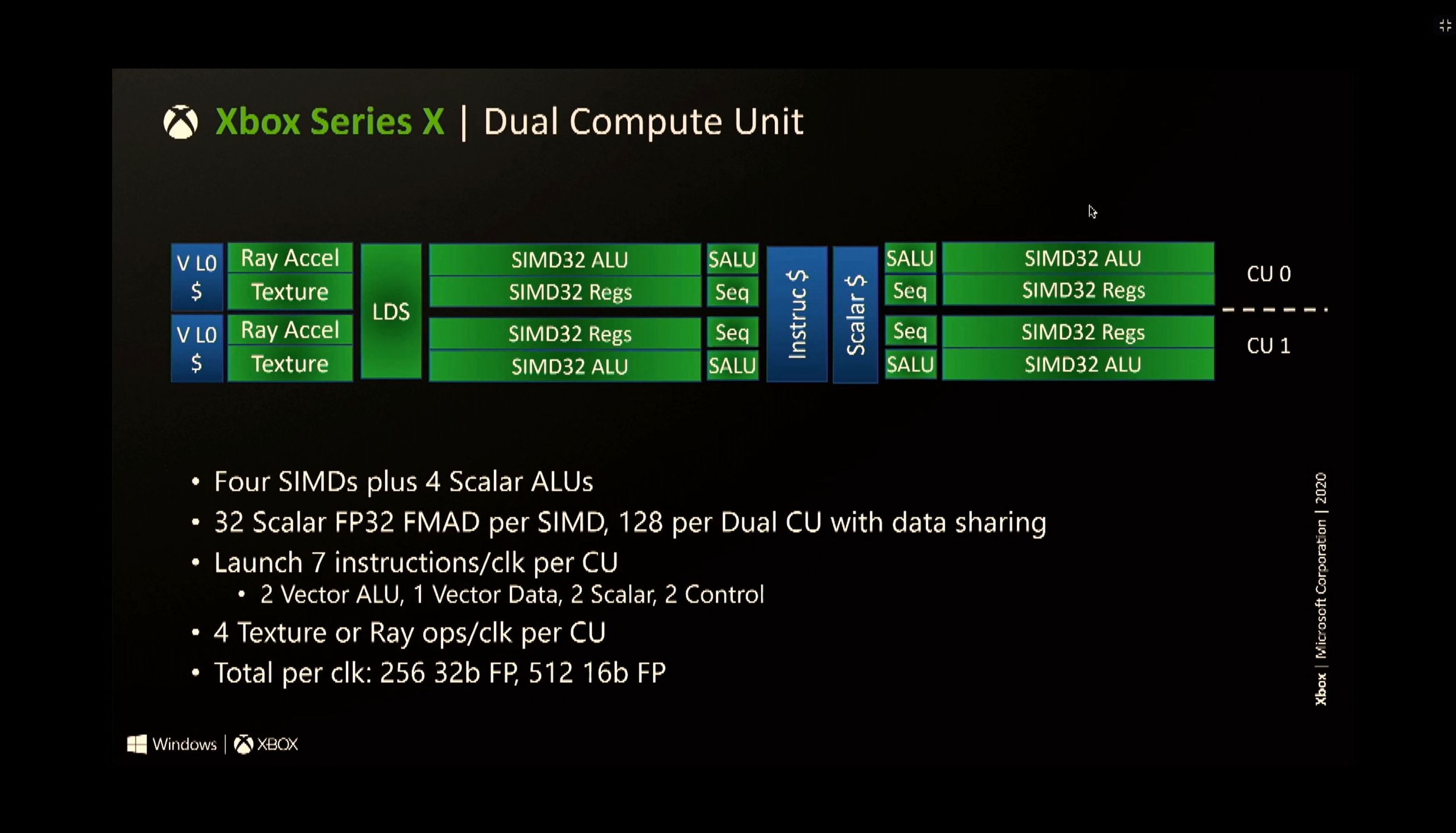

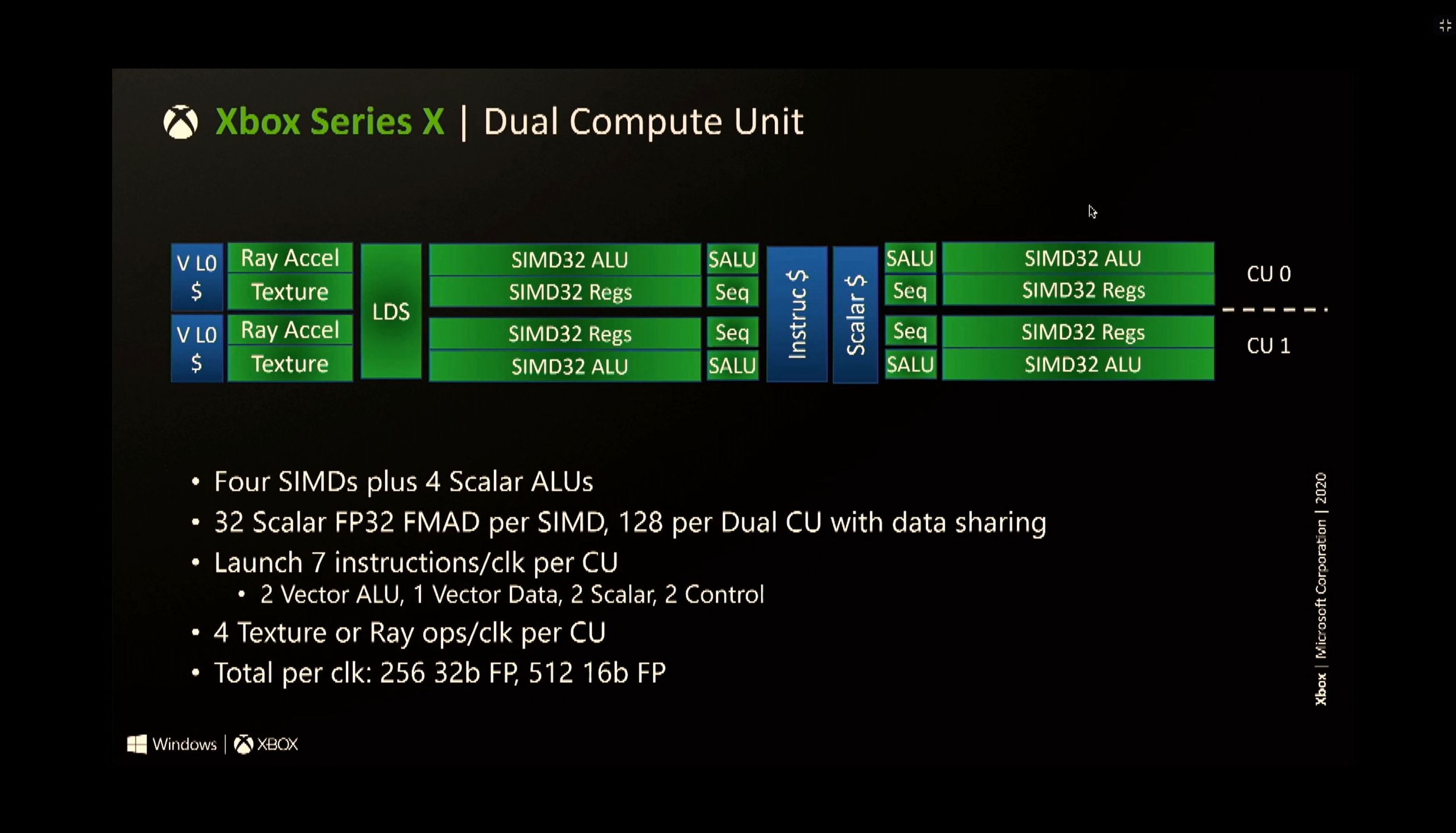

I guess we'll never know until we get a closer look at PS5's chips. XB does seem to have the same CU config:

BTW, the 30% IPC increase is totally wrong. It's 30% increase in performance at the same power level, but IPC I think is substantially lower.

Of course you do...They are both bugs, don't get so angry

I prefer puddles myself.

so PS5 wins again. performance is the same, but PS5 loads faster and has miles superior controller.

PS5 THE Best Place to play!

Basically choose whichever bugs you like the most.

In multiplat launch games. Circular logic. We have no idea which platform was the lead platform. Code optimization matters and I don't believe this game is optimized for any platform.

Yet the PS5 is outperforming XSX consistently.

What about PS5's 22% higher pixel fillrate, and rasterization rate? Higher cache bandwidth? Cache scrubbers that help improve GPU perf?

Faster mem b/w only for the 10GB segment. The rest is slower than PS5. Full RDNA 2.0 feature set is a purposefully misleading marketing phrase. TF isn't the be-all and end-all, absolute indicator of GPU's perf.
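For anyone who wants to check the arithmetic behind the 22% fillrate figure and the split-bandwidth point, here is a rough sketch. The clocks (2.23 GHz peak for PS5, 1.825 GHz for XSX), the bus widths and the 14 Gbps GDDR6 data rate are the publicly quoted specs and are assumptions here, not numbers from this thread. These are paper peaks only and say nothing about sustained clocks or real game performance.

```python
# Back-of-envelope peak figures. Clocks, bus widths and data rate are
# assumed from publicly quoted specs, not taken from this thread.

def pixel_fillrate_gpix(rops: int, clock_ghz: float) -> float:
    """Peak pixel fill rate in Gpixels/s, assuming one pixel per ROP per clock."""
    return rops * clock_ghz

def gddr6_bandwidth_gbs(bus_bits: int, gbps_per_pin: float) -> float:
    """Peak memory bandwidth in GB/s from bus width and per-pin data rate."""
    return bus_bits * gbps_per_pin / 8

ps5_fill = pixel_fillrate_gpix(64, 2.23)    # PS5 at its peak ("up to") clock
xsx_fill = pixel_fillrate_gpix(64, 1.825)   # XSX at its fixed clock
fill_advantage_pct = (ps5_fill / xsx_fill - 1) * 100

ps5_bw = gddr6_bandwidth_gbs(256, 14)       # all 16 GB on PS5
xsx_fast_bw = gddr6_bandwidth_gbs(320, 14)  # the 10 GB segment on XSX
xsx_slow_bw = gddr6_bandwidth_gbs(192, 14)  # the remaining 6 GB on XSX

print(f"Fill rate: PS5 {ps5_fill:.1f} vs XSX {xsx_fill:.1f} Gpix/s "
      f"(+{fill_advantage_pct:.0f}% for PS5 at peak clock)")
print(f"Bandwidth GB/s: PS5 {ps5_bw:.0f}, XSX 10GB {xsx_fast_bw:.0f}, "
      f"XSX 6GB {xsx_slow_bw:.0f}")
```

Under these assumptions the PS5 number sits between the two XSX segments, which is what "faster only for the 10GB segment" amounts to.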

The PS4 Pro and Xbox One X were "close" in performance as well. But optimized code always ran better on the faster console.

Devs have also been saying for months that these two are very close in perf. This is proving to be true.

2000MHz 20CUs will have better performance than 1000MHz 40CUs.

That is true for any GPU.

The only exceptions are when there are bandwidth differences between the cases.
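A quick sketch of why the two hypothetical configs are comparable at all: on paper they have the same FP32 throughput, so the claim is really about everything else that scales with clock. The formula assumes 64 shader lanes per CU and 2 FLOPs per lane per clock (one FMA), which is the usual way GCN/RDNA TFLOPS are quoted. The console figures (36 CUs at 2230 MHz, 52 CUs at 1825 MHz) are the public specs, assumed here. The formula does not model rasterizers, ROPs or caches, so it cannot confirm or refute the claim by itself.

```python
def fp32_tflops(cus: int, clock_mhz: float) -> float:
    """Theoretical FP32 TFLOPS: CUs x 64 lanes x 2 FLOPs (FMA) x clock."""
    return cus * 64 * 2 * clock_mhz * 1e6 / 1e12

# The two hypothetical configs from the post above.
narrow_fast = fp32_tflops(20, 2000)
wide_slow = fp32_tflops(40, 1000)

# Console figures, assumed from publicly quoted specs.
ps5 = fp32_tflops(36, 2230)   # PS5 at peak clock
xsx = fp32_tflops(52, 1825)   # XSX at fixed clock

print(f"20 CUs @ 2000 MHz: {narrow_fast:.2f} TF, 40 CUs @ 1000 MHz: {wide_slow:.2f} TF")
print(f"PS5: {ps5:.2f} TF, XSX: {xsx:.2f} TF, gap: {xsx - ps5:.2f} TF")
```

Both hypothetical configs land on the same paper number, and the console gap comes out a little under the "2 more TFLOPS" quoted earlier in the thread.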

I have no idea where you found that claim lol

BTW Series X and PS5 are different architectures.

I agree that Sony has proven to be more efficient with what they have than Xbox so far. It's impressive.

The PS5's 22% uplift in pixel fillrate over SX likely makes up for the lack of 2 TF.

That's a smart GPU design, IMO. Do more with less.

I agree that Sony has proven to be more efficient with what they have than Xbox so far. It's impressive.

It is nice to see Sony worked on their AF implementation in the SDK.

From what I remember a lot of games had AF issues on PS4 because the SDK made it a bit harder to use.

Seems like with the PS5 SDK it is fine, because devs are already using high AF settings.

The desperation is strong and shows that all the walking back after the 13 TFLOP rumors crashed and burned was just fluff. A few months down the line it will be glorious to look back at these threads.

Wait, there are fanboys here trying to get a win with this game?

We have one bit of missing AF on Xbox, some missing Ray tracing in Windows reflections of a puddle, and lower PS5 resolution on the robot spider and there's a win to be found?

Wow.

Most likely.

This will go throughout the generation. Minimal difference at best. The differentiating factor will be which 1st party studio can impress the mass market the most.

After that whole SSD campaign about double the speed, they can only get 8 seconds on the XSX, and it's even slower in some games. Not really much to celebrate there

Edit: doesn't take much but just some logic to upset the sony cult lol

This is not the case, pixel fillrate and rasterization performance are bottlenecked first by memory bandwidth

The PS5's 22% uplift in pixel fillrate over SX likely makes up for the lack of 2 TF.

That's a smart GPU design, IMO. Do more with less.

Yeah this is pure BS

I agree, not with pixel fillrate though

Sony is doing something right. Otherwise there would be a massive difference between the two systems.

Parts of the PS5 GPU are faster than the XSX GPU. And more than one game's perf shows this. End of story.

In multiplat launch games. Circular logic. We have no idea which platform was the lead platform. Code optimization matters and I don't believe this game is optimized for any platform.

You mean "up to" 22% higher pixel fillrate. PS5 uses boost clocks so none of your metrics reflect sustained or average performance. No amount of tweaking can make one piece of hardware perform better than another piece of superior hardware. An overclocked RTX 2060 will never run optimized code better than a stock RTX 2080.

You don't know what you're arguing here. If you wanted to argue that the PS5 has more graphics memory available to it than the Series X, then you have a point. But if this was somehow a counter to my argument that the Series X has faster graphics memory than the PS5, then you're wrong.

The PS4 Pro and Xbox One X were "close" in performance as well. But optimized code always ran better on the faster console.

The fact that devs are scrambling to release patches to some of these games proves my point. The code is buggy and unoptimized which is to be expected of launch games that are released on 5 different platforms.

you might not think it's a big deal for some reason, but after the year of bullcrap from Microsoft fanatics, Parity +better controller+better loading times is a massive win.

The desperation is strong and shows that all the walking back after the 13 TFLOP rumors crashed and burned was just fluff. A few months down the line it will be glorious to look back at these threads.

Then I guess XSX is bottlenecked by memory bandwidth in almost every game so far?

This is not the case, pixel fillrate and rasterization performance are bottlenecked first by memory bandwidth

Then what?

I always thought the high clocks were helping them out quite a bit, but if they don't mean anything then I don't know what else it could be.

Mmm... not sure if I follow. RDNA1 doesn't have RT-accelerated hardware. You can dedicate the shader cores to compute intersections and so on, but the performance is horrible. NVIDIA enabled raytracing on the 1000 series and I believe not even the 1080 Ti could produce acceptable results. On RDNA2 it is part of the CUs. The TMUs are part of the CUs as well.

I do agree that 4 additional CUs per SA is odd and can be detrimental. The same way that RDNA2 cards as well as the PS5 clock very high and the XSX doesn't, which could be another hint that it is not running in the most optimal config.

In addition, there are two shader arrays with all the CUs active, but the number of rasterizers, RBs and L1 cache is the same. So there could be bottlenecking there, and the GPU may have issues distributing loads efficiently as well. We'll see. I guess XSX first party will be optimized to overcome the issues and it will be mostly equal on multiplats.

Explained what I believe above. PS5 runs as it should be

Then I guess XSX is bottlenecked by memory bandwidth in almost every game so far?

I've called for parity from the beginning. Celebrating issues with dev kits is just as stupid as the ones already suggesting ms will be paying devs to sabotage ps ports.

The controller is subjective. I wouldn't use it for a multiplayer game. But I'd definitely rock it on single player.

Loading, considering the specs is not a win when you have literally double the speed and can barely edge out an 8 second advantage, sometimes less, and even slower.

It is way easier to fill 36 CUs with work. More mature tools, since they are using an upgraded version of the PS4 ones.

On the other hand, driver problems, split memory and more CUs make utilization more difficult

Cache scrubbers are used with IO though.

Well the higher clock speed does help keep the CUs fed. Not to mention there's probably more cache per CU which helps as well. Then there's the cache scrubbers, which help the cache system work. Seems like all of that leads to a GPU being very efficient and easy to work with.

but it's still better, and by reviews, the controller is a massive difference, it's a tangible difference the general public will feel vs not feel as with the xbox one, especially in a game like this.

If you asked 100 random people would they prefer their car with power steering or without, or electric windows with or without, which one do you think they will choose?

I am not sure why people are not taking this into consideration, it's a massive difference in how the game plays and feels. If it was a pure FPS I could see your point, this is not, it's got a gazillion things in it to do besides just shooting.

Nope. Bandwidth on PS5 is just enough for the pixel fill rate with L2 compression.

This is not the case, pixel fillrate and rasterization performance are bottlenecked first by memory bandwidth
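To put rough numbers on the fillrate vs bandwidth argument, here is a sketch of how much raw write bandwidth it would take to sustain peak fill rate. It assumes 4 bytes per pixel (a plain 32-bit color target), no blending or depth traffic, and no compression, plus the public clock and bandwidth specs. All of those are assumptions for illustration, not figures from this thread, and real workloads will look different.

```python
def fill_bandwidth_gbs(rops: int, clock_ghz: float, bytes_per_pixel: int = 4) -> float:
    """GB/s of uncompressed color writes needed to sustain peak pixel fill rate."""
    return rops * clock_ghz * bytes_per_pixel

# Assumed public specs: 64 ROPs each, PS5 2.23 GHz peak, XSX 1.825 GHz.
ps5_needed = fill_bandwidth_gbs(64, 2.23)
xsx_needed = fill_bandwidth_gbs(64, 1.825)

ps5_available = 448.0   # GB/s, assumed peak for PS5
xsx_available = 560.0   # GB/s, assumed peak for the XSX 10 GB segment

# Ratio above 1.0 means peak fill cannot be sustained without compression.
ps5_ratio = ps5_needed / ps5_available
xsx_ratio = xsx_needed / xsx_available

print(f"PS5: needs {ps5_needed:.0f} GB/s of {ps5_available:.0f} (ratio {ps5_ratio:.2f})")
print(f"XSX: needs {xsx_needed:.0f} GB/s of {xsx_available:.0f} (ratio {xsx_ratio:.2f})")
```

Under these simplified assumptions the PS5 would need some compression to hit peak fill rate, while the XSX fast segment would not, which is roughly the point both posts are arguing over.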

Cache scrubbers are used with IO though.

Not sure how much cache ps5 has

I've played astrobot with it. I liked it way more than I expected. I just still don't see myself using it for multiplayer games, at least not competitive ones like say cod for example.

But for DS, GoW, etc, I'd use all its features.

Not sure if this has anything to do with rasterization. It is part of the IO.

I thought the Cache Scrubbers helped the GPU out.