Radeon VII Review Thread....

- Thread starter thelastword

Allnamestakenlol

Member

Uh oh

ethomaz

Banned

https://www.anandtech.com/show/13923/the-amd-radeon-vii-review

Kicking off our wrap-up then, let's talk about the performance numbers. Against its primary competition, the GeForce RTX 2080, the Radeon VII ends up 5-6% behind in our benchmark suite. Unfortunately the only games that it takes the lead are in Far Cry 5 and Battlefield 1, so the Radeon VII doesn't get to ‘trade blows’ as much as I'm sure AMD would have liked to see. Meanwhile, not unlike the RTX 2080 it competes with, AMD isn't looking to push the envelope on price-to-performance ratios here, so the Radeon VII isn't undercutting the pricing of the 2080 in any way. This is a perfectly reasonable choice for AMD to make given the state of the current market, but it does mean that when the card underperforms, there's no pricing advantage to help pick it back up.

Comparing the uplift over the original RX Vega 64 puts Radeon VII in a better light, being about 24% faster at 1440p and 32% faster at 4K. Reference-to-reference, this might even be grounds for an upgrade rather than a side-grade. But the fact of the matter is that its predecessor was competing against the second-tier GTX 1080, and now with the Radeon VII, Vega is still looking to match the performance of last generation’s flagship, the GTX 1080 Ti. The positioning is still set on pure gaming terms too, and power efficiency isn’t one of the allures of the Radeon VII.

Where it does have some interesting potential is on the compute and professional visualization side, though given our limited tests there’s little conclusive to say. So where does this leave AMD? Their situation is improved, but the overall competitive landscape hasn’t been significantly changed. The renewed AMD option to high-performance graphics is still important in terms of maintaining FreeSync possibilities. As an upgrade choice, the Radeon VII offers itself better as a high-VRAM prosumer card for gaming content creators, and of course that is intended justification for its $699 cost, a price point initially carved out by its competitor. For pure gamers, then the price is really the point of contention.

Seems like what I expected... below the RTX 2080, without even talking about DLSS and ray tracing.

A band-aid, because AMD has nothing to release/show until Navi.

A curious fact: Far Cry 5, the only game that uses FP16, has better performance on Vega and Radeon VII... that hints that AMD's RPM solution is a little better than Nvidia's.

shark sandwich

tenuously links anime, pedophile and incels

Exactly what I was predicting.

Same price as RTX 2080, louder, hotter, higher power consumption, worse performance in most games, and none of the RTX features.

Might be good for “prosumers” but there’s very little reason for a gamer to choose this card.

This is clearly a stopgap product just so AMD can at least show their face in the high-end gaming market. Wait for Navi.

Insane Metal

Member

Damn this is cruel

Edit: 7nm AND it still chomps up more power than Nvidia @ 14 (or is it 12?). Can we say GCN needs to die?

thelastword

Banned

Just want to say, JayzTwoCents is hilarious and he also made some good points, explaining how temperatures work on this new card etc...It's also clear that there are some driver issues at launch but that's AMD for you........Look at the benches though, not necessarily the opinions, stats are more important here.....

OhhH......There are some interesting stats in LTT's video, even against the 2080ti and it was a revelation seeing someone showing how synthetic benchmarks are just pure crap.......They can support a particular brand more than others and it means nothing against real world performance....Some of these synthetics may have focused on older tech and never updated their algorithms to take advantage of newer technologies or products.........3DMark, Port Royal and even FF benchmarks can all be skewed......They're all pretty much a farce tbh....

Anyway, love this guy's videos, he gives so much info and visual aids in his presentation.......I wish one of the English guys displayed their stuff like him and went into so much detail.....

Insane Metal

Member

Techradar's review was MUCH more positive... as is IGN's

https://www.techradar.com/reviews/amd-radeon-vii

https://www.ign.com/articles/2019/02/07/amd-radeon-vii-review-and-benchmarks

Digital Trends' review is even better https://www.digitaltrends.com/computing/amd-radeon-vii-review/

Weird.

kraspkibble

Permabanned.

i've only watched the Jayz video but it seems at 1440p there's only about a 6% difference between the VII + 2080 and at 4K it's about 4%.

kraspkibble

Permabanned.

AMD, stay losing. 2080 is the same price with more features.

here in the UK the VII is going for about £650-700. a 2080 is £750-850. both are expensive as shit but the VII you can get a bit cheaper upfront. so it ain't a terrible deal if you don't care about RTX/DLSS. well, i guess the "savings" you get from buying a VII will be lost because your power bill will skyrocket lol.

not defending AMD because they should be doing better. i think that Nvidia offers more value overall.

CuNi

Member

AMD, stay losing. 2080 is the same price with more features.

The 2080 is even cheaper than the VII in Germany. The cheapest 2080 goes for around 670€. If AMD sells at MSRP, then the 2080 is cheaper by 60€. That's around 8% cheaper and 6% faster on top, with RTX and DLSS in there basically for free.
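For anyone who wants to check that arithmetic, here is a rough sketch in Python. It assumes the Radeon VII's German price is 730€ (inferred from "670 plus 60", not a quoted figure) and takes the roughly 6% performance lead from the reviews at face value. The function names are made up for illustration.

```python
# Rough sanity check of the price/performance numbers in the post above.
# Assumption: the Radeon VII price of 730 EUR is inferred from "670 + 60".
# Assumption: the 6% performance lead is the review figure, not measured here.


def percent_cheaper(price: float, reference_price: float) -> float:
    """Return how much cheaper `price` is than `reference_price`, in percent."""
    return (reference_price - price) / reference_price * 100.0


def perf_per_price_advantage(
    perf_ratio: float, price: float, reference_price: float
) -> float:
    """Return the percent advantage in performance per unit of money.

    `perf_ratio` is the card's performance relative to the reference card
    (1.06 means 6% faster).
    """
    return (perf_ratio * reference_price / price - 1.0) * 100.0


rtx_2080_eur = 670.0
radeon_vii_eur = 730.0
rtx_2080_perf_ratio = 1.06

cheaper = percent_cheaper(rtx_2080_eur, radeon_vii_eur)
value = perf_per_price_advantage(rtx_2080_perf_ratio, rtx_2080_eur, radeon_vii_eur)

print(f"2080 is {cheaper:.1f}% cheaper")
print(f"2080 offers {value:.1f}% more performance per euro")
```

Under those assumptions the "around 8% cheaper" claim holds, and combining it with the 6% lead gives the 2080 roughly 15% more performance per euro. It says nothing about VRAM or compute, which is where the VII's case is made.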

ResilientBanana

Member

Technically I feel like this card's major competitor is the 1080TI and in that sense, it's still not enough. Nice looking card though.

EDIT:

Looking at Digital Trends' review, when paired with an AMD Threadripper, its benchmarks look rather good.

demented waffle

Member

Could be a meh year for hardware upgrades. Sure, Navi could be killer for mainstream if the rumors are true. But this (R7) is just a shrink of an already old arch. Clock for clock it looks bad; it's just a higher clock speed for the most part. There are some differences in some games, but meh.

I'm guessing 2020 will be the year of high-end hype for gpus with all 3 players getting involved again. Err 2 again and 1 new...

The new AMD cpus should be interesting at least. So cpu market might see a shake up again.

Redneckerz

Those long posts don't cover that red neck boy

It's decent, and for content creation that 16 GB helps out a lot.

It also draws a lot of power and it's hovering in between 2080/1080 Ti levels.

The biggest setback is its deliberately limited availability. Because they will be rare cards, they are only useful if you can snag them now. Everyone else can just hop over to Nvidia or use Crossfire setups and get their performance there.

So its usefulness is literally limited to a month or so, which is just a terrible idea in general.

A lot of innovation, but only useful in the here and now.

manfestival

Member

If I hear another "wait for Navi" I will just go ahead and buy everything intel and nvidia because you can wait forever for new hardware as there is always something new on the horizon with all of the promise of summer.

thelastword

Banned

Tech Jesus's Review was detailed and full of great info as usual......No review thread is complete without Tech Jesus:

Leadbetter had a pretty solid and fair review as well....

Keep watching reviews guys....I've just been watching them myself.....One thing is for sure, I've never seen so many techtubers get access to a GPU before...It seems everyone and their niece got a Radeon 7 to review, so many reviews......Many differences between them though........

Also, the drivers the tech guys are using are not public drivers and clearly, there are some issues with the media drivers.......Temperature/thermals OSC is not working, or not properly, some issues with Wattman, blackouts and crashes, overclocking not working......In some games, AMD performance at 1080p seems to be lower than it should be, but of course at higher rez it performs admirably....So some issues for reviewers, but I think customers should get a driver that fixes most of these things anyway....

Generally though, at $700.00, this card with less optimized drivers is doing just what AMD said it would do: compete with the 2080 and do nicely in productivity....I mean, even Leadbetter seems to love the 16GB of VRAM for his job, and Tech Jesus shows how strong it is for productivity....

Another thing to note; is that this card is being compared to an $800 OC'd 2080FE, whilst the Radeon 7 is at reference clocks.......It is not being compared to the $700.00 standard 2080 with lower clocks...So take that as you will, or as it is.......

squigglefizzle

Banned

Another thing to note; is that this card is being compared to an $800 OC'd 2080FE, whilst the Radeon 7 is at reference clocks.......It is not being compared to the $700.00 standard 2080 with lower clocks...So take that as you will, or as it is.......

That should have no real impact. It's only a 90MHz offset, if I recall correctly, and the difference between reference and overclocked is almost non-existent in practice. Looking at different versions of the 2060, for instance, the EVGA XC Black (bone stock, 1680MHz listed boost clock) vs the ASUS ROG Strix (overclocked to 1860MHz) shows no real performance difference (1-2 fps).

I go to Hardocp.com for gaming reviews. I don't care for benchmarks like the few I've seen. So, I'll be waiting for that one to drop.

Moreover, I took a look at Anandtech's compute evaluations. It makes sense that this card is fantastic for compute given its server origins.

Overall, I'm more curious about macOS support and what it can do there, especially with the memory provided.

thelastword

Banned

Great review here........Probably one of the best...He was the only one I've seen able to get the card OC'd too....Great gaming results, superior production capabilities and great temps/wattage.......The truth is, if you undervolt Radeon 7 slightly, you can boost up clocks over 200MHz easily, you can also boost the memory as well......Of course, by now, many tech guys should know, that the first thing you do on an AMD card is to boost the power limit to max.....I think we will see some interesting results from Radeon 7, when all folk get the memo on how to use the card properly.....

Another solid review with a different config....

The way the Radeon 7 is designed with its sensors, at default it pushes the fan speed up quite a bit. AMD is aware of that and will offer a quiet mode option, but undervolting the Vcore not only allows an OC on Radeon 7, it also allows lower temps, lower decibels and of course lower fan speeds....Some updated/public drivers should sort a few issues with Afterburner etc......

Bonfires Down

Member

Too bad it's so noisy. I'd easily give up a few percent of performance to get double the VRAM.

ethomaz

Banned

Techradar's review was MUCH more positive... as is IGN's

https://www.techradar.com/reviews/amd-radeon-vii

https://www.ign.com/articles/2019/02/07/amd-radeon-vii-review-and-benchmarks

Digital Trends' review is even better https://www.digitaltrends.com/computing/amd-radeon-vii-review/

Weird.

Weird is a review with only two games tested, where in one the RTX 2080 has a good lead and in the other it trades blows...

And the dude says it is a good launch lol

TechRadar has no shame at all... I won’t even talk about how they measure VRAM usage wrongly too, or that they say it runs cooler lol

Trogdor1123

Member

So... Is good? Looks to be much better than I expected

pawel86ck

Banned

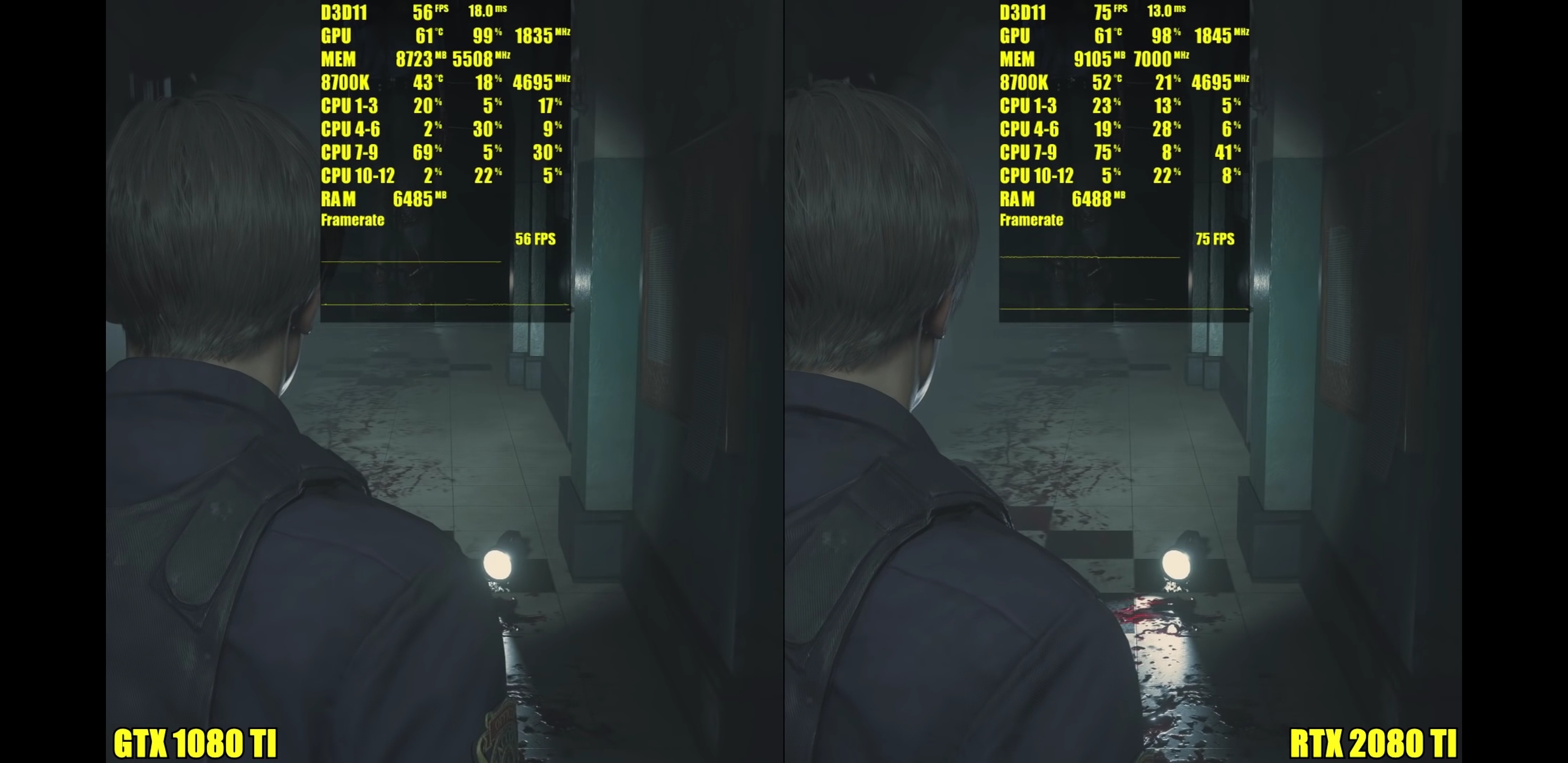

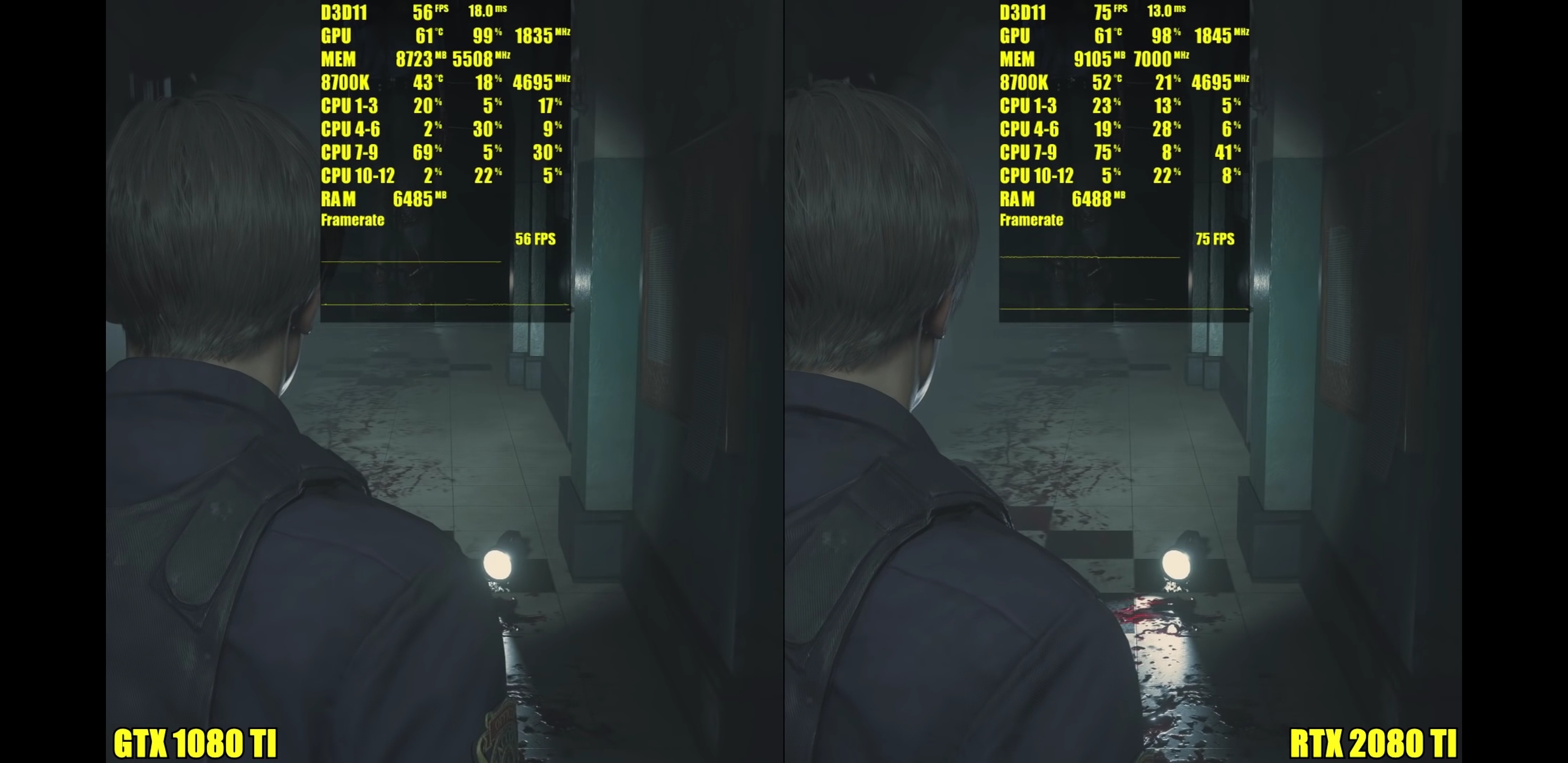

I'm surprised the Radeon VII can match 2080 results; I wasn't expecting such a big performance jump from Vega 64. Compared to the RTX 2080 there's of course one issue here, the lack of an equivalent to tensor and RT cores, but on the other hand the Radeon VII has an impressive 16GB of VRAM. The amount of VRAM should be a very important factor in the near future, because one year from now we should see next-gen consoles with around 16-24GB of system memory, so when 8GB cards like the RTX 2080 run out of VRAM (games like the RE2 remake can allocate around 9GB even today, and that's a game made with current consoles in mind), 16GB on the Radeon should suffice for a long time.

thelastword

Banned

That should have no real impact. It's only a 90MHz offset, if I recall correctly, and the difference between reference and overclocked is almost non-existent in practice. Looking at different versions of the 2060, for instance, the EVGA XC Black (bone stock, 1680MHz listed boost clock) vs the ASUS ROG Strix (overclocked to 1860MHz) shows no real performance difference (1-2 fps).

Every bit helps and skews results........Sometimes a game wins by 1-2fps, it's still recorded as a win.....Besides, depending on the game, I think an OC can give you a bit more than that....If a title is already an Nvidia developed title or heavily favors NV, the OC boosts it even more over the competition........It would just be fairer to compare a $700.00 card against a $700.00 card, then if they want they can OC both of them.....

In any case, the Radeon VII still trades blows with the RTX 2080FE or rather competes with it very well, offers 8 more GB of VRAM and over twice the bandwidth.....Even now, there have been many examples of the extra VRAM/bandwidth being useful in today's games and applications, far less for some of the games coming later in the year.....OTOH, I'm not so sure I can say RTX is a valid feature on a 2080 at this point however, since there is only one RTX game, a game which underperformed according to the publisher, so it's really not like the whole world is playing BFV.....Also, there isn't one playable game with DLSS enabled.........It's looking likely that AMD will catch up to NV and outshine it with DirectML, looking at how their RTX features are panning out and trickling in or rather not......Yet, one thing that continues to ring true across the benchmarks, is how much better frametimes are on Radeon overall, there are times when NV edges out on average framerate, but the microstutter on NV cards is immense on certain titles.....

So it's like this, I think AMD is already matching the 2080FE, even with the small launch niggles, but it will outpace it more and more as we go along........That extra 8GB and x2 bandwidth is going to come in handy.......Just watch this space, because by the end of this year, you will hear from persons who thought 8GB or even 6GB is enough.......

JohnnyFootball

GerAlt-Right. Ciriously.

It's a decent card, but the problem is that there is little reason to get it over the 2080. I wouldn't be surprised if nvidia announces a tiny price drop on the 2080 to make it better.

shark sandwich

tenuously links anime, pedophile and incels

I'm surprised the Radeon VII can match 2080 results; I wasn't expecting such a big performance jump from Vega 64. Compared to the RTX 2080 there's of course one issue here, the lack of an equivalent to tensor and RT cores, but on the other hand the Radeon VII has an impressive 16GB of VRAM. The amount of VRAM should be a very important factor in the near future, because one year from now we should see next-gen consoles with around 16-24GB of system memory, so when 8GB cards like the RTX 2080 run out of VRAM (games like the RE2 remake can allocate around 9GB even today, and that's a game made with current consoles in mind), 16GB on the Radeon should suffice for a long time.

Sorry but this is ridiculous. You really think it makes sense to buy a card that has worse performance today in the hopes that it’ll maybe have better performance in the future?

I guess we’ll see, but I’d be surprised if the relative performance between 2080 and Radeon VII changes all that much. I certainly wouldn’t base a $700 purchase on that hope.

DemonCleaner

Member

i can't believe they've done such a bad job on the cooler.

good thing i've ordered one before watching the reviews

Unknown Soldier

Member

This thing only exists because Navi was delayed for a respin. It's not even really a stopgap since the rumors are only 5,000 units are available worldwide.

With such a tiny production run, it's more likely to be a museum piece than a video card anyone will actually use.

pawel86ck

Banned

Sorry but this is ridiculous. You really think it makes sense to buy a card that has worse performance today in the hopes that it’ll maybe have better performance in the future?

I guess we’ll see, but I’d be surprised if the relative performance between 2080 and Radeon VII changes all that much. I certainly wouldn’t base a $700 purchase on that hope.

Performance today is very comparable, and when games start using more VRAM the RTX 2080's results will only get much worse. It's not a question of if, because when next-gen consoles launch in the near future with 16-24GB of system memory, VRAM usage in multiplatform games on PC will increase without any doubt. It has happened many times before and it will happen again. I still remember how angry I was when I bought a GTX 680 2GB in 2012. It was an extremely fast card, but very soon the PS4 launched, and although I could run PS4 games with 2x better performance or 2x higher resolution, my GPU was VRAM limited in almost every game, and stuttering killed the whole experience.

Nv should never have released the RTX 2080 with just 8GB. Nv probably wanted to make more money on RTX 2080 cards, so they went with just 8GB. Personally, I have learned from my own past mistake, and right now I consider the amount of VRAM an extremely important aspect of a GPU. 1.5 years ago I could have bought a GTX 1080 with 8GB, but I wanted more VRAM, so I bought the 11GB 1080 Ti, and today there are already games like RE2 that use around 9GB of VRAM in 4K. Next year, when next-gen consoles launch, people will see more and more games like that.

thelastword

Banned

It's a decent card, but the problem is that there is little reason to get it over the 2080. I wouldn't be surprised if nvidia announces a tiny price drop on the 2080 to make it better.

Well I think there is more than a little......I guess it can also depend on which vendor you prefer, because people buy cards or don't based on that reason alone, but technically; Radeon 7 has +8GB of VRAM +2.1 more bandwidth which translates to less stuttering in games.....(watch the optimum tech video for a few examples).......So these features make it more futureproof.....Nobody buys a card at $700.00 simply for games you can get now or for older games, as was shown in many of the videos, even the bittech video btw. There are many games and applications where the extra memory is being used now....Even Richard Leadbetter's productivity application errored out with an NV card decked with 12GB of VRAM but finished the job with the 16GB Radeon.....

I think the Radeon 7 is perfectly poised, it offers high end performance for 1440p and 4k gaming with less stutter over NV...So a great gaming card which doubles up as the best production card in that price range is insane value.......

As a matter of fact, Radeon 7 is able to beat the 2080ti in many productivity suites as well, as shown in some of the reviews.....It even has +5GB VRAM +40% more bandwidth over that card......Too bad there's no crossfire, because if you could get two Radeon 7's, it would still be cheaper than many of the 2080ti cards I'm seeing on Newegg or just $200 more than the standard $1200 price offering/range.... Cheapest 2080ti on Newegg is $1149 and the most expensive I'm seeing is $1658, the average price for that card is higher than $1200.00.......Not that Radeon competes with the 2080ti in gaming, it was never aiming for it, but the extra VRAM and bandwidth and its productivity strength over that card, just speaks to its value as well......

ethomaz

Banned

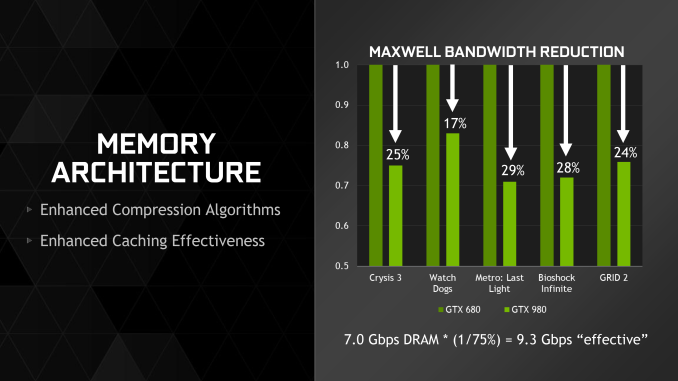

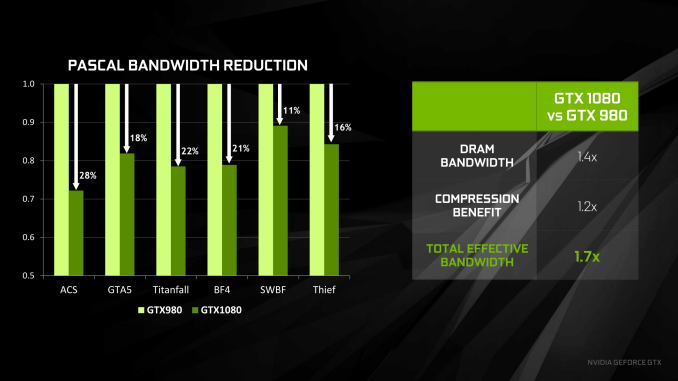

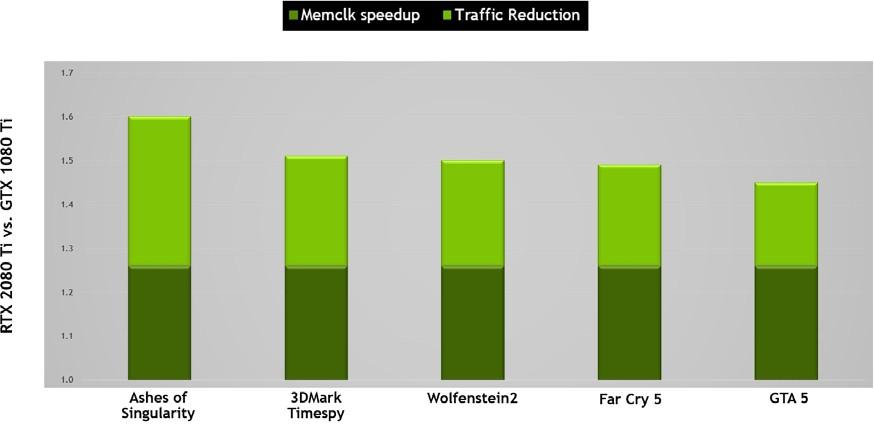

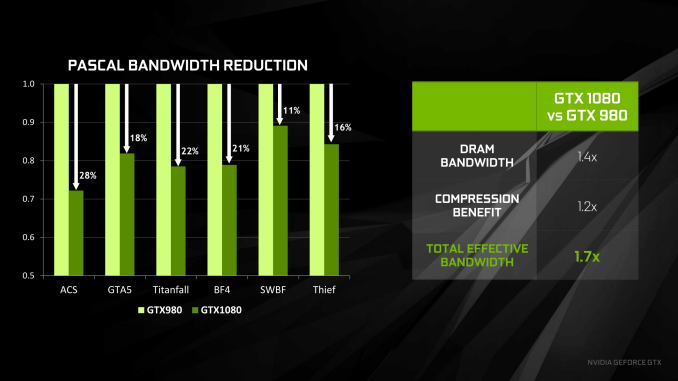

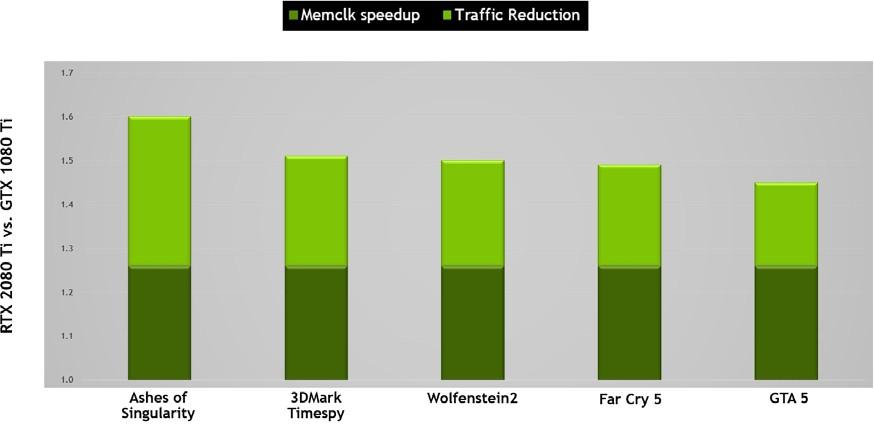

Performance today is very comparable, and when games start using more VRAM the RTX 2080's results will only get much worse. It's not a question of if, because when next-gen consoles launch in the near future with 16-24GB of system memory, VRAM usage in multiplatform games on PC will increase without any doubt. It has happened many times before and it will happen again. I still remember how angry I was when I bought a GTX 680 2GB in 2012. It was an extremely fast card, but very soon the PS4 launched, and although I could run PS4 games with 2x better performance or 2x higher resolution, my GPU was VRAM limited in almost every game, and stuttering killed the whole experience.

Nv should never have released the RTX 2080 with just 8GB. Nv probably wanted to make more money on RTX 2080 cards, so they went with just 8GB. Personally, I have learned from my own past mistake, and right now I consider the amount of VRAM an extremely important aspect of a GPU. 1.5 years ago I could have bought a GTX 1080 with 8GB, but I wanted more VRAM, so I bought the 11GB 1080 Ti, and today there are already games like RE2 that use around 9GB of VRAM in 4K. Next year, when next-gen consoles launch, people will see more and more games like that.

VRAM usage won't move that much in the coming years... it will have a spike when games start to use 8K resolutions, but the trend is toward better memory management and compression to use less and less VRAM.

Next-gen consoles are set to use 16GB shared memory... maybe 20GB shared memory... no more than that.

That is what is happening with each new GPU architecture...

Some games allocate the full VRAM but don't use all of it, so running them on 8GB or 4GB cards won't make any difference in performance or graphics quality.

Radeon VII has 16GB for prosumers... not gamers.

We've reached an era in the PC world where more space (that includes memory) is not needed; that is why the advance is so slow.

pawel86ck

Banned

VRAM usage won't move that much in the coming years... it will have a spike when games start to use 8K resolutions, but the trend is toward better memory management and compression to use less and less VRAM.

Next-gen consoles are set to use 16GB shared memory... maybe 20GB shared memory... no more than that.

That is what is happening with each new GPU architecture...

Some games allocate the full VRAM but don't use all of it, so running them on 8GB or 4GB cards won't make any difference in performance or graphics quality.

Radeon VII has 16GB for prosumers... not gamers.

We've reached an era in the PC world where more space (that includes memory) is not needed; that is why the advance is so slow.

Your graphs show a memory bandwidth reduction, but not a memory usage reduction! RE2 is a game made with current-gen consoles in mind, and look at the VRAM usage on PC in 4K: around 9GB! There are also other games, like COD and Final Fantasy, with similar VRAM usage.

Next-gen consoles will have at least twice as much memory as current-gen consoles, so if 8GB of VRAM can be an issue already, you can bet next-gen consoles will push VRAM requirements to a new level.

Unlike the Radeon VII, the 2080 has the very interesting RTX feature (DLSS also), but RTX increases VRAM requirements even more. Battlefield 5 uses around 5-6GB of VRAM without RTX, and 8GB with RTX. Basically, 8GB of VRAM is not enough for a high-end card, with the RTX feature on top of that; the 2080 has the same 8GB of memory as the much cheaper and older RX 580! But I must say what Nv has done is very clever, because people will buy the 2080 anyway, and 1/2 years later they will be forced to buy a new GPU because of VRAM issues.

ienjoyrawfish

Member

So if I'm in the market for a high end GPU for 4K gaming, but can't afford a 2080ti, am I right in thinking it goes something like this in terms of best price/performance:

1080ti used>>>>Radeon VII>>>>>2080

?

This is clearly a stopgap product just so AMD can at least show their face in the high-end gaming market. Wait for Navi.

Isn't Navi going to be aimed more at the mid range market though?

Pagusas

Elden Member

Great review here........Probably one of the best...He was the only one I've seen able to get the card OC'd too....Great gaming results, superior production capabilities and great temps/wattage.......The truth is, if you undervolt Radeon 7 slightly, you can boost up clocks over 200MHz easily, you can also boost the memory as well......Of course, by now, many tech guys should know, that the first thing you do on an AMD card is to boost the power limit to max.....I think we will see some interesting results from Radeon 7, when all folk get the memo on how to use the card properly.....

Another solid review with a different config....

The way the Radeon 7 is designed with its sensors, at default it pushes the fan speed up quite a bit. AMD is aware of that and will offer a quiet mode option, but undervolting the Vcore not only allows an OC on Radeon 7, it also allows lower temps, lower decibels and of course lower fan speeds....Some updated/public drivers should sort a few issues with Afterburner etc......

Why do you.... type sentences...like this....

And why are you defending this card? It’s overpriced to the extreme for what it is.

JohnnyFootball

GerAlt-Right. Ciriously.

Based on the reviews, this has been a disaster. It's hard to fathom how stupid AMD was to release these out to review with the drivers being in such poor shape.

Despite that, the performance in a lot of cases is decent, but not exceptional.

The thing that surprised me is how good they made the 1080 Ti look. It's too bad everyone missed out when they could be had for $600 - $650.

JohnnyFootball

GerAlt-Right. Ciriously.

Why do you.... type sentences...like this....

And why are you defending this card? It’s overpriced to the extreme for what it is.

No. It's priced right where it's supposed to be. Its performance is on par with the 2080 and it is priced accordingly.

That's not to say it's a great deal by any means, but it's not overpriced.

Insane Metal

Member

No. It's priced right where it's supposed to be. Its performance is on par with the 2080 and it is priced accordingly.

That's not to say it's a great deal by any means, but it's not overpriced.

...except it doesn't have half the features the 2080 has.

Aintitcool

Banned

Bomba means a good price for gamers; with a new fan system I'd be interested in it for its better media capabilities. I'm super interested in ray tracing, but I assume this one will be on sale in 6 months.

ethomaz

Banned

Your graphs show a memory bandwidth reduction, but not a memory usage reduction! RE2 is a game made with current-gen consoles in mind, and look at the VRAM usage on PC in 4K: around 9GB! There are also other games, like COD and Final Fantasy, with similar VRAM usage.

Next-gen consoles will have at least twice as much memory as current-gen consoles, so if 8GB of VRAM can be an issue already, you can bet next-gen consoles will push VRAM requirements to a new level.

Unlike the Radeon VII, the 2080 has the very interesting RTX feature (DLSS also), but RTX increases VRAM requirements even more. Battlefield 5 uses around 5-6GB of VRAM without RTX, and 8GB with RTX. Basically, 8GB of VRAM is not enough for a high-end card, with the RTX feature on top of that; the 2080 has the same 8GB of memory as the much cheaper and older RX 580! But I must say what Nv has done is very clever, because people will buy the 2080 anyway, and 1/2 years later they will be forced to buy a new GPU because of VRAM issues.

Did you check with another tool? Because it doesn't use 9GB of VRAM... the in-game tool's maths are wrong... it is broken.

JohnnyFootball

GerAlt-Right. Ciriously.

...except it doesn't have half the features the 2080 has.

And the 2080 doesn't possess the non-gaming features that could be provided by 16GB HBM2.

Most importantly, those RTX features are essentially meaningless if the performance and support is non-existent.

As of right now, after close to 6 months on the market, Battlefield is still the only game with support for ray tracing.

shark sandwich

tenuously links anime, pedophile and incels

So if I'm in the market for a high end GPU for 4K gaming, but can't afford a 2080ti, am I right in thinking it goes something like this in terms of best price/performance:

1080ti used>>>>Radeon VII>>>>>2080

?

Isn't Navi going to be aimed more at the mid range market though?

Navi is the next generation architecture. Rumors are that the first Navi cards will be midrange but high-end will come later this year/early 2020.