Witcher 3 gameplay from YouTubers from CDPR event

- Thread starter Tovarisc

TheStruggler

Report me for trolling ND/TLoU2 threads

Can't wait for direct feed, but even in that footage the PS4 version looked well done.

Can you even trust CDPR/Sony/MS, when they release that console footage, that it's actually console footage and not from the PC version with a controller?

It's there only for a show, obviously. There is PC hidden beneath that sofa.

People have grown very skeptical about this stuff and don't really trust footage to be what it's said to be. Can't really blame people tho, many publishers/devs like to push deceiving footage.

When it's confirmed to be actual console footage, either by CDPR themselves or by words across the screen confirming it's current gen console footage. Then they can be held liable if it's not.

Note to look at the ps4 that is on, by the bottom right hand corner of the screenshot....

EDIT: did people miss the PS4 that's on in the picture....

I miss nothing. That's not enough to confirm it's PS4 footage. It would have to specifically say it is, not just imply it with a console that's switched on. Yes, I've grown cynical.

EatChildren

Currently polling second in Australia's federal election (first in the Gold Coast), this feral may one day be your Bogan King.

Still a great looking game.

Started my 2nd playthrough a few days ago and the only texture that is "bad" is the one for Geralt's torso. Witcher 2 looks so gud even by today's standards.

Still a great looking game.

Technically, sure, but I find this particular screenshot really ugly. Geralt looks like he has been pasted on and the whole scene is just a vomit of color.

And the color/lighting/insanity of that foliage!

It really does seem like witcher 2 was pretty uneven from an artistic standpoint

Come at me, bros!

It's important to understand what's going on in this scene. There's a crazy cursed zone on the battlefield that's distorting the colors and warping the sun's rays. At this part, Geralt is traveling with a sorceress who creates a shielded zone, so the people walking inside of it don't really have the colors distorted.

Unless that was satire and I completely missed the point of your spoiler'd part.

jim2point0

Banned

Still a great looking game.

Arrows!

Bloooooom.

Trolls!

Panoramaaaaaa

Primethius

Banned

Thanks for reminding me about the troll, lol.

I reloaded a save two or three times because he would attack me after the initial conversation and I thought it was bugged.

It's important to understand what's going on in this scene. There's a crazy cursed zone on the battlefield that's distorting the colors and warping the sun's rays. At this part, Geralt is traveling with a sorceress who creates a shielded zone, so the people walking inside of it don't really have the colors distorted.

Unless that was satire and I completely missed the point of your spoiler'd part.

No, it wasn't satire. I find it ugly and was jokingly pointing out that I expect some people to react to it. Maybe it makes sense in the context of the story, but it isn't aesthetically pleasing by any means. I'm just pointing out that it isn't a shot that represents how beautiful the game is, especially when presented without context. Subjective opinion, of course.

The ones jim posted above, for example, look fantastic. The one we are discussing is giving me seizures

Still a great looking game.

Yep.. the last time I played it I was really surprised how good some of the ground textures look even in comparison to newer games - sorry for jpg

Summer Haze

Banned

Yes, if you look at the materials I think it's pretty obvious. It would be hard to get that sort of interaction with light without PBR. But here's a quote:

Funnily enough I actually haven't found it very obvious in this game. Usually I can spot its use easily. *Shrugs*

jim2point0

Banned

Funnily enough I actually haven't found it very obvious in this game. Usually I can spot its use easily. *Shrugs*

It's really not. When I heard this game was actually using PBR, I was surprised. You can see it on some armors and clothing though.

Dictator93

Member

Funnily enough I actually haven't found it very obvious in this game. Usually I can spot its use easily. *Shrugs*

It's really not. When I heard this game was actually using PBR, I was surprised. You can see it on some armors and clothing though.

The question is then posed: how are you duders "spotting" it?

Beyond just saying "it looks good" that is.

Ugh. Seriously some of the worst video editing I've ever seen at any game website.

Honestly, I could deal with the bad dubstep. But those camera zooms? The ones that make the image a blurry mess? And the awful cuts that are barely in time with the music and seem more random than anything else?

IGN grabs some amazing footage and then fucking ruins it.

Summer Haze

Banned

The question is then posed: how are you duders "spotting" it?

Beyond just saying "it looks good" that is.

I dunno...there's just a certain look a game (usually) has when it uses it. I'm not tech-savvy enough to explain why. Sorry I don't have a better explanation, lol.

Come at me, bros!

It's okay. I bought Witcher 2 on day one, and I was never impressed by its graphics. The game uses some weird filters and color schemes that make it ugly as sin at times.

DOWN

Banned

How do people feel about Triss' hair? I like the character, but her hair being a dyed clown unnatural red looks ridiculous and tacky to me. Seems really out of place in a medieval sort of aesthetic.

Yennefer is great looking. Though her more complex hairstyle was neat, it reminded me of over the top glamour mods people make for Skyrim so I like her newer look better. Has a more natural fall to it IMO.

GavinUK86

Member

Looks alright considering it's off screen footage.

if it is really from a ps4

DOWN

Banned

damn, Witcher 2 still looks better than anything on ps4 IMO.

Like just compared to open world? Or RPGs?

The lighting isn't better or worse in either version. They've made changes to the scene that result in different placement and distance of the light sources (the flames). We see specular highlights in places in the new version that aren't in the old version, and vice versa.

It also looks like they redesigned the armor somewhat, but it's hard to tell for sure because a lot of it is in shadow in both versions.

In the newer version the burning house in the background is further away, whereas in the older version there is a smaller fire that is closer behind him. The entire scene is much darker in the older version, while the newer version has fire all around and is therefore generally better lit. In the newer version it seems the strongest light source is coming from the front and right of him, which naturally throws the left sides of each leg into shadow. His victim is being affected by the light in the same way (not as easily seen in that shot, but in some of the previous ones I posted). All of the lighting looks consistent.

Where'd these new images come from?

damn, Witcher 2 still looks better than anything on ps4 IMO.

Lol no

TW2 is a very inconsistent looking game tbh.

On the topic of characters, I found it interesting how they decided to give Geralt a more 'natural' skin tone. He should be pretty damn pale.

Primethius

Banned

Yep, that's the PS4 version. It's looking good.

Unless CDPr decided to implement PS4 controller icons in the PC version.

The way the light is interacting with the foliage looks the same as the "Volume Based Translucency" from the Nvidia Tech Demo.

https://www.youtube.com/watch?v=3SpPqXdzl7g

And it is stunning.

I don't know if TW2 uses a comparable DX9 technique, but the way the illumination interacts at every level [HDR/color space, bloom, lightshafts, shadows] with foliage was already awesome [and it still is]:

https://www.youtube.com/watch?v=3VcNiMgQGSo&feature=youtu.be&t=50s

Yep, that's the PS4 version. It's looking good.

Unless CDPr decided to implement PS4 controller icons in the PC version.

I hope they did :b I want to plug my PS4 controller into my PC and get PS4 controller icons in Witcher 3.

Primethius

Banned

I hope they did :b I want to plug my PS4 controller into my PC and get PS4 controller icons in Witcher 3.

Having gotten used to Inputmapper while using my DS4, I don't particularly mind either way. It would be nice though.

You have literally no idea what you're talking about. GSync is there to fix SCREEN TEARING while having unlocked fps. That's the point of it. The output device has nothing to do with stutter. Stutter happens in the GPU before the picture reaches its destination. Jesus christ.

Just because VSync helps with stutter doesn't mean it has anything to do with the reasons for it. VSync helps with stuttering because stuttering is caused by sudden increases in the frame intervals, and VSync places an artificial cap which standardizes the interval. GSync actually breaks this and brings the stuttering back.

I think you're still talking about screen tearing with a wrong term.

http://www.anandtech.com/show/6857/amd-stuttering-issues-driver-roadmap-fraps/3

http://www.pcper.com/reviews/Graphics-Cards/Frame-Rating-Part-2-Finding-and-Defining-Stutter

(btw, how can one capture stutter or microstutter in action into a video, if it had anything to do with the output device?)

You can facepalm me all you want, but, I'm sorry, you're confused about all this.

G-sync gets rid of three things: stutter, screen-tear, and input lag.

Traditionally, if you use v-sync and your framerate drops below your display's refresh rate you get stuttering. This happens because a new frame is no longer available each time the display refreshes, so some frames get sent to the display a second time to fill the gap. Sometimes frames are even repeated more than once. This uneven frame cadence results in what is commonly known as stutter, stuttering or judder.

Now, you can turn off vsync, but the result is screen tear and still some wobble and judder to the animation. Even ignoring the ugly tears across the image, the motion still isn't as smooth as you get with vsync when your frame rate is at or above your refresh rate.

The other downside of vsync, aside from the stutter you get when framerate drops below refresh rate, is input lag. This happens because frames are stored in a frame buffer and not immediately sent out to the display. The more frames buffered the more lag. This is why triple buffering has more input lag than double buffering.
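To put a rough number on the buffering point, here is a back-of-the-envelope sketch in Python. It assumes a deliberately simplified model in which every frame waiting in the queue costs one full refresh interval before it reaches the screen; real drivers and games behave in more complicated ways, and `added_latency_ms` is just a made-up helper name for illustration.

```python
def added_latency_ms(queued_frames, refresh_hz):
    """Rough upper bound on extra display latency under vsync.

    Assumption (simplified model): each frame already waiting in the
    queue delays a newly rendered frame by one refresh interval.
    """
    refresh_interval_ms = 1000.0 / refresh_hz
    return queued_frames * refresh_interval_ms


# Compare one queued frame against two queued frames on a 60Hz display.
for queued in (1, 2):
    print(queued, "queued frame(s):", round(added_latency_ms(queued, 60), 1), "ms")
```

Under that assumption, each extra queued frame at 60Hz costs roughly another 16.7 ms, which is the sense in which more buffering means more lag.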

So, as you can see, the only way to avoid both stutter and screen tear in a traditional setup is to use vsync and maintain a framerate at or above your display's refresh rate (or a factor thereof).

This is also why console games begin to stutter whenever they drop below 30fps (or 60fps). Most TVs refresh at 60Hz, so framerates in console games are usually targeted at 30fps, which is a factor of 60 (divides evenly into it). This way each frame will be displayed exactly twice and an even frame cadence will be maintained. But if the framerate drops below 30fps you start to get unevenly duplicated frames and the animation becomes jerky and irregular, i.e. stutter.
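The cadence argument can be checked with a few lines of Python. This is only a sketch of an idealised vsync'd display: it assumes perfectly constant render times and that the display shows the newest finished frame at every refresh. `vsync_cadence` is a hypothetical helper, not anything from a real driver or engine.

```python
def vsync_cadence(render_fps, refresh_hz, frames=6):
    """Count how many refreshes each rendered frame stays on screen.

    Idealised model (assumption): frame k is ready at time k / render_fps,
    and at each refresh tick the display shows the newest ready frame.
    Integer arithmetic avoids floating point rounding at tick boundaries.
    """
    counts = [0] * frames
    tick = 0
    while True:
        newest_frame = (tick * render_fps) // refresh_hz
        if newest_frame >= frames:
            break
        counts[newest_frame] += 1
        tick += 1
    return counts


print(vsync_cadence(30, 60))  # every frame held for 2 refreshes: even cadence
print(vsync_cadence(25, 60))  # mix of 2 and 3 refreshes per frame: judder
```

At 30fps on a 60Hz display every frame is held for exactly two refreshes. At 25fps the hold times alternate unevenly between two and three refreshes, and that irregular cadence is the stutter being described.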

There's simply no getting around it --if you're using vsync and your framerate drops below your refresh rate you'll get stutter. It's immediately noticeable. If my framerate dropped even a few frames below my previous monitor's 60Hz refresh rate I would immediately notice the stutter. Camera panning and movement in the game become jerky and irregular.

G-Sync/free sync gets rid of all that, of course. No more stutter, screen tear or added lag.

Here is a quote from anandtech if you don't want to take my word for it.

http://www.anandtech.com/show/7582/nvidia-gsync-review

When you have a frame that arrives in the middle of a refresh, the display ends up drawing parts of multiple frames on the screen at the same time. Drawing parts of multiple frames at the same time can result in visual artifacts, or tears, separating the individual frames. You'll notice tearing as horizontal lines/artifacts that seem to scroll across the screen. It can be incredibly distracting.

You can avoid tearing by keeping the GPU and display in sync. Enabling vsync does just this. The GPU will only ship frames off to the display in sync with the panel's refresh rate. Tearing goes away, but you get a new artifact: stuttering.

Because the content of each frame of a game can vary wildly, the GPU's frame rate can be similarly variable. Once again we find ourselves in a situation where the GPU wants to present a frame out of sync with the display. With vsync enabled, the GPU will wait to deliver the frame until the next refresh period, resulting in a repeated frame in the interim. This repeated frame manifests itself as stuttering. As long as you have a frame rate that isn't perfectly aligned with your refresh rate, you've got the potential for visible stuttering.

Looks alright considering it's off screen footage.

if it is really from a ps4

Yeah, I'm sure it will be fine. I just want some decent footage.

Then it's just a mixup of terminology. Generally, the word "stutter" means a temporary, complete halt of video. Even the literal word "stutter" refers to the speech issue where your speech is cut and halted in fast succession. Almost everyone, when talking about stutter as an issue in games, understands it that way. What you're talking about should be referred to as "jitter" or "refresh rate based microstutter", or something similar, as the effect you're describing is closest to microstutter in terms of the actual issue.

Personally, I did experience something similar when playing on a 60Hz monitor with the fps dropping, but I never called that stutter. However, when I upgraded to 144Hz it kind of disappeared, as the screen refreshes so fluidly that my eyes aren't strained by doubled frames. It actually made sub-60fps a more bearable experience, strangely.

Hope you understand what I mean

Yep.. the last time I played it I was really surprised how good some of the ground textures look even in comparison to newer games - sorry for jpg

They look good from the top, but I can't figure out a way to get AF in the game with an nVidia card. Ground textures look like smeared messes most of the time.

BigTnaples

Todd Howard's Secret GAF Account

Looks alright considering it's off screen footage.

if it is really from a ps4

Looks great.

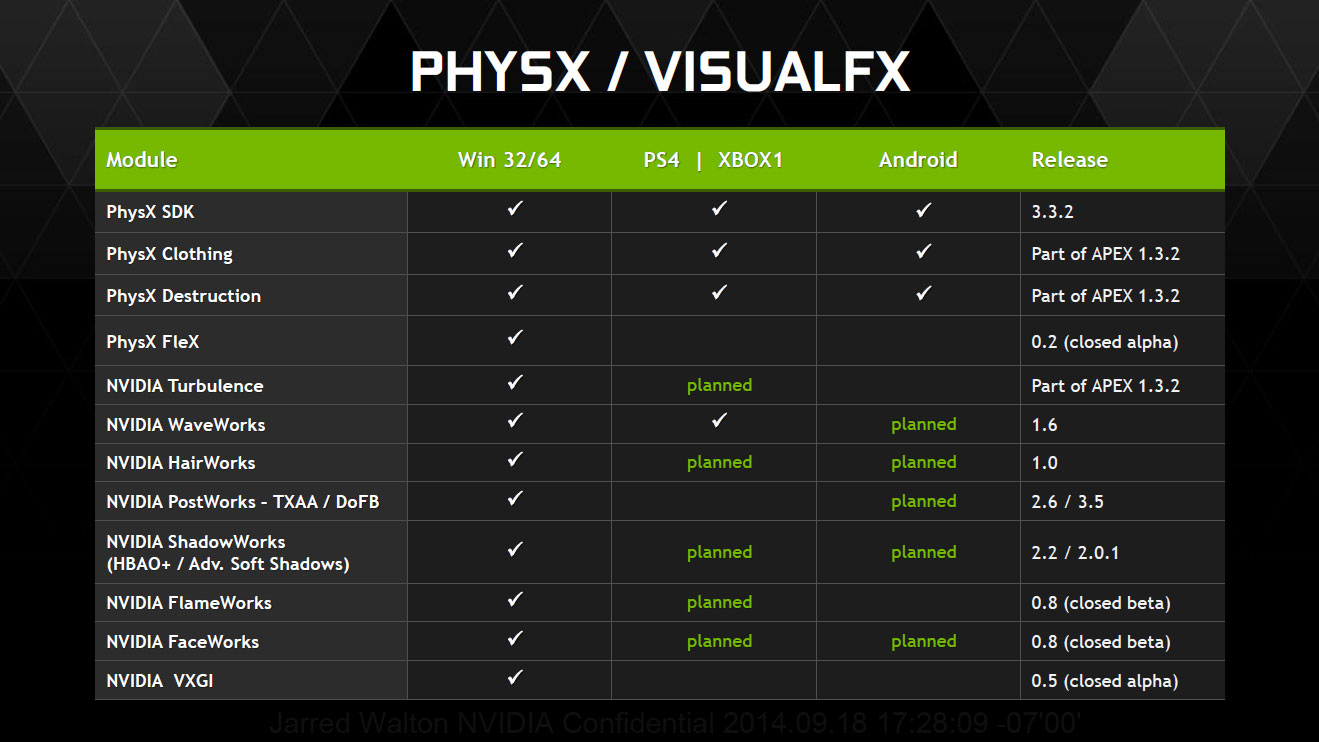

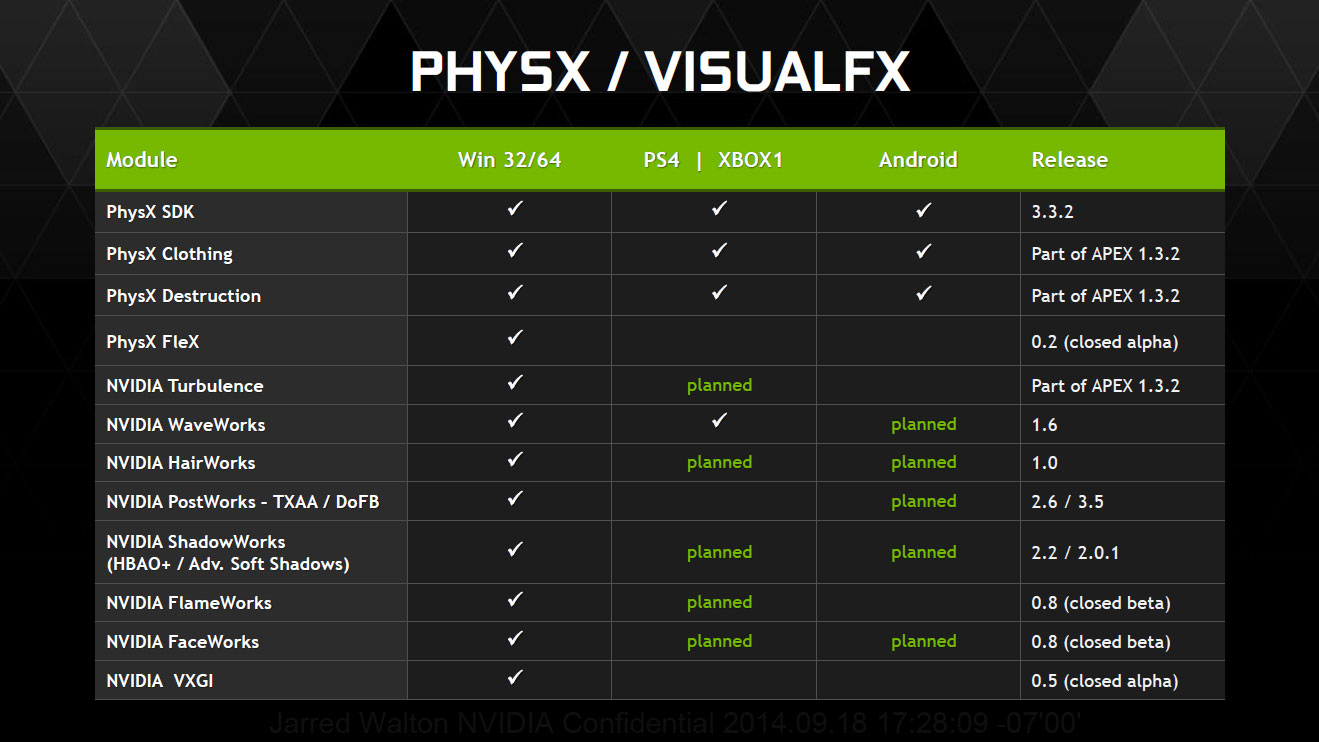

Big differences will be Hair Works, Water Works, PhysX, Volume Based Translucency, and IQ (4K, AA, AF, etc.)

Basically most of the stuff from this video.

https://www.youtube.com/watch?v=3SpPqXdzl7g

Maybe some LoD differences as well. But I expect the PS4 version to look pretty damn comparable with the PC version running at 1080p 2xAA, with no Nvidia exclusive stuff.

therealminime

Member

I still think Flotsam's surrounding forest area is one of the better looking areas of any game still out now. The tech isn't crazy, it's just great art and superb atmosphere.

I am really feeling the hype building for 3 with each day. I just want to get into that world and start wandering around and exploring so badly. So close.

Turin Turambar

Member

I call this a major upgrade. Yennefer is sexy af now.

I liked her old hair more.

DOWN

Banned

I liked her old hair more.

Looked like a hairspray storm on a Maxim shoot. New hair looks more elegant to me.

Considering the large number of youtubers at the event, I'm pretty surprised by the lack of videos that came out. Maybe CDPR didn't want them to release too much. Some of the formats were just thrown together (e.g. Angry Joe / the annoying Canadian girl). AnderZEL only has the one 10 minute video and a developer interview. I can't speak for other languages, but the Gopher videos are far and away the best videos released.

Oh well, I just can't feed my need for more videos. Can I have the game now? God damn it.

I personally prefer the old version (brunette). Her face looked cuter

I liked her old hair more.

Well, obviously Yennefer didn't like y'all enough to keep her old look. She changed her appearance because she chose me! *shrugs*

Considering the large number of youtubers at the event, I'm pretty surprised by the lack of videos that came out. Maybe CDPR didn't want them to release too much. Some of the formats were just thrown together (e.g. Angry Joe / the annoying Canadian girl). AnderZEL only has the one 10 minute video and a developer interview. I can't speak for other languages, but the Gopher videos are far and away the best videos released.

Oh well, I just can't feed my need for more videos. Can I have the game now? God damn it.

Also not every youtuber who was at event has released anything. For e.g. JesseCox and Dodger were there, but neither has released anything. Jesse teased that he will, but then nothing.

I personally prefer the old version (brunette). Her face looked cuter

Well, that's the problem - Yennefer should not look cute

Then it's just a mixup of terminology. Generally, the word "stutter" means a temporary, complete halt of video. Even the literal word "stutter" refers to the speech issue where your speech is cut and halted in fast succession. Almost everyone, when talking about stutter as an issue in games, understands it that way. What you're talking about should be referred to as "jitter" or "refresh rate based microstutter", or something similar, as the effect you're describing is closest to microstutter in terms of the actual issue.

Personally, I did experience something similar when playing on a 60Hz monitor with the fps dropping, but I never called that stutter. However, when I upgraded to 144Hz it kind of disappeared, as the screen refreshes so fluidly that my eyes aren't strained by doubled frames. It actually made sub-60fps a more bearable experience, strangely.

Hope you understand what I mean

Well, I understand how you see it, but I don't agree that that is how most people use the term. In fact, you're the first person I've ever run into that thinks stutter only refers to the long pauses (extreme frame latency spikes), which can be caused by the game engine, drivers or hardware.

I agree that some people use the word "stutter" to refer to cases like that, for lack of a better word, but in the vast majority of cases when someone says their game is stuttering, be it a console or PC game, it's because the game's framerate is out of sync with the refresh rate due to insufficient fps.

Look at the anandtech article I quoted, or basically any article on the subject, and you'll see the term used the way I am arguing it most often is used. Hell, even Nvidia repeatedly states that G-Sync does away with "virtually all stuttering".

If it were true that most stutter wasn't caused by framerates being out of sync with refresh rates then how could G-sync resolve stuttering in the majority of cases?

I can say that my G-Sync monitor has done away with about 95% of the stuttering that I used to experience in games. The only forms of stutter that G-Sync can't do away with are hitches due to asset loading or extremely large frame latency spikes, the latter of which are usually caused by latencies or timing issues in the game engine itself. G-Sync can do nothing about those types of stutter, but fortunately they are much less frequent.

And I really can't agree that the most common form of stutter should be referred to as "micro-stutter", because that term generally refers to something else. It most often refers to the subtle frame timing issues that sometimes affect multi-GPU setups. It's true that both forms of stutter are fundamentally caused by variations in frame render times, it's just that the variations are much smaller where micro-stutter is concerned. The typical stutter that occurs when framerates drop below refresh rates is anything but subtle.

http://hardforum.com/showthread.php?t=1317582

What is microstuttering?

When running two Graphics Processing Units (GPUs) in tandem, via Crossfire or SLI, in Alternate Frame Rendering (AFR) mode, the two GPUs will produce frames asynchronously (for lack of a better term). Microstuttering can be expressed one way as your computer experiencing, in extremely rapid succession, a high FPS, followed by a low FPS, followed by a high, then low, and so on.
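The high/low/high/low pattern in that quote is easy to illustrate with made-up numbers. The Python sketch below compares two hypothetical frame-time traces with the same average FPS; the values and the `frame_stats` helper are invented for illustration and are not measurements from any real multi-GPU setup.

```python
from statistics import mean


def frame_stats(frame_times_ms):
    """Summarise a frame-time trace: average FPS, slowest frame, spread."""
    avg_ms = mean(frame_times_ms)
    return {
        "avg_fps": 1000.0 / avg_ms,
        "slowest_frame_fps": 1000.0 / max(frame_times_ms),
        "spread_ms": max(frame_times_ms) - min(frame_times_ms),
    }


# Hypothetical traces: evenly paced frames vs. AFR-style alternating frames.
even_pacing = [20, 20, 20, 20, 20, 20]
alternating = [10, 30, 10, 30, 10, 30]

print(frame_stats(even_pacing))
print(frame_stats(alternating))
```

Both traces average 50fps, so an FPS counter would show the same number, but in the alternating trace every other frame takes 30 ms and frame times swing by 20 ms. That swing is why micro-stutter can feel worse than the reported framerate suggests.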

It really does seem like witcher 2 was pretty uneven from an artistic standpoint

It's actually one of the most consistent games I've ever played artistically, and also in terms of texture quality. Particularly in the realm of RPGs.

ColonialRaptor

Member

I still think Flotsam's surrounding forest area is one of the better looking areas of any game still out now. The tech isn't crazy, it's just great art and superb atmosphere.

I am really feeling the hype building for 3 with each day. I just want to get into that world and start wandering around and exploring so badly. So close.

I am exactly the same. I still believe that Flotsam and its surroundings are the best looking area of any game I've played. The only other game whose artwork and graphics make me go oooh and ahhh is The Order: 1886. I'm sure there are other games capable of doing that to me, but I don't play very many. I didn't play The Order myself, I just saw my brother playing it.

PLASTICA-MAN

Member

Looks great.

Big differences will be Hair Works, Water Works, PhysX, Volume Based Translucency, and IQ (4K, AA, AF, etc.)

Basically most of the stuff from this video.

https://www.youtube.com/watch?v=3SpPqXdzl7g

Maybe some LoD differences as well. But I expect the PS4 version to look pretty damn comparable with the PC version running at 1080p 2xAA, with no Nvidia exclusive stuff.

Lol

And you assumed all of that from 3 seconds of an offscreen video.

Just like you assumed this:

Flex is planned since a non CUDA version and a DirectCompute version of it is in the works.

This saddens me greatly. From gopher:

I made a mistake when editing the 3rd part of the Witcher 3 preview. Fixed it and re-rendering now. Will not be published until tomorrow

Looks alright considering it's off screen footage.

if it is really from a ps4

Looks quite good despite the potato quality. Hopefully we get direct feed high quality footage soon. But at least we finally got some supposedly PS4 footage.

BigTnaples

Todd Howard's Secret GAF Account

Lol

And you assumed all of that from 3 seconds of an offscreen video.

Just like you assumed this:

No. I've been saying this all along, and based it on numerous dev quotes, interviews and their last work on consoles as well as reasonable expectations of my PS4 compared to my PC.

I just quoted the footage because it's the current topic of discussion.

And yes, we have all seen that chart. It's irrelevant to the Witcher 3.