DylanTackoor

Member

Has there been any word on whether Nvidia will be releasing new drivers for Inquisition near launch?

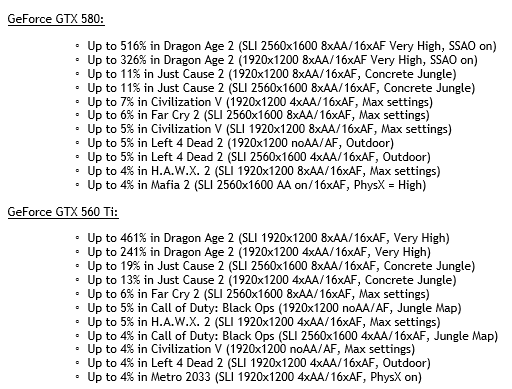

How much performance increase can we expect from a Game Ready driver?

First world problem, I know, but I hope my SLI 980s can hold at least 60 fps at 1440p maxed with some AA.

So wait, maybe I'm reading these wrong, but am I seeing that the game is so CPU-bound that even though I have an i7 3770k and a 970, I'll still be struggling to hit 30fps?

I can't imagine that to be the case. What are you reading?

Edit: Awww ok I see the post now. Hmmmm... If the game actually doesn't scale well across cores I'd be very very disappointed because BF4 does that quite well. It's backwards to now have a single very heavy thread.

It'd also be kind of lolzy considering all the backlash against AC: U as that runs at a smooth 30 FPS at 1440p (HBAO+, Soft Shadows turned on) with my 970 and a 2500k.

Quite likely that you'll be matching the PS4 at least. The original benchmark in the OP shows a 270X (a 7870, in other words) doing 28fps average, but that's with 4xMSAA and everything maxed. It's also using a better CPU than yours, but it's not drastically better. Cut that down to 2xMSAA (or preferably use a different sort of AA solution) and you should definitely be above 30fps average.

Still not sure if I should get it on PC or PS4. How well will a 3570k at 4.2 and a 7870 run this at 1080?

i7 @3.5 ghz

660ti 2gb VRAM

8GB RAM

Anybody have an idea of what I can expect?

I forgot to say what type of i7... it's a 4770k.

A tick less than the 760 in the graph in the OP at 4xMSAA and max settings. Obviously turning those down or off will get you much better results.

Also depends slightly on which i7 specifically. Take another couple ticks off if its an older i7 like a 920.

I would imagine you could hit 30fps average comfortably.

I have a 7870... does that support Mantle?

Do we know which Post AA this game is using? I'll probably leave MSAA off for the performance gain

7970 still rocking along. I am going to have to learn how to overclock my 4770K soon though; still stock speed.

To hit 60fps with my i7 3770K @ 4.5GHz, 3GB 780 and 8GB RAM @ 2133MHz, I'll need to keep AA to a minimum (display will be 1080p) and maybe AO off? I'd rather keep the textures at their highest setting. I guess I'll need to play around with the options when I get the chance.

If sub-60 framerates are going to be commonplace, does the game at least offer an in-game 30fps lock?

Does the UI change when playing with an Xbox controller?

Yes. It will switch to the console UI.

Only if you're trying to flip on 4xMSAA, which can really decimate the framerate. You'll probably be able to keep it at 2x (which still looks good, esp. when combined with the post-process AA) and have a very smooth experience. You certainly won't be CPU limited, that's for sure.

As a tip: keep the textures at their default setting (should be ultra for your card). Trying out "Fade-touched" texture quality with that card may result in some bad stuttering, for very little difference. If I recall correctly, the same texture assets are shown on high, ultra, and fade-touched; the only thing that changes is how large the texture memory caches are. So you'll see differences in the amount of texture pop-in, but on ultra I don't think I ever noticed it.

Not officially, but the game re-uses a lot of functionality from other Frostbite games (e.g. BF4), so a lot of this should be viable.

Is this game gonna support SLI at launch?

This is what I need to know. Going PC if that is the case. (GTX 770s).

You guys should request it in this thread on the GeForce forums.

I'm pretty sure that Nvidia is aware of Dragon Age launching soon, but it might help anyway.

Anyone else can't enable Mantle?

I have a 280x

... if you look at any material in the environment you will notice that every single surface reacts to light exactly the same way. None of the materials look like anything that actually exists in real life.

So this is what I have found on the German website GameStar: http://www.gamestar.de/spiele/dragon-age-inquisition/artikel/dragon_age_inquisition_im_technik_check,46872,3080155,4.html

30fps lock on cutscenes is ridiculous and super jarring. The game runs great otherwise, except for this:

Anyone getting these graphic bugs? It happens in game and in cutscenes as well, just this weird flickering and artifacting.