Rumor: Kinect 2 so accurate it can lip read; Future Xbox to come w/ Kinect 2 bundled

- Thread starter mocoworm

Castor Krieg

Banned

This isn't PR.

Ever heard of "controlled leak"?

thelurkinghorror

Member

Videogames give you the "context".

Voice control =/= lip reading. It's simply impossible to do it with high accuracy in a commercially viable product.

Right now lip-reading is done by people. There are plenty of situations where disagreements arise due to the inability to read some lip movements. You need context for that, and an electronic device won't provide you with context.

Then we get into the whole accents, pronunciation errors, etc.

Voice control will end up being similar - you would need a lot of processing power to analyze the tone of voice, pitch, etc. Just because you can do stupid one-word commands in Kinect does not mean the technology will develop by the same order of magnitude as CPU, RAM, etc. The box is for playing video games and watching crap TV shows, FFS!

Microsoft at its best - PR bullshit.

Air Zombie Meat

Member

Can't wait to lip waggle my way around a track in forza.

bigtroyjon

Member

Ever heard of "controlled leak"?

LOL, yeah, every bullshit rumor coming from a gaming website is a controlled leak by various companies' PR firms.

I'm mildly distressed at the implications that the system is focussed around Kinect. I'm not actively opposed to motion controls, but I just don't have the lounge layout to give the system an unimpeded viewpoint of a full body, which would seem to be... problematic, to say the least.

Always-honest

Banned

We got a group of friends together last weekend and played Kinect Sports and Dance Central. Worked great and everyone had fun. I also play Child of Eden regularly and I prefer the Kinect controls to the controller. I've also heard good things about Gunstringer and Fruit Ninja.

Haven't tried it, but I also hear good things about it... It's a bit of a smartphone app thingy, but seems to work well.

McHuj

Member

From a technical point of view, it's certainly possible to do something like lip reading. However, I'm more concerned about the processing requirements to do this and how they will impact the overall system.

Will there be a dedicated processor, or will it run on the CPU/GPU? If the rumors of a 6-core CPU are true, I could see something like a full core dedicated to Kinect/the OS.

thelurkinghorror

Member

Haven't tried it, but I also hear good things about it... It's a bit of a smartphone app thingy, but seems to work well.

Fruit Ninja on Kinect is awesome! Gunstringer is a good attempt to build a proper platform with Kinect; it's good, but not that good.

dragonelite

Member

How about you get the first one kinda functioning before talking about the next one?

The hardware is working as it should.

If some devs use it for things it isn't built for, yeah, don't blame the hardware, blame the software.

You don't use raw data for the depth field. You detect jitter between adjacent points. Meaning it's physically impossible to produce a depth field of the same resolution as the camera. If the adjacent camera pixel is lit up by another ray, the jitter information is destroyed. That's why Kinect depth fields are 320x240, even though the two cameras used to produce them are both 640x480.

Uh, of course it is hampered by USB. You can even observe it firsthand with the different SDKs on PC, official or not: you can retrieve raw data at 640x480 precision from the sensor, and the only reason the 360 doesn't do it is that it takes too much bandwidth.

Compression could have helped, but it also adds latency, uses computing resources, and reduces measurement precision.

USB is not really a good protocol, especially for video streaming. It's an old problem, but since it's the most common standard, engineers have to deal with it.
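For a rough sense of the numbers behind the USB argument, here is a back-of-envelope sketch in Python. It assumes 16-bit depth samples at 30 fps and compares them against USB 2.0's nominal 480 Mbit/s signaling rate; usable throughput is lower in practice, and the color and audio streams share the same link. These are illustrative assumptions, not Kinect specifications.

```python
# Back-of-envelope data rate for an uncompressed depth stream.
# Assumptions (illustrative, not Kinect specifications):
#   - 16 bits (2 bytes) per depth sample
#   - 30 frames per second
#   - USB 2.0 nominal signaling rate of 480 Mbit/s (real throughput is lower)

USB2_NOMINAL_MBIT_S = 480


def stream_mbit_per_s(width: int, height: int, bytes_per_sample: int, fps: int) -> float:
    """Raw (uncompressed) data rate of a frame stream, in Mbit/s."""
    bits_per_second = width * height * bytes_per_sample * 8 * fps
    return bits_per_second / 1_000_000


for w, h in [(320, 240), (640, 480)]:
    rate = stream_mbit_per_s(w, h, bytes_per_sample=2, fps=30)
    share = 100 * rate / USB2_NOMINAL_MBIT_S
    print(f"{w}x{h} depth @ 30 fps: {rate:.1f} Mbit/s (~{share:.0f}% of nominal USB 2.0)")
```

On these assumptions, going from 320x240 to 640x480 quadruples the depth rate (roughly 37 to 147 Mbit/s), which is the kind of jump that starts to matter once the color stream is on the same bus.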

The hardware is working as it should.

If some devs use it for things it isn't built for, yeah, don't blame the hardware, blame the software.

That is the main problem: bad software. Games that actually have good controls are mentioned pretty rarely, and even then people ask, "But wouldn't it be better with a controller?" Gunstringer and CoE come to mind.

My prediction: Steve Ballmer will introduce Xbox Ten, celebrating the 10 year Xbox anniversary on January 10 at CES.

If you guys still doubt this read the sentence again.

Oh yeah guys 2013/2014 right? I mean we know accurate technical details like this:

But trust Pachter, advanced devkits aren't out there... really.

"It can be cabled straight through on any number of technologies that just take phenomenally high res data straight to the main processor and straight to the main RAM and ask, what do you want to do with it?" our source said.

Also they mention 'main RAM', so does it have separate VRAM?

Ze plot thickens.

REMEMBER CITADEL

Banned

Voice control =/= lip reading. It's simply impossible to do it with high accuracy in a commercially-viable product.

It was considered pretty much impossible to offer Kinect's current functionality in a commercially viable product until Kinect appeared. It's the first cheap alternative to a number of highly specialized and very expensive devices; that's why you can see it being used in medicine, education, motion capture and so on.

That said, the statement was just meant to illustrate its potential accuracy. And it's not PR.

You don't use raw data for the depth field. You detect jitter between adjacent points. Meaning it's physically impossible to produce a depth field of the same resolution as the camera. If the adjacent camera pixel is lit up by another ray, the jitter information is destroyed. That's why Kinect depth fields are 320x240, even though the two cameras used to produce them are both 640x480.

You can retrieve 640x480 depth data from the camera, but at that resolution you cannot do player indexing at the same time. It can only be 320x240 with player indexing. And Kinect doesn't use stereo cameras to make the depth field.
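To make "depth with player indexing" a bit more concrete, here is a minimal Python sketch of one way a single 16-bit depth pixel can carry both values. The layout is an assumption modeled on the Kinect for Windows SDK 1.x convention (player index in the low 3 bits, depth in millimetres in the upper 13 bits); `pack` and `unpack` are hypothetical helper names, and the sketch does not by itself explain why the player-indexed stream is limited to 320x240.

```python
# Minimal sketch: one 16-bit word holding both a depth value and a player index.
# Assumed layout (modeled on Kinect for Windows SDK 1.x, not taken from the thread):
#   bits 0-2  : player index (0 = no player, 1-7 = player slots)
#   bits 3-15 : depth in millimetres (13 bits, so at most 8191 mm)
# pack/unpack are hypothetical helpers for illustration only.

PLAYER_INDEX_BITS = 3
PLAYER_INDEX_MASK = (1 << PLAYER_INDEX_BITS) - 1  # 0b111
MAX_DEPTH_MM = (1 << (16 - PLAYER_INDEX_BITS)) - 1  # 8191


def pack(depth_mm: int, player: int) -> int:
    """Pack a depth value and a player index into one 16-bit word."""
    if not 0 <= depth_mm <= MAX_DEPTH_MM:
        raise ValueError("depth does not fit in 13 bits")
    if not 0 <= player <= PLAYER_INDEX_MASK:
        raise ValueError("player index does not fit in 3 bits")
    return (depth_mm << PLAYER_INDEX_BITS) | player


def unpack(word: int) -> tuple[int, int]:
    """Split a 16-bit word back into (depth_mm, player_index)."""
    return word >> PLAYER_INDEX_BITS, word & PLAYER_INDEX_MASK


word = pack(2500, 2)
print(hex(word), unpack(word))
```

The trade-off in this assumed layout is that every bit spent on the player index is a bit of depth range or precision given up.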

Is it even possible?

I mean, lip reading is an iffy science as it is.

And why would you need to lip read if it can recognize voice commands anyway?

I use my Zune Pass for parties and I turn the music up loud. If I wanted to search for a song it wouldn't work because of the music. So, if there was lip reading I would be able to turn the music up and still use the hands-free searching, provided it was offered.

NemesisPrime

Banned

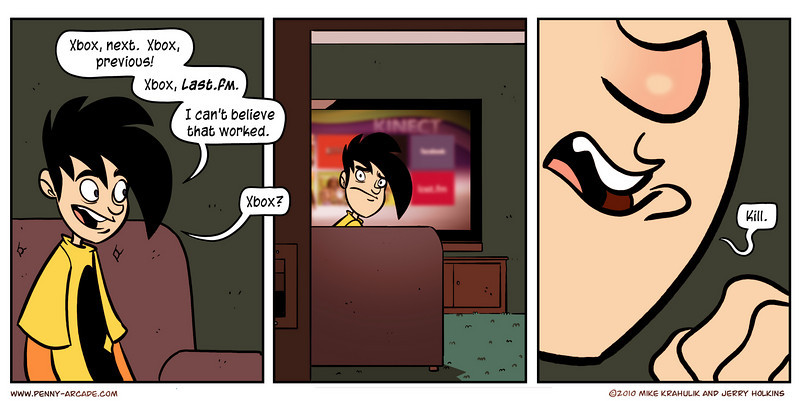

So it can automatically ragequit games for you?

Good idea. When you throw down the controller, it sends an angry message to your opposition, logs you off and shuts down the Xbox.

TBH the current Kinect could do this *if* it got better data. So Kinect 2 will just get HD cameras, and the rest is up to the SDK.

Alx

Member

You don't use raw data for the depth field. You detect jitter between adjacent points. Meaning it's physically impossible to produce a depth field of the same resolution as the camera. If the adjacent camera pixel is lit up by another ray, the jitter information is destroyed. That's why Kinect depth fields are 320x240, even though the two cameras used to produce them are both 640x480.

The native resolution of the sensor used for depth measurement is 1200x960.

http://openkinect.org/wiki/Hardware_info#Depth_Image_sensor

The real precision of depth information depends on the distance to the observed object, but it is usually higher than 320x240 (otherwise there would be no point in retrieving a 640x480 depth info).
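The point that depth precision depends on distance follows from triangulation. With focal length f (in pixels), baseline b and disparity d, depth is Z = f * b / d, so a one-step change in disparity shifts depth by roughly Z^2 * (disparity step) / (f * b): the error grows with the square of the distance. The Python sketch below evaluates that relation; the focal length, baseline and sub-pixel disparity step are illustrative assumptions, not official Kinect figures.

```python
# Depth resolution of a triangulation-based depth sensor.
# Depth from disparity:  Z = f * b / d
# Differentiating:       |dZ| ~= Z**2 / (f * b) * |dd|
# so the smallest resolvable depth step grows with the square of distance.
# The three constants below are illustrative assumptions, not Kinect specs.

FOCAL_PX = 580.0           # assumed IR camera focal length, in pixels
BASELINE_M = 0.075         # assumed projector-to-camera baseline, in metres
DISPARITY_STEP_PX = 1 / 8  # assumed smallest measurable disparity change, in pixels


def depth_step_m(z_m: float) -> float:
    """Approximate smallest depth difference (metres) resolvable at distance z_m."""
    return z_m ** 2 * DISPARITY_STEP_PX / (FOCAL_PX * BASELINE_M)


for z in (1.0, 2.0, 4.0):
    print(f"at {z:.0f} m: ~{depth_step_m(z) * 1000:.1f} mm per disparity step")
```

With these constants the step is a few millimetres at 1 m and several centimetres at 4 m. The quadratic growth is the robust part; the exact numbers depend entirely on the assumed constants.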

USB was not the bottleneck; the skeletal recognition takes huge processing power and RAM. I've watched it choke up an i7 with 16 GB of RAM.

I've been using the beta SDK for some time on my laptop, and it has no difficulty detecting and tracking a skeleton. I didn't check the CPU use in detail, but I certainly don't have 16GB of RAM. There may be a problem in your software design if it's so slow...

The SDK is supposed to use the GPU too, but I didn't check how much is done on it either.

Combichristoffersen

Combovers don't work when there is no hair

Good idea. When you throw down the controller, it sends an angry message to your opposition, logs you off and shuts down the Xbox.

It registers you yelling, sends a poorly written message full of homophobic slurs to the guy who killed you, and logs you off XBL. We might be onto something here.

thelurkinghorror

Member

btw, they said Kinect 2 will be able to read your lips; they haven't said this will be a useful function or that it will be used in games or the dashboard...

Risk Breaker

Member

USB being a bottleneck for a 320x240 signal at 30 Hz... weird, my PSEye works at 640x480 at 60 Hz or 320x240 at 120 Hz and it also uses USB ;O

Yep, and game journalists constantly get bribed to write good reviews.

Ever heard of "controlled leak"?

tinfoilhat.jpg

InaudibleWhispa

Member

And it also doesn't have a 3D depth camera, which is the thing that is supposedly being limited.

USB being a bottleneck for a 320x240 signal at 30 Hz... weird, my PSEye works at 640x480 at 60 Hz or 320x240 at 120 Hz and it also uses USB ;O

Alx

Member

USB being a bottleneck for a 320x240 signal at 30 Hz... weird, my PSEye works at 640x480 at 60 Hz or 320x240 at 120 Hz and it also uses USB ;O

PSEye has been optimized to stream video data and only that (I think RGB info is coded on 3 bytes instead of the usual 4).

A Kinect stream is a 32-bit color image + 16-bit depth image (+ sound). It's bigger in size than a regular image, and probably less optimized.

I think we did the calculations a few months ago: the PSEye would use the full bandwidth of a USB 2 port. You probably can't use another device on the same USB controller (but that's not a big problem on PS3, where few peripherals are connected through USB).
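Here is the same kind of calculation for the PSEye/Kinect comparison, using the figures quoted in the thread: 3 bytes per pixel for the PSEye, and 32-bit color plus 16-bit depth for Kinect. It is a rough Python sketch that ignores audio, USB protocol overhead, and whatever pixel formats or compression the real devices use.

```python
# Rough comparison of raw per-second payloads, using the figures quoted
# in the thread (assumptions, not device specifications):
#   PSEye:  3 bytes per RGB pixel
#   Kinect: 4 bytes per color pixel + 2 bytes per depth sample
# Audio, USB protocol overhead and real pixel encodings are ignored.


def mbytes_per_s(width: int, height: int, bytes_per_pixel: int, fps: int) -> float:
    """Raw, uncompressed data rate in megabytes (10**6 bytes) per second."""
    return width * height * bytes_per_pixel * fps / 1_000_000


pseye_640_60 = mbytes_per_s(640, 480, 3, 60)
pseye_320_120 = mbytes_per_s(320, 240, 3, 120)
kinect_depth_320 = mbytes_per_s(640, 480, 4, 30) + mbytes_per_s(320, 240, 2, 30)
kinect_depth_640 = mbytes_per_s(640, 480, 4, 30) + mbytes_per_s(640, 480, 2, 30)

for name, rate in [
    ("PSEye 640x480 @ 60 Hz", pseye_640_60),
    ("PSEye 320x240 @ 120 Hz", pseye_320_120),
    ("Kinect color 640x480 + depth 320x240 @ 30 Hz", kinect_depth_320),
    ("Kinect color 640x480 + depth 640x480 @ 30 Hz", kinect_depth_640),
]:
    print(f"{name}: {rate:.1f} MB/s")
```

On these assumptions the raw totals land in the same ballpark (about 55 MB/s for the PSEye at 640x480/60 Hz versus roughly 41 to 55 MB/s for Kinect, depending on depth resolution), so the comparison really hinges on the encodings the devices actually use, which the thread doesn't pin down.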

Risk Breaker

Member

PSEye has been optimized to stream video data and only that (I think RGB info is coded on 3 bytes instead of the usual 4).

A Kinect stream is a 32-bit color image + 16-bit depth image (+ sound). It's bigger in size than a regular image, and probably less optimized.

I think we did the calculations a few months ago: the PSEye would use the full bandwidth of a USB 2 port. You probably can't use another device on the same USB controller (but that's not a big problem on PS3, where few peripherals are connected through USB).

It also uses sound, I dunno. I can have both the PSEye using headtracking + voice chat and a driving wheel on my 80GB fat PS3. I would think MS would've optimized it better.

AlanzTalon

Member

Doesn't say anything about it being built in. That'd be dumb with entertainment centers.

I think what they really mean is packed in, with a port designed specifically for it built into the machine, i.e. that Kinect is now standard equipment (which is obvious).

The Technomancer

card-carrying scientician

As long as I can still stick my computer in the middle of that process, bring it on!

I think some people have got a bit carried away here.

They're not saying lip reading is the next great innovation in gaming, or even that it has a practical purpose in anything they're working on. Just that the technology is advanced and precise enough to pick up very subtle human elements such as lip movements, which is clearly a great step up from the Kinect we have now.

I don't own a Kinect and have very little interest in any Kinect 2, but the fact that the second hardware is going to be much more advanced is good news. At the least the potential is there for the hardware to do better things than it does currently, which MIGHT lead to better gameplay experiences for all of us (but I doubt it).

People are deluded if they think Microsoft are dropping Kinect anytime soon, or that they don't consider it a success. If we are stuck with it, we might as well get a better version.

REMEMBER CITADEL

Banned

It also uses sound, I dunno. I can have both the PSEye using headtracking + voice chat and a driving wheel on my 80GB fat PS3. I would think MS would've optimized it better.

It's already been explained, but PSEye doesn't provide the same amount of data over the same connection. It completely lacks the depth component.

InaudibleWhispa

Member

I think what they really mean is packed in

Yeah, there is 0% chance of Kinect being built into the console itself. It requires completely different positions depending on the user's setup, and there is no way Microsoft are expecting some people to put an entire console on top of their TV. Plus, Kinect tilts to scan the room and see the necessary body parts.

The PSEye is different technology to Kinect's main sensor, you can't really compare them like that. Headtracking is just software making use of the standard PSEye stream. Kinect is passing along the same kind of RGB data from one camera, as well as feeding the depth data from a sensor reading an IR projection like this.

It also uses sound, I dunno. I can have both the PSEye using headtracking + voice chat and a driving wheel on my 80GB fat PS3. I would think MS would've optimized it better.

Angry Fork

Member

$599

I wish so bad this would happen. It would break the internet. The 2 douche-looking MS guys come out (one is wannabe Tom Cruise, the other is that sunglasses Kinect guy), they show off Kinect with a family on stage etc. 40 minutes pass of this over and over. Then $599 US. Explosion.

natethegreat409

Member

I'm not fat, Microsoft.... You don't need to "get me off the couch". I work out plenty on my own and everything. Give me games I can play sitting down with a controller in my hand that make me think and actually try. Otherwise, I will not be buying a new console. I'm switching to PC gaming after this generation and its horrible hardware issues. My computers never break compared to these shitty consoles they are putting out now.

So it can automatically ragequit games for you?

I lol'ed

I wish so bad this would happen. It would break the internet. The 2 douche-looking MS guys come out (one is wannabe Tom Cruise, the other is that sunglasses Kinect guy), they show off Kinect with a family on stage etc. 40 minutes pass of this over and over. Then $599 US. Explosion.

Why would anyone want a company to fuck up so bad? That's no different than the dude in the other thread who said he can't wait for Sony to fuck up with the PS4.

Don't worry, I already know the answer, but it's still sad.

Risk Breaker

Member

The PSEye is different technology to Kinect's main sensor, you can't really compare them like that. Headtracking is just software making use of the standard PSEye stream. Kinect is passing along the same kind of RGB data from one camera, as well as feeding the depth data from a sensor reading an IR projection like this.

Ah, I guess it doesn't compress the information from the IR matrix depth calculation and it does consume a bit of bandwidth. Will a better connection/bandwidth make Kinect that much better though?

Angry Fork

Member

Why would anyone want a company to fuck up so bad? That's no different than the dude in the other thread who said he can't wait for Sony to fuck up with the PS4.

Don't worry, I already know the answer, but it's still sad.

Because it's funny. Besides Sony managed not to kill itself and bounced back. Even if MS did something similar they'd be fine. I just would love to see the internet reaction so bad.

LowEndTorque

Member

Cool. Bring on the Britney Spears lip-syncing game.

Americanmushroom

Banned

That's some great technology. It's a shame it's only gonna be used for dance games and stuff. Oh well, the marketing will take care of that problem.

InaudibleWhispa

Member

I'm guessing this rumour is referring to new hardware though, so naturally more bandwidth will be hugely beneficial.

Ah, I guess it doesn't compress the information from the IR matrix depth calculation and it does consume a bit of bandwidth. Will a better connection/bandwidth make Kinect that much better though?

NemesisPrime

Banned

It registers you yelling, sends a poorly written message full of homophobic slurs to the guy who killed you, and logs you off XBL. We might be onto something here.

Yeah day one!
