digoutyoursoul

Member

What made Microsoft go with a turd of a GPU this time around? Didn't 360 have a better GPU than PS3?

No, MS won't do that. People just have to realize, I believe, that MS is targeting the XB1 differently than it did the 360. They also already said they didn't target the higher end of graphics, etc., for gaming, people just didn't listen. In the end, MS is banking on the app and TV functionality making the XB1 take off like the Wii did. Remember, the Wii's graphics capabilities were NOTHING like the X360 or the PS3, but it did very well. MS wants to do that, and the XB1 system is the way they believe they can make that happen.

ms can still win the tech wars by dropping xb1 price to $349

$499 for a clearly weaker console, lol, not even Apple is that bad. MS is trying to be the new Nintendo.

I don't think ESRAM makes sense as an excuse for CoD. The engine is not using deferred rendering.

Weak GPU makes more sense. Fillrate issue.

That is also true. The 360 had a forward-looking GPU with a unified shader architecture, but the PS3 had the separate vertex and pixel pipeline design of the Nvidia 7xxx-series GPUs.

At least both the X1 and the PS4 are using GCN which is a current and up to date architecture.

I don't see all the fuss. It's early and devs haven't even tapped into the cloud yet.

right. architecturally the X1 GPU isn't as dated as RSX was. so there's that.

Well, unless I'm mistaken, so is the PS4's GPU. Not to downplay the comparison too much, but yeah.

And it fits in line with them asking for the extra 10% of GPU use. Memory might not be the weak link in this case.

Actually, it was an accurate statement in terms of performance.

Though this is also true.

Not in terms of fillrate.

I have only seen confirmation that COD Ghosts on next gen adds a bunch of new lighting effects (HDR, volumetric, self shadowing). I haven't seen any confirmation that it isn't using deferred rendering to make it happen.

we do not know if there is a performance difference between the two versions. we do not know if the Xbox One version is running all the same effects at the same complexity as the PS4 version.

we only know the resolutions. remember Battlefield 4, running at a lower average framerate on Xbox One and (currently) missing at least one effect to boot?

10% more pixels over 720p isn't going to get you very far at all. It would only mean that the PS4 version was pushing roughly 2x the number of pixels instead of 2.25x.
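A quick sanity check of the pixel arithmetic, as a minimal sketch. It assumes the two versions render at 1280x720 and 1920x1080, and that an extra 10% of GPU time would translate one-to-one into 10% more pixels, which is a generous assumption and not something confirmed in the thread.

```python
def pixels(width, height):
    """Total pixels in a frame of the given dimensions."""
    return width * height

p720 = pixels(1280, 720)
p1080 = pixels(1920, 1080)

# Hypothetical: the Xbox One version renders 10% more pixels than 720p.
p720_boosted = p720 * 1.10

ratio_plain = p1080 / p720
ratio_boosted = p1080 / p720_boosted

print(f"720p:  {p720:,} pixels")
print(f"1080p: {p1080:,} pixels")
print(f"1080p vs 720p:       {ratio_plain:.2f}x")
print(f"1080p vs 720p + 10%: {ratio_boosted:.2f}x")
```

Under those assumptions the gap only moves from 2.25x to a little over 2x, so the resolution difference stays large either way.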

The Wii wasn't $500. Targeting a mainstream audience with an enthusiast price makes no sense.

The 10% extra GPU power would probably not have resulted in a resolution bump, but it may have resulted in an effects bump or a flat frame rate bump. It will be interesting to see the comparisons for COD, as the fact they asked for that extra power suggests to me that even at 720p they are (perhaps were) having some problems with something.

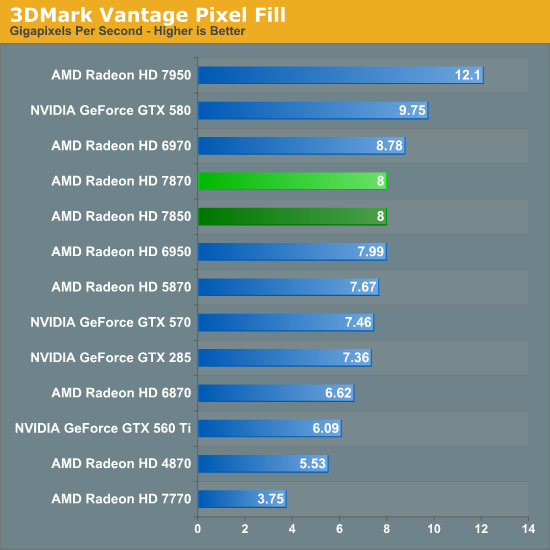

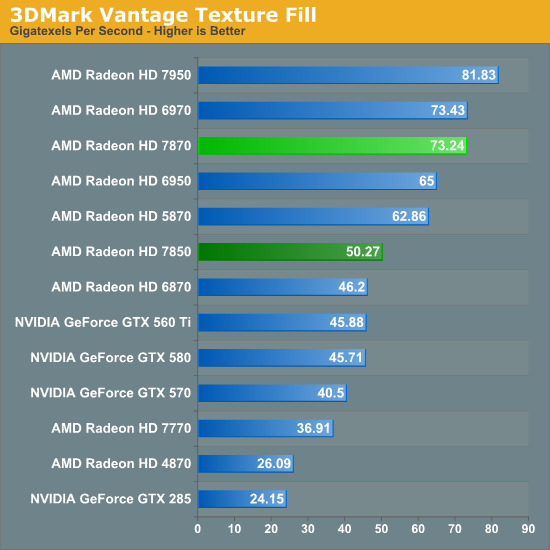

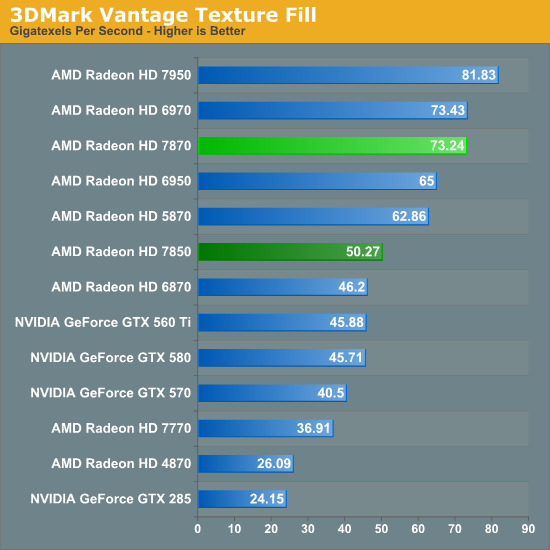

The Xbone is substantially weaker, and the difference shows most at 1080p. Look up performance on the 7770 (it will get harder and harder to find because it is so outdated by now). It can't do 1080p/60fps in anything released within the last two years. The PS4's 7850/7870-class GPU is right at 1080p/60fps in the same games.

MS isn't winning a thing this gen, imo. However, they can stay competitive by dropping the price to $349.

However, how long will that take? A $100 price drop would do wonders in terms of sales but, I don't see even a $50 price drop until post-summer 2014. A $150 price drop? I don't see it being that low until at least 2015.

Then, would Sony just sit back and let them undercut them in price? I don't think so. They're going to make sure the PS4 is cheaper than the X1 alllll gen.

One of those cards has 1GB of video RAM, the other 2GB. I think that's an unfair comparison; find some benchmarks with the 1GB 7850.

Both consoles are using very standard parts, so it really comes down to who is able to absorb the loss and how fast part costs scale down.

What's the most expensive part of each system? I have to believe the PS4 actually scales better but I don't know how Kinect factors in. Does ESRAM come down in cost over time? Cause GDDR5 will get very cheap.

MS can cut Kinect from the package to cut price substantially. It's just a question of whether or not they are too proud to do it.

PS4: 1.84 TF GPU (18 CUs)

PS4: 1152 shaders

PS4: 72 texture units

PS4: 32 ROPs

PS4: 8 ACEs / 64 queues

PS4: 8GB GDDR5 @ 176GB/s

Versus

Xbone: 1.31 TF GPU (12 CUs)

Xbone: 768 shaders

Xbone: 48 texture units

Xbone: 16 ROPs

Xbone: 2 ACEs / 16 queues

Xbone: 8GB DDR3 @ 69GB/s + 32MB ESRAM @ 109GB/s
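For anyone wondering where the TF figures in the list above come from, here is a rough sketch of the usual peak-throughput formula for GCN parts: 64 shaders per CU, 2 FLOPs per shader per clock. The CU counts are from the list; the clock speeds (800 MHz for PS4, 853 MHz for Xbox One) are not stated in this thread and are assumed from publicly reported figures.

```python
SHADERS_PER_CU = 64              # width of one GCN compute unit
FLOPS_PER_SHADER_PER_CLOCK = 2   # a fused multiply-add counts as 2 FLOPs

def shader_count(compute_units):
    """Number of shader ALUs for a given CU count."""
    return compute_units * SHADERS_PER_CU

def peak_tflops(compute_units, clock_mhz):
    """Theoretical peak single-precision TFLOPS; real workloads land lower."""
    flops = shader_count(compute_units) * FLOPS_PER_SHADER_PER_CLOCK * clock_mhz * 1e6
    return flops / 1e12

# Clock speeds below are assumptions, not taken from the thread.
ps4_tf = peak_tflops(18, 800)
xb1_tf = peak_tflops(12, 853)

print(f"PS4:   {shader_count(18)} shaders, {ps4_tf:.2f} TF")
print(f"Xbone: {shader_count(12)} shaders, {xb1_tf:.2f} TF")
print(f"PS4 advantage: {ps4_tf / xb1_tf:.2f}x")
```

These are paper numbers only. They say nothing about how much of that throughput a given engine can actually use.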

ESRAM scales really well with node shrinks compared to logic, so as these chips are shrunk to 20nm and below, the percentage gap between the X1 APU and the PS4 APU will shrink.

Further researching...

Anvil Next (some Ubi games). It uses deferred lighting. Frostbite 3 (lots of EA games)... deferred lighting. UE4... deferred. Cryengine 3... deferred. Crystal engine (Tomb Raider, Deus Ex: Human Revolution)... deferred.

We won't have to wait long to find out for sure if resolution issues are framebuffer related or launch related.

Everyone is going deferred. Which is why it probably killed the engineers to only have 32mb of ESRAM on xbone but I doubt the suits cared.
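To put a number on why 32MB is tight for deferred rendering, here is a back-of-the-envelope sketch. The G-buffer layout (four 32-bit color targets plus a 32-bit depth buffer) is a hypothetical example, not any specific engine's layout. Real engines vary, and they can also tile or split targets between ESRAM and main memory.

```python
MIB = 1024 * 1024
ESRAM_MIB = 32  # ESRAM size quoted in the thread

def render_target_bytes(width, height, bytes_per_pixel=4):
    """Size of one full-screen render target."""
    return width * height * bytes_per_pixel

def gbuffer_mib(width, height, color_targets=4, depth_bytes=4):
    """Size in MiB of an assumed G-buffer: N color targets plus one depth buffer."""
    color = color_targets * render_target_bytes(width, height)
    depth = render_target_bytes(width, height, depth_bytes)
    return (color + depth) / MIB

for name, (w, h) in {"720p": (1280, 720), "1080p": (1920, 1080)}.items():
    size = gbuffer_mib(w, h)
    verdict = "fits in" if size <= ESRAM_MIB else "exceeds"
    print(f"{name}: {size:.1f} MiB, {verdict} {ESRAM_MIB} MiB of ESRAM")
```

With this assumed layout the 720p G-buffer fits comfortably and the 1080p one does not, which is consistent with the resolution worry, though it does not prove that is what is happening in any particular game.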

Well sounds like this won't be a good gen for MS multiplats then

ESram seems very limiting

I wonder if KI is using deferred lighting as well. Would explain 720p.

Wasn't the entire point of using eSRAM to save money?

Microsoft obviously think 720p is good enough. If they didn't, Killer Instinct and that gold game would be running at higher resolutions. Neither *needs* real time lighting. We know they don't prioritize 1080p, because most of their exclusive launch games aren't 1080p (and all of Sony's exclusives are).

So why should we expect them to make sure the hardware can do 1080p with advanced rendering techniques?

I honestly think they believe 720p is fine like almost every video game editorial writer and youtube commenter.

It was to save money vs. using EDRAM. They opted not to use GDDR5 because they didn't think it would be available in quantity by fall 2013.

I think people are forgetting one important fact

xbone has more ram than ps4 which will allow for more effects like svogi and dogus

Yeah 32MB broken into even smaller 4x8MB chunks. Not much you can do with 8MB with the next-gen engines that are coming down the pipes.

Likely, just like in the 360 era, this eSRAM will mostly be used for post-processing type stuff and not much else. So when it comes to the main graphics engine, you will have to rely solely on the 67GB/s of bandwidth, which is just ... impossible. Impossible for launch games, more than impossible for true next-gen "built from the ground up with final hardware in mind on final devkits" engines.

I don't honestly know what Microsoft can do.
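One way to read the bandwidth numbers is as a per-frame budget: how much data the memory bus could move in a single frame at peak. A minimal sketch using the figures quoted in this thread (67GB/s for the Xbox One's DDR3, 176GB/s for the PS4's GDDR5); these are theoretical peaks shared with the CPU, so the usable amount is lower.

```python
def mb_per_frame(bandwidth_gb_s, fps):
    """Peak data movable per frame, in MB (decimal), at a given frame rate."""
    return bandwidth_gb_s * 1000 / fps

for label, bandwidth in [("Xbone DDR3", 67), ("PS4 GDDR5", 176)]:
    for fps in (30, 60):
        budget = mb_per_frame(bandwidth, fps)
        print(f"{label} @ {fps}fps: {budget:.0f} MB per frame")
```

At 60fps that works out to roughly 1.1GB per frame over DDR3 versus roughly 2.9GB over GDDR5, before counting whatever traffic the ESRAM can absorb.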

It was to save money vs. using EDRAM.

Yes but the general memory scenario for the XB1 relative to the PS4 has far less room for future price drops

I.e., while the ESRAM will likely get cheaper over time as they successfully shrink the APU (pretty sure they'll accomplish this, only time will tell), the majority of the memory, the DDR3, is already at rock-bottom prices.

The PS4 however has GDDR5 memory which will drop in price quite a lot over the gen

CPUs are the same, not sure what the price disparity is between the GPUs though.

More RAM? You mean 32MB more? According to GAF's insiders, the OS reserve is 2GB on the PS4, which actually gives it 1GB more RAM for games compared to the Xbox One.

According to AMD, "the Radeon HD 7770 offers up 1.28 TFLOPS of compute performance, with a texture fillrate of 40GT/s, a pixel fillrate of 16 GP/s, and peak memory bandwidth of 72GB/s."

Do we know the X1's fillrates?
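Theoretical fillrates can be estimated from the ROP and texture unit counts posted earlier in the thread: pixel fillrate is ROPs times clock, texel fillrate is texture units times clock. A rough sketch follows; the console clocks (853 MHz and 800 MHz) and the 7770's configuration (16 ROPs, 40 texture units, 1000 MHz) are assumptions from publicly reported specs, not from this thread.

```python
def pixel_fillrate_gpix(rops, clock_mhz):
    """Theoretical peak pixel fillrate in gigapixels per second."""
    return rops * clock_mhz / 1000

def texel_fillrate_gtex(texture_units, clock_mhz):
    """Theoretical peak texture fillrate in gigatexels per second."""
    return texture_units * clock_mhz / 1000

# (ROPs, texture units, clock in MHz); the clocks are assumed, see above.
gpus = {
    "HD 7770": (16, 40, 1000),
    "Xbone":   (16, 48, 853),
    "PS4":     (32, 72, 800),
}

for name, (rops, tmus, clock) in gpus.items():
    gp = pixel_fillrate_gpix(rops, clock)
    gt = texel_fillrate_gtex(tmus, clock)
    print(f"{name}: {gp:.1f} GP/s pixel, {gt:.1f} GT/s texture")
```

The 7770 row reproduces AMD's quoted 16 GP/s and 40 GT/s, which suggests the formula is the right one. The console rows are only as good as the assumed clocks.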

Oh right, yeah.

Yeezus, is Xbone the most cynically designed console of all time? It's pretty much completely unlikeable.