Shmunter

Member

I think he means a tech talk, not just paid PR, lol. "We are excited, blah, blah, blah."

Sony has already given indications about this. Nothing has changed since.

What kind of cooling do you think is gonna be required for the SSD, SSD expansion, and GPU at full clock? This baby is gonna run pretty hot, I assume?

I am completely calm. I am also betting that there is absolutely no way that the PS5 OS will only take 0.5 GB of RAM because of the SSD. Your comments in the video and the tone you use speak for themselves. You imply that good Sony made a console (with ...variable clock speeds) for developers while the big bad MS made an ultra powerful console because of marketing reasons. Everyone can watch the video, listen to what you are saying and draw their own conclusions.

Calm down man, come on.

WHY and HOW am I biased? This is not propaganda, this is just discussing tech. I even say in my video the rates ARE variable, and I even show that this is the case now for PC hardware; it is not new. But to help clear it up: the PS5 clocks ARE variable, as required by the load.

As for the second part, I am truly lost.

Is that a joke? Because it makes absolutely no sense lol

WTF?

XSX has twice the bandwidth of the PS5;

896 GB/s vs 448 GB/s.

A few weeks back, folks here claimed AMD were liars about PS5 having RDNA2.

That is what Cerny said. He also said he spent 2 years talking with devs to learn what they want for a new PlayStation. Any reason to think he lied?

NXGamer Thanks for dropping by, hope to see you participating in future threads. We are all thirsty for technical details and appreciate your input

I love the entire "You are biased and here is why" projection I have seen a great deal of today, all this feigned "I am not bothered about any of this, he is wrong, no details on why but hey, I am too good for that and also, I am so not invested" attitude. Why are you making anything personal here?

The For Developers comment is, as I do explain in the video, getting the Buy-in from the team to help support the product. Sony know they need the 3rd Party as much as 1st. MS have done a great job and they are pushing things on, but I have spoken with enough Devs in detail on the subject and Sony has/was in a much better place on the PS4 with a better piece of hardware, SDK and support. This carries a great deal of weight and is the factor I am discussing here.

I have the ego? I actually find that almost all people who know me and watch me think the opposite, but I am happy to discuss this. Why do I have an ego?

And what am I "getting wrong" or stretching here so you can enlighten me?

Did you just add 560 + 336 GB/s to make 896 GB/s? Please explain that logic. Also, I was talking about I/O bandwidth, not memory.

And both Xbox and PS5 have the same RAM. Don't know where you're pulling all this from.

Also, please explain what you mean by PS5 sharing it with the CPU and how that's different from Xbox.

Why do you think this? Both use the same GDDR6 ram from what I saw.

But it doesn't.

And ps5's ssd isn't? Genuinely curious..

And of course Mark Cerny is an idiot who wouldn't take into account how the drive he himself designed gets hot, and throttled to nullify that 5GBps he worked so hard to achieve I suppose.

All evidence points to the opposite so far.

It's possible for the GPU to access the 10 GB of RAM and the CPU to access 3.5 GB of the 6 GB of RAM, with 2.5 GB reserved for the OS.

My mistake, it seems they're both using 14 Gbps RAM.

You will be able to replace the PS5's internal SSD.

from Digital Foundry:

"The internal SSD can be replaced with a bigger hard drive with an off-the-shelf drive - meaning NVMe PC drives will work on your console."

PS5 SSD expansion upgrades explained: Compatible SSDs and other external storage options explored

How the PS5's external SSD storage upgrade works, including compatible SSDs and other external storage options, explained.

www.eurogamer.net

No, but he was giving the maximum sequential speed of the SSD, which is irrelevant for games as random access is what matters in gaming.

What evidence?

It's possible for the GPU to access the 10 GB of memory and CPU to access 3.5 GB of the slower 6 GB of memory, as 2.5 GB is reserved for the OS.

Is that a joke? Because it makes absolutely no sense lol

Xbox memory is 10GB at 560GB/s and 6GB at 336GB/s.

It is only a single bus/pool; you can't access both parts simultaneously to combine the bandwidth lol

In the best case you have a theoretical 560GB/s memory access... in the worst case you have a theoretical 336GB/s memory access.

896GB/s is not even a good joke.

It is only a single 320-bit bus... you can’t access more than that at the same time lol

It's possible for the GPU to access the 10 GB of memory and CPU to access 3.5 GB of the slower 6 GB of memory, as 2.5 GB is reserved for the OS.

The GPU can be utilizing 560 GB/s of bandwidth and the CPU can be using 336 GB/s.

So, the XSX doesn't have to share its bandwidth with the CPU, unlike the PS5.

It does not have to be partitioned that way as developers can use it how they want to, but that's likely what most devs will do.

from Digital Foundry:

Inside Xbox Series X: the full specs

This is it. After months of teaser trailers, blog posts and even the occasional leak, we can finally reveal firm, hard …

www.eurogamer.net

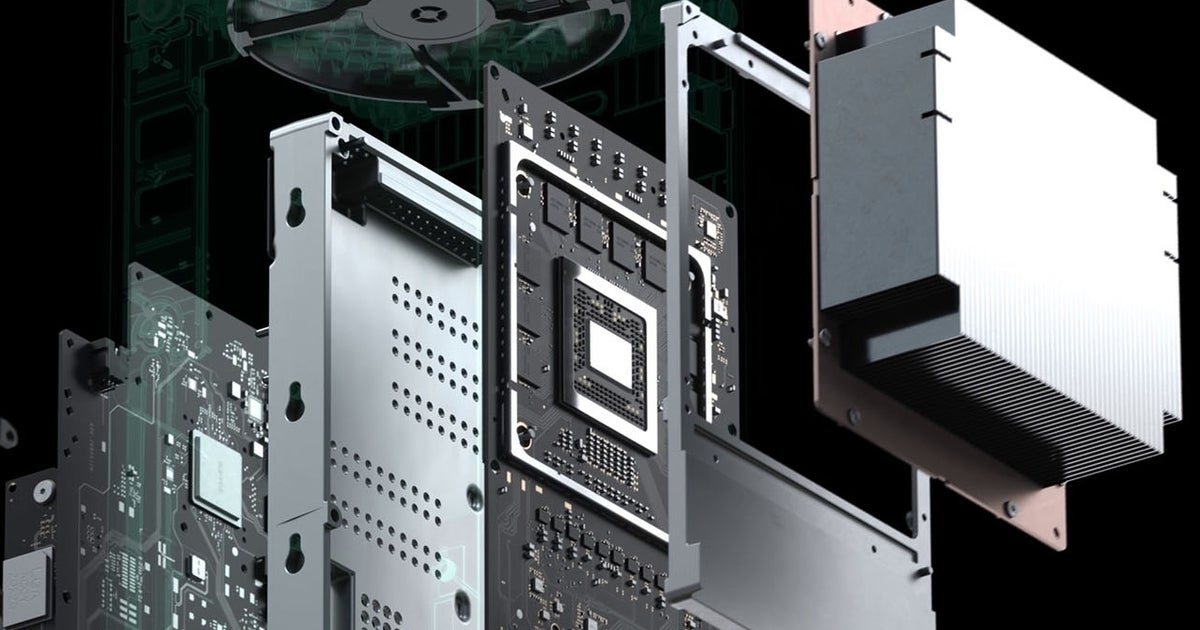

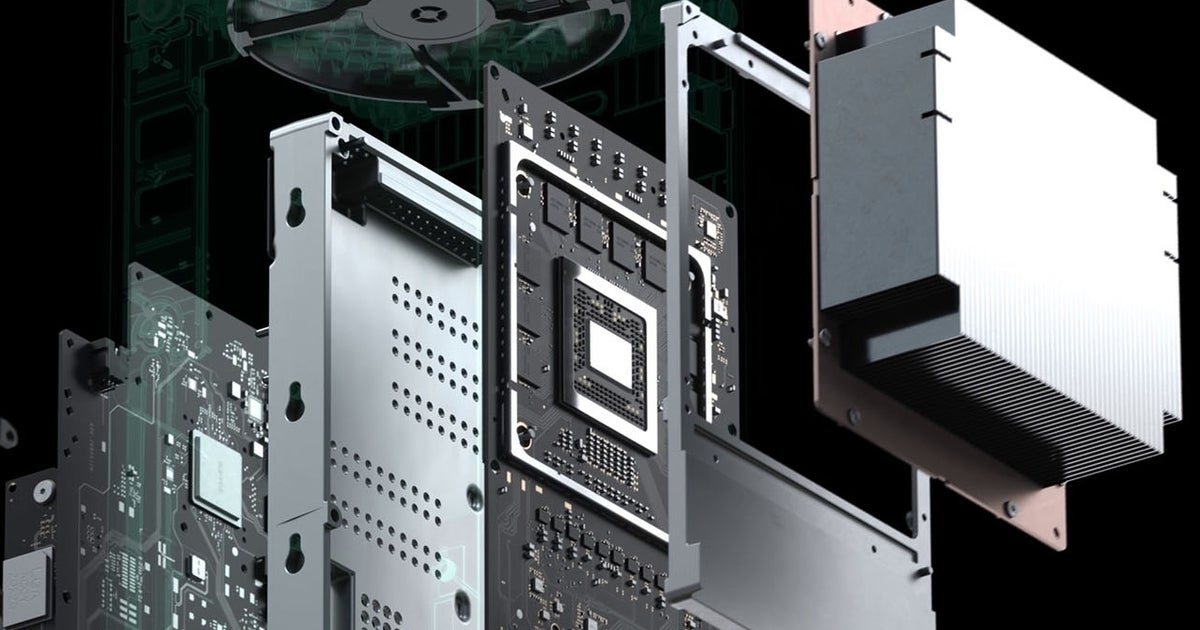

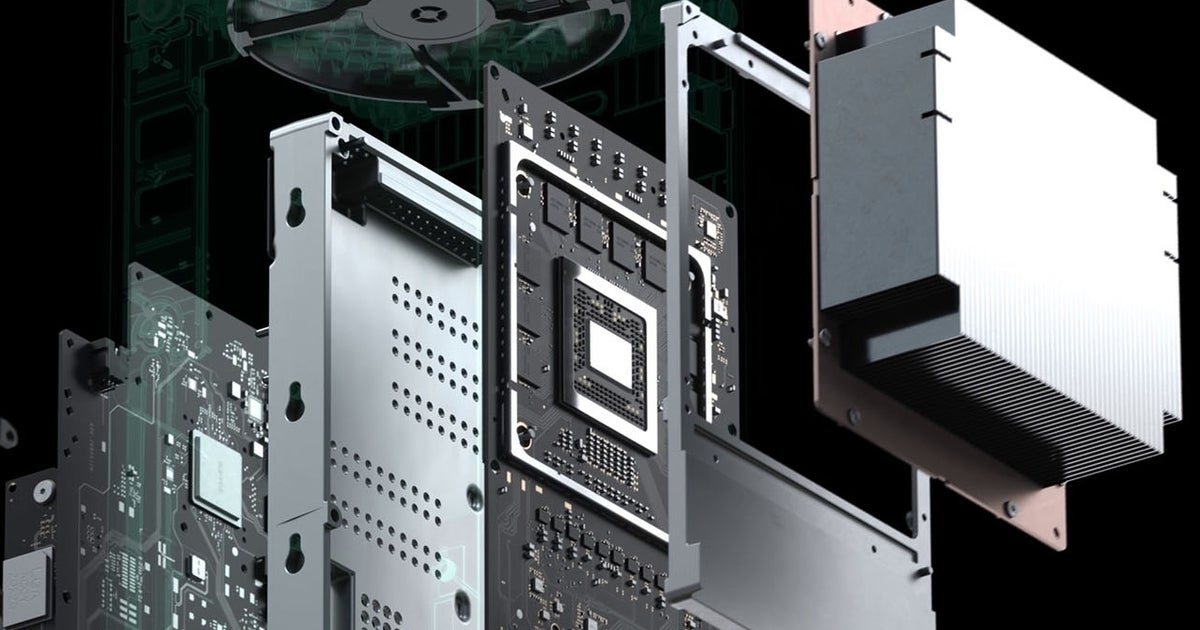

"Microsoft's solution for the memory sub-system saw it deliver a curious 320-bit interface, with ten 14gbps GDDR6 modules on the mainboard - six 2GB and four 1GB chips. How this all splits out for the developer is fascinating.

"Memory performance is asymmetrical - it's not something we could have done with the PC," explains Andrew Goossen "10 gigabytes of physical memory [runs at] 560GB/s. We call this GPU optimal memory. Six gigabytes [runs at] 336GB/s. We call this standard memory. GPU optimal and standard offer identical performance for CPU audio and file IO. The only hardware component that sees a difference in the GPU."

In terms of how the memory is allocated, games get a total of 13.5GB in total, which encompasses all 10GB of GPU optimal memory and 3.5GB of standard memory. This leaves 2.5GB of GDDR6 memory from the slower pool for the operating system and the front-end shell. From Microsoft's perspective, it is still a unified memory system, even if performance can vary. "In conversations with developers, it's typically easy for games to more than fill up their standard memory quota with CPU, audio data, stack data, and executable data, script data, and developers like such a trade-off when it gives them more potential bandwidth," says Goossen. "
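The 560GB/s and 336GB/s figures in the quoted passage can be reproduced from the chip layout it describes. The following is only a rough sketch: the 32-bit interface per chip is an assumption (320 bits divided by ten chips), the striping layout (the first 1GB of every chip forms the fast region, the extra 1GB on each 2GB chip forms the slow region) is also an assumption, and the function and variable names are made up for illustration.

```python
# Peak GDDR6 bandwidth from bus width and per-pin data rate.
PIN_RATE_GBPS = 14   # per-pin data rate quoted in the article (14 Gbps)
BITS_PER_CHIP = 32   # assumption: 320-bit bus / 10 chips


def peak_bandwidth_gbs(num_chips: int) -> float:
    """Peak bandwidth in GB/s when an access is striped across num_chips chips."""
    bus_width_bits = num_chips * BITS_PER_CHIP
    return bus_width_bits * PIN_RATE_GBPS / 8  # divide by 8: bits -> bytes


# Six 2GB chips and four 1GB chips, as described in the article.
chip_sizes_gb = [2] * 6 + [1] * 4

# Assumed striping: the first 1GB of every chip forms the fast region,
# the second 1GB of each 2GB chip forms the slow region.
fast_chips = len(chip_sizes_gb)
slow_chips = sum(1 for size in chip_sizes_gb if size > 1)
fast_region_gb = fast_chips * 1
slow_region_gb = sum(size - 1 for size in chip_sizes_gb)

print(f"fast region: {fast_region_gb} GB at {peak_bandwidth_gbs(fast_chips)} GB/s")
print(f"slow region: {slow_region_gb} GB at {peak_bandwidth_gbs(slow_chips)} GB/s")
```

Under these assumptions both regions sit behind the same 320-bit interface, and the slow region simply uses fewer of its lanes (192 bits).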

You have misunderstood the presentation. It is a shared 320-bit bus that drops to 192 bits when accessing the higher-density chips, due to striping. When you access the faster 10GB of the memory space you get 560GB/s; when you access the slower 6GB of the memory space you get 336GB/s.

You cannot access these at the same time, and the CPU and GPU cannot access memory at the same time. It's a contended resource and not a split pool.
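To put a number on why the two figures don't add up, here is a toy model, assuming the bus serves the fast and slow regions one after another and ignoring every other overhead (arbitration, refresh, access patterns). The function name and the model itself are illustrative assumptions, not anything Microsoft has published.

```python
FAST_GBS = 560.0  # peak bandwidth of the 10GB region
SLOW_GBS = 336.0  # peak bandwidth of the 6GB region


def effective_bandwidth_gbs(fast_gb: float, slow_gb: float) -> float:
    """Average bandwidth when fast_gb and slow_gb of traffic share one bus in turn."""
    total_gb = fast_gb + slow_gb
    if total_gb <= 0:
        raise ValueError("need some traffic to average over")
    # Time spent on each region adds up, because the bus cannot serve both at once.
    seconds = fast_gb / FAST_GBS + slow_gb / SLOW_GBS
    return total_gb / seconds


for fast_gb, slow_gb in [(1.0, 0.0), (0.9, 0.1), (0.5, 0.5), (0.0, 1.0)]:
    bw = effective_bandwidth_gbs(fast_gb, slow_gb)
    print(f"{fast_gb:.0%} fast / {slow_gb:.0%} slow -> {bw:.1f} GB/s")
```

In this model the result always lands between 336 and 560 GB/s and never reaches the 896 GB/s sum, since time spent on one region is time not spent on the other.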

How is the CPU accessing the slower GDDR6 memory reserved for the OS and front shell if they can't be accessed at the same time?

And why is 13.5 GB of RAM available for games, as Microsoft says?

That is what Cerny said. He also said he spent 2 years talking with devs to learn what they want for a new Playstation. Any reason to think he lied?

Because he wants to sell the console? Also, which devs did he talk to? First-party devs?

Or any multiplatform games creator? I think any dev would prefer LOCKED frequency instead of variable frequencies.

I have to listen to you. You must surely know better than the Lead PS Architect. Armchair analysts ftw! What more can you tell us?

Cerny even mentions that this audio hardware could be used to offload other tasks when not processing audio, if needed. Similar tricks have been used by first-party studios on past PS consoles, so not a shocker, but cool that it was considered from the inception instead of being a hack for the situation.

I’m confused on a couple of points:

If the system can sustain the “boost” speeds... then it’s not a “boost”, it’s just... the speed that the thing operates at. If he meant to explain that the system clocks down when under less load to drop power and heat, that’s a fundamental difference to “boosting” when under load. Which is it?

Cerny explains that the CPU and GPU power draw dictates the clock speeds, and that this is a deterministic process. But he also says you can trade CPU power for GPU power. Does this mean that the system can theoretically go higher than the caps listed, where I can trade CPU clock for more GPU clock, or does this actually mean that it can’t sustain both cap clock speeds simultaneously?

36CU at higher clocks is designed to widen the pipe, so to speak, rather than offer up more pipes. However, this limits parallelism in this aspect of the hardware. In modern software trends, threading and parallelism is basically the only way to scale up efficiently. Why did Sony go against the grain, so to speak? The amount of parallelism in modern graphics programming needs as many pipes as it can get.

Clock to heat ratio is not linear; it’s exponential. AMD have had issues with this in their hardware with their consumer cards, where they ran much hotter than their nVidia counterparts. While I don’t doubt Sony’s thermal solution will be sufficient, the noise of the PS4 Pro is not really acceptable for a consumer grade appliance. What thermal solution can Sony offer up that would be power efficient, silent, and sufficient, for those boost clock speeds?

The audio engine is compared to, basically, sticking the entire PS4 CPU on the board and dedicating it to audio. Do developers have more control over this piece of the hardware, or is this a “hands off” area that uses the power independently to produce Sony’s desired audio output? If developers can control it, can it only be used for audio? How does it interface with the rest of the machine?
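On the clock-versus-heat question above: dynamic power is commonly approximated as P ≈ C·V²·f. As a rough sketch, assuming voltage has to rise in proportion to frequency (a simplification; real voltage/frequency curves differ per chip), power grows roughly with the cube of the clock, which is steeply non-linear even if not literally exponential. The function name is made up for illustration, and no actual PS5 figures are used.

```python
def relative_dynamic_power(clock_ratio: float) -> float:
    """Power relative to baseline for a given clock ratio, using P ~ C * V^2 * f."""
    voltage_ratio = clock_ratio  # assumption: voltage scales linearly with clock
    return voltage_ratio ** 2 * clock_ratio


for ratio in (0.9, 1.0, 1.1, 1.2):
    print(f"clock x{ratio:.1f} -> power x{relative_dynamic_power(ratio):.3f}")
```

Under this assumption a 10% higher clock costs about a third more power, and a 10% lower clock saves over a quarter.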

It’s locked at 9.2; this variable BS is just for show and tell imo, at least in the beginning. Three years in we might see some use of variable clocks.

Just like Nintendo. Those are not our friends.

That's a blatant lie.

Why would you want a locked frequency when say a scene in a game doesn't need all the compute?