kevboard

Member

Reality

is that what the kids call crack nowadays?

Reality

i did say some textures are better and of course it's 60fps. no shit sherlock, i can take several pics like you of sonic racing worlds running via emulation and it looks the same 99% of the time, but keep trolling. again, this game can run 60fps max settings on steam deck, so it's a useless comparison when we know the ps4 pro gpu is vastly more powerful, but you of course don't care and wanna push a false narrative. you've been doing the same crap every generation. "thEy aRe ClOse"

i would say you are right but then we'd both be wrong

This

Switch 2 is a beast

The year RE9 comes out on switch 2 and runs at 60fps you mean? Oh, that doesn't fit your box?

What year is this.gif

I never signe

Some textures, some geometry, some details, some ambient occlusion, some shaders, some...

Please do

Let's all witness that poor ass attempt as you dig further towards the center of the earth.

Oh the fucking irony of you calling anyone pushing false narrative lol

Again, just a few days ago you said "I can't see the difference" for Monster Hunter Stories 3, where it's missing OBVIOUS fucking effects like ambient occlusion

You need to

Your thread posting privileges for that sort of shit should be revoked

I'm sorry, you're here since 2025? The fuck do you know about me you little troll?

Or you just outed yourself as an alt?

You know you can read forums without signing up, right? I think even in the wiiu days you were such a fanboy that despite almost every game running better on 360 you kept the false narrative that wiiu was way more powerful, and history repeats itself. again, sonic racing worlds runs high settings 60fps on SD, meaning pro should and can run this game much better, so move on to the next game. you've been wrong about everything, and pro has won in almost every single comparison when you said it never would.

The year that RE9 is going to sell more on SW2 than on Xbox Series…

What year is this.gif

I would suggest old Sweet tooth has just outed themselves lol..

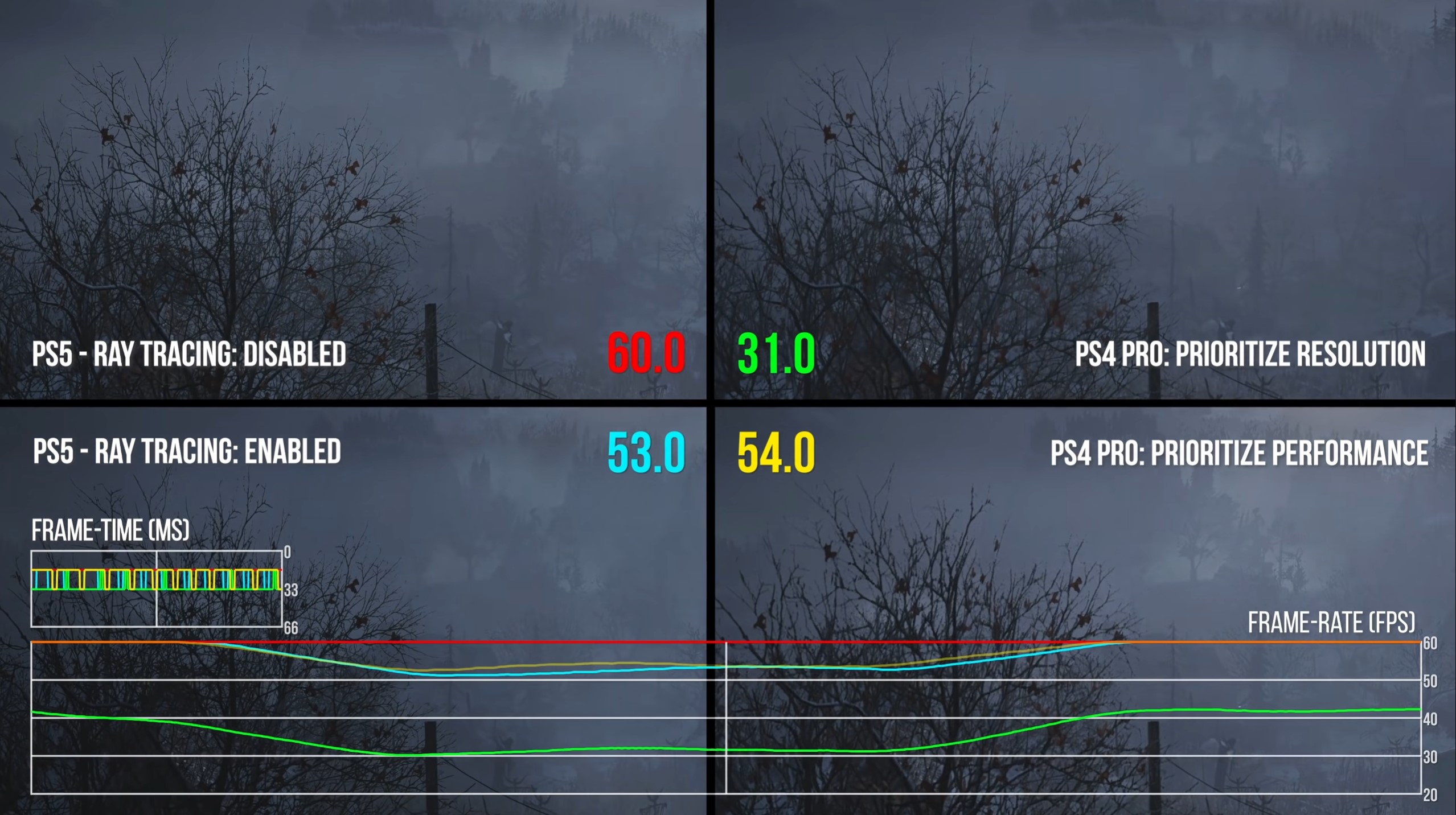

U blind?

Just like the "solid 60fps" RE8 PS4 pro?

For comparison sake - Resident Evil Village stats from VGTech:

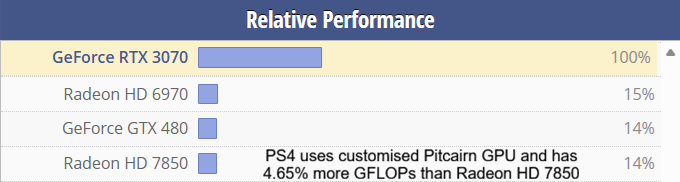

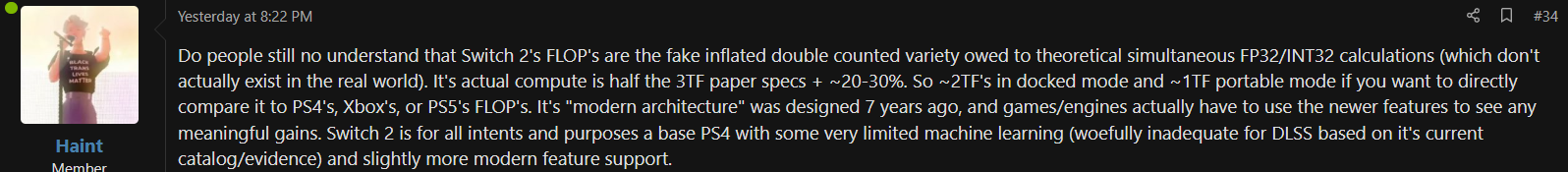

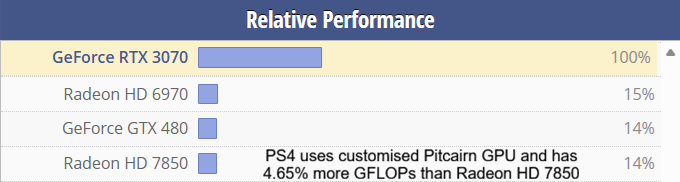

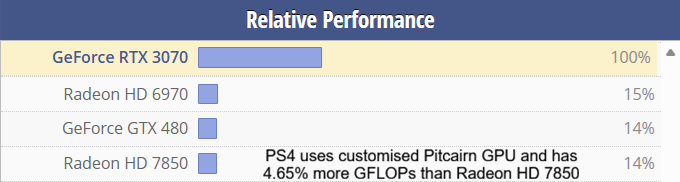

Its actual compute is half the 3TF paper spec plus ~20-30%. So ~2TF in docked mode and ~1TF in portable mode
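A rough sketch of the arithmetic behind that claim, assuming the poster's rule of thumb (halve the paper TFLOPs, then add back 20-30%). The function name `effective_tflops` is made up for illustration, and the rule itself is the thread's heuristic, not a measured figure. Only the docked number is worked out, since the portable paper spec is not stated here.

```python
def effective_tflops(paper_tflops: float, uplift: float) -> float:
    """Halve the paper figure, then add back `uplift` (0.25 means +25%).

    This encodes the forum rule of thumb only; it is an assumption,
    not a benchmark result.
    """
    return paper_tflops / 2 * (1 + uplift)


docked_paper = 3.0  # paper spec quoted in the post above

low = effective_tflops(docked_paper, 0.20)   # half + 20%
high = effective_tflops(docked_paper, 0.30)  # half + 30%

print(f"docked estimate: {low:.2f} to {high:.2f} TF")
```

Under that rule the docked estimate lands a little under the "~2TF" the post rounds to.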

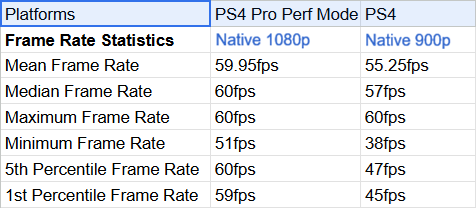

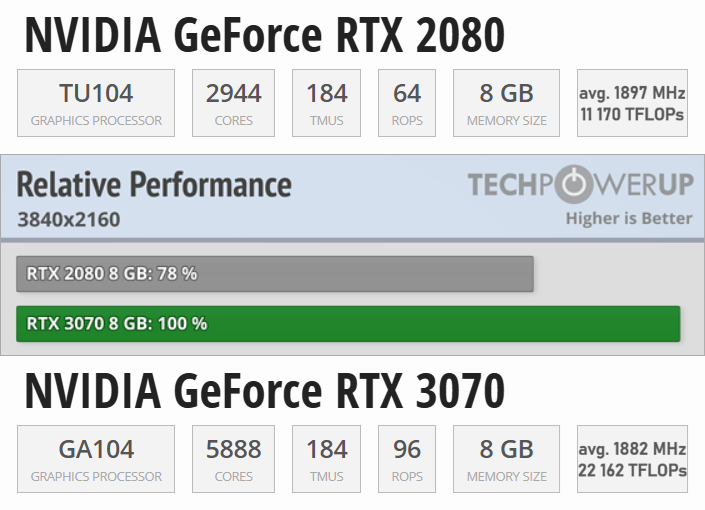

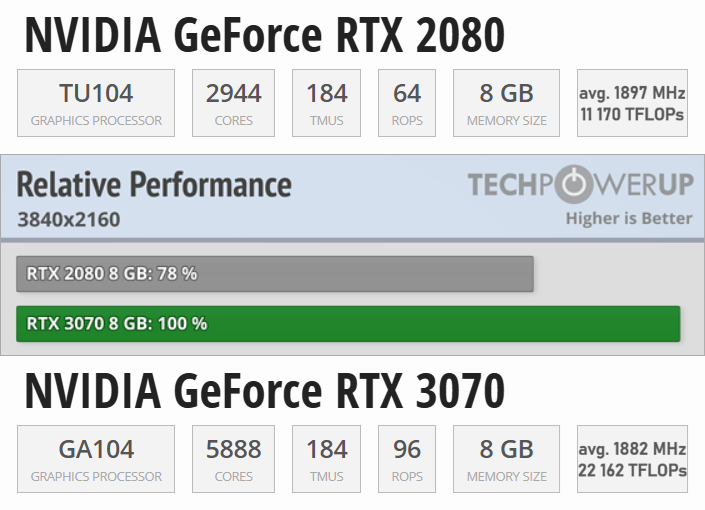

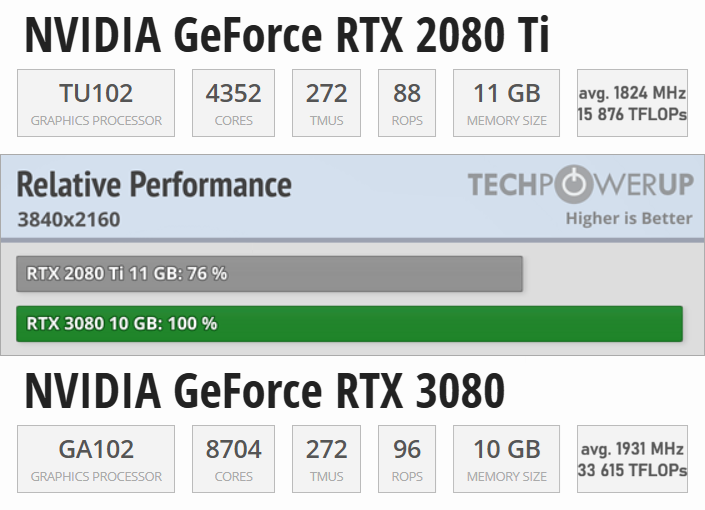

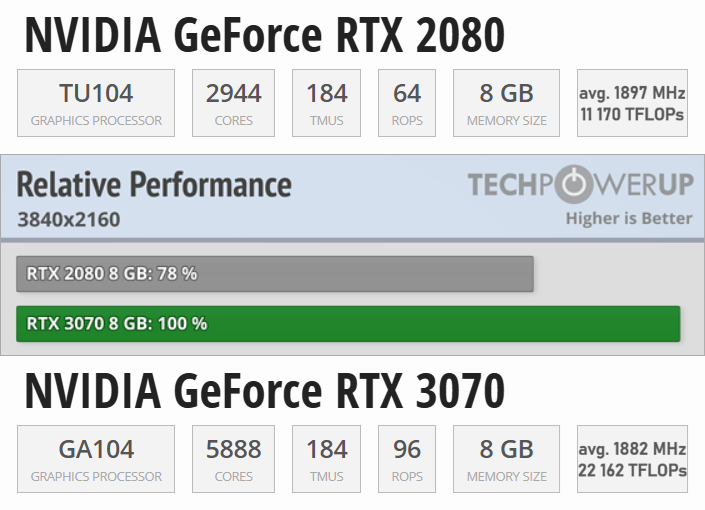

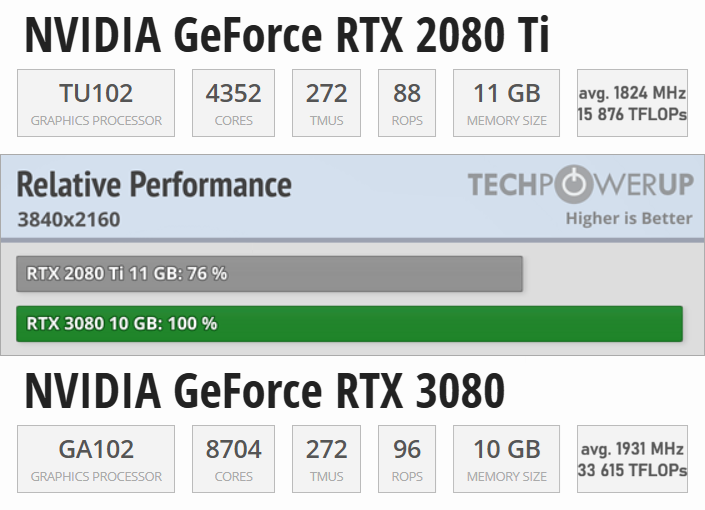

The half flop theory, holy shit, what is this, are we back 5 fucking years ago?

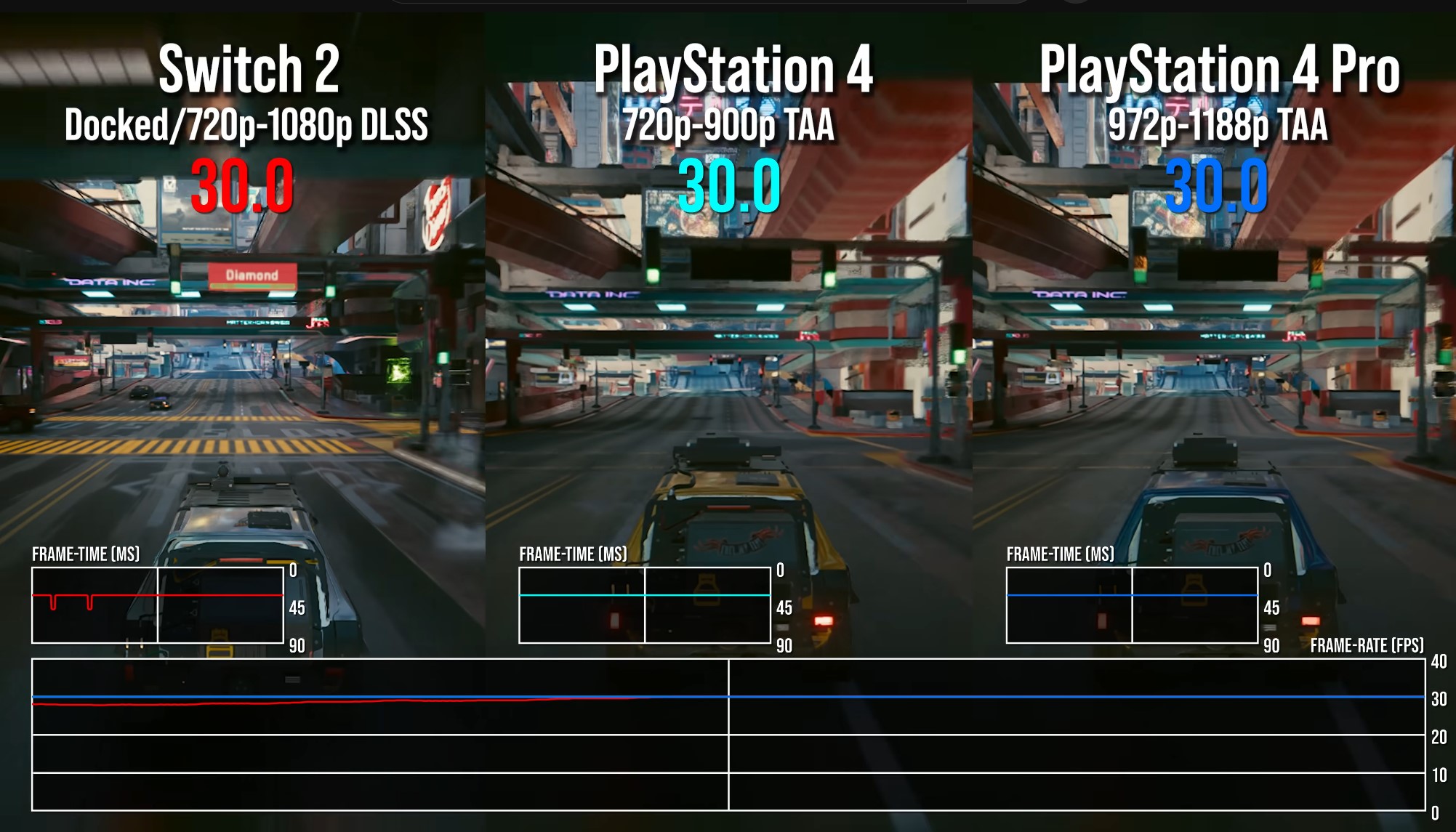

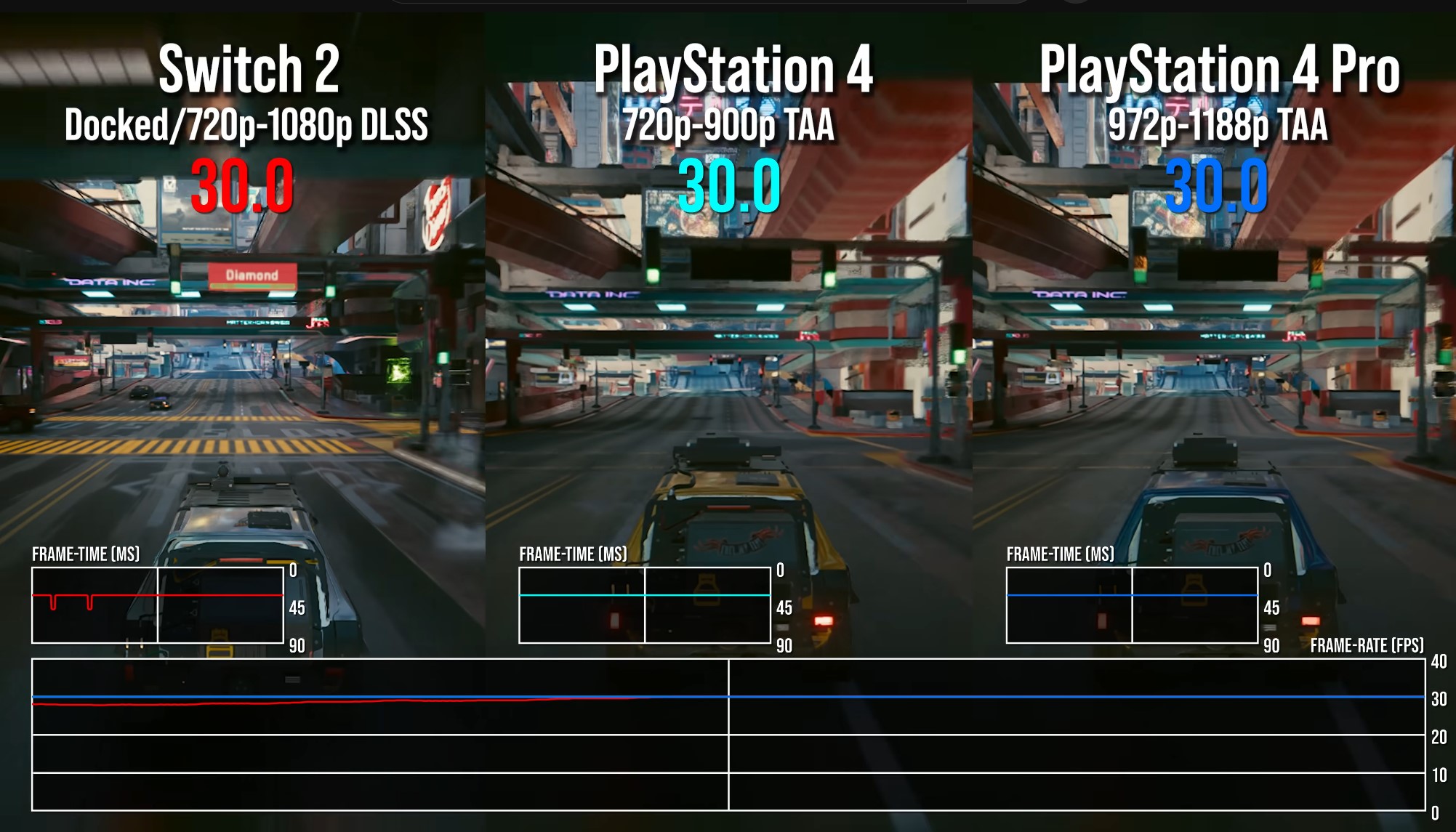

DLSS has fewer banding artifacts than CDPR's TAA, fine details like rain resolve much better, and it's nowhere near the grainy SSR shimmering artifact. Nearly every scene with details in parallel with the camera view will be shimmering even when not moving.

CDPR not a technical reference (LOL, the first open world AAA path tracing game, they're not good technically!)

And here comes the clown again with Elden Ring, which is the epitome of spaghetti code that defies all hardware, but still being evaluated before release

The announcement from last night, the move to change from old DLSS Convolutional Neural Networks (CNN) to transformer model is applicable for all RTX hardware

Even Switch 2 with rumoured old ass Ampere will have the best upscaling tech of console world

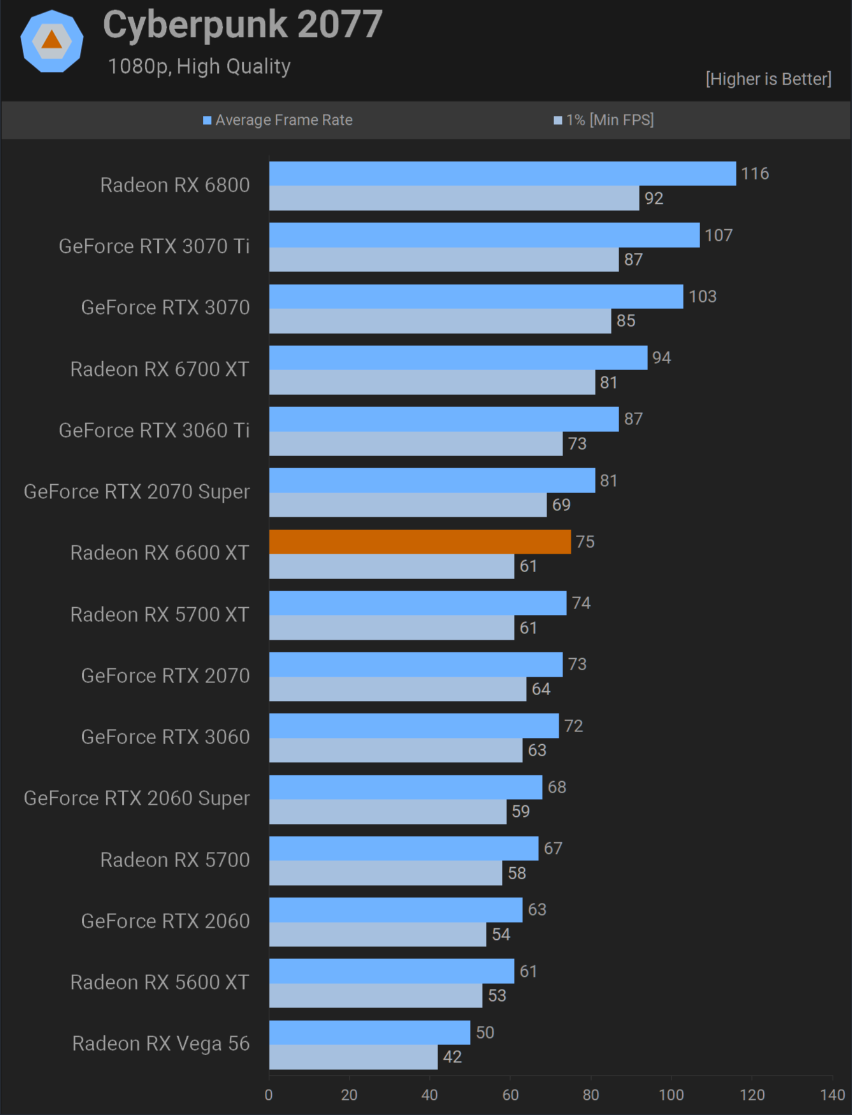

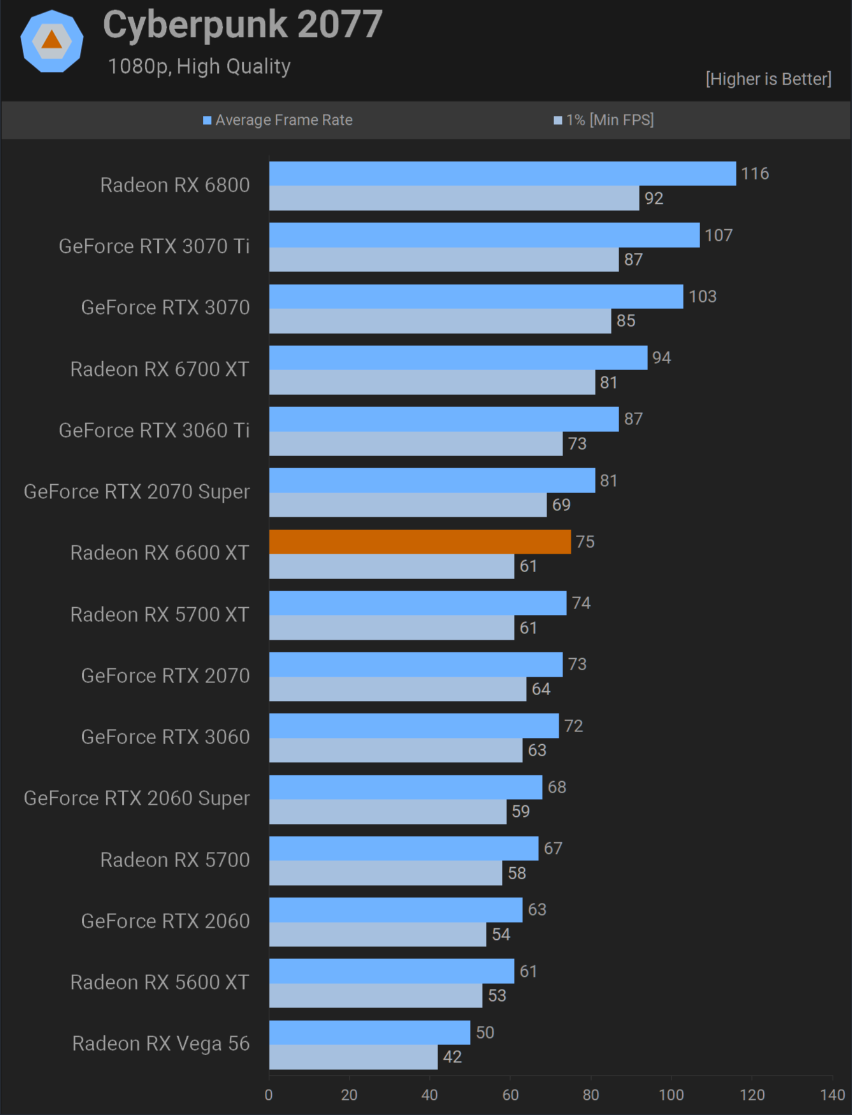

lol cyberpunk, the game running 10fps better and at higher resolution in the developer's own benchmarks. you should put a clown pic as your profile pic. meanwhile we have 10-15 games running at higher resolution on pro, but let's look at sonic worlds, a game that can run on a toaster at 60fps high settings, as proof of switch 2 superiority.

The 15% delta is literally in the very first benchmark you posted, which you conveniently and disingenuously chose to show 1440p results for on budget cards incapable of running high end games at that resolution (as evidenced by the sub-60 frame rates)

GPU Test System Update for 2025

2025 will be busy with GPU launches, all three GPU vendors are expected to release new cards in the coming weeks and months. To prepare for this, we've upgraded our GPU Test System, with a new Ryzen X3D CPU and more. The list of games has changed substantially, too.

www.techpowerup.com

Not a single average here you're anywhere near 10-15% above a 3060, and this is without RT! Most of the time 3060 leads

So by your calculation, 8.5TFlops ahead of a 10.6 TFlops. Make it make sense lol

With RT then 3060 leads with +20%

So for 276 mm^2 with "fake trouble TFlops", competent ML which has now transformer model, competent ray tracing blocks, it still manages to match or exceed the 236mm^2 dedicated mainly to raster. On a much denser TSMC node than Samsung's 8nm too.

So what year exactly did AMD catch up in functionality clock for clock, core for core with Ampere?

Hooolllyyyy shit the cope

Not even ayyMD subreddit would muster the courage to type the nonsense you just did

Developers ignoring them…. By calling hardware agnostic function calls in their DX12 or Vulkan APIs? Do you understand how any of this works?

Which AMD's solution clearly never worked for gaming

Unlike Nvidia's ampere and Ada

Taped out in 2021

Revisions can happen after

But yes Nintendo did sit on it

And is that 7 years?

Haint mathing

Star Wars outlaws uses all the advantages of a newer architecture minus mesh shaders, which it would actually benefit from performance wise unless you do not understand the purpose of it.

So games that do not use more VRAM, better CPU than base PS4, without RT, or without compute shader features like mesh shaders, don't use SSD, don't use I/O texture decompression/streaming, would be akin to base PS4

Fucking hell

Yes yes, you figured it out.

Not the first time either.

Gauge out your eyes because you clearly don't need them.

Playstation consoles are so undercutting content we might as well call them different generations

With worse image quality, missing features, missing effects, not rendering everything

Aren't you supposed to be running emulator of sonic cross worlds by now clown to prove your point? We're all waiting

While you're proposing that hitman 2, hogwart legacy and Elden ring are the benchmark? Do list those 10-15 games

No?

1080p, 2025, 25 games average rasterization

You had to go back to 2021 and pick a $680 card over a $550 one to find that sole benchmark that does not even come close to your claim of "on average its +15%"?

Here, another 2021 legacy

In the meantime, 2025 25 games average kills your whole argument.

U blind?

As we can all see - 50.4% of TFLOPs, but 78% of performance.

Again - 47.2% of TFLOPs, but 76% of performance.

So yeah, Haint is right. It is half and then you multiply it by 1.25 (1.33 at best).
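For anyone who wants to check that arithmetic, here is a minimal sketch. It assumes the two percentage pairs above describe a lower-TFLOPs card relative to a higher-TFLOPs one (the benchmark images aren't reproduced here, so which cards they are is not stated), and `relative_flop_efficiency` is a made-up helper name.

```python
def relative_flop_efficiency(tflops_share: float, perf_share: float) -> float:
    """Per-FLOP throughput of the higher-TFLOPs card relative to the lower one.

    tflops_share: lower card's TFLOPs as a fraction of the higher card's.
    perf_share:   lower card's performance as a fraction of the higher card's.
    """
    return tflops_share / perf_share


# The two pairs quoted in the posts above (TFLOPs share, performance share).
pairs = [(0.504, 0.78), (0.472, 0.76)]
effs = [relative_flop_efficiency(t, p) for t, p in pairs]

# The "half, then multiply by 1.25 (1.33 at best)" rule of thumb.
rule_low, rule_high = 0.5 * 1.25, 0.5 * 1.33

for (t, p), eff in zip(pairs, effs):
    print(f"{t:.1%} TFLOPs, {p:.0%} perf -> {eff:.3f} per-FLOP efficiency")
print(f"rule of thumb range: {rule_low:.3f} to {rule_high:.3f}")
```

Both pairs come out at roughly 0.62 to 0.65, which is in the neighborhood of the 0.625 to 0.665 range the rule of thumb gives.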

One look at the TMUs between the generations tells you everything you need to know.

Just take a look at a few different Geforce generations and maybe (just maybe) you'll understand if Nvidia follows your flawed logic or if they actually follow real logic.

If anyone really feels the need to compare FLOPs between architectures, you can do so between Maxwell, Pascal, Turing, GCN 4, RDNA1 and RDNA 2, but THAT'S IT.

And it's not like Pascal and Turing or GCN 4 and RDNA 1 have the same 'FLOPs efficiency' but they're roughly comparable.

The FLOPs of Ampere, Ada Lovelace, Blackwell, RDNA 3 and RDNA 4 are all heavily inflated. Actually, RDNA 3 is in a league of its own when it comes to FLOPflation...

And to quote your words from another thread: 'As if GCN 1.1 Tahiti generation would be even comparable to Ampere architecture'

You're right for once.

GCN 1 FLOPs aren't in any way comparable to Ampere's FLOPs.

In gaming, GCN 1 is much more performant per FLOP than Ampere is.

And before you point to mesh shaders or some other barely used tech - how many games use hardware RT or mesh shaders on Switch 2? It's getting close to 200 games released on S2. Is it even 5%?

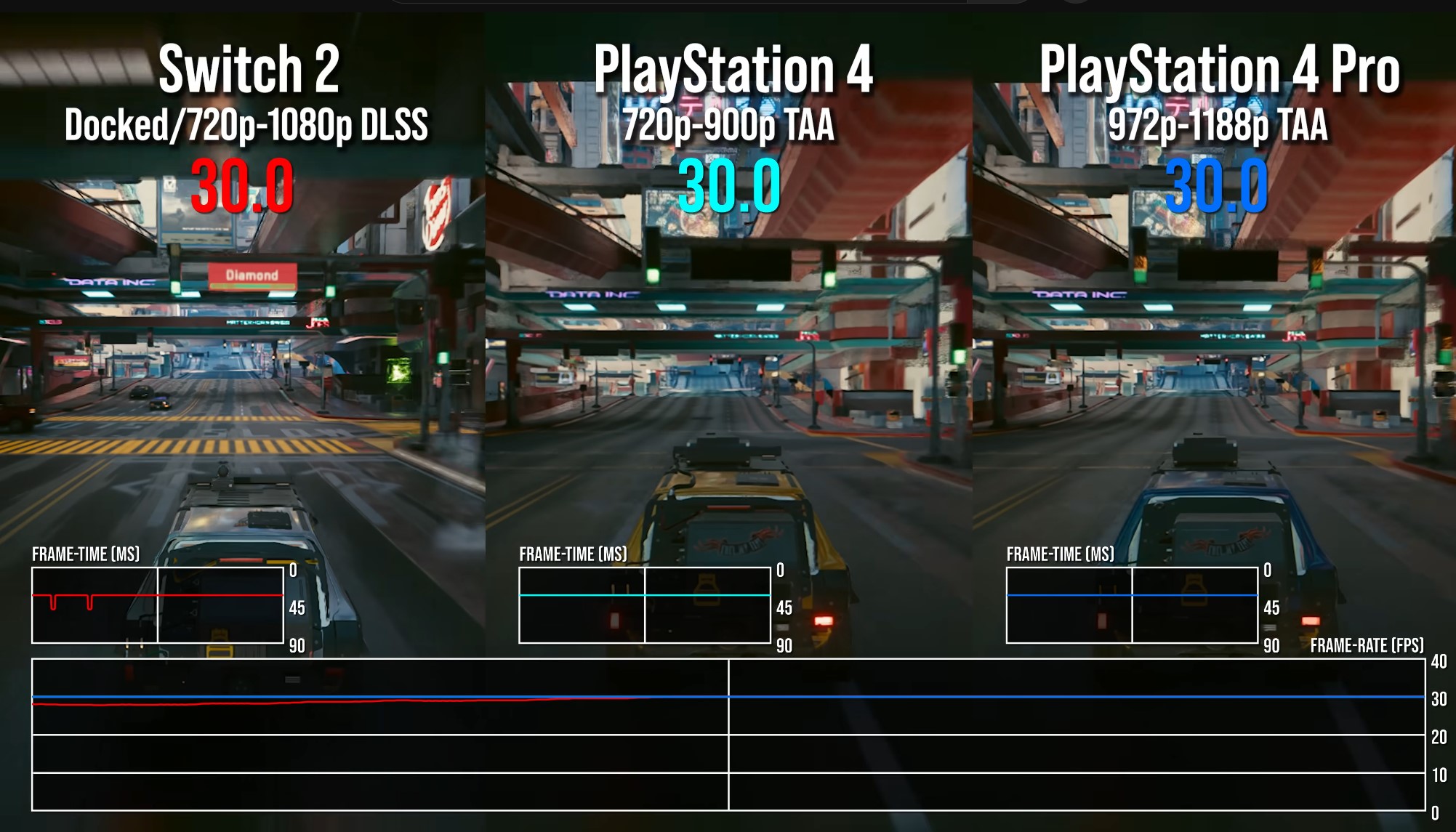

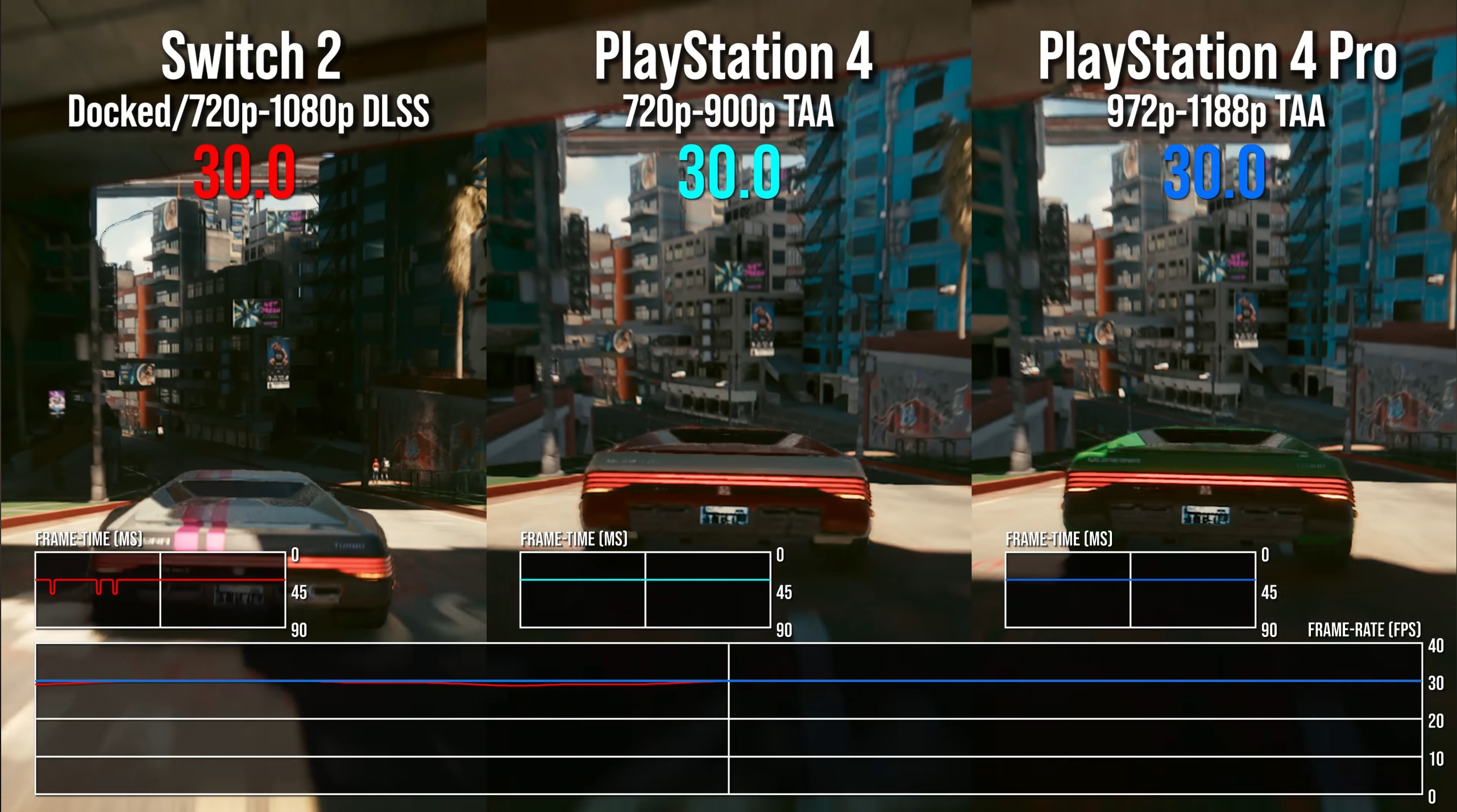

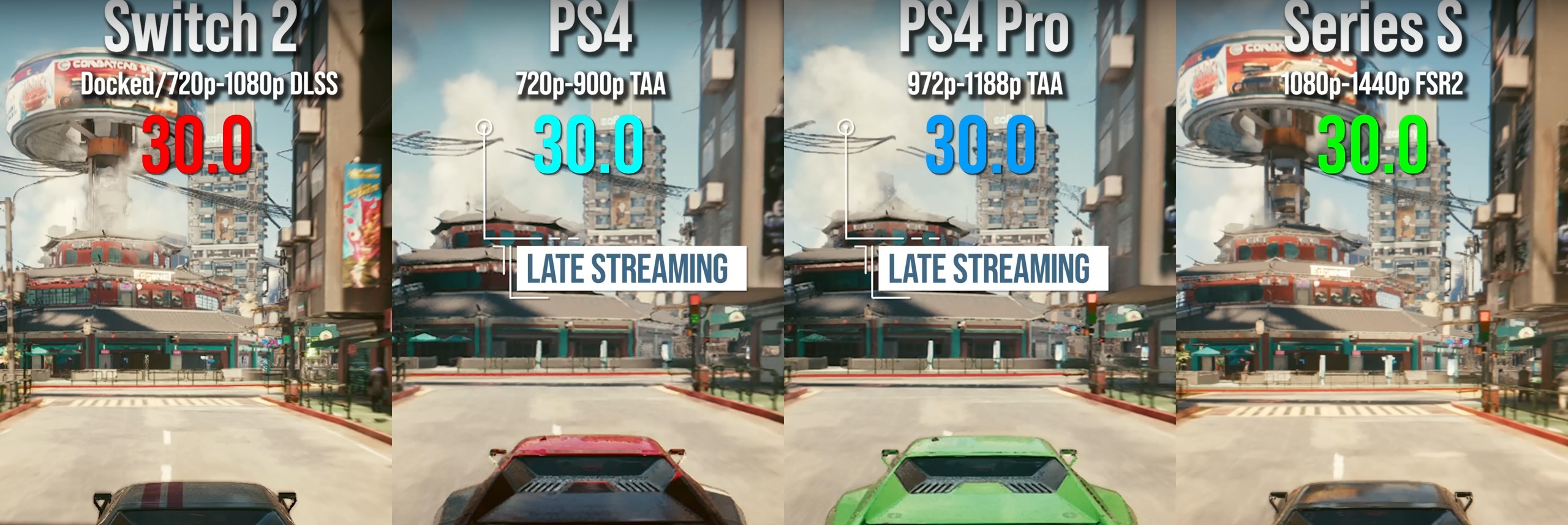

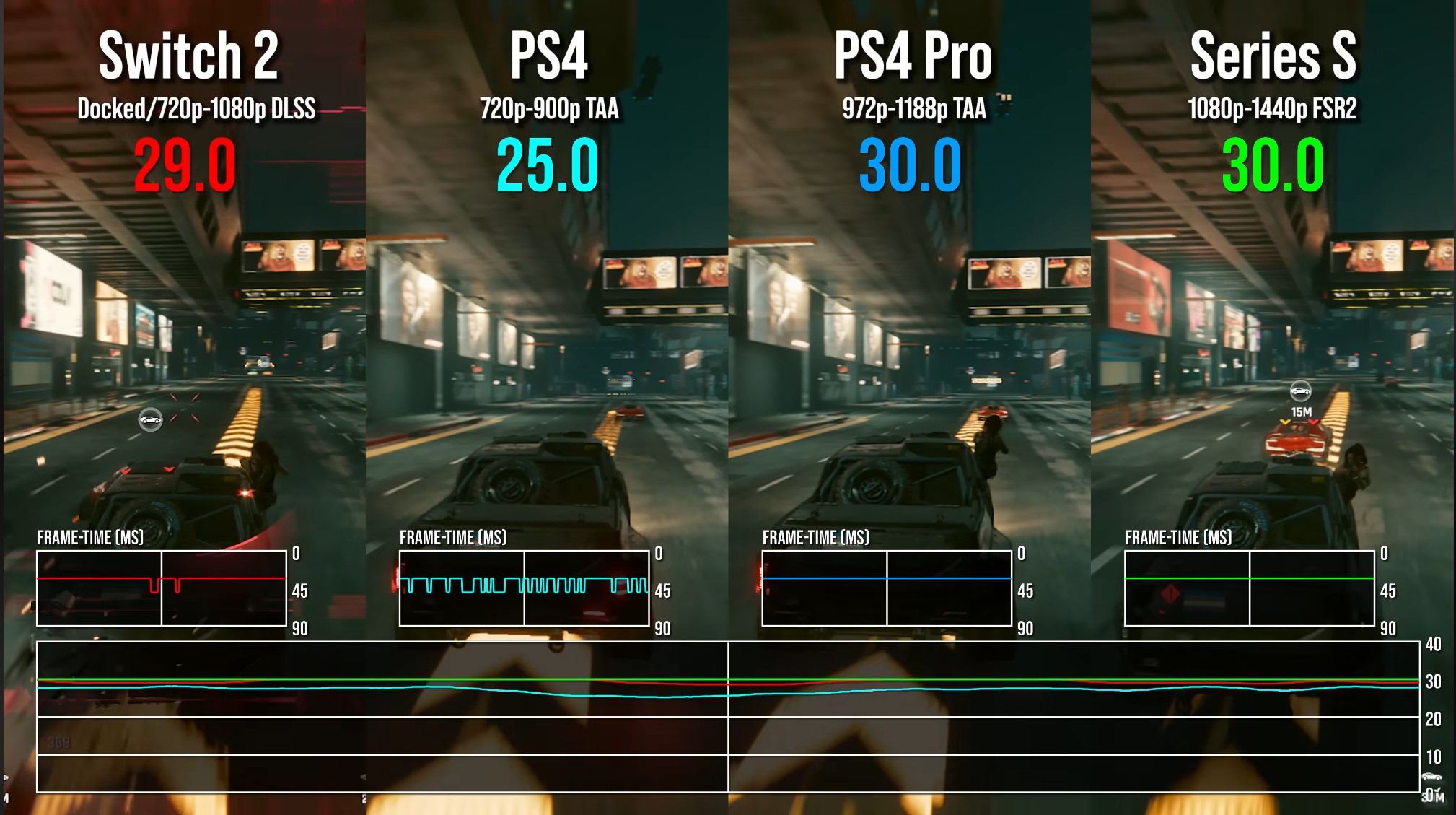

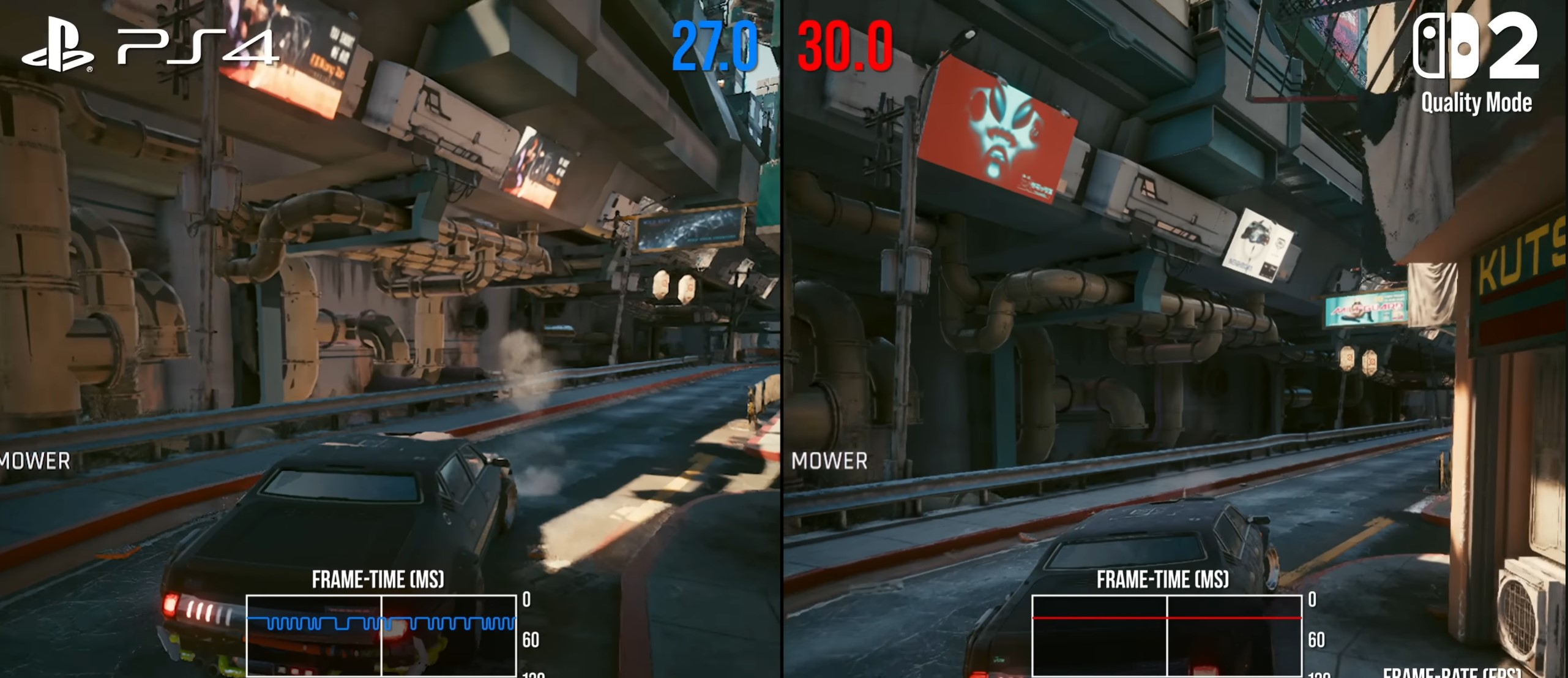

Switch 2 drops frames during traversal like a madman. Unlike PS4 Pro.

And I told you last month that I'm done looking at Cyberpunk.

What path tracing are you speaking of?

The one on Switch 2?

The one on Xbox Series X?

Or maybe the one on PS5 Pro?

If it weren't for Nvidia's fondness for the neo-noir artstyle with all its colorful neon lights, skyscrapers, and steam, Cyberpunk wouldn't have ever gotten all the attention from Nvidia.

Last time I personally cared about Cyberpunk 2077 was back in the fall of 2020 - when I was selling my CDR stock a few weeks before the release of the game.

Because it was obvious that they were hiding the base 8th gen consoles versions for absolutely obvious reason - abysmal performance.

The difference in framerates during car traversal in CP2077 between the PS4 Pro (high 20s) and the Switch 2 (low 20s) is very similar to CD Projekt Red's intentions in the summer and fall of 2020 - extremely obvious

So, if Elden Ring defies all hardware, please tell me - why is the Xbox One S version more or less what one would expect it to be when compared to the PS4 version?

And why is the PS4 Pro version what you would expect it to be when compared to the PS4 one?

And also - do you think FromSoftware put more time into optimizing the Xbox version than it put in the already delayed once (it was supposed to release in 2025) Elden Ring Tarnished Edition on Switch 2?

And since you're so knowledgeable and so moderate in your claims - could you please explain what's going on with DLSS 4 SR on Switch 2?

It's not like you were fantasizing and daydreaming about the capabilities of your beloved plastic box, right?

REALITY: 'On the Switch 2, moving objects just kind of look lower res and flickery on their edges as soon as any sight of movement kind of starts. This applies to any and all moving objects in all scenes in the Switch 2 version.' (DF, Oct 3rd, 2025, 16m0s)

Poor Buggy was fantasizing about the DLSS Transformer model, but instead he got something worse than the DLSS 3.x SR CNN model in the majority of games that actually opt to use Tensor cores for upscaling.

And as for all your wild, outlandish claims - it's not like AI upscaling is even something that is universally used on the Switch 2. Less than half of the S2 games use any kind of DLSS, and the ones that actually use the full CNN model can be counted on the fingers of one hand.

And though it was unintentional on your part, thank you for proving my point on AMD's cards being significantly handicapped by terrible drivers and developer indifference. Did you not stop to think why you are finding such disparities in multi-game averages, particularly over time?

i was talking about sonic racing worlds the switch 1 version at 1440p would look close to pro.

switch 1 version with resolution bump via emulation.

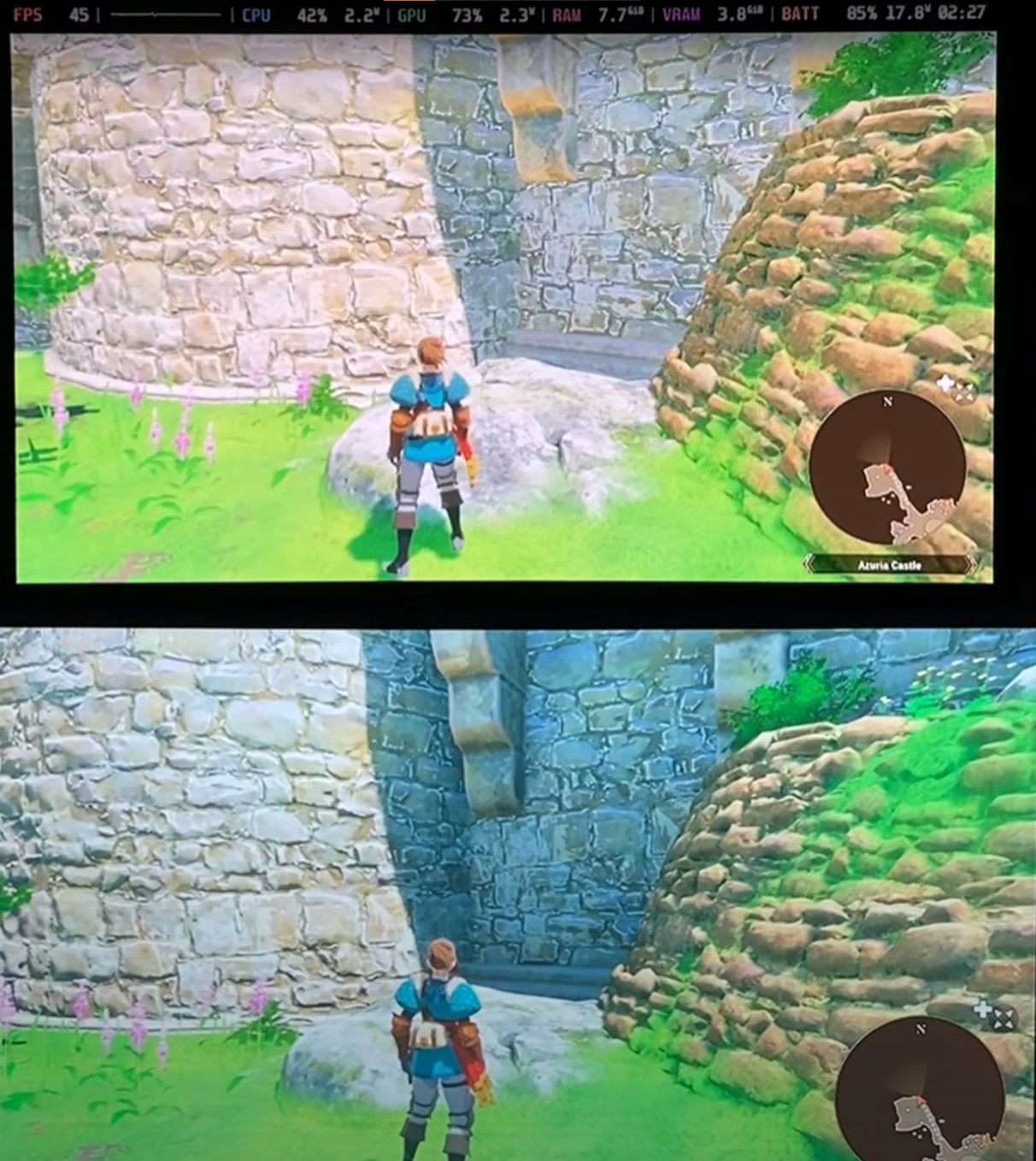

looks almost the same to my eyes. looks like a game on the same generation of hardware.

Switch 1 emulator at 4k vs Switch 2 version vs. PS4 Pro version

Go to the eyes doctor ASAP and

meanwhile you're in threads saying it's not a big difference, 600p/40-60fps upscaled vs 1440p/60fps. bruh you guys will never change. like the pro and switch version look 10% better at best, hardly anything that demanding going on there that shows the switch 2 or ps4 pro is 10x more powerful. go to sleep. you can't even notice the difference between 60fps locked and 40-60fps and want to tell me to get my eyes checked.
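To put the 600p vs 1440p gap in numbers, a quick sketch. It assumes both figures are internal render heights at a 16:9 aspect ratio, which the post doesn't actually state; `pixels_16_9` is a made-up helper name.

```python
def pixels_16_9(height: int) -> float:
    """Pixel count for a 16:9 frame of the given height (assumed aspect ratio)."""
    width = height * 16 / 9
    return width * height


low_res = pixels_16_9(600)    # "600p" internal resolution, before upscaling
high_res = pixels_16_9(1440)  # native 1440p

ratio = high_res / low_res
print(f"1440p renders about {ratio:.2f}x the pixels of 600p")
```

This only counts rendered pixels per frame; it says nothing about how the upscaled image compares visually.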

That's what Oliver stated, and you even admitted he probably made a mistake. so yea it does look the same, i see a little more grass and slightly better textures. no reason ps4 pro should not run this at 1440p/60fps when it's 11x more powerful than switch 1, and the game is not even cpu limited. SD runs this at max settings at 800p at 60fps, and no way in hell is SD on par with the ps4 pro gpu, which is what this game is suggesting. really a pointless comparison, but i understand it's the only win switch 2 has.

You went from

"The Switch 2 version doesn't feature the same graphical features as the PS4 Pro and XSS; that's why it can run at 60 fps."

to

"Oliver from DF stated the Switch 2 version is just an upressed Switch 1 version without any gen 8 (PS4/X1/PS4 Pro/X1X) graphical features."

to

"Actually, Switch 1 version at 4k resolution looks exactly like PS4 Pro."

to

ps4 pro is superior end of discussion. you can literally look at all these cross gen games only one goes over 1080p anyone with common sense would get it. dlss is mostly dlss lite which doesn't even work in motion.

switch 1 is not anywhere near them. sonic cross worlds though, on switch 2 and ps4 pro, other than some textures, 60fps, and resolution, does not look that much better. switch 2 gets 60fps, but ps4 pro is just a terrible port.

i said sonic worlds switch 1 version with a resolution bump to 1440 and 60fps would look close to the ps4 pro and switch 2 versions. i mean that switch 1 emulation pic at 60fps would be vastly better than playing at 30fps and look close enough for me. i look at those comparison pics and it's a very small downgrade.

But it looks so close, according to you...

Didn't he have a big meltdown last year for doing the same thing.... defending Nintendo?

Thank god you are here to moderate all of this........... Somebody should give you a job on the staff.

This thread was always a swipe at the Switch 2, Resident Evil just got tagged on for the ride.

Somewhere along the lines I forgot this thread was actually about Resident Evil.