Sony Principal Graphics Engineer confirms PS5 has custom architecture that is based on RDNA2. (Update: Read OP)

- Thread starter Dnice1

- Start date

CatLady

Selfishly plays on Xbox Purr-ies X

Simply embarrassing. This is what happens when you don’t read the updated op.....

I read the update and while it has information from the Sony developer clarifying what he meant, I can't find anything in the update that states the information contained in the original OP was faked, photoshopped or a product of the shadowy Xbox Discord.

What's embarrassing is the behavior of a certain group of fans that when confronted with information they don't like they attack, accuse, threaten, report and invent conspiracy theories.

We all knew it was custom based RDNA. Basically it's RDNA5 because PS5.

This.

So, Xbox Series X is then RDNA X -> RDNA 10 ... wow, riddle solved

MasterCornholio

Member

I read the update and while it has information from the Sony developer clarifying what he meant, I can't find anything in the update that states the information contained in the original OP was faked, photoshopped or a product of the shadowy Xbox Discord.

What's embarrassing is the behavior of a certain group of fans that when confronted with information they don't like they attack, accuse, threaten, report and invent conspiracy theories.

The mods questioned the information as well because it was presented badly by the OP and the DM wasn't verified before with them. It's only natural that others would question that information.

With that said not everyone who questioned it behaved appropriately though.

It's achievable. With ~300W power consumption (5700xt). I don't think that they put something that hot into PS5, but how much were they able to cut it down? To have some normal thermals they would need to cut that power consumption by 1/4 at least (Is that even possible?).

This is also achievable with lower power. 300W is only needed if the chip is stressed. As far as I understand, the PS5 chip will clock up if it is not fully stressed (else it would really suck the power out of the wall). Also they seem to have cut out a few things. This makes the chip less complex and it can take more power. E.g. MS added (or let AMD add) support for Int8, Int4, ... instructions. This also makes the chip more complex, which will result in worse clock rates (than without such things).

What really struck me is the sheer size of the PS5 case. This could mean they have a big power supply and a big cooling solution. The other parts aren't that big. I assume the PS5 also contains a power supply in the range of 300W. Else they wouldn't have such a big case.

Ass of Can Whooping

Member

But but but it has a fast SSD

These just feel like bot responses now

Nikana

Go Go Neo Rangers!

But also like @Bill O'Rights said the way they posted the information was pretty bad. They should have had it verified and with proper direct links before posting it. It's normal that some people were going to question.

It's like the leak that Nikana gave us. Nobody questioned it because he had it vetted. If the information had direct links and the DM was verified by the mods nobody would question it.

To be fair to me, quite a few people still questioned it but didn't do so with the same veracity. They didn't question that the information came from a source that was vetted, but they did question whether it was true or not since there is an NDA, and others tried to convince me that I made a mistake posting the information. Some of the very same people who cried fake in this incident.

geordiemp

Member

I read the update and while it has information from the Sony developer clarifying what he meant, I can't find anything in the update that states the information contained in the original OP was faked, photoshopped or a product of the shadowy Xbox Discord.

What's embarrassing is the behavior of a certain group of fans that when confronted with information they don't like they attack, accuse, threaten, report and invent conspiracy theories.

I questioned the ethics of chasing a Sony engineer on social media, getting a personal DM saying he needs to check if it's OK to talk about it due to NDA, and then posting it on GAF.

That Sony engineer has probably been forced to give a statement to a media outlet by HR and got into trouble because of delusional warriors who can't just keep it to the forum.

Not classy. Some people need a reality check before they mess with others' livelihoods on social media.

Last edited:

MasterCornholio

Member

To be fair to me, quite a few people still questioned it but didn't do so with the same veracity. They didn't question that the information came from a source that was vetted, but they did question whether it was true or not since there is an NDA, and others tried to convince me that I made a mistake posting the information. Some of the very same people who cried fake in this incident.

Well whenever information that is posted breaches an NDA I think it's normal for some people to be concerned. I definitely was a bit rough on O'Dium because I thought he was getting someone in trouble by posting that DM.

I love getting inside information but not at the cost of somebody's job.

That Sony engineer has probably been forced to give a statement to a media outlet by HR and got into trouble because of delusional warriors who can't just keep it to the forum.

Hopefully that isn't true because I would hate for anyone to get in trouble over this.

Last edited:

Arkam

Member

Hopefully that isn't true because I would hate for anyone to get in trouble over this.

100% this guy will be having a meeting with their manager TODAY over this. Sony like most software companies have a strict "no talking publicly" about products unless PR trained and authorized. So while the dude did not intend to violate this, he was dumb and we saw the result. I am sure his team is looking at him like "WOW, maybe you are not as smart as I thought you were"

Investor9872

Member

So what RDNA 2 feature is missing?

No one knows since it hasn't been confirmed. But it's probably VRS.

Nikana

Go Go Neo Rangers!

Well whenever information that is posted breaches an NDA I think it's normal for some people to be concerned. I definitely was a bit rough on O'Dium because I thought he was getting someone in trouble by posting that DM.

I love getting inside information but not at the cost of somebody's job.

I concur, but some people will always find a way to doubt information here that doesn't fit a narrative that they have in their head. And that should not ever translate to the discrediting of someone in the fashion that it did. It was a huge step over the line, especially when it turned out the information was not faked.

Many people falsely claimed that my post alluded to Gearbox receiving dev kits late, which is preposterous and derailed the thread for a few posters. I never disclosed where the developer was from and yet people ran with it. And when questioned, they continued to double down on certain things that made zero sense, but no one decided to try and discredit the poster. The double standard there is absolutely preposterous.

Coulomb_Barrier

Member

MS customized the XSX HW to support 4bit, 8bit and 16bit integer rapid packed math just for Machine learning and the XSX also supports DXML. Likely not as good as Tensor cores but an in between of sorts.

Also Cerny has already stated that PS5 supports Primitive Shaders.

Basically the predecessor to Mesh shaders.

Please educate yourself on the comparative performance of Nvidia's Tensor Cores vs MS's solution, as you seem to be overly flattering with your claims about MS performance in this area:

Dictator said: Yeah, about half of the ML performance of a 2060. So DLSS on an XSX would cost around 5.5 to 6 milliseconds of compute time if it scaled like it does across Turing to RDNA 2.

From Ree.

Also, Series X doesn't have specific hardware to accelerate it; basically, like DLSS 1.9, the ML calculations are done using the shader cores. RTX cards dedicate a significant portion of their GPU die to the tensor cores.
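To put the quoted 5.5 to 6 millisecond figure in perspective, here is a rough sketch of how much of a frame that would eat. The 30 and 60 fps targets are my own assumption for illustration; the thread only gives the millisecond cost.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def share_of_frame(cost_ms: float, fps: float) -> float:
    """Fraction of the frame budget consumed by a fixed per-frame cost."""
    return cost_ms / frame_budget_ms(fps)


if __name__ == "__main__":
    # 5.5 and 6.0 ms are the figures quoted above; the fps targets are assumed.
    for fps in (30, 60):
        for cost_ms in (5.5, 6.0):
            share = share_of_frame(cost_ms, fps)
            print(f"{cost_ms} ms at {fps} fps: {share:.0%} of a "
                  f"{frame_budget_ms(fps):.1f} ms frame")
```

At 60 fps the budget is about 16.7 ms, so a 6 ms upscaling pass would take roughly a third of the frame, which is why the cost matters more at high frame rates.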

Last edited:

pyrocro

Member

Big enough to say he was wrong and apologize, I tip my hat to you, good sir, it was wise to take the precautions and I hope as I'm sure most do that it all ends well.

cheers.

This is so bad, I don't know where to start...

Yes, it's obvious you don't know where to start.

"DLSS 2.0 runs on the (tensor/ML cores)" - maybe it does, maybe not.

There is no maybe.

NVIDIA DLSS 2.0: A Big Leap In AI Rendering

Through the power of AI and GeForce RTX Tensor Cores, NVIDIA DLSS 2.0 enables a new level of performance and visuals for your games - available now in MechWarrior 5: Mercenaries and coming this week to Control.

www.nvidia.com

"Also DLSS 1.0 runs on the shaders of the GPUs and performs noticeably worse." - it "performing worse" (as in looking like shit, compared to plain FidelityFX, which, by the way, is crossplatform) has nothing to do with exactly how neural network inference was executed.

Why are you bringing up FidelityFX? This is not related to your original post or my reply (odd mention).

It seems that you are aware that most training against large datasets is done in the cloud, but you're unaware how the inference part is executed on hardware.

"hardware accelerate ML good, "software-based ML" bad." - what the heck is "software based ML" on GPUs, clueless one?

Well, in this case (DLSS 2.0), tensor cores on RTX cards help out by accelerating the process.

Several others have explained this to you already. Please take note.

Make of that what you will.

llien

Member

Make of that what you will.

Welp, make of NVidias 2015 claim that GPUs excel at neural network inference what you will.

MasterCornholio

Member

100% this guy will be having a meeting with their manager TODAY over this. Sony like most software companies have a strict "no talking publicly" about products unless PR trained and authorized. So while the dude did not intend to violate this, he was dumb and we saw the result. I am sure his team is looking at him like "WOW, maybe you are not as smart as I thought you were"

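I think what bothers me the most about this was that it was shared without his consent. Unlike Nikana's leak, where he got permission to share that information. The developer in Nikana's case agreed to take a risk by releasing that information. I'm pretty sure Rosario never wanted that information to be shared, which is why he DMd O'Dium instead of sharing it publicly via a tweet.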

Thugnificient

Banned

Welp, make of NVidias 2015 claim that GPUs excel at neural network inference what you will.

Not even a counter-argument and is absolutely meaningless within the context of what we are discussing.

yurinka

Member

We already knew it's a custom RDNA2 chip, they mentioned officially multiple times and also by the console hardware architect and the CEO of the company who makes the chips.

Which means that is going to be mostly a RDNA2 chip like the ones in PC, but adding extra stuff they want (some of which who knows if may end on RDNA3 if they see fit) and removing other stuff they won't need if desired to make it cheaper.

Same goes with the Series X chip.

We'll need to see how both really are and how they optimize their stuff (in case it isn't something the console or engine already does for them), but the gap is very likely to be smaller because it seems more optimized to achieve a real performance closer to its theoretical peak (tflops). In fact we may see games or GPU tasks where PS5 performs better:

-PS5 GPU has a faster frequency, which means all the GPU tasks (and there are many important related ones) that don't need more CUs than the ones that PS5 has will perform faster on PS5.

-Has a faster, more optimized I/O system to stream data to the memory and also dedicated hardware for certain common memory management work that elsewhere is done by code on the CPU, so that saved CPU work will be free to do something else.

-The variable frequency isn't something negative. Unlike the variable systems found on PC or mobile, it's something controlled by code, so it's up to the devs. It also allows things like using unused CPU horsepower as extra GPU horsepower.

-Some things like the sound coprocessor seem to be beasts. So we may see (not in launch games) the unused sound coprocessor running non-sound secondary CPU tasks to free the CPU, which at the same time could be used to support GPU stuff. We have seen multiple consoles use chips for tasks they weren't originally designed for to optimize performance, so it wouldn't be rare, especially considering that now CPU+GPU are specially designed to share stuff.

So we could end up with a situation where we have one console with 10.2 RDNA1.5 tflop (variable) vs one console with 12.2 RDNA2.0 tflop (sustained)?

Perhaps the gap is bigger than the 17% people talk about.

Or it's the same for Xbox Series X - it also uses RDNA1.5?

Both PS5 and Series X use custom RDNA2. They both are basically RDNA2 but will have some extra things and some removed things.
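For reference, the tflop figures being thrown around come from a simple peak formula, and the "gap" depends on which console you take as the base. A rough sketch, with assumptions spelled out: 64 lanes per CU and 2 FLOPs per lane per clock are the usual RDNA-style peak assumptions, and the 36 CU / 2.23 GHz and 1.825 GHz figures are the publicly quoted specs, not numbers from this thread (the thread only mentions 52 CUs and roughly 1.8 GHz for the Series X).

```python
# Peak FP32 throughput sketch. Both constants are assumptions about an
# RDNA-style GPU, not figures quoted in this thread.
LANES_PER_CU = 64
FLOPS_PER_LANE_PER_CLOCK = 2  # one fused multiply-add counts as two operations


def peak_tflops(compute_units: int, clock_ghz: float) -> float:
    """Theoretical peak in TFLOPS: CUs x lanes x FLOPs per clock x GHz / 1000."""
    return compute_units * LANES_PER_CU * FLOPS_PER_LANE_PER_CLOCK * clock_ghz / 1000.0


def relative_gap(bigger: float, smaller: float, base: float) -> float:
    """Difference between two peaks, expressed as a fraction of `base`."""
    return (bigger - smaller) / base


if __name__ == "__main__":
    xsx = peak_tflops(52, 1.825)  # assumed published Series X clock
    ps5 = peak_tflops(36, 2.23)   # assumed published PS5 CU count and max clock
    print(f"XSX peak: {xsx:.2f} TFLOPS, PS5 peak: {ps5:.2f} TFLOPS")
    print(f"gap relative to PS5: {relative_gap(xsx, ps5, ps5):.1%}")
    print(f"gap relative to XSX: {relative_gap(xsx, ps5, xsx):.1%}")
```

These are theoretical peaks only; they say nothing about how close either console gets to its peak in a real game, and the PS5 figure is at its maximum (variable) clock.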

Last edited:

llien

Member

there is no maybe

NVIDIA DLSS 2.0: A Big Leap In AI Rendering

Through the power of AI and GeForce RTX Tensor Cores, NVIDIA DLSS 2.0 enables a new level of performance and visuals for your games - available now in MechWarrior 5: Mercenaries and coming this week to Control.

www.nvidia.com

There are all the maybes in the world.

NV's claim that it has to be tensor cores (which are nothing but basic 4x4 matrix multiply-add, something that GPUs, as NV itself figured in 2015, excel at) is as valuable as raising the price on Tesla cards now, because "mining".

They have no other way to justify not having it on pre-Tesla GPUs.

[I have severe reading comprehension problems]

Well, that is hardly surprising.

It seems that you are aware that most training against large datasets is done

No, not "most". ALL.

but you're unaware how the inference part is executed on hardware.

It likely doesn't mean what you think it means.

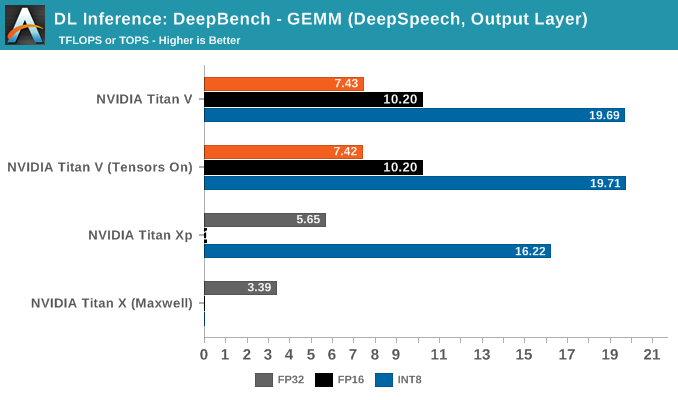

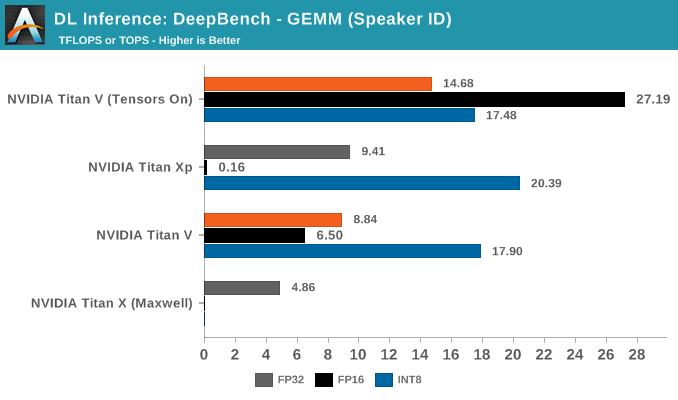

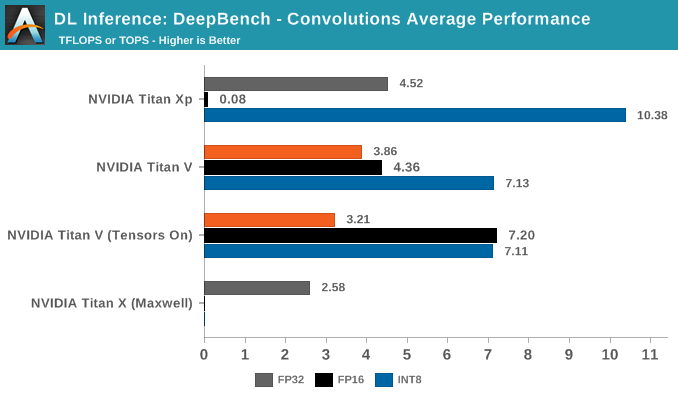

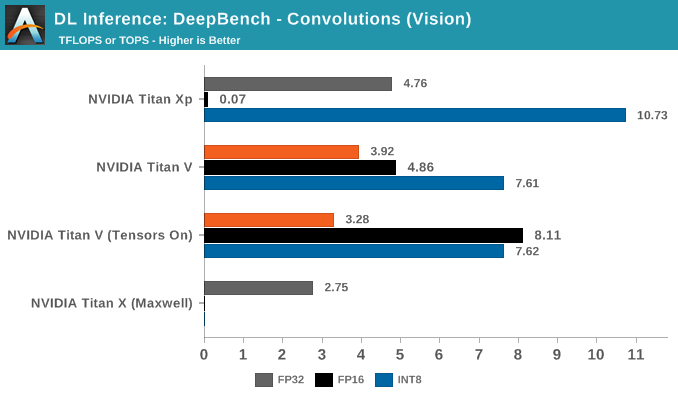

Actual ML benchmarks with tensor cores on vs off (so, on shaders) show that using tensor cores is mostly, although not always, faster, but even when faster, it's about 2-3.5 times, not tens of times.

There is no magic in having neural network inference run on non-tensor hardware (tensor cores sound cool, but are actually dumb, super straightforward packed math) on cards without tensor cores, certainly not for the faster cards, such as 1080Ti.

Last edited:

Nikana

Go Go Neo Rangers!

I think what bothers me the most about this was that it was shared without his consent. Unlike Nikana's leak, where he got permission to share that information. The developer in Nikana's case agreed to take a risk by releasing that information. I'm pretty sure Rosario never wanted that information to be shared, which is why he DMd O'Dium instead of sharing it publicly via a tweet.

Not sure if I buy this. I worked in the media sector of gaming for many years.

People don't just talk when asked about things they aren't sure if they can or cannot talk about. Even with the developer I talked to, it was laid out very clearly what I could and could not say. We talked about more than I posted, but it was very clear what I was and was not allowed to say.

I have known this person a long time and they know I would never just blurt out information, which is why they were willing to talk to me. The scenario in question with Odium would have to have a lot of coincidences for it to be a screw up. Unless Odium/Gavin was purposefully willing to burn that bridge for the sake of a NeoGAF post, there is no way I buy that the information was given with this common mindset that it would not be shared.

Last edited:

saintjules

Member

This thread be spawning fanbois from another dimension like

More like the dimension that is the ps5/xbox spec thread (currently locked)

pyrocro

Member

Welp, make of NVidias 2015 claim that GPUs excel at neural network inference what you will.

I don't think you understand that TENSOR cores are faster

no one is saying GPU not used for AI work, but the workload runs as shaders.

with the TENSOR cores, you get more native to AI/ML representation and execution.

think ASIC board like google coral.

Last edited:

Genx3

Member

Please educate yourself on the comparative performance of Nvidia's Tensor Cores vs MS's solution, as you seem to be overly flattering with your claims about MS performance in this area:

From Ree.

Also, Series X doesn't have specific hardware to accelerate it; basically, like DLSS 1.9, the ML calculations are done using the shader cores. RTX cards dedicate a significant portion of their GPU die to the tensor cores.

Nothing you stated is contrary to what I stated.

We agree that Tensor cores are the superior method of using HW accelerated ML.

Not sure why you're asking me to educate myself.

I stated its an in between fully custom HW block accelerated like tensor cores vs just using Shaders to do the calcs.

Yea its using the shaders but at a much reduced cost when you're using 4bit, 8bit and 16bit RPM.

That's why I stated an in between.

Last edited:

llien

Member

Not even a counter-argument and is absolutely meaningless within the context of what we are discussing.

Yeah, "maybe it runs, maybe not" is so hard to comprehend, I mean, it takes a genius to translate "NN inference runs great on GPUs" into "as it could run on normal SMs as well".

I don't think you understand that TENSOR cores are faster

I not only fucking understand that, I have literally REFERENCED exactly HOW MUCH FASTER they are IN CERTAIN TASKS in my FUCKING PREVIOUS POST.

Last edited:

Gaming Discussion

Video game news, industry analysis, sales figures, deals, impressions, reviews, and discussions of everything in the medium, covering all platforms, genres, and territories.

Coulomb_Barrier

Member

Nothing you stated is contrary to what I stated.

We agree that Tensor cores are the superior method of using HW accelerated ML.

Not sure why you're asking me to educate myself.

I stated its an in between fully custom HW block accelerated like tensor cores vs just using Shaders to do the calcs.

Yea its using the shaders but at a much reduced cost when you're using 4bit, 8bit and 16bit RPM.

That's why I stated an in between.

Well ok, but there's a big difference between dedicated hardware like Tensor Cores and using shaders to do the same thing. The entire point of Tensor Cores is they're not shaders; they're fixed function accelerators, as using shaders is very costly. So my issue is with saying Series X ML is a halfway house to what Nvidia is using on RTX. It isn't; it's totally different even if the aim is the same, and much less performant.

pyrocro

Member

something is wrong here you arguing things no one is putting forward.

it's like you're angry or something. chill the fuck out.

There is all maybes in the world.

NVs claim it has to be tensor cores (which is nothing but basic 4x4 matrix multiply-add, something that GPUs, as NV itself figured in 2015, excel at) are as valuable as raising price on TEsla cards now, because "mining".

They have no other way to justify not having it on pre-Tesla GPUs.

Like I said, the only thing in contention here is if DLSS2.0 runs on a special accelerated part of the GPU [tensor core] (it does).

DLSS1.x runs on the shaders and DLSS2.0 uses the tensor cores.

All the other things you're talking about no one is asserting; you should be answering those things with your inner voice. ok

Well, that is hardly surprising.

No, not "most". ALL.

[edit: Let's not be so harsh on you.], the link you posted before is local inference benchmarks; you can train and/or run inference locally on a machine with TensorFlow, PyTorch or whatever you choose to use.

Again, not even sure why this is even mentioned or why you think it can only happen in the "CLOUD".

It likely doesn't mean what you think it means.

Actual ML benchmarks with tensor cores on vs off (so, on shaders) show that using tensor cores is mostly, although not always, faster, but even when faster, it's about 2-3.5 times, not tens of times.

There is no magic in having neural network inference run on non-tensor hardware (tensor cores sound cool, but are actually dumb, super straightforward packed math) on cards without tensor cores, certainly not for the faster cards, such as 1080Ti.

FFS, it's easy to spot the 101's. Good luck with your course.

No one thinks it's magic. No one; don't know where you're getting this from.

In summary, just so you're not confused: DLSS2.0 uses the tensor cores on the RTX cards (which is the only assertion anyone is making).

Last edited:

llien

Member

DLSS1.x runs on the shaders

You made this up, there is absolutely no source on this planet who claims that DLSS 1 runs on "shaders", while 2.0 on TCs.

you can train and or run inference locally on a machine with TensorFlow, PyTorch or whatever you chose to use.

You can train or run inference locally on pretty much any computing device, including my dish-washing machine.

Training the neural network in the context of our discussion, namely rendering games at higher resolution and lower resolution and using them as input/output to determine the NN weights, is not something even remotely reasonable to do on a gaming machine. It is completely done in data centers by NV.

llien

Member

- added instructions for 4-bit, 8-bit, 16-bit dot products (my guess SIMD instructions specific for ML)

They've called it "packed math" and it started with Vega, I think.

Basically, instead of 1 fp32 operation, it can very effectively process 2 fp16, utilizing the same hardware.
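To make the "2 fp16 in the space of 1 fp32" idea concrete, here is a small software sketch of the data layout only: two half-precision values share one 32-bit word, and an operation is applied to both lanes. The function names are made up for illustration, and real hardware does both lanes in a single instruction rather than unpacking like this.

```python
import struct
from typing import Tuple


def pack_two_fp16(a: float, b: float) -> int:
    """Store two half-precision floats in one 32-bit word (little-endian)."""
    return struct.unpack("<I", struct.pack("<2e", a, b))[0]


def unpack_two_fp16(word: int) -> Tuple[float, float]:
    """Recover the two half-precision lanes from a 32-bit word."""
    return struct.unpack("<2e", struct.pack("<I", word))


def packed_add(x: int, y: int) -> int:
    """Add both fp16 lanes of two packed words.

    This emulates in software what a packed-math instruction would do in
    one step; it is an illustration of the layout, not of the hardware.
    """
    x0, x1 = unpack_two_fp16(x)
    y0, y1 = unpack_two_fp16(y)
    return pack_two_fp16(x0 + y0, x1 + y1)


if __name__ == "__main__":
    lhs = pack_two_fp16(1.5, -2.0)
    rhs = pack_two_fp16(0.5, 4.0)
    print(unpack_two_fp16(packed_add(lhs, rhs)))
```

The same registers and the same 32 bits of bandwidth carry twice as many values, which is where the doubled throughput at lower precision comes from.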

"Tensor coring" is likely faster, but it is not even remotely "don't even think using shaders for it" kind of faster, especially for high end GPUs.

Last edited:

kuncol02

Banned

Hard to say by how much, but they reduced it by quite a bit. The XSX power supply is 315W, and they are rocking 1.8GHz and 52CUs for the GPU. According to AMD they achieved a 33% decrease in power consumption for the same performance compared to RDNA1.

AFAIK that 33% was with same performance not same clock speed, which means that clock for clock power consumption will not be that good. I would say that best we could expect is 20% (with 15% more realistic).
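A quick sketch of what those percentages would mean in watts, taking the ~300W figure quoted for the 5700XT earlier in the thread as the baseline. This is plain arithmetic under that assumption, not a measurement of either console.

```python
def reduced_power(baseline_watts: float, saving: float) -> float:
    """Power draw after applying a fractional saving to a baseline."""
    return baseline_watts * (1.0 - saving)


if __name__ == "__main__":
    baseline = 300.0  # the ~300W figure quoted in the thread, used as an assumption
    scenarios = {
        "AMD's 33% claim (same performance)": 0.33,
        "the 1/4 cut asked for earlier": 0.25,
        "20% (optimistic clock-for-clock)": 0.20,
        "15% (more realistic clock-for-clock)": 0.15,
    }
    for label, saving in scenarios.items():
        print(f"{label}: {reduced_power(baseline, saving):.0f} W")
```

So the difference between the 33% claim and a 15% clock-for-clock saving is on the order of 50W on a 300W baseline, which is the gap this argument is really about.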

This is also achievable with lower power. 300W is only needed if the chip is stressed. As far as I understand, the PS5 chip will clock up if it is not fully stressed (else it would really suck the power out of the wall). Also they seem to have cut out a few things. This makes the chip less complex and it can take more power. E.g. MS added (or let AMD add) support for Int8, Int4, ... instructions. This also makes the chip more complex, which will result in worse clock rates (than without such things).

What really struck me is the sheer size of the PS5 case. This could mean they have a big power supply and a big cooling solution. The other parts aren't that big. I assume the PS5 also contains a power supply in the range of 300W. Else they wouldn't have such a big case.

That was about the Radeon 5700XT and in response to the info that it's not able to reach those clock speeds.

If this is indeed accurate, it seems to be RDNA2 with cut features, effectively making it not full RDNA2, hence "between RDNA and RDNA 2".

But that's not what he said. He said more features with one cut.

What he said was

"It is based on RDNA 2, but it has more features and, it seems to me, one less."

Thugnificient

Banned

But that's not what he said. He said more features with one cut.

What he said was

"It is based on RDNA 2, but it has more features and, it seems to me, one less."

You’re late to the thread.

Vognerful

Member

I questioned the ethics of chasing a Sony engineer on social media, getting a personal DM saying he needs to check if it's OK to talk about it due to NDA, and then posting it on GAF.

That Sony engineer has probably been forced to give a statement to a media outlet by HR and got into trouble because of delusional warriors who can't just keep it to the forum.

Not classy. Some people need a reality check before they mess with others' livelihoods on social media.

Man, I would think you are a different person from the one who was responding to O'dium on his post about his explanation and then later on his discussion with this engineer. You came off as insulting and condescending. In fact I would say you were the catalyst that caused that thread to turn into a shit show and him having death threats made against him and his wife.

Or do you think this was justified because you believe he did something wrong?

pyrocro

Member

You made this up, there is absolutely no source on this planet who claims that DLSS 1 runs on "shaders", while 2.0 on TCs.

Because it's not in your head does not mean it does not exist. Time and space exist outside of you.

With a target resolution of 4K, DLSS 1.9 in Control is impressive. More so when you consider this is an approximation of the full technology running on the shader cores.

Nvidia DLSS in 2020: Stunning Results

We've been waiting to reexamine Nvidia's Deep Learning Super Sampling (DLSS) for a long time and after a thorough new investigation we're glad to report that DLSS...

www.techspot.com

You can train or run inference locally on pretty much any computing device, including my dish-washing machine.

Training the neural network in the context of our discussion, namely rendering games at higher resolution and lower resolution and using them as input/output to determine the NN weights, is not something even remotely reasonable to do on a gaming machine. It is completely done in data centers by NV.

I said:

It seems that you are aware that most training against large datasets is done in the cloud.

and you replied

No, not "most". ALL.

which is proof you're clueless.

So shift goalpost again? or you just going to ignore this.

I have $5 on the latter.

again DLSS2.0 is accelerated on the tensor cores.(again the only thing in contention here)

what next your going to say...

either way, I'm done with this.

geordiemp

Member

Man, I would think you are a different person from the one who was responding to O'dium on his post about his explanation and then later on his discussion with this engineer. You came off as insulting and condescending. In fact I would say you were the catalyst that caused that thread to turn into a shit show and him having death threats made against him and his wife.

Or do you think this was justified because you believe he did something wrong?

What.

Odium hassling a guy on social media and posting private messages and getting him to trouble and he had to make a statement to a media outlet. Wow.

What has that got to do with me ?

GAF is a gaming forum, nobody uses real names, we have banter and fun. What did I say that was insulting exactly? I asked him to debate and claimed he was not technical, he declined. End of. I like a bit of technical debate, but that's it, nothing outside of forums, no real names, nothing. END. He was condescending to me, but whatever.

Once you go onto Twitter and start harassing people at their work or in their private lives, that is out of bounds and it's bad form.

I would never ever stoop that low. That's Timdog level of idiocy and sad beyond sad.

Last edited:

MasterCornholio

Member

Not sure if I buy this. I worked in the media sector of gaming for many years.

People don't just talk when asked about things they aren't sure if they can or cannot talk about. Even with the developer I talked to, it was laid out very clearly what I could and could not say. We talked about more than I posted, but it was very clear what I was and was not allowed to say.

I have known this person a long time and they know I would never just blurt out information, which is why they were willing to talk to me. The scenario in question with Odium would have to have a lot of coincidences for it to be a screw up. Unless Odium/Gavin was purposefully willing to burn that bridge for the sake of a NeoGAF post, there is no way I buy that the information was given with this common mindset that it would not be shared.

He could have just made the information public instead of tweeting it. That's what he did with everything else that he shared with O'Dium. I mean if he didn't have any issues with the information being public why would he feel bad about what he said?

llien

Member

Because it's not in your head does not mean it does not exist.

Cite the part stating that NV claims DLSS 1.0 was not using tensor cores.

Oh, you can't? Color me surprised, pathetic one.

Tensor cores can do a huge 4x4x4 (my guess 4x4 with accumulate) calculation. That's a lot of transistors to pull that off.

Compared to CU/SMs, which are programmable, it's actually rather straightforward.

It isn't 4x4x4, it's 4x4+4.

Multiply-add (accumulate) is fairly standard GPU op.

This article is from 2004:

My guess is that Nvidia's solution runs concurrently with the shader cores, and so rendering is not impacted in terms of processing, but bandwidth is (as competition for that resource).

Welp, ML inference benchmarks show that TCs can speed things up anywhere from not at all to 4-ish times.

It is still not night and day and very far from "impossible to run without TCs". Especially for higher end cards with an abundance of CU/SM units.
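
For reference, a rough sketch of the operation being argued about above. It assumes the commonly described tensor core op, D = A×B + C on 4x4 matrices; the function name `mma_4x4` is made up for illustration, and plain Python numbers stand in for the reduced-precision formats real hardware uses. Under that assumption, "4x4 with accumulate" describes the shape of the operation and "4x4x4" is the number of multiply-adds it takes.

```python
def mma_4x4(a, b, c):
    """Return (d, fma_count) where d = a @ b + c for 4x4 nested lists.

    Illustrative only: this mimics the arithmetic, not how any real
    tensor core or shader core is implemented.
    """
    n = 4
    # Start from the accumulator matrix C.
    d = [[c[i][j] for j in range(n)] for i in range(n)]
    fma_count = 0
    for i in range(n):
        for j in range(n):
            for k in range(n):
                # One multiply-add per (i, j, k) triple.
                d[i][j] += a[i][k] * b[k][j]
                fma_count += 1
    return d, fma_count


identity = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
ones = [[1] * 4 for _ in range(4)]

d, fmas = mma_4x4(identity, ones, ones)
print(fmas)  # number of multiply-adds: 4 * 4 * 4
print(d[0])  # first row of I @ ones + ones
```

The same loop runs fine on ordinary programmable units, just with more instructions per result, which fits the point that dedicated hardware speeds this up rather than making it possible.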

Ass of Can Whooping

Member

Man, I would think you are a different person from the one who was responding to O'Dium on his post about his explanation and then later on his discussion with this engineer. You came off way insulting and condescending. In fact I would say you were the catalyst that caused that thread to turn into a shit show and led to death threats against him and his wife.

Or do you think this was justified because you believe he did something wrong?

He opened the gates himself. Pinning the death threats on one GAF member is nonsense when he's plastered himself all over social media with his real identity no less. It was bound to happen.

Bankai

Member

Heads were rolling when people speculated ...

I read ejaculated

Move on from the meta drama surrounding threats and the like on social media. Make a new thread in Off-Topic or take it to the meta thread.

If any such behavior is done on this site, report it to the staff accordingly.

Thank you.

Nikana

Go Go Neo Rangers!

He could have just made the information public instead of tweeting it. That's what he did with everything else that he shared with O'Dium. I mean if he didn't have any issues with the information being public why would he feel bad about what he said?

There are 1000 different reasons to not make it public. Look at what happened in this very forum with this information. People's families being talked about, credibility questioned, etc. And this is a small community. Imagine what would happen on Twitter. You still have a poster here saying this is Gavin's fault for harassing the guy, and I have not seen a single piece of information showing that the engineer was harassed in any capacity by Gavin.

If you look at what was said in the statement to the website in question, he says exactly what he meant. And he never actually refutes what he said, only says that it was worded badly. He made a comment that was true, and people who have no real idea of how the industry works or how games are made jumped onto it and turned it into something it wasn't. What he said originally isn't false, but he didn't give the proper context and details, and when you do that on the internet we all know what happens.

Look at the very threads here about stuff that is misspoken or not correct. It immediately starts drama and petty comments within seconds.

How do we as fans of these machines lose out at that?

We don't, and that's the point. The FUD narrative of one system not having certain features, when this same narrative was also pushed this gen with DX12 and Sony smashed that frivolous agenda to oblivion, is my point. When I mention history, you do know in what context I'm saying it, right? As in, propaganda like this has been spread before and Sony came out with similar or better solutions, APIs, features, etc. First it was that Sony doesn't have RDNA 2, and that's been debunked; then no ray tracing, lol, and that was annihilated with almost every game shown having it during the presentation, and the list goes on. In name Sony might not have certain important features, but be sure they will have important similar or better features; they have proven this in the past. Just because one company may do something better, that isn't the end of the world. Tech isn't always equal; if it was, it would be a boring, boring world.

So the narrative of one system not having certain features is bad, but Sony will have important or better features? Sony smashes frivolous agenda to oblivion. Sony comes out with better solutions, APIs or features.

I think we agree on a basic level, but look at your post. Sony, Sony, Sony. This nonsense goes both ways.

sendit

Member

I read the update and while it has information from the Sony developer clarifying what he meant, I can't find anything in the update that states the information contained in the original OP was faked, photoshopped or a product of the shadowy Xbox Discord.

What's embarrassing is the behavior of a certain group of fans that when confronted with information they don't like they attack, accuse, threaten, report and invent conspiracy theories.

The fact is we don't have conclusive evidence to suggest either XSX or PS5 is the full RDNA2 part/standard. They're both custom solutions based on RDNA2. To pick and choose which rumor/FUD to agree with is embarrassing. To jump to conclusions is also embarrassing.

DeepEnigma

Gold Member

The fact is we don't have conclusive evidence to suggest either XSX or PS5 is the full RDNA2 part/standard. They're both custom solutions based on RDNA2. To pick and choose which rumor/FUD to agree with is embarrassing. To jump to conclusions is also embarrassing.

Confirmation bias via rumors and FUD be damned.

It's probably closer to this reality. Both are based on RDNA2 IPC with whatever customization they choose.

AMD's RDNA2 Next-Generation Architecture For Radeon Is Not Identical To Xbox Series X Or PS5

Architectural reveals of the next-generation consoles have had enthusiasts discussing AMD's upcoming RDNA2 architecture with much gusto and has started the inevitable speculation on how RDNA2 will translate into next-generation Radeon graphics cards. Unfortunately, or fortunately, a source...

Hello. Thanks for the friendly and cordial discourse right now compared to the rest of the console boys going off their heads around us.

I can kinda understand why you'd think that I'm pushing a narrative but all I am saying is that both have taken the same basic building blocks of the engine and chassis and have 2 different design principles around it to do the job required.

The MS route is stated as highest and most powerful, so everything else had to be designed around that. But that requires innovation too. The fact is that CPUs, GPUs and everything that makes them improve performance come from innovation. RDNA 2 is an innovation. Zen 2 is an innovation.

Anything AMD, Intel or Nvidia do to improve on their CPUs and GPUs is an innovation. I get that innovation wise MS have been working on XVA, SFS and some audio technique. But they mostly seem to be software based.

Now PS are saying we're not going for top of the line power, so all this extra engine room and chassis space can be used elsewhere on the car. So their APU has inbuilt controllers and processors to do other stuff that helps overall balance.

I'm just looking at it from an engineering POV. It's like back in the 90s, when we had separate audio cards until everything was offloaded onto the CPU and audio cards became niche. But what Sony are saying with the Tempest engine is "Hey, remember when sound was processed elsewhere before instead of the CPU? Well we've put sound back on a dedicated chip, so that means free CPU cycles for other processes."

Which from the engineering perspective is cool because it's about the balance. The PS5 is a less powerful SoC overall at the top end, but it is supplemented by other techniques.

So it's more hardware based.

I'm old enough to have been through 5-6 console generations and the stupid wars. I know it's about the games and enjoyment, and any console war is fraught because it's not easy to own 2 machines, so praising your purchase and putting other people's choices down is a self image thing that I don't care about. For me it's about the tech and how game developers will leverage these new developments. But as someone interested in the tech, I can't help but be interested in the fact that Mark Cerny's entire design is "SEE HOW WE'VE ELIMINATED ALL THESE DESIGN BOTTLENECKS!"

And I do want to see...

I think we're in basic agreement, definitely, and I'd never be the one to tell someone not to be excited about something.

I think our difference in perspective comes from our interests maybe? You're obviously way more techy than I am. My career is very close to marketing, which means I'm far more cynical about things than others - sometimes more than I should be. For instance, getting rid of bottlenecks, in my mind, is what you do when you build a new console. But, even if we take the worst of the worst - Xbox's two and a half odd GB/s SSD without compression or any other enhancements - then that is still so much faster than anything else we've had to use before. And then you have PS5's extra speed or Xbox's Velocity solution ON TOP OF THAT. That is INSANE.

And if you don't get rid of bottlenecks, it's useless. You end up with the same thing you get when you put an SSD in a PS4. Faster, but not so much that it's lifechanging.

And I don't mean to talk down the process, because just getting to the point when you can shift that amount of data is mindblowing. But that's why these are the best console engineers in the world. The least I expect of them is that when they put an SSD in their new machine, that they make sure it can work at its top efficiency.

But the thing is, Cerny changed the conversation. Getting rid of bottlenecks stopped being part of the process and started to become something exclusive to Sony.

The fact Microsoft has the more powerful console is... not coincidence, but luck of the draw. I think Sony were probably surprised by the difference (although I don't buy the last minute changes theory). I think Microsoft probably thought they were in for a closer show of it. That was why there was so much "OUR most powerful console" hedging a few months ago. That's not a criticism. I just mean that Microsoft didn't set out to make a really powerful console and then looked at what else they were doing. Both consoles need balance, and I think both will be balanced.

But Cerny changed the conversation. He's a genius and he doesn't have an obvious sales pitch, so people don't think he's selling something. That's ridiculous - he made PS4 and he made PS5. Of course he's selling something. But that trust meant he changed the conversation. To the point where Xbox being the more powerful console on paper became a negative in some people's eyes. To the point where people still don't realise Microsoft has its own dedicated audio hardware and even its own SSD and I/O solution.

Hence the brute force vs engineering thing. I still don't feel it's a fair comparison, but we won't know for certain for a few months yet, and personally I can't stand the wait.