llien

Member

With the major Big Navi announcement coming in 3 days, let's fight over upscaling tech before it becomes much more important, shall we?

What resolution?

I find the "delivers... 4K" trend, started by the usual suspects, very annoying. No matter how many buzzwords surround a technology, upscaling is upscaling. There is no good reason to label it by the target resolution, other than to deceive.

"but isn't it sharpening", "but isn't it AI", "but deep learning", "but <insert buzzword>"

You have an original resolution, lower than 4K, which is upscaled (upsampled, transformed, bazinga-ed, susaned, pick your poison) to 4K.

At the end of the day: you can call it Susan, if it makes you happy.
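To put numbers on "upscaling is upscaling", here is a small Python sketch that computes what share of the output pixels is actually rendered when a lower internal resolution is upscaled to 4K. The helper name `rendered_fraction` and the two example internal resolutions are illustrative assumptions, not taken from either review.

```python
def rendered_fraction(render_w: int, render_h: int, out_w: int, out_h: int) -> float:
    """Share of output pixels that were really rendered; the rest are inferred by the upscaler."""
    return (render_w * render_h) / (out_w * out_h)


# Illustrative internal resolutions, both upscaled to 4K (3840x2160).
for w, h in [(2560, 1440), (1920, 1080)]:
    frac = rendered_fraction(w, h, 3840, 2160)
    print(f"{w}x{h} -> 3840x2160: {frac:.1%} of output pixels rendered")
```

Whatever the marketing calls it, the remaining pixels of the "4K" frame are filled in by the upscaler.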

Why only one game?

Death Stranding is the only game that supports both DLSS 2.0 and FidelityFX CAS (at least to my knowledge).

Who has reviewed?

Tom's Hardware and Ars Technica.

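Tom's Hardware (www.tomshardware.com): "Death Stranding With DLSS 2.0 Allows for 4K and 60 FPS on Any RTX GPU". Death Stranding comes to PC on July 14, but we were able to do some preliminary early access testing.

Ars Technica (arstechnica.com): "Why this month’s PC port of Death Stranding is the definitive version [Updated]". A major embargo is up, so we've added comparison images for anti-aliasing methods.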

What did they conclude?

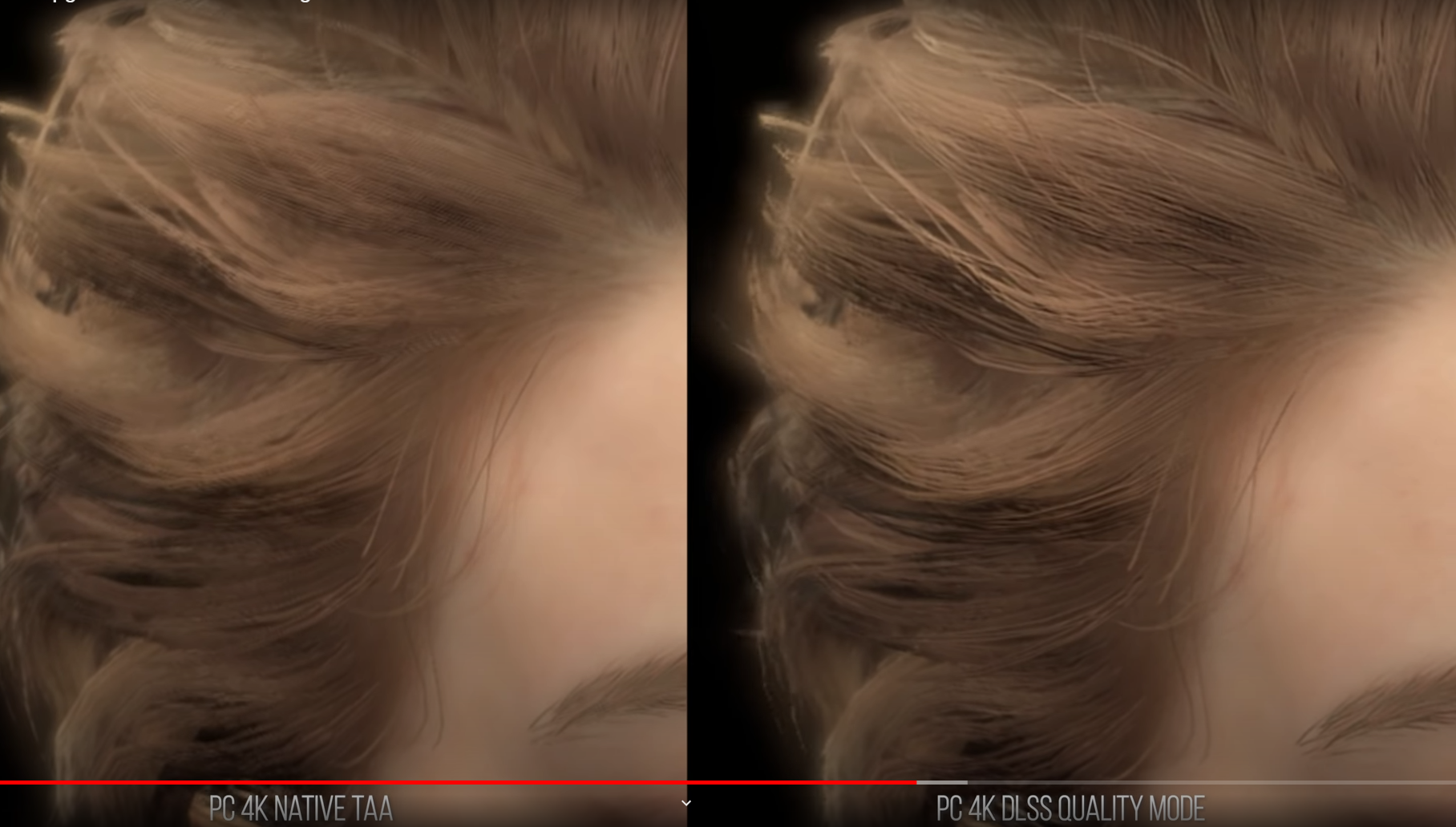

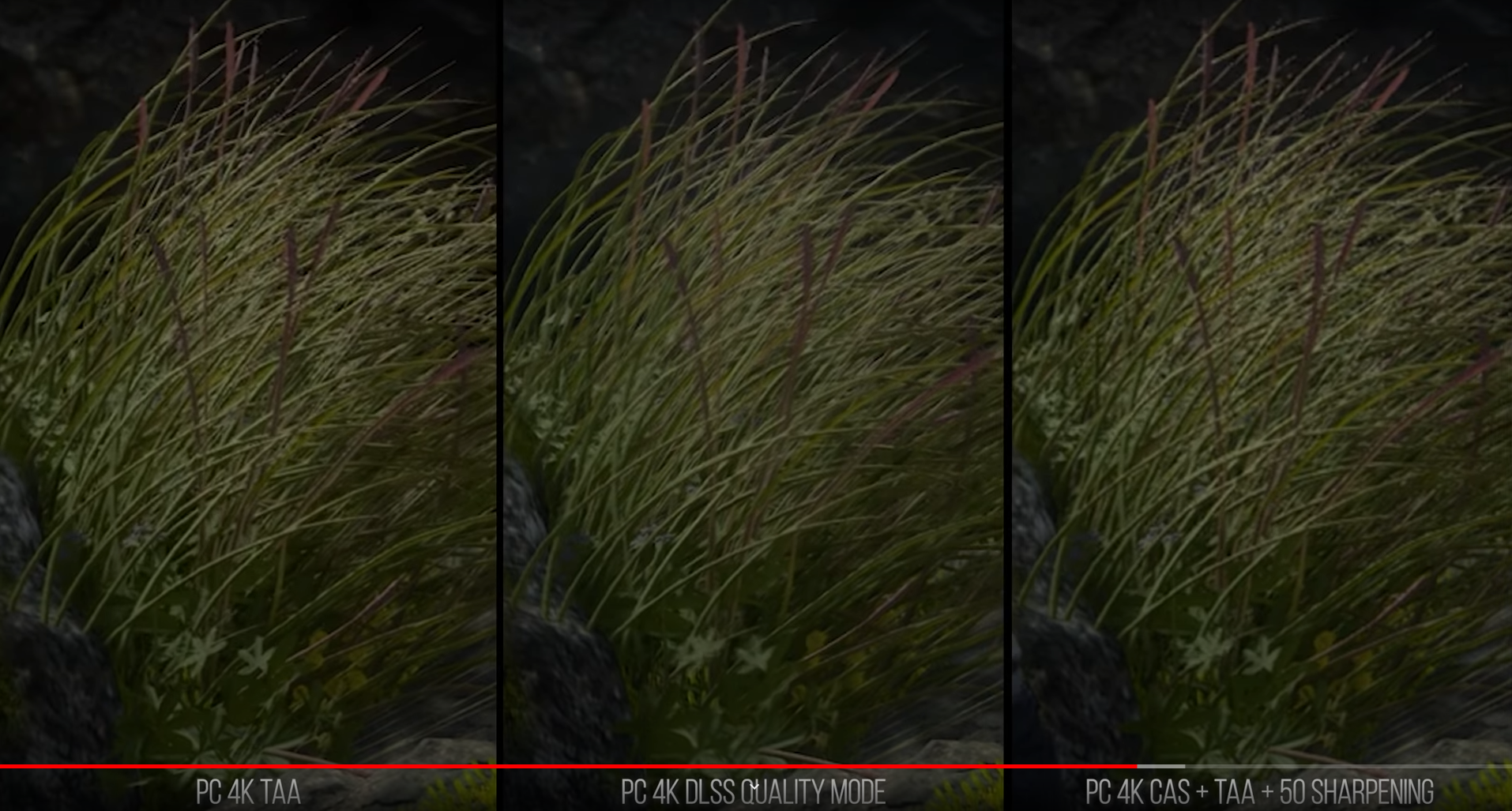

They disagree (see more below)

Tom's is short on details, while the Ars Technica article is filled with pictures; I'd advise you to actually check it out.

Ars Technica's conclusion:

FidelityFX CAS is both faster and delivers better quality.

Tom's Hardware:

FidelityFX does a great job, but... Very positive on DLSS 2.0 (with a rather frustrating "no difference unless you are pixel peeping").

Complains about shimmering seen with FidelityFX.

(I wish I knew how to embed juxtapose links)
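Since neither review explains what CAS (contrast adaptive sharpening) actually does, here is a heavily simplified Python sketch of the idea: look at each pixel's immediate neighbours and sharpen less where the neighbourhood has little headroom before black or white, so edges don't get over-sharpened and clip. It is loosely inspired by the general idea behind FidelityFX CAS but it is NOT AMD's shader; the function name, the grayscale list-of-rows image format and the `sharpness` mapping are assumptions for illustration.

```python
import math


def cas_sharpen(img, sharpness=0.5):
    """Simplified contrast-adaptive sharpening of a grayscale image.

    img is a list of rows with values in [0, 1]. Border pixels are copied
    unchanged. Loosely inspired by FidelityFX CAS; not AMD's code.
    """
    rows, cols = len(img), len(img[0])
    # Negative neighbour weight: -1/8 at sharpness 0, -1/5 at sharpness 1.
    peak = -1.0 / (8.0 + (5.0 - 8.0) * sharpness)
    out = [row[:] for row in img]
    for y in range(1, rows - 1):
        for x in range(1, cols - 1):
            c = img[y][x]
            n, s = img[y - 1][x], img[y + 1][x]
            w, e = img[y][x - 1], img[y][x + 1]
            lo, hi = min(c, n, s, w, e), max(c, n, s, w, e)
            # Headroom before black/white relative to the local maximum:
            # the less headroom, the weaker the sharpening (limits halos/clipping).
            amp = min(lo, 1.0 - hi) / hi if hi > 0.0 else 0.0
            wgt = math.sqrt(min(max(amp, 0.0), 1.0)) * peak
            # Blend centre with cross neighbours; normalised so flat areas stay unchanged.
            out[y][x] = (wgt * (n + s + w + e) + c) / (4.0 * wgt + 1.0)
    return out


edge = [[0.2, 0.2, 0.8, 0.8] for _ in range(3)]
print(cas_sharpen(edge)[1])  # dark side of the edge gets darker, bright side brighter
```

Note that this works purely on the current frame; there is no temporal component in the sketch.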

Side note on what DLSS 2 & 1 are (and what they are not)

1.0

(source: NVIDIA) 1.0 was a "true" AI-based approach, with a neural network trained per game in datacenters using higher-resolution images. At least in theory it could have led to great results (the NN would be biased in ways that match the visuals of a particular game), but that didn't quite work out.

2.0

(source: AnandTech) 2.0 ditched per-game training in the datacenter; instead it uses TAA (temporal anti-aliasing) plus some "one size fits all" NN transformations.

Hence it has the weaknesses typical of TAA (problems with small, fast-moving objects; blur) as well as its strengths (reduced shimmering).
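A rough illustration of both sides, assuming the simplest possible temporal accumulation: an exponential moving average per pixel, with no motion-vector reprojection and no history clamping (real TAA, and DLSS 2.0 on top of it, do considerably more). A detail that flickers between frames gets averaged out, while a pixel an object has just left keeps a fading trail of it. The function name and the blend factor `alpha` are hypothetical.

```python
def temporal_accumulate(frames, alpha=0.1):
    """Blend each new frame into a running history: h = (1 - alpha) * h + alpha * current.

    frames is a list of 1D pixel rows. Reprojection and clamping are omitted.
    """
    history = list(frames[0])
    outputs = [list(history)]
    for frame in frames[1:]:
        history = [(1 - alpha) * h + alpha * c for h, c in zip(history, frame)]
        outputs.append(list(history))
    return outputs


# Shimmering: a thin detail hit by one frame's sample and missed by the next,
# so the raw value flips between 1 and 0 every frame.
shimmer = [[float(i % 2 == 0)] for i in range(200)]
out = temporal_accumulate(shimmer)
print("raw swing: 1.0, accumulated swing:", round(abs(out[-1][0] - out[-2][0]), 3))

# Ghosting/blur: a small object sits on pixel 0 for 50 frames, then moves to pixel 1.
moving = [[1.0, 0.0]] * 50 + [[0.0, 1.0]] * 10
out = temporal_accumulate(moving)
print("old position, 10 frames later:", round(out[-1][0], 3))
```

The same averaging that calms the flicker is what smears small, fast-moving objects.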

What about something-something-deep-learning?

It took place in datacenters, with 1.0. With neither version was it ever meant to run on customer PCs.

Briefly on Neural Networks

It started when humans discovered how neurons work:

A bunch of "inputs".

A bunch of "outputs".

When the signal level on the inputs reaches a certain threshold, the neuron signals its active state on the outputs.

How strongly each input counts towards that level is called its "weight" (just a single value per input, cool, eh?).
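A minimal Python sketch of such a neuron: a classic threshold unit, not any particular library's API. The AND weights and threshold are hand-picked for illustration.

```python
def neuron(inputs, weights, threshold):
    """Fires (returns 1) when the weighted sum of the inputs reaches the threshold."""
    activation = sum(x * w for x, w in zip(inputs, weights))
    return 1 if activation >= threshold else 0


# Hypothetical hand-picked weights that make the neuron behave like logical AND.
AND_WEIGHTS, AND_THRESHOLD = [1.0, 1.0], 1.5
for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", neuron([a, b], AND_WEIGHTS, AND_THRESHOLD))
```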

There are many ways to connect neurons (in humans, very distinct structures have been found, e.g. in the eyes). One can create networks that differ wildly in the number of neurons, their arrangement, the number of layers and the types of connections between layers. Once the NN structure is settled, the network needs to be trained.
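To show why structure matters, a sketch with hand-picked, hypothetical weights: a single threshold neuron cannot compute XOR, but a tiny two-layer network can. The hidden layer computes OR and NAND, and the output neuron ANDs them together.

```python
def neuron(inputs, weights, threshold):
    """Fires (returns 1) when the weighted sum of the inputs reaches the threshold."""
    return 1 if sum(x * w for x, w in zip(inputs, weights)) >= threshold else 0


def layer(inputs, neurons):
    """Every neuron in a layer sees the same inputs; their outputs feed the next layer."""
    return [neuron(inputs, w, t) for w, t in neurons]


HIDDEN = [([1.0, 1.0], 0.5),     # OR
          ([-1.0, -1.0], -1.5)]  # NAND
OUTPUT = [([1.0, 1.0], 1.5)]     # AND


def xor_net(a, b):
    return layer(layer([a, b], HIDDEN), OUTPUT)[0]


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_net(a, b))
```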

It is fed sample inputs, and the weights are adjusted until it produces the desired outputs. This is called "learning" or "training", and it is a very time-consuming process. Note that it's up to the trainer how many sample inputs to feed to the network.
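Training in its most basic form, as a sketch: the classic perceptron learning rule nudges the weights (and the threshold) a little after every wrong answer and repeats over the samples until nothing is wrong. Real deep networks are trained with gradient descent and backpropagation, which this does not show. `train_perceptron`, the learning rate and the epoch limit are illustrative choices; the latter two are exactly the kind of knobs left to the trainer.

```python
def neuron(inputs, weights, threshold):
    """Fires (returns 1) when the weighted sum of the inputs reaches the threshold."""
    return 1 if sum(x * w for x, w in zip(inputs, weights)) >= threshold else 0


def train_perceptron(samples, epochs=100, lr=0.1):
    """Perceptron learning rule: after each wrong answer, move the weights towards the target."""
    weights, threshold = [0.0] * len(samples[0][0]), 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, target in samples:
            err = target - neuron(inputs, weights, threshold)  # -1, 0 or +1
            if err:
                mistakes += 1
                weights = [w + lr * err * x for w, x in zip(weights, inputs)]
                threshold -= lr * err  # should have fired: lower the bar, and vice versa
        if mistakes == 0:  # every sample answered correctly: done
            break
    return weights, threshold


AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
w, t = train_perceptron(AND_SAMPLES)
print("learned weights:", w, "threshold:", t)
```

Once this loop finishes, the learned `w` and `t` are all that is needed to use the neuron.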

Once training is done, a set of weights is known. Using those weights to process new input is called inference. It is much, much less computationally expensive than training; even mobile phones can do it (though of course that also depends on the complexity of the network).
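Back-of-the-envelope arithmetic for "much, much less expensive", as a sketch: count multiply-accumulate operations (MACs) for a small fully connected network. The layer sizes, sample count and epoch count are made up, and the 3x factor for a forward plus backward pass is a common rule of thumb (an assumption), not an exact figure.

```python
def forward_macs(layer_sizes):
    """Multiply-accumulates for one forward pass through a fully connected network."""
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))


# Hypothetical network and training run, for illustration only.
LAYERS = [784, 128, 10]
SAMPLES, EPOCHS = 60_000, 10
TRAIN_FACTOR = 3  # rule of thumb: forward + backward pass ~ 3x a forward pass

inference = forward_macs(LAYERS)
training = inference * TRAIN_FACTOR * SAMPLES * EPOCHS
print(f"inference: {inference:,} MACs per input")
print(f"training:  {training:,} MACs in total (~{training // inference:,}x one inference)")
```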

Being massively parallel (think of CUs as lots of small, dumb CPU cores), GPUs are inherently good at both inference and training of NNs. Tensor cores could improve performance, or not; it depends.
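Why GPUs fit: a network layer is essentially a matrix multiplication, and each output tile of it can be computed independently of the others. Tensor cores are hardware for small-matrix multiply-accumulate (D = A x B + C); the Python sketch below mimics that by building a matrix multiply out of 4x4 tile operations. The tile size and function names are illustrative assumptions, and plain Python is of course not how a GPU executes it.

```python
TILE = 4  # illustrative tile size


def mma_tile(a, b, c):
    """D = A x B + C on TILE x TILE blocks: the kind of operation a tensor core accelerates."""
    return [[c[i][j] + sum(a[i][k] * b[k][j] for k in range(TILE))
             for j in range(TILE)] for i in range(TILE)]


def block(m, bi, bj):
    """Extract tile (bi, bj) from matrix m (a list of rows)."""
    return [row[bj * TILE:(bj + 1) * TILE] for row in m[bi * TILE:(bi + 1) * TILE]]


def tiled_matmul(a, b):
    n, k, m = len(a), len(b), len(b[0])
    assert n % TILE == 0 and k % TILE == 0 and m % TILE == 0, "dims must be multiples of TILE"
    out = [[0.0] * m for _ in range(n)]
    # Every output tile (bi, bj) is independent of all the others,
    # so on a GPU they can all be computed at the same time.
    for bi in range(n // TILE):
        for bj in range(m // TILE):
            acc = [[0.0] * TILE for _ in range(TILE)]
            for bk in range(k // TILE):
                acc = mma_tile(block(a, bi, bk), block(b, bk, bj), acc)
            for i in range(TILE):
                out[bi * TILE + i][bj * TILE:(bj + 1) * TILE] = acc[i]
    return out


A = [[i * 4 + j for j in range(4)] for i in range(8)]   # 8x4
B = [[(i + j) % 5 for j in range(8)] for i in range(4)]  # 4x8
print(tiled_matmul(A, B)[0])
```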

Is the NN approach inherently superior to procedural methods?

In general, no, not at all. Take a calculator, for instance.

It depends on the type of problem. The more ambiguous, "somehow catch on" type it is, the more likely an NN is to excel at it.
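For contrast, the classic procedural way to upscale: bilinear interpolation, where every output pixel is a fixed-formula blend of the four nearest input pixels, with nothing learned from data. A sketch for a grayscale list-of-rows image; the function name and the pixel-centre mapping convention are assumptions.

```python
def bilinear_upscale(img, out_w, out_h):
    """Procedural upscaling: blend the four nearest input pixels with fixed weights."""
    in_h, in_w = len(img), len(img[0])
    out = []
    for y in range(out_h):
        # Map the output pixel centre back into input coordinates, clamped to the image.
        sy = min(max((y + 0.5) * in_h / out_h - 0.5, 0.0), in_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, in_h - 1)
        fy = sy - y0
        row = []
        for x in range(out_w):
            sx = min(max((x + 0.5) * in_w / out_w - 0.5, 0.0), in_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, in_w - 1)
            fx = sx - x0
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


small = [[0.0, 1.0], [0.0, 1.0]]
for r in bilinear_upscale(small, 4, 4):
    print(r)
```

It is fast and predictable, but it can only smooth between existing samples; it cannot invent detail that was never rendered.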

And your point was?

1) There is only one game that supports both, and the results are far from clear; it is highly subjective.

2) A world where some games get AMD's upscaling tech and others get NVIDIA's would be terrible; think about that. Luckily, though, FidelityFX is cross-platform.

What resolution?

I find the "delivers... 4k" trend, started by known types, very annoying. No matter how many buzzwords are surrounding some technology, upscaling is upscaling. There is no good reason to call it by the target resolution, other than deception.

"but isn't it sharpening", "but isn't it AI", "but deep learning", "but <insert buzzword>"

You have original resolution, lower than 4k, which is upscaled (upsampled, transformed, bazinga-ed, susaned, pick your poison) into 4k.

At the end of the day: you can call it Susan, if it makes you happy.

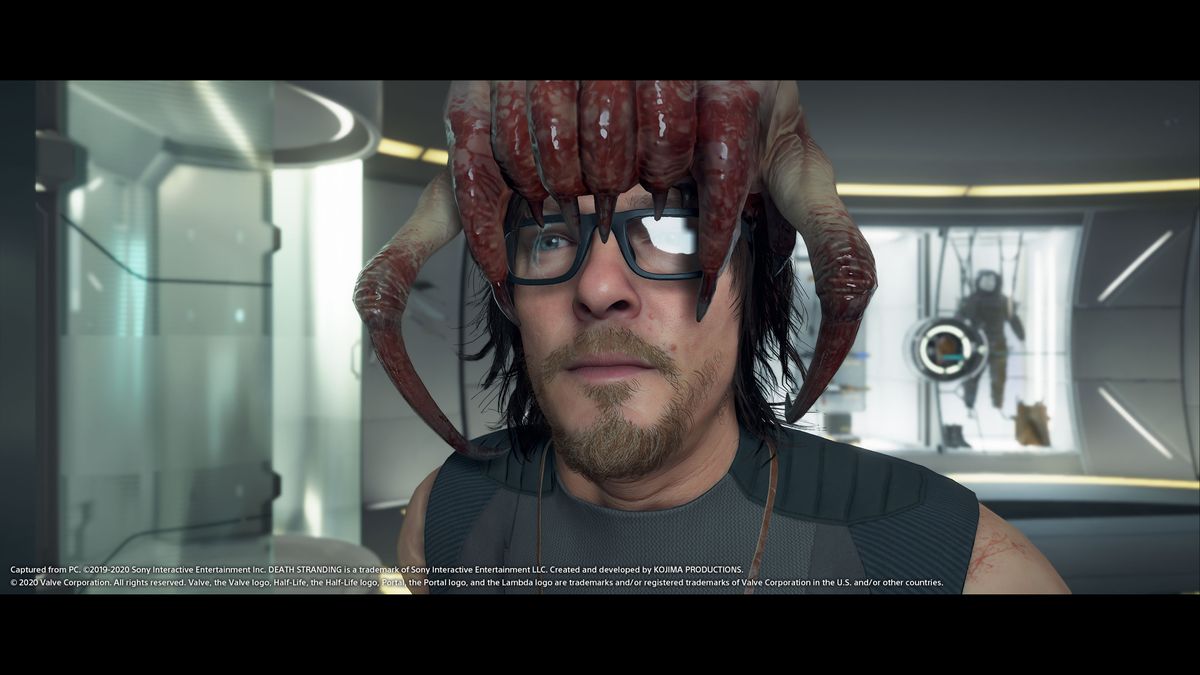

Why only one game?

This is the only game that supports both (at least to my knowledge)

Who has reviewed?

Toms Hardware and Ars Technica.

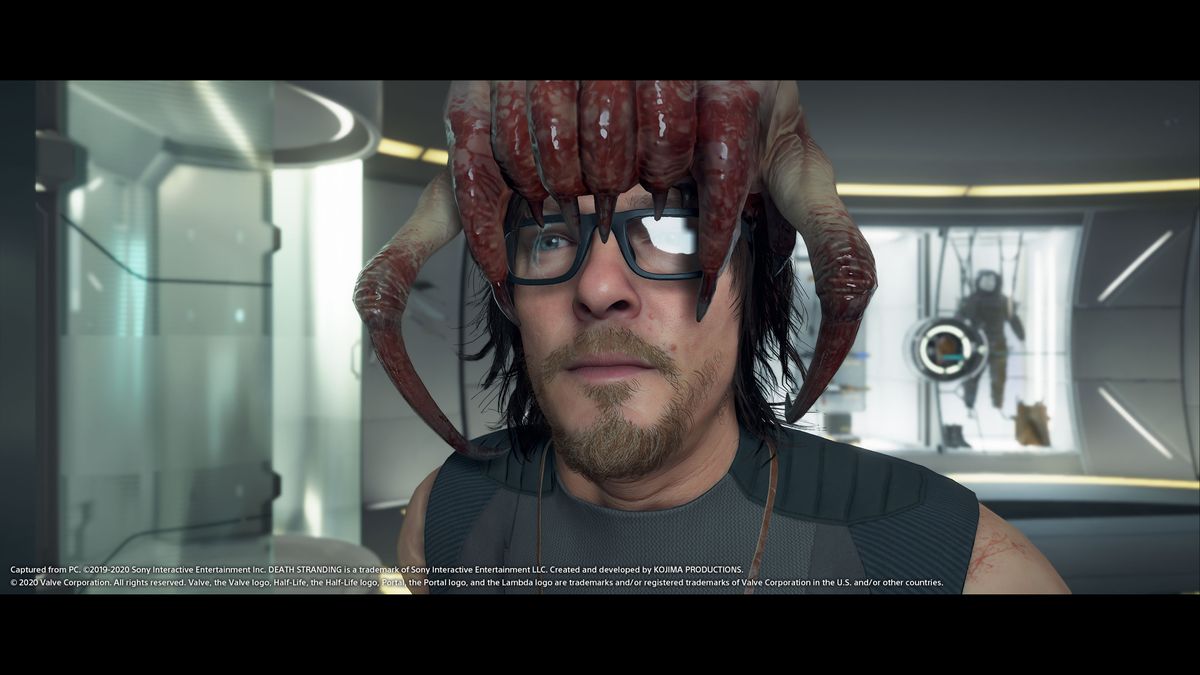

Death Stranding With DLSS 2.0 Allows for 4K and 60 FPS on Any RTX GPU

Death Stranding comes to PC on July 14, but we were able to do some preliminary early access testing.

Why this month’s PC port of Death Stranding is the definitive version [Updated]

A major embargo is up, so we've added comparison images for anti-aliasing methods.

What did they conclude?

They disagree (see more below)

Toms is short on details, wile Ars technica article is filled with pictures, I'd advise you to actually check out the article.

Ars Technica's conclusion:

Fidelity FX CAS is both faster and delivers better quality.

Toms:

Fidelity FX does a great job, but... Very positive on DLSS 2.0. (and a rather frustrating "no difference unless you are pixel peeping")

Complains about shimmering seen with FidelityFX.

(I wish I knew how to embed juxtapose links)

Side note on what DLSS 2 & 1 are (and what they are not)

1.0

(source: nvidia) 1.0 was "true" AI based approach, with neural network being trained per game at datacenters using higher resolution images. At least in theory it could have led to great results (NN would be biased in ways that match visuals in particular game) but that didn't quite work.

2.0

(source: anandtech) 2.0 ditched per game training, uses TAA (temporal anti-aliasing) and some "one fits all" NN transformations, no more per game training at datacenter.

Hence it has weaknesses typical for TAA (problems with small, quickly moving objects, blur) as well as strengths (reduces shimmering)

What about something-something-deep-learning?

Took place with 1.0 at datacenters. Was never meant to run on customer PCs with neither version.

Briefly on Neural Networks

It started when humans discovered how neurons work:

A bunch of "inputs".

A bunch of "outputs"

When certain signal level on inputs is reached, neuron signals it's active state on the outputs.

Signal level at which neuron triggers is called "weight". (just a single value, cool, eh?)

Apparently there are many ways to connect neurons (and in humans, very distinct structures have been discovered, e.g. in eyes), one can create networks with wildly different number of neurons, their alignment, number of layers, types of connections between layers. Once NN structure is settled, it needs to be trained.

It is fed inputs, and weights are adjusted to achieve the desired output. This is "learning" or "training" a very time consuming process. Note how it's up to the trainer how many sample inputs to feed to the network.

Once training is done, a set of weights is known. Using those weights to process the input is called inference. It is a much much less computationally expensive process than training, even mobile phones can do that. (of course it also depends on the complexity of the network)

Being massively parallel (think of CUs as small, dumb CPU cores), GPUs are inherently good at both inference and training of NNs. Tensor could improve performance, or not, it depends.

Is NN approach inherently superior to procedural methods?

In general, no, not at all. See, for instance, calculator.

It depends on the type of the problem. The more ambiguous, "somehow catch the clue" type it is, the more likely is NN to excel at it.

And your point was?

1) There is only one game that supports both and results are far from clear, it is highly subjective

2) World when we'd get some games with upscaling tech of AMD and some games with upscaling by NV would be terrible, think about that. Luckily, though, FidelityFX is cross-platform.

Last edited: