There's so much confusion about ray tracing vs path tracing, and nobody's to blame, because you have different definitions between the old papers from before computers, the offline rendering solutions and the gaming solutions. It confuses even the Cherno guy on YouTube, who is an ex-DICE engine engineer.

Paper/offline theory is:

Ray tracing is follow the needle: viewpoint → hit object → bounce in the direction of the light sources (the point you wanted to contribute to the scene).

Path tracing is that same ray as above → bouncing between scene objects → to the light source surface.

If you want a simplified explanation of ray tracing vs path tracing in offline renderers, not a 1980s paper, it's pretty good. It's more from a Blender artist's perspective.

"Pure" ray tracing as defined here still requires cheats similar to rasterization. One example is that this solution should only give hard shadows, so soft shadows have to be tricked in, and so do caustics.

For them, in offline rendering, typically:

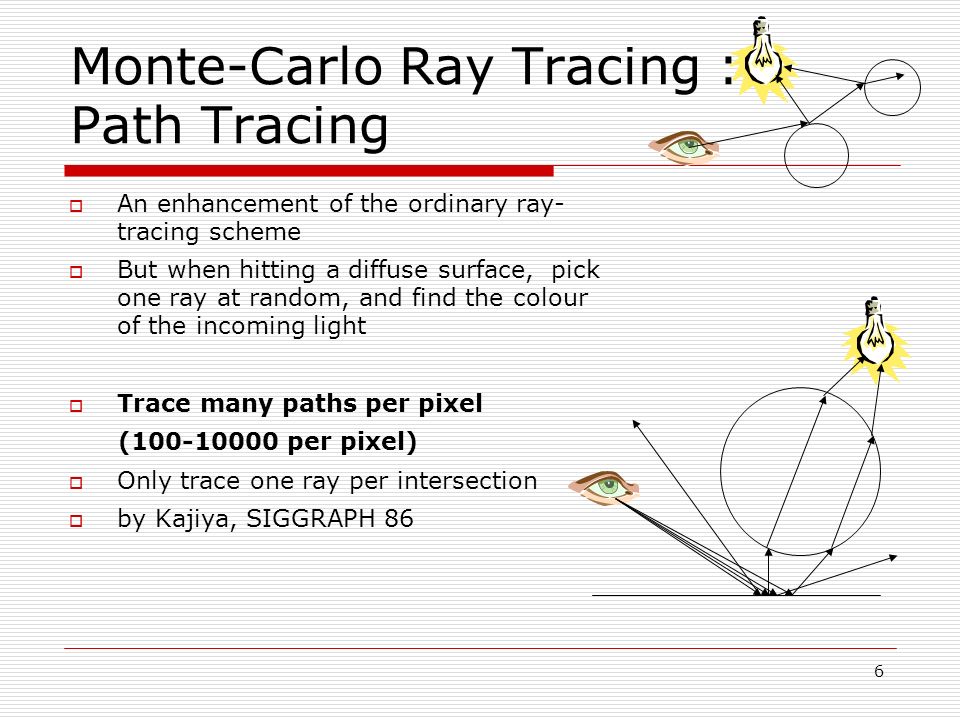

Ray tracing is 1 ray per pixel.

Path tracing is tens, hundreds or thousands of rays per pixel.
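To see why the ray count per pixel matters so much, here's a toy sketch in Python. It's not a renderer: `pixel_estimate` and `noise` are made-up names, and each "ray" is just a coin flip that finds light 25% of the time (a number picked purely for illustration). The only point is that averaging more random samples per pixel gives a less noisy pixel.

```python
import random
import statistics

def pixel_estimate(samples_per_pixel, rng):
    """Average `samples_per_pixel` random samples for one pixel.

    Toy stand-in for a traced ray: each sample is 1.0 (found light) with
    probability 0.25, else 0.0, so the true pixel value is 0.25.
    """
    hits = sum(1.0 for _ in range(samples_per_pixel) if rng.random() < 0.25)
    return hits / samples_per_pixel

def noise(samples_per_pixel, trials=2000, seed=1):
    """Standard deviation of the estimate over many independent pixels."""
    rng = random.Random(seed)
    estimates = [pixel_estimate(samples_per_pixel, rng) for _ in range(trials)]
    return statistics.pstdev(estimates)

for spp in (1, 16, 256):
    print(spp, "samples per pixel -> noise", round(noise(spp), 3))
```

The noise only drops with the square root of the sample count, which is why going from 1 ray to thousands per pixel cleans up the image but costs so much.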

But that's not for gaming. In gaming, ray tracing fires a pre-defined number of rays per frame.

A ray that hits a surface and leaves at the same angle to the surface normal as it arrived is a specular reflection and looks mirror-like.
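The mirror case is simple enough to write down. A minimal sketch, assuming the incoming direction `d` points toward the surface and the normal `n` is unit length; `dot` and `reflect` are just helper names made up here.

```python
def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))

def reflect(d, n):
    """Mirror direction d about the unit normal n: r = d - 2 (d . n) n."""
    k = 2.0 * dot(d, n)
    return tuple(di - k * ni for di, ni in zip(d, n))

# A ray coming down at 45 degrees onto a floor whose normal points up (+y).
incoming = (1.0, -1.0, 0.0)
normal = (0.0, 1.0, 0.0)
outgoing = reflect(incoming, normal)
print(outgoing)  # (1.0, 1.0, 0.0)
```

The component along the normal flips sign and everything else stays the same, which is exactly the "same angle in, same angle out" behavior.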

Some people take the ray tracing approach and divert the ray randomly at each frame for a set number of bounces until it gets back to the light source. This is what you do when you want to simulate a rough surface material and you have diffuse reflection. If you're estimating a bounce, even randomly, you're already calculating a "path" per se. This is where both start to intertwine and confuse the fuck out of everybody.
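For the rough surface case, here's a sketch of one way to "divert the ray randomly". It assumes a perfectly diffuse (Lambertian) surface and uses the common trick of adding a random unit vector to the normal, which favors directions close to the normal. `random_unit_vector` and `diffuse_bounce` are illustrative names, not anything from an actual engine.

```python
import math
import random

def random_unit_vector(rng):
    """Uniformly distributed direction on the unit sphere."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    return (r * math.cos(phi), r * math.sin(phi), z)

def diffuse_bounce(normal, rng):
    """Random outgoing direction on the normal's side of the surface."""
    while True:
        u = random_unit_vector(rng)
        d = tuple(n + x for n, x in zip(normal, u))
        length = math.sqrt(sum(c * c for c in d))
        if length > 1e-8:  # retry in the rare case u is almost exactly -normal
            return tuple(c / length for c in d)

rng = random.Random(0)
normal = (0.0, 1.0, 0.0)
bounces = [diffuse_bounce(normal, rng) for _ in range(1000)]
print(bounces[0])
```

Run it per bounce for a set number of bounces and you get the "random path" described above; a different seed each frame gives a different path each frame.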

But the "random" nature of the above approximation for ray tracing is not really how light behaves physically. This is where scholars start to separate "ray" from "path" tracing: they reserve the name path tracing for physically accurate paths.

Path tracing "tries" this by removing the "random" factor and really tries to simulate how light would bounce around in a physically correct way. But it's still too heavy to just cast a ray per pixel, so here also comes random selection of light sources and shadow casts. This selection is stochastic: most often the Monte Carlo solution in papers, probably something in-house for Nvidia.
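The random light selection part can be sketched like this. It's a toy under heavy assumptions: each light is reduced to a single number (its unshadowed contribution at the shading point), and the light is picked uniformly, which is the simplest Monte Carlo choice and not what any particular renderer ships. The function names are made up.

```python
import random

def exact_direct_light(contributions):
    """Reference answer: evaluate every light (too expensive with many lights)."""
    return sum(contributions)

def sampled_direct_light(contributions, rng):
    """One-sample Monte Carlo estimate: pick one light uniformly at random
    and divide by the probability of picking it (1/N), i.e. multiply by N."""
    n = len(contributions)
    i = rng.randrange(n)
    return contributions[i] * n

lights = [0.1, 0.5, 2.0, 0.4]
rng = random.Random(42)
frames = 20000
average = sum(sampled_direct_light(lights, rng) for _ in range(frames)) / frames
print(exact_direct_light(lights), average)
```

Any single estimate is noisy, but the average over many samples lands on the exact sum. The hard part in practice is picking lights in a smarter way than uniformly, so the noise goes away faster.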

Nvidia's definition of path tracing is this.

What's revolutionary is what Nvidia found and what I believe they are using for the Cyberpunk 2077 Overdrive patch (copy-pasted from the ray tracing thread, because I'm lazy):

Well, they've done a brilliant hybrid: RTXDI, based on ReSTIR, can handle millions of lights with no penalty. They use that for direct illumination, and then they use a path tracing solver for indirect bounces.
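The core trick ReSTIR builds on is weighted reservoir sampling: stream through the candidate lights once, keep a single survivor, and end up picking lights in proportion to their weight. Below is a minimal sketch of just that piece. It is not RTXDI code; the `Reservoir` class and `pick_light` are names assumed for illustration, and the real thing also reuses reservoirs between neighboring pixels and previous frames.

```python
import random

class Reservoir:
    """Keeps one candidate from a stream, chosen with probability
    proportional to its weight."""

    def __init__(self):
        self.sample = None
        self.weight_sum = 0.0
        self.count = 0

    def update(self, candidate, weight, rng):
        self.weight_sum += weight
        self.count += 1
        # Replace the survivor with probability weight / weight_sum.
        if weight > 0.0 and rng.random() * self.weight_sum < weight:
            self.sample = candidate

def pick_light(light_weights, rng):
    """Return the index of one light, picked proportionally to its weight."""
    reservoir = Reservoir()
    for index, weight in enumerate(light_weights):
        reservoir.update(index, weight, rng)
    return reservoir.sample

rng = random.Random(7)
weights = [1.0, 0.0, 3.0]  # hypothetical per-light importance at one pixel
counts = [0, 0, 0]
for _ in range(20000):
    counts[pick_light(weights, rng)] += 1
print(counts)
```

The light with weight 3.0 gets picked about three times as often as the one with weight 1.0, and the zero-weight light never gets picked, all in a single pass with constant memory per pixel.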

I mean, no, it's not a Monte Carlo path tracing solution, but that's the point: for real-time graphics we move away from CGI solutions.

With RTXDI being based on ReSTIR, I can guess the solution they provided for the game is their ReSTIR PT presentation from SIGGRAPH 22.

We apply our GRIS theory in a proof-of-concept path tracing algorithm we call ReSTIR path tracing (ReSTIR PT). We build on the Falcor GPU rendering framework [Kallweit et al. 2021], and implement ReSTIR PT as chained GRIS passes, per Section 6.3. ReSTIR PT can use any shift map to reuse paths between pixels, but we implement the two from the previous section: a hybrid shift combining random replay and reconnection with our lobe specific improvements, and a simpler reconnection shift that always reconnects to the first indirect vertex. Like many path tracers, ours only evaluates the sampled BSDF lobe for BSDF-sampled vertices and evaluates all lobes for NEE-sampled vertices. We treat lobe selections as additional path parameters, as described in Section 7.6, using the sampled lobe roughness to choose between reconnection and random replay.

Our ReSTIR PT implementation handles full surface-to-surface light transport. Volumetric media requires a volumetric shift map; Lin et al. [2021] implicitly defines one possibility and Gruson et al. [2018] propose another, though finding fast volumetric shifts for resampling remains interesting future work.

ReSTIR PT is an unbiased global illumination method that better handles specular light transport, thanks to supporting arbitrary shift maps. While we expect benefits to direct illumination from our GRIS theory, our implementations primarily address indirect light. We use ReSTIR DI [Bitterli et al. 2020] for direct lighting.

I honestly don't know what witchcraft Nvidia did for this. There's so much research on this subject beyond just Nvidia anyway, as you can see from the list of references. Generalized resampled importance sampling (GRIS) is like memory storage for reusing arbitrary paths, and they use that to derive a path-traced resampler. The paper compares their solution to "naive" path tracing.

The whole point of real-time ray/path tracing for gaming is to find these tricks/algorithms to beat the other solutions and get as close as possible to the reference image as quickly as possible.

ReSTIR is a game changer compared to Monte Carlo algorithms for speed and noise levels.

If this works well for Cyberpunk 2077, we're in a revolution of "ray/path" tracing. The next hardware that accelerates this solution even further will revolutionize game rendering.

What's to remember here is that there are discrepancies between papers, offline renderers and gaming solutions. Even the ray tracing we have right now comes with tweaks and hacks that approximate beyond the very definition in the initial ray tracing papers. Rendering is always a hack of something. Path tracing in real time is a hack of what the offline renderers are doing, and ReSTIR DI + ReSTIR PT are a hack of a hack of a hack.

But if the result in the end is closer to the reference image and faster, who the fuck cares?