Jason Schreier's industry sources: PS5 is superior in ways that Sony has not communicated yet

- Thread starter LordOfChaos

anothertech

Member

Dam. Jason was right for once.

Md Ray

Member

Jason said: Yes, exactly. The number everyone's looking at is teraflops, which is essentially the maximum speed that a graphics card can run at. I believe it stands for floating point operations per second. So everybody's now seeing this spec sheet and they see PS5, 10.2 teraflops, and Xbox Series X, 12 teraflops. And it's like, oh my god, the Xbox is more powerful than the PlayStation. But meanwhile, the people I've been talking to over the past few months and the past couple years who are actually working on the PlayStation have pretty much unanimously all said: This thing is a beast. This thing is one of the coolest pieces of hardware that we've ever seen, we've ever used before. There are so many things here that are revolutionary, so many behind-the-scenes tools and features, APIs, and all sorts of other stuff that is way beyond my scope of comprehension. This is why I'm a reporter, and not an engineer.

Music to my ears.

He was right all along.
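For anyone wondering where the spec-sheet teraflop numbers in the quote come from, here is a minimal sketch of the usual back-of-the-envelope formula. It assumes the publicly listed figures (36 CUs at up to 2.23 GHz for PS5, 52 CUs at 1.825 GHz for Series X) and the standard 64 lanes per CU with 2 operations per clock (a fused multiply-add); the function name is made up for illustration. It only gives a theoretical peak and says nothing about real game performance.

```python
def peak_tflops(compute_units: int, clock_ghz: float,
                lanes_per_cu: int = 64, ops_per_lane_per_clock: int = 2) -> float:
    """Theoretical FP32 peak: CUs x lanes x 2 (multiply + add) x clock in GHz."""
    gflops = compute_units * lanes_per_cu * ops_per_lane_per_clock * clock_ghz
    return gflops / 1000.0

# Assumed public specs; the PS5 clock is a variable "up to" figure.
ps5 = peak_tflops(36, 2.23)
xsx = peak_tflops(52, 1.825)

print(f"PS5: {ps5:.2f} TF, Series X: {xsx:.2f} TF, ratio: {xsx / ps5:.2f}x")
```

On paper that is a gap of roughly 18%, which is the number the rest of the thread argues about.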

Oddvintagechap

Member

I don't understand. What do you want Sony to say? WE HAVE SECRET SAUCE.

I prefer to believe in the "developers received Series X tools later" narrative because it's much more plausible

And also because Digital Foundry knows their stuff when its about tech things

And really, if there was a secret sauce, Sony would be shouting about it.

What's the benefit of keeping this secret sauce in secrecy? This is just dumb

This is coming from someone who will only buy a PS5, btw

Sony did what they had to do. They took their sweet as time. Everyone was bitching about flops, wheres the price, when is the announcement, etc. Sony released a PS5 with a great launch lineup and features everyone likes. The SSD and the controller.

Md Ray

Member

No Vulkan derivatives. It's a GNM derivative, Sony's own low-level API, that apparently gives devs even more control over the hardware than Vulkan.

The one area where PS5 definitely beats the XSX is ROP/fillrate throughput thanks to the higher clock speed (both consoles have 64 ROPs, though PS5 has fewer TMUs)

I'm assuming they also use some sort of Vulkan derivative which may be beating DX12
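The ROP and TMU point above can be put into rough numbers. This is only a sketch under stated assumptions: 64 ROPs on both consoles (as the post says), 4 texture units per CU (the usual RDNA layout, assumed here), and the public clock and CU figures. The helper names are invented for illustration.

```python
def pixel_fillrate_gpix(rops: int, clock_ghz: float) -> float:
    """Theoretical peak pixel fillrate in Gpixels/s: one pixel per ROP per clock."""
    return rops * clock_ghz

def texel_rate_gtex(compute_units: int, clock_ghz: float, tmus_per_cu: int = 4) -> float:
    """Theoretical peak texel rate in Gtexels/s, assuming 4 TMUs per CU."""
    return compute_units * tmus_per_cu * clock_ghz

# Assumed public specs: PS5 36 CUs at up to 2.23 GHz, Series X 52 CUs at 1.825 GHz.
ps5_pix = pixel_fillrate_gpix(64, 2.23)
xsx_pix = pixel_fillrate_gpix(64, 1.825)
ps5_tex = texel_rate_gtex(36, 2.23)
xsx_tex = texel_rate_gtex(52, 1.825)

print(f"Pixel fillrate: PS5 {ps5_pix:.1f} vs XSX {xsx_pix:.1f} Gpix/s")
print(f"Texel rate:     PS5 {ps5_tex:.1f} vs XSX {xsx_tex:.1f} Gtex/s")
```

With equal ROP counts the pixel fillrate simply follows the clock, so PS5 comes out about 22% ahead there, while the wider Series X GPU leads on texel rate.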

Ritsumei2020

Report me for console warring

True. Its a bug with the XSX running at reduced scene complexity (foliage, geometry, textures, lighting....) as well as running at lesser FPS than the PS5. What will happen to the FPS with increased scene complexity after they fix the bug?

4K 120fps Ultra of course,

Its just a matter of sorting out the ‘bugs’.

/s if necessary

Md Ray

Member

Source?

This is a bug. And the dev confirmed it.

danielJackson

Banned

The one area where PS5 definitely beats the XSX is ROP/fillrate throughput thanks to the higher clock speed (both consoles have 64 ROPs, though PS5 has fewer TMUs)

I'm assuming they also use some sort of Vulkan derivative which may be beating DX12

Yeah, that is why PS4 and PS5 have the upper hand vs Xbox: they don't need to care about PC compatibility at all, so they have their own more streamlined, low-level APIs and tools. So no need to use DirectX/Vulkan etc.

They probably have some similarities, but it gives them more freedom to build these tools as they don't have to think about how it affects their PC ports, like MS has to.

All this was clear to people who like to follow the tech world all year, but the Xbox "power is everything" pushers didn't believe that "less is more" if it is used more efficiently.

Both consoles are good enough, but it is the Series S that is the slow bastard and will definitely affect at least Series X games and maybe some multiplatform games too.

"We" also stated for months that the Series S must be sub-1080p, or the Series X must be limited by the Series S, but people didn't believe it.

Boneless

Member

Nah he's just a man who is right sometimes and wrong other times. Let's not demonize people like we are on era.

Isnt Jim doing the demonizing in his articles?

Hawking Radiation

Member

Jason gets mocked and ridiculed for anything he says on this forum. I've been following gaming for many years now and even before the dawn of Restera and there is one thing I learned and that is Jason, Matt, Shinobi are as reliable as they come when it comes to insider news.

Music to my ears.

He was right all along.

I've always waited for these guys to say something and as far as I know they are always right.

They have good sources in the industry which is why I never doubted Jason when he said PS5 has other advantages not communicated to the general public yet.

We have had developers that have stated the numbers don't tell the full story yet you had armchair devs on here shouting 12TF >19TF because overclocked GPU etc etc.

The chest pumping after we learned that PS5 is sitting at 10TF is rather amusing right now considering the '9TF' console is smashing it in multiplats right out of the gate.

Xbox already has a launch problem in that they don't have anything resembling an exclusive to show off the console capabilities. Now the Series X is being trumped and the fucking Series S is being brought down to 720p?! Armchair devs on here told me it's going to play the same games but in 1440p and 120FPS.

We are only into the first month of the machines on the market and already games are going as low as 720p.

FUCK ME.

Xbox have problems and the last thing you want is to stumble getting out of the gates against PlayStation because once PS gets that momentum it's farewell and goodbye.

azertydu91

Hard to Kill

Didn't DF said to reach 120 fps the seres S rez went down at worse to 576p (or something like that)?

I thought this was a serious post until I read this.

We have only a handful of crossplat titles where comparisons can be made and differences are not that jarring. Sony has a lead as its SDK is reportedly more robust and more intuitive at the moment but Microsoft will eventually catch up - since Nadella became CEO all of their dev tools have been world class.

In 2022, after half a cycle, we can once again compare notes on how/whether the PS5 is "superior in ways that have not been communicated". This is the same garbage we hear every console cycle - "blast processing", "the reality synthesizer", "the emotion engine", "the Cell, a supercomputer on a die", etc.

We actually do know where the PS5 is superior: faster i/o speeds, haptic feedback (a game changer), VR backwards-compatibility. As for raw power, the XSX is the superior machine and in the coming years, as more tools come out, documentation is streamlined, etc., that will be ascertained. Same thing happened two generations ago: The Cell was a better processor than the Xenon, but that only really became apparent towards the end of that generation once Cell's complexity was fully understood by all major developers.

This is not rocket science, both consoles have RDNA2 Radeon GPUs and the SX GPU is more powerful. It is what it is, folks. Whether that makes the Series X "better" (a childish notion, but console warring seems to be reaching embarrassing levels) or "worse", well, that's for you to decide.

Hawking Radiation

Member

FIVE HUNDRED AND SEVENTY SIX PEE !!

Didn't DF said to reach 120 fps the seres S rez went down at worse to 576p (or something like that)?

Yes you read it right. On a next gen console in 2020.

John Wick

Member

Music to my ears.

He was right all along.

Remember what that guy from Ready at Dawn said:

Andrea Pessino (@AndreaPessino), Mar 19:

"Dollar bet: within a year from its launch gamers will fully appreciate that the PlayStation 5 is one of the most revolutionary, inspired home consoles ever designed, and will feel silly for having spent energy arguing about "teraflops" and other similarly misunderstood specs."

Looks like a lot of developers agree and aren't scared to say it.

Ritsumei2020

Report me for console warring

FIVE HUNDRED AND SEVENTY SIX PEE !!

Yes you read it right. On a next gen console in 2020.

People trolled the Switch,

Now get ready for 7 years of this

John Wick

Member

A very good post friend!

Jason gets mocked and ridiculed for anything he says on this forum. I've been following gaming for many years now and even before the dawn of Restera and there is one thing I learned and that is Jason, Matt, Shinobi are as reliable as they come when it comes to insider news.

I've always waited for these guys to say something and as far as I know they are always right.

They have good sources in the industry which is why I never doubted Jason when he said PS5 has other advantages not communicated to the general public yet.

We have had developers that have stated the numbers don't tell the full story yet you had armchair devs on here shouting 12TF >19TF because overclocked GPU etc etc.

The chest pumping after we learned that PS5 is sitting at 10TF is rather amusing right now considering the '9TF' console is smashing it in multiplats right out of the gate.

Xbox already has a launch problem in that they don't have anything resembling an exclusive to show off the console capabilities. Now the Series X is being trumped and the fucking Series S is being brought down to 720p?! Armchair devs on here told me it's going to play the same games but in 1440p and 120FPS.

We are only into the first month of the machines on the market and already games are going as low as 720p.

FUCK ME.

Xbox have problems and the last thing you want is to stumble getting out of the gates against PlayStation because once PS gets that momentum it's farewell and goodbye.

By the time MS release their games Sony will be on their next wave when the PS5 will be starting to really show its capabilities. Sony worked with devs especially Epic games and their internal studios regarding the features of PS5

Ritsumei2020

Report me for console warring

A very good post friend!

By the time MS release their games Sony will be on their next wave when the PS5 will be starting to really show its capabilities. Sony worked with devs especially Epic games and their internal studios regarding the features of PS5

Wait until Xbox finds the ‘tools’

Then Sony is done for. The power of the

CoD for example outputs at 4k 120fps HDR 4:2:2 12bits.

Which COD? Pretty sure CW drops to 1080p for 120hz.

geordiemp

Member

Also Jason Schreier is just some guy with an opinion, he should not be someone whose words you take with any authority. He was wrong about both consoles being above 10.7 TFLOPs and wrong about both consoles being like an RTX 2080 power-wise.

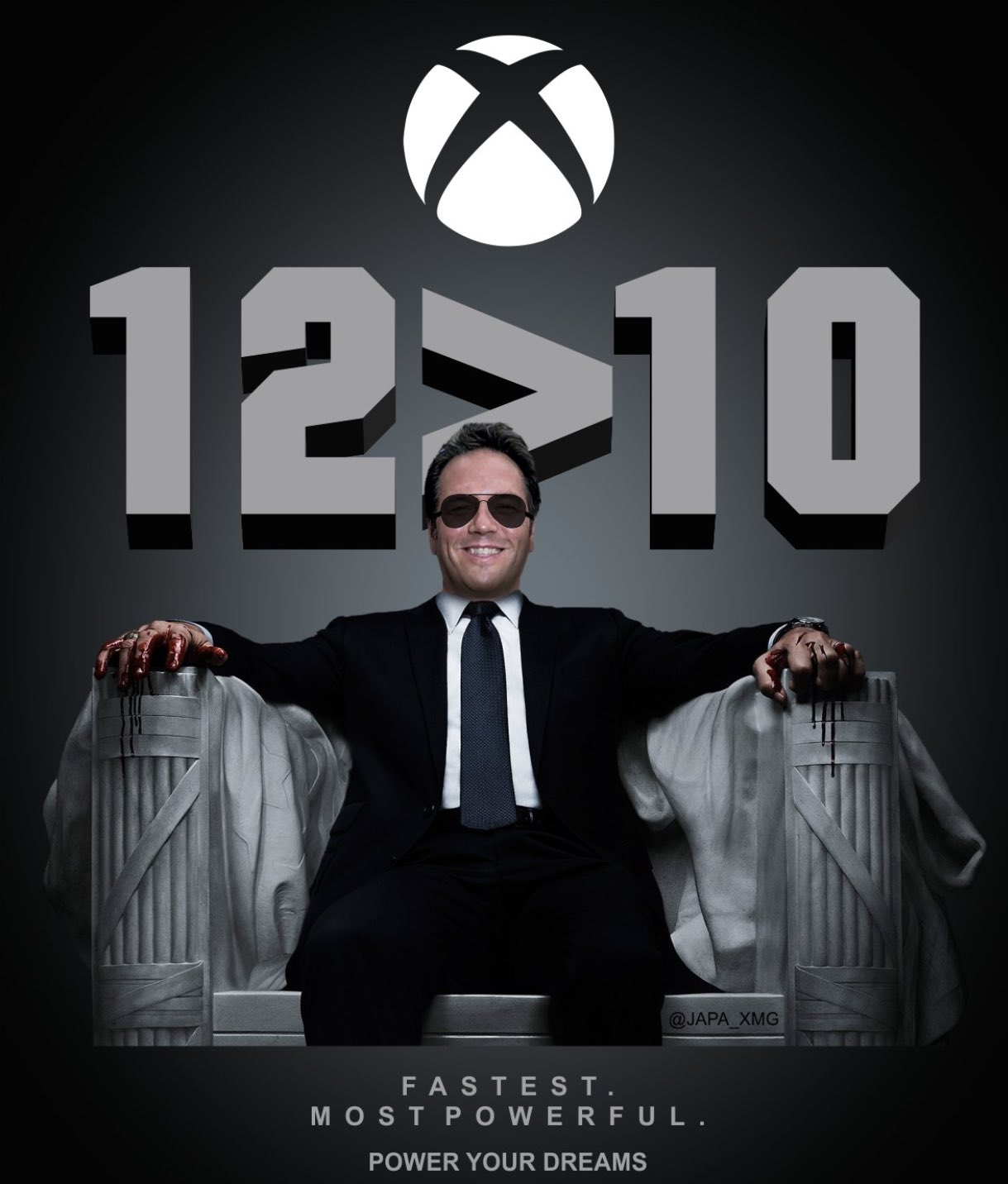

There were some funny memes in this thread, I like this one.

BlitzerRadic

Member

True. Its a bug with the XSX running at reduced scene complexity (foliage, geometry, textures, lighting....) as well as running at lesser FPS than the PS5. What will happen to the FPS with increased scene complexity after they fix the bug?

Well logically, when scene complexity and geometry increases, framerate takes a hit. But I'm no expert or anything.

Or you didn’t distribute workload correctly and use all the CUs now .. so framerate stays the same .. who knows.

Well logically, when scene complexity and geometry increases, framerate takes a hit. But I'm no expert or anything.

Hezekiah

Banned

Sorry I don't get it, why do people think Microsoft put in 6GB of slower speed RAM?

I mean... I called it as soon as Xbox said they were giving the Series X 16GB of VRAM instead of 20GB, because they would have to split the speeds for that to work (and they did). I'm going to call this now: the Series X will run all 16GB at the lower speed, because it's the only reasonable way for developers to use its special-boy dual speeds in a multiplatform world without coding the game from the ground up to use it. Or Microsoft are going to have to allow developers to switch the 6GB off and use just the 10 at the higher speed.

That decision has greatly hamstrung the console, it's the Cell processor issue all over again.

cebri.one

Member

I'd still wait for a proper post-mortem. Right now our biggest hope is someone getting a PS5, opening it and getting a nice photograph of the die to see if there are any architectural differences. That, together with Cerny's patents, could give a good overview of why the PS5 seems to be performing above its expected targets. Although the AMD 6000 series already gives us some hints.

Bojanglez

The Amiga Brotherhood

Games wise, I believe it's called under promising and over delivering, also letting the games do the talking not their marketing execs.

Oooh, so mysterious. Our console is better in so many ways, you just can’t tell and we’re not revealing. Like our HDMI 2.1 connection which may or may not be at HDMI 2.1 performance levels, so mysterious, right??

Bernd Lauert

Banned

FIVE HUNDRED AND SEVENTY SIX PEE !!

Yes you read it right. On a next gen console in 2020.

Just out of curiosity, since the PS5 drops to 900p in that 120hz mode, what resolution would you expect from the Series S, which is not even half as powerful?

NeoIkaruGAF

Gold Member

I know you're probably enjoying yourself too much right now about this, but in that specific case we're still talking about running a game at a frame rate that was a pipe dream on consoles until a week ago, and that no one in their right mind would even expect to be in the Series S version of the game, at all.

FIVE HUNDRED AND SEVENTY SIX PEE !!

Yes you read it right. On a next gen console in 2020.

There's surely better ammo for console warring out there right now.

Radical_3d

Member

What is all this talk about 500p in a 120fps mode? The theory was that the SS would reproduce SX games at 1/4 of the resolution, because the SX was going to be 4K and therefore the SS would be 1080p. But the reality is that 4K is a monstrosity and the consoles can’t hit that. So with games going as low as 1440p in the next generation, the SS surely couldn’t maintain 1080p with just 1/3 of the power. So here come the framerate caps, like in AC Valhalla. But if you can’t cut the framerate, like in a 120fps mode, then of course you have to cut resolution until that 12TF render target fits in a 4TF machine. Basically I think you all know this and I see most of the surprise reactions on this thread like this:

Remember that in the powerful consoles it’s 120fps at 1080p.
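A rough way to sanity-check the scaling argument in this post is sketched below. It assumes (and this is a big simplification, not how real games behave) that the number of pixels a GPU can render per frame is proportional to its teraflops. The 4 TF and 12 TF figures are the ones used in the post, and the function name is made up for illustration.

```python
import math

def equivalent_height(base_height: int, compute_ratio: float) -> float:
    """Vertical resolution with compute_ratio times the pixels of the base
    resolution at the same aspect ratio (pixel count scales with height squared)."""
    return base_height * math.sqrt(compute_ratio)

# A quarter of the pixels of 4K (2160p) is exactly 1080p.
quarter_of_4k = equivalent_height(2160, 1 / 4)

# One third of the compute (4 TF vs 12 TF, as in the post) applied to
# a 1440p target and to a 1080p 120fps target.
third_of_1440p = equivalent_height(1440, 4 / 12)
third_of_1080p = equivalent_height(1080, 4 / 12)

print(f"1/4 of 4K pixels:    ~{quarter_of_4k:.0f}p")
print(f"1/3 of 1440p pixels: ~{third_of_1440p:.0f}p")
print(f"1/3 of 1080p pixels: ~{third_of_1080p:.0f}p")
```

Under that naive assumption, a 1080p 120fps mode on the bigger consoles lands somewhere around 600p on a machine with a third of the compute, which is in the same ballpark as the 576p figure people are reacting to.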

SLB1904

Banned

For once? Lmfao

Dam. Jason was right for once.

Thirty7ven

Banned

Life comes at you fast.

assurdum

Banned

It's not secret sauce. A better development kit and a more balanced system is no secret sauce; it can beat more powerful but less balanced hardware. I'm not saying it's always the case, but the first wave of multiplats showed that Sony wasn't wrong at all.

I prefer to believe in the "developers received Series X tools later" narrative because it's much more plausible

And also because Digital Foundry knows their stuff when its about tech things

And really, if there was a secret sauce, Sony would be shouting about it.

What's the benefit of keeping this secret sauce in secrecy? This is just dumb

This is coming from someone who will only buy a PS5, btw

STARSBarry

Gold Member

Sorry I don't get it, why do people think Microsoft put in 6GB of slower speed RAM?

err because they have... 10GB of the RAM is at 560GB/s while 6GB is at 336GB/s. As you might be able to tell, 560GB/s and 336GB/s are different speeds, hence why people "think" that there are different speeds, although it's less a "think" and more an actual honest-to-god fact.

Now if you want to know why this is bad, it's fairly complicated, but the main reason is that very VERY few products would ever do this and the ONLY benefit is cost; all the negatives are how it complicates everything involving its use. It is not normal for hardware to have two different speeds of RAM, and developers and tools are not experienced with this constraint. There is also the issue that you have 10GB of one and 6GB of the other. Have you ever heard of people putting two different sized RAM sticks in a system before? Yeah, that further complicates the issue.

While these are not physically different sticks, they are separate parts of the stick with different speeds. It would make it a lot easier to develop around if they were; sadly this is not an option with 16GB, as it would need to be 20GB to allow for this.

Hezekiah

Banned

Yeah I knows it's a negative, I was wondering why they did it, thought it was a cost thing but wasn't sure.

ethomaz

Banned

Outputs at 4k 120Hz HDR 4:2:2 12bits all the time in 120Hz TVs.

Which COD? Pretty sure CW drops to 1080p for 120hz.

He is talking about the HDMI bandwidth and not internal render that is indeed 1080p for 120Hz mode.

ethomaz

Banned

It is probably cost.

Yeah I knows it's a negative, I was wondering why they did it, thought it was a cost thing but wasn't sure.

They wanted a 320-bit bus for better bandwidth, but a 320-bit bus needs a multiple of 10 RAM chips of the same size to work... that means 10GB or 20GB total memory.

10GB was too low.

20GB too expensive.

So they used different chip sizes to reach 16GB with a multiple of 10 chips, which ended up creating the access issue.

I believe the idea is to put critical render data in the 10GB with the faster speed and non-critical data (audio for example) in the 6GB with the slower speed.

The key, I believe, is that devs need to choose what to allocate in the fast and slow parts of the RAM... there is no magical tool that can decide what data goes to the slow part and what goes to the fast part if devs don't say where data will be allocated.

In theory, games that use less than 10GB of RAM can be fully allocated in the faster part... the added work only exists in games that use more than 10GB.

BTW we don’t have evidence that this is causing the Series X's low performance compared to PS5, because I believe these games are not using 10GB of RAM.
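The 560GB/s and 336GB/s figures discussed above fall out of simple bus arithmetic. The sketch below assumes the publicly reported Series X layout of ten GDDR6 chips (six 2GB and four 1GB), each on a 32-bit channel at 14 Gbps per pin; those specifics and the helper name are assumptions for illustration, not something stated in this thread.

```python
def bandwidth_gbs(chips: int, bits_per_chip: int = 32, gbps_per_pin: float = 14.0) -> float:
    """Peak bandwidth in GB/s for a region striped across `chips` GDDR6 chips."""
    return chips * bits_per_chip * gbps_per_pin / 8

# Assumed layout: six 2GB chips plus four 1GB chips on a 320-bit bus.
chip_sizes_gb = [2] * 6 + [1] * 4

# The first 1GB of every chip is striped across all ten chips (fast region).
fast_chips = len(chip_sizes_gb)
fast_gb = fast_chips * 1
# The remaining capacity only exists on the six larger chips (slow region).
slow_chips = sum(1 for size in chip_sizes_gb if size > 1)
slow_gb = sum(size - 1 for size in chip_sizes_gb)

fast_bw = bandwidth_gbs(fast_chips)
slow_bw = bandwidth_gbs(slow_chips)

print(f"Fast region: {fast_gb} GB at {fast_bw:.0f} GB/s")
print(f"Slow region: {slow_gb} GB at {slow_bw:.0f} GB/s")
```

Under these assumptions the "slow" 6GB is not slower memory as such: it is only reachable through six of the ten chips, so fewer channels work in parallel.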

Romulus

Member

Maybe so, but they were eventually correct. I was a solid 360 boy until around 2011 when the quality of PS3 exclusives compelled me to switch. And I haven’t seen anything to make me switch back.

I saw it much differently. I saw it as the best devs in the world finally figuring out how to polish a turd. And if the PS3 was even remotely easy to work with, we would have gotten a full generation of good looking games, not 2 years' worth.

I just feel like if you moved all the Sony devs over to 360 in 2006ish, the results would have been far, far better there. Xbox just had no prominent developers to show off their hardware. And I would argue also that most impressive ps3 games weren't much more than great art, animation, and tricks, but super linear with forced camera controls to alleviate hardware taxation. Almost all the exclusives ran at 30fps too, or below.

cebri.one

Member

I don't think there is any evidence, apart from some complaints on Twitter, of the XBSX memory pool being an issue for games. It's probably more cumbersome to develop for, but I don't think it is anywhere near the XBO or PS3 situation.

I do have an interest in computer hardware from an enthusiast perspective and do some 3D rendering from time to time, but my knowledge is limited on this matter so I may be wrong. I do think that the issue, if there is any, may be with the XSX GPU: if, as some speculate here, 14 CUs per Shader Array is not the optimal configuration, I wouldn't be surprised if the command processor is having issues giving tasks efficiently to the CUs. If the front end of the CU is not able to efficiently distribute the work then MS has issues, but they may be solvable.

Mesh Shaders, as far as I'm aware, are trying to simplify as much as possible the process between the input assembler and the rasterization process, given that most of the hardware there is programmable, which is where the CUs come into play. So Mesh Shaders could bring additional efficiency to how that data is fetched into the CUs.

However there are still some hardware limitations that I don't know how they would impact performance. For example the L1 cache. There is one MB of L1 per SA so XBSX here has less cache bandwidth as a) it is running 22% slower and b) it has to be shared among more CUs, which means way more contention, reducing the effective bandwidth even more.

Then there are other units that are running faster on PS5 like the ROPs or the Rasterizers that are fixed per SA. So who knows, I think there is still a lot to learn about both consoles.

Shmunter

Member

Bad ram setup is being discussed on Beyond3d. Speculation is 3rd party will suffer forever as no other platform has the limitations so ports will take a hit if not reworked.

if this is indeed the case, XsX would need to be the lead platform to layout the games ram management upfront.

dead monkey uk

Member

You realize you will see every game in 1080p as your output right?

FIVE HUNDRED AND SEVENTY SIX PEE !!

Yes you read it right. On a next gen console in 2020.

AgentP

Thinks mods influence posters politics. Promoted to QAnon Editor.

MS had two gens of two memory pools, sdram & esram, then copied the ps4 with the xb1x. Then went back to effectively two pools. Strange.

One thing that hit me, the same feature that enables quick resume might be hurting performance. They are using virtual machines right? This abstraction has overhead.

oldergamer

Member

Dude that is not correct. It's one unified pool, just some of it runs slower and is meant for components that don't use a lot of bandwidth. It's not the same as sdram, or esram, or even PS3.

MS had two gens of two memory pools, sdram & esram, then copied the ps4 with the xb1x. Then went back to effectively two pools. Strange.

One thing that hit me, the same feature that enables quick resume might be hurting performance. They are using virtual machines right? This abstraction has overhead.

Kumomeme

Member

Even when XSX solves its tools issue, its tools and API are still designed for more than one SKU (XSS and PC), whereas the PS5's API is designed for one specific SKU, without the need to worry about the wide range of PC specs out there, for example. The PS5 API could still have advantages here.

Even the Crytek devs said similar stuff before about how Xbox uses DirectX compared to PS5. This has been the difference between console and PC performance all this time.

It might be an iOS vs Android situation. Not saying the XSX can't beat the PS5; on paper it should have the advantage in performance, but the gap is probably not as big as people thought in the first place and is going to shrink easily (the whole 10 vs 12 TF debate).

MasterCornholio

Member

MS had two gens of two memory pools, sdram & esram, then copied the ps4 with the xb1x. Then went back to effectively two pools. Strange.

One thing that hit me, the same feature that enables quick resume might be hurting performance. They are using virtual machines right? This abstraction has overhead.

I never thought of that. It could be why Sony doesn't use it with the PS5 other than the small storage size.

Omega Supreme Holopsicon

Member

The secret sauce might be infinity cache, full rdna2 with hints of rdna3.

It's not secret sauce. Better development kit and more balanced system is nothing of secret sauce, it can beat a more powerful hardware less balanced. I'm not saying it's always the case, but first wave of multiplat showed how Sony wasn't wrong at all.

assurdum

Banned

Infinity cache can't be on ps5 the SOC is too small. But I suspect infinity cache is inspired to the ps5 cache system, so it could work in a very similar way.

The secret sauce might be infinity cache, full rdna2 with hints of rdna3.

Hawking Radiation

Member

Just out of curiosity, since the PS5 drops to 900p in that 120hz mode, what resolution would you expect from the Series S, which is not even half as powerful?

Even Richard Leadbetter stated in his Digital Foundry review on Dirt 5 that Microsoft need to change their messaging regarding Series S and its 1440p at up to 120FPS marketing because its highly optimistic. It can't even get there in a cross gen launch game.

Omega Supreme Holopsicon

Member

We havent seen die shots, ps5 soc is nearly as big as series x soc. It has very high bandwidth, it might have a smaller but relatively big cache capable of infinity like functions.

Infinity cache can't be on ps5 the SOC is too small. But I suspect infinity cache is inspired to the ps5 cache system, so it could work in a very similar way.

ScaryBrandon

Banned

PS5 is an xbox series x with similar but 20% weaker hardware. There is no secret sauce or secret features.

A very good post friend!

By the time MS release their games Sony will be on their next wave when the PS5 will be starting to really show its capabilities. Sony worked with devs especially Epic games and their internal studios regarding the features of PS5

FIREKNIGHT2029

Member

Jason was right all along and so were some insiders. I knew this would be the case. Cerny tried to warn us all but fanboys wanted to simplify everything to Tflops.

I’m not too surprised by the results we are seeing since I actually believed Jason Schrier and the few insiders that told us Tflops didn’t tell the whole story, what really surprises me a bit is the Series S. I knew the Series S was going to be a potato that wouldn’t hit the 1440p standard MS claimed it would but I didn’t think we would be seeing resolutions as low as 576p. I thought 900p and 720p would be a thing but 576p is 360/PS3 era shit