So, I'm a fairly recent 980 Ti owner, and the public back-and-forth and kinda clickbait nature of this report have led me to try to understand the facts and implications with my limited tech knowledge and tiny monkey brain.

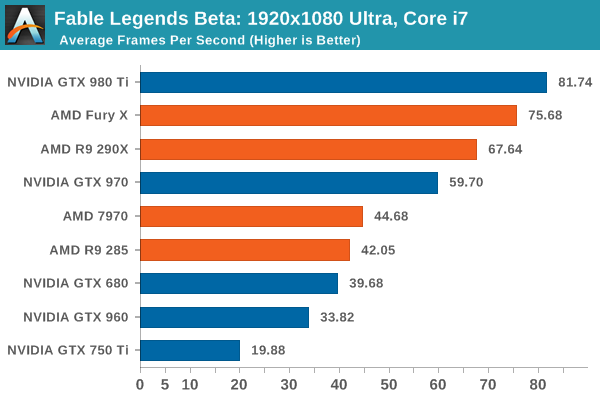

Correct me if I'm wrong, but here's what I've gathered (and grossly generalised): Maxwell (and at this point probably Pascal) GPUs have hardware-level deficiencies compared to modern AMD GPUs when it comes to async compute. In practice, async-heavy engines and software will deliver much bigger framerate gains on AMD than on Nvidia. The real-world impact of this, especially in video games, is still up in the air, as it depends entirely on how widely engines adopt async compute and how heavily they use it. The safest bet is indeed AMD, as it's simply better to have it than not, and logic suggests that async performance will become more important as time goes by. But how long it will take for async performance to become a strong metric for video games and engines, and how far they'll lean on async to the detriment of Nvidia's Maxwell, is anyone's guess at this point.

Wrong/right/butts?

Butts.

A. We don't know much about Pascal, so anything said about it right now isn't even speculation, just pure guesswork.

B. Maxwell is less efficient at async compute than GCN. That's true and has been known for some time; there's nothing new here. Whether you call this a "hardware-level deficiency" or a "conscious architectural decision stemming from the rest of the Maxwell pipeline" is up to whoever makes the statement, since it can be seen as either, depending on one's point of view and the subject of discussion.

C. Let's be clear: the discussion about async compute in DX12 is purely about gaming, as it assumes that compute queues run in parallel with the graphics queue, and graphics means gaming. Async compute in pure compute mode is a different beast, as it doesn't involve any context switches at all, and NV actually did it first, in the GK110 GPU.

D. Gains from async compute depend heavily on the loads running in both the graphics and compute threads. It's probably correct to expect GCN to show bigger relative gains from running compute stuff asynchronously, because its ALU utilization on a typical gaming workload has always been lower than that of any NV GPU from G80 to GM200.

This is the main reason it makes sense for AMD to have a complex "ACEs" frontend in their architecture, which launches work on the GPU's idle resources while the graphics queue is busy with something else. It's also the main reason I'm not at all sure that improving the same frontend in Pascal would lead to the same gains as on GCN: NV's GPUs simply have fewer idle units on a typical graphics workload.
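To make point D concrete, here's a toy model in Python (a sketch, not how any real GPU schedules work): the graphics pass leaves some fraction of the ALUs idle, async compute can soak up that idle capacity, and whatever compute work doesn't fit runs afterwards. The function names and the utilization figures used below are hypothetical inputs picked for illustration, not measurements of GCN or Maxwell.

```python
def frame_time(graphics_ms: float, compute_ms: float,
               alu_utilization: float, run_async: bool) -> float:
    """Toy model: frame time (ms) for one graphics pass plus one compute pass.

    graphics_ms     -- time the graphics pass takes on its own
    compute_ms      -- time the compute pass takes with the whole GPU to itself
    alu_utilization -- hypothetical fraction (0..1) of ALUs busy during graphics
    run_async       -- if True, compute soaks up idle ALU capacity during graphics
    """
    if not run_async:
        # Serial submission: compute runs after graphics finishes.
        return graphics_ms + compute_ms
    # Idle ALU capacity during graphics, expressed in "full-GPU milliseconds".
    idle_capacity = (1.0 - alu_utilization) * graphics_ms
    # Whatever compute work doesn't fit into the idle slots runs afterwards.
    leftover = max(0.0, compute_ms - idle_capacity)
    return graphics_ms + leftover


def async_gain(graphics_ms: float, compute_ms: float, alu_utilization: float) -> float:
    """Relative speedup from async compute, e.g. 0.1 means 10% faster."""
    serial = frame_time(graphics_ms, compute_ms, alu_utilization, run_async=False)
    overlapped = frame_time(graphics_ms, compute_ms, alu_utilization, run_async=True)
    return serial / overlapped - 1.0
```

With made-up inputs of a 12 ms graphics pass and a 4 ms compute pass, `async_gain(12.0, 4.0, 0.6)` comes out to roughly 0.33, while `async_gain(12.0, 4.0, 0.9)` is only about 0.08. The model ignores scheduling overhead and contention for caches and bandwidth, so it overstates real gains; the point is only that the less idle a GPU is, the less there is for async compute to fill.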

But that assumes Pascal won't shift its h/w utilization further toward compute and away from graphics, which seems to be the general trend anyway. The real question is whether launching stuff asynchronously should lead to more efficient h/w load (a la GCN) or should simply avoid slowing down everything else already running on the GPU at peak load (a la Maxwell). I fully expect NV to take the second route, meaning they'll improve the frontend so that async compute runs with minimal overhead on state changes (i.e. without slowing other things down), but their gains from running stuff asynchronously are likely to remain small (this is completely out of nowhere, but I expect them to be in the range of +5-10% on Maxwell on average).
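The two routes from the paragraph above can be sketched by adding a per-switch overhead to a toy overlap model. This is a hypothetical Python model: `switches` and `switch_cost_ms` are made-up parameters standing in for whatever state-change cost a real frontend has. When there's little idle capacity to fill, async only pays off if switching is nearly free, which is the "don't slow anything down" goal.

```python
def serial_frame_time(graphics_ms: float, compute_ms: float) -> float:
    # The compute pass simply runs after the graphics pass.
    return graphics_ms + compute_ms


def async_frame_time(graphics_ms: float, compute_ms: float,
                     alu_utilization: float, switches: int,
                     switch_cost_ms: float) -> float:
    # Compute fills the idle ALU capacity left over by graphics...
    idle_capacity = (1.0 - alu_utilization) * graphics_ms
    leftover = max(0.0, compute_ms - idle_capacity)
    # ...but every graphics/compute state change costs a fixed amount of time.
    return graphics_ms + leftover + switches * switch_cost_ms


def break_even_switch_cost(graphics_ms: float, compute_ms: float,
                           alu_utilization: float, switches: int) -> float:
    """Largest per-switch cost (ms) at which async is still no slower than serial."""
    saved = serial_frame_time(graphics_ms, compute_ms) - async_frame_time(
        graphics_ms, compute_ms, alu_utilization, switches, 0.0)
    return saved / switches
```

With made-up numbers (12 ms graphics, 4 ms compute, 10 switches), a GPU that is 95% busy during graphics breaks even at about 0.06 ms per switch, while one that is 60% busy can afford about 0.4 ms. Under this toy model, a frontend built for the second route mainly has to drive the switch cost toward zero, and even then the gain stays bounded by the small idle capacity.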

E. "Engines" have less to do with efficient async compute than the shaders these engines actually run. Running compute stuff asynchronously is very easy in D3D12; making sure you actually get some performance out of it on most modern D3D12 PC hardware is damn hard and will require effort from the game developer.

F. "Safest bet" on what, exactly? There's no requirement to support async compute in one particular way or another. The driver just has to support it in D3D12, but exactly how it launches the threads submitted to async queues is entirely up to the driver and the h/w it's running on. If, say, in GCN2 AMD improves their typical h/w utilization on graphics workloads to the point where running compute stuff asynchronously alongside graphics no longer brings gains as big as on GCN1, what exactly would that mean?

Async compute is just a way of running programs on a GPU. You don't have to run them in parallel to get DX12 compatibility, and, more importantly, you don't have to run them in parallel to take the performance crown and beat your competition. All of this depends entirely on the GPU architectures in question.
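Point F and the paragraph above can be illustrated with a toy "driver" that takes the same graphics and compute queue submissions and executes them under two different policies. It's a hypothetical Python sketch: the job representation and both policy functions are made up, the jobs are assumed to be independent (real APIs express cross-queue dependencies with fences), and interleaving stands in for genuinely concurrent execution. Both policies produce the same results; only the execution order, and therefore the performance on a real GPU, differs.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical job representation: (name, work). Jobs are assumed independent.
Job = Tuple[str, Callable[[], int]]


def run_serialized(graphics: List[Job], compute: List[Job]) -> Tuple[Dict[str, int], List[str]]:
    """Policy A: drain the graphics queue, then the compute queue."""
    results: Dict[str, int] = {}
    order: List[str] = []
    for name, work in graphics + compute:
        results[name] = work()
        order.append(name)
    return results, order


def run_interleaved(graphics: List[Job], compute: List[Job]) -> Tuple[Dict[str, int], List[str]]:
    """Policy B: alternate between queues, a stand-in for running them concurrently."""
    results: Dict[str, int] = {}
    order: List[str] = []
    for i in range(max(len(graphics), len(compute))):
        for queue in (graphics, compute):
            if i < len(queue):
                name, work = queue[i]
                results[name] = work()
                order.append(name)
    return results, order


if __name__ == "__main__":
    gfx = [("shadow_pass", lambda: 1), ("gbuffer_pass", lambda: 2)]
    comp = [("light_culling", lambda: 3), ("ssao", lambda: 4)]
    serial_results, serial_order = run_serialized(gfx, comp)
    mixed_results, mixed_order = run_interleaved(gfx, comp)
    print(serial_results == mixed_results)  # True
    print(serial_order)
    print(mixed_order)
```

Either policy is a valid way to honour the submissions, which is the sense in which "supporting async compute" says nothing about whether work actually overlaps.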

Has anyone read the Guru3D article on the topic?

They're saying Nvidia does have async compute, but the scheduler is in software, not hardware:

http://www.guru3d.com/news-story/nvidia-will-fully-implement-async-compute-via-driver-support.html

The frontend command dispatch unit is there on Maxwell chips, so what "software" are we talking about here? There's a lot going on in the driver's "software" compiler on both Maxwell and GCN. It's wordplay to call one solution "software" and the other "hardware", since all solutions use drivers that run on CPUs. The only sensible metric is the relative CPU load during job submission, and last time I checked, submission was several times faster on NV GPUs. This will certainly change in DX12/Vulkan, but there are no indications that NV will become slower than AMD here. So let's wait for some benchmarks.
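On measuring CPU load during job submission: here's a minimal Python harness for that kind of comparison. `submit` is a hypothetical stand-in for whatever call actually records and submits work through the driver (a real test would wrap the D3D12 or Vulkan submission path of a native program instead), and `fake_submit` just burns CPU. The harness averages CPU time per call using `time.process_time()`, which counts CPU time for the whole process, since a driver may do part of its work on worker threads.

```python
import time
from typing import Callable


def cpu_ms_per_submission(submit: Callable[[], None], iterations: int = 1000) -> float:
    """Average CPU time in milliseconds that the process spends per submit() call."""
    if iterations <= 0:
        raise ValueError("iterations must be positive")
    start = time.process_time()  # user + system CPU time of the whole process
    for _ in range(iterations):
        submit()
    elapsed_s = time.process_time() - start
    return elapsed_s * 1000.0 / iterations


def fake_submit() -> None:
    # Hypothetical stand-in for a driver submission call: just burns some CPU.
    sum(range(10_000))


if __name__ == "__main__":
    print(f"{cpu_ms_per_submission(fake_submit):.4f} ms CPU per submission")
```

The idea is to run the same harness against each vendor's driver on the same CPU and compare the ratios; absolute numbers from a stand-in like this say nothing about real drivers.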