Can HBM3 ever hope to compete with GDDR6 in dollars per GB?

Here's the gist of it...

"HBM3: changing the formula.

HBM1 and HBM2 were two newcomers to the market, and HBM2 fixed some limitations of first-gen HBM1, such as the maximum memory size. You may remember that the original Fury cards were limited to 4GB, because a single 1024-bit package had a maximum size of 4GB at the time. To get 8GB of HBM1 you needed two 1024-bit packages, which would have raised the cost of Fury.

HBM3 comes with more refinements: higher capacity per package, faster clocks and, more importantly, an optional narrower 512-bit bus. This last bit is the game changer. The narrower bus hits the sweet spot where a silicon interposer is no longer required, so a much cheaper organic interposer can be used instead.

The HBM consortium decided on this to widen adoption of the standard. By pairing a 512-bit bus with the higher clocks HBM3 allows, the new standard can achieve the same high bandwidth at a much lower cost, because it does not require a silicon interposer at all.
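To put rough numbers on that trade-off: peak bandwidth is just bus width times per-pin data rate, divided by 8 to get bytes. Here is a minimal Python sketch of that arithmetic. The 2.0 Gbit/s per-pin rate is my own illustrative assumption, not a figure from the article, and the function names are made up for the example.

```python
def peak_bandwidth_gb_per_s(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth of one memory package in GB/s.

    bus_width_bits: number of data pins (e.g. 1024 or 512).
    pin_rate_gbps:  data rate per pin in Gbit/s (assumed value, see below).
    """
    return bus_width_bits * pin_rate_gbps / 8  # 8 bits per byte


def pin_rate_needed_gbps(bus_width_bits: int, target_gb_per_s: float) -> float:
    """Per-pin data rate (Gbit/s) a bus of this width needs to hit the target."""
    return target_gb_per_s * 8 / bus_width_bits


# Assumption for illustration only: a full-width bus running at 2.0 Gbit/s per pin.
ASSUMED_PIN_RATE_GBPS = 2.0

wide_bus_bandwidth = peak_bandwidth_gb_per_s(1024, ASSUMED_PIN_RATE_GBPS)
narrow_bus_pin_rate = pin_rate_needed_gbps(512, wide_bus_bandwidth)

print(f"1024-bit bus at {ASSUMED_PIN_RATE_GBPS} Gbit/s per pin: {wide_bus_bandwidth} GB/s")
print(f"512-bit bus needs {narrow_bus_pin_rate} Gbit/s per pin to match it")
```

With those assumed numbers, the 512-bit bus has to run each pin twice as fast as the 1024-bit bus to deliver the same bandwidth, which is where the higher clocks come in.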

How can this be implemented?

The organic interposer is the same packaging material used today in MCM designs like EPYC and Threadripper CPUs, and even in regular Ryzen and Core CPUs. It can handle higher-density routing than a PCB at an acceptable cost, which is why it is used to host the CPU or GPU die and reroute the die's connections to the package, where it connects to the PCB.

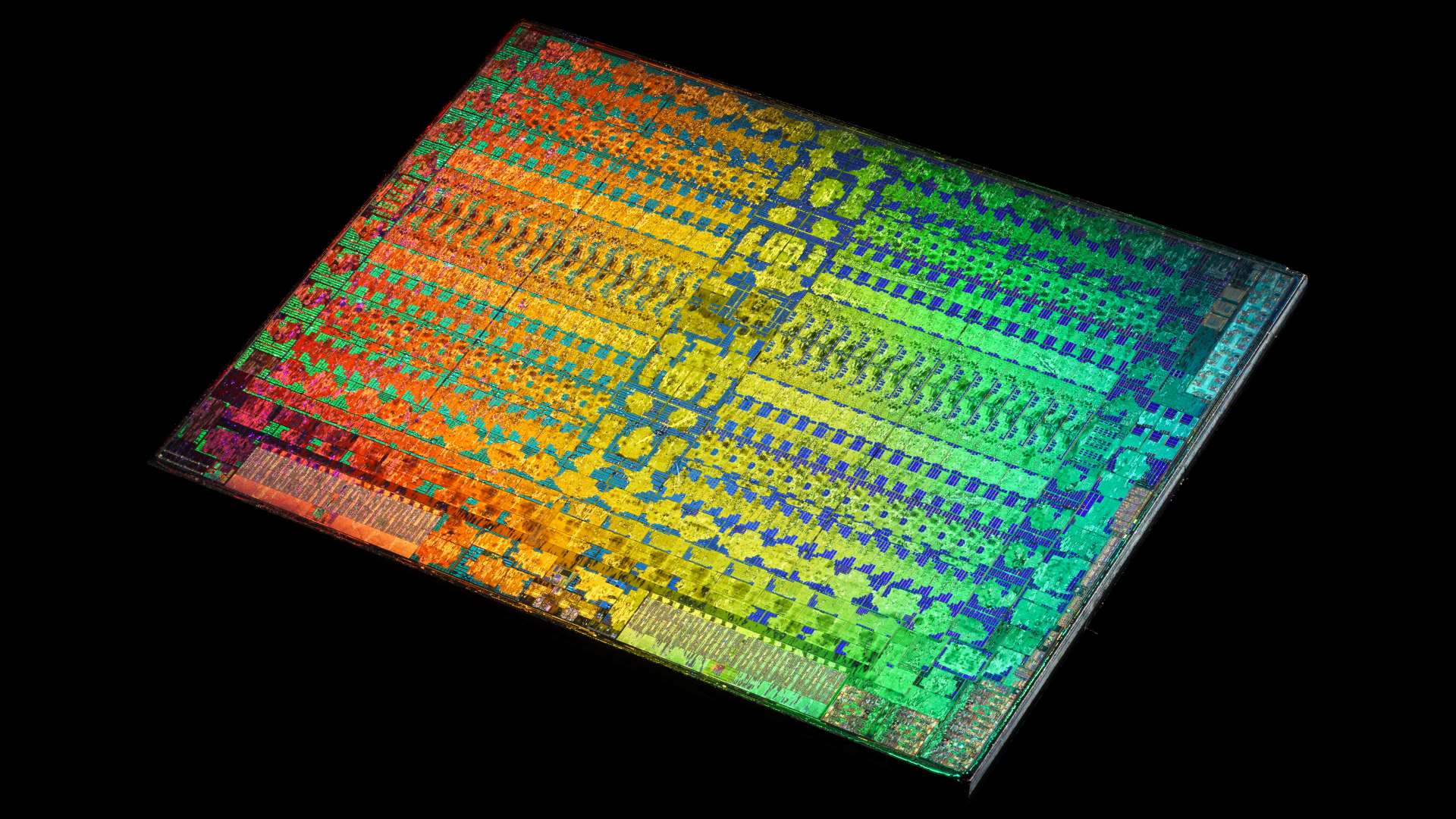

HBM3 will not sit on the PCB the way GDDR does now. Instead it will sit on the same organic interposer as in any other MCM design. Imagine a Threadripper CPU package with one of the chiplets replaced by HBM3 memory: that is now very possible with HBM3, without the additional cost of a silicon interposer. This also allows a lower Z-height than was possible with a silicon interposer. I used Threadripper as an example here not because it will get HBM3 (we don't know), but because it uses the same organic interposer packaging that would allow HBM3 to be used.

On top of that, Samsung is also trying to reduce the cost further by removing the buffer die, which exists on all HBM chips, and by reducing the number of TSVs. That would allow the chip to be used even in mainstream GPUs, especially mobile ones where space and power savings are crucial. Removing the buffer die and reducing the TSVs will affect total bandwidth, but Samsung plans to increase the per-pin bus speed to compensate. Current projections for the cheapest of these new HBM3 modules reach 200GB/s, compared with HBM2's current 256GB/s.
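As a quick back-of-the-envelope check on those two bandwidth figures, here is a small Python sketch of what they would imply per pin. It assumes (my assumption, the article does not say so explicitly) that the 200GB/s low-cost module uses the optional 512-bit bus and that the 256GB/s HBM2 module uses the full 1024-bit bus. The function name is made up for the example.

```python
def required_pin_rate_gbps(target_gb_per_s: float, bus_width_bits: int) -> float:
    """Per-pin data rate in Gbit/s needed to reach a target bandwidth in GB/s."""
    return target_gb_per_s * 8 / bus_width_bits  # 8 bits per byte


# Bandwidth figures quoted in the article.
LOW_COST_HBM3_GB_PER_S = 200.0
HBM2_GB_PER_S = 256.0

# Bus widths are assumptions for this sketch, not confirmed specifications.
ASSUMED_LOW_COST_BUS_BITS = 512
ASSUMED_HBM2_BUS_BITS = 1024

low_cost_pin_rate = required_pin_rate_gbps(LOW_COST_HBM3_GB_PER_S, ASSUMED_LOW_COST_BUS_BITS)
hbm2_pin_rate = required_pin_rate_gbps(HBM2_GB_PER_S, ASSUMED_HBM2_BUS_BITS)
bandwidth_ratio = LOW_COST_HBM3_GB_PER_S / HBM2_GB_PER_S

print(f"Low-cost HBM3: {low_cost_pin_rate} Gbit/s per pin on {ASSUMED_LOW_COST_BUS_BITS} bits")
print(f"HBM2:          {hbm2_pin_rate} Gbit/s per pin on {ASSUMED_HBM2_BUS_BITS} bits")
print(f"Low-cost HBM3 delivers {bandwidth_ratio:.0%} of the HBM2 figure")
```

Under those assumptions the cheaper module gives up roughly a fifth of the bandwidth while driving each pin noticeably faster, which fits the idea of raising per-pin speed to make up for the narrower, simpler package.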

Long time to develop, coming in the 2019/2020 timeframe:

Interestingly, this new lower-cost HBM3 has been known about for a while, but it seems we forgot about it. Samsung announced it back in 2016 along with GDDR6, but availability is only planned for the 2019/2020 timeframe. So it will be interesting to see what late 2019 or 2020 APUs and GPUs look like. AMD could do marvels by then. Even HBM3 as an L4 cache when the iGPU is not in use is something to think about, if it's feasible."

Finally, this does not mean HBM3 will be inferior to HBM2 or that high-end applications will not use it. It means HBM3 will allow more options, including lower-cost ones. HBM3 will still be available with the full 1024-bit bus, which requires a silicon interposer, for high-end applications where cost is not an issue. But a lower-cost option will also be available to help with market penetration wherever HBM's advantages in power and size per unit of memory bandwidth matter most.