Coulomb_Barrier

Member

TEH BIAS.

Well shit. I've changed my mind now, not going to sell that 980 I just bought.

TEH BIAS.

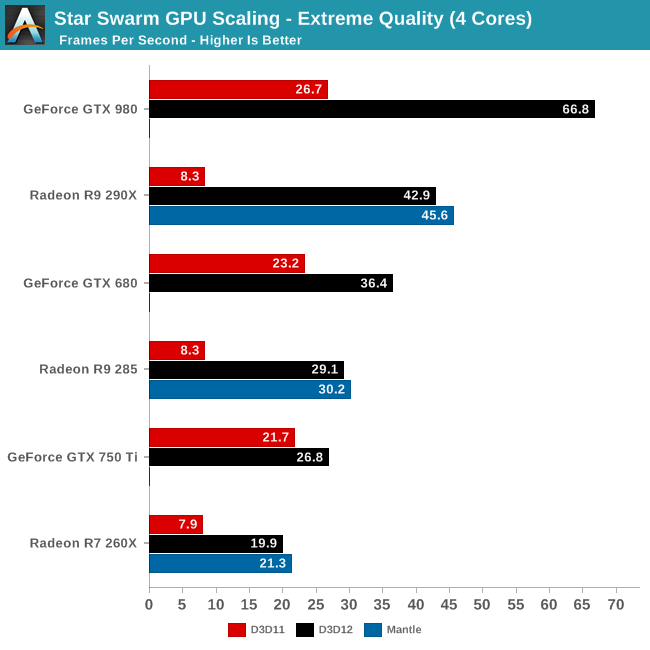

This pic should be on OP, imo.

So you can just program async, with a penalty on nvidia hardware?

Maybe the performance gains can nullify the performance penalty.

they don't seem to be nearly as beneficial as async compute. also, consoles not supporting them pretty much makes them useless. you will see the occasional 2 titles a year using them to do almost nothing to enhance the visuals. they will probably become the new tessellation.

What does this prove, exactly?

For people wondering why Nvidia is doing a bit better in DX11 than DX12: that's because Nvidia optimized their DX11 path in their drivers for Ashes of the Singularity. With DX12 there are no tangible driver optimizations, because the game engine speaks almost directly to the graphics hardware, so none were made. Nvidia is at the mercy of the programmers' talents as well as their own Maxwell architecture's thread parallelism performance under DX12. The developers programmed for thread parallelism in Ashes of the Singularity in order to better draw all those objects on the screen. Therefore what we're seeing with the Nvidia numbers is the Nvidia draw call bottleneck showing up under DX12. Nvidia works around this with its own optimizations in DX11 by prioritizing workloads and replacing shaders. Yes, the Nvidia driver contains a compiler which recompiles and replaces shaders that are not fine-tuned to their architecture, on a per-game basis. Nvidia's driver is also multi-threaded, making use of idling CPU cores in order to recompile/replace shaders. The work Nvidia does in software under DX11 is the work AMD does in hardware under DX12, with their Asynchronous Compute Engines.

I like this quote: where Nvidia's drivers gave them big boosts before, they're losing in DX12 because drivers aren't nearly as important.

Pretty much what AMD has been on about for the past 3 years. While Nvidia focused on the market as-is, AMD was getting in early on DX12 and it's cost them dearly in their already low market share. Now that DX12 is the current focus, I'm sure Pascal won't have these problems and it honestly can't if they want to compete. When Pascal comes around, we'll probably start seeing the first DX12 games hit the market and if their top-tier card is competing or even winning, that's all anyone is going to be talking about, not Maxwell performance.
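For anyone trying to picture why async compute helps GCN so much: under DX12 an engine can submit graphics and compute work on separate command queues, and hardware that can actually run them concurrently can slot compute work into shader time the graphics work leaves idle. The Python below is a purely hypothetical toy model (the function names, the `idle_fraction` knob and the millisecond figures are all made up for illustration, not Oxide's code or anything measured); it only shows that the payoff depends on how much idle GPU time there is to fill.

```python
def frame_time_serial(graphics_ms: float, compute_ms: float) -> float:
    """Toy model: graphics and compute run back to back on one queue."""
    return graphics_ms + compute_ms


def frame_time_async(graphics_ms: float, compute_ms: float, idle_fraction: float) -> float:
    """Toy model of async compute (an assumption, not a real GPU scheduler).

    While graphics runs, a fraction `idle_fraction` of the GPU sits idle and
    can be used by compute work. Compute cost is in ms of full-GPU time.
    """
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    # Compute work soaked up by otherwise idle units during the graphics pass
    absorbed = min(compute_ms, graphics_ms * idle_fraction)
    # Any compute left over still runs afterwards on the whole GPU
    return graphics_ms + (compute_ms - absorbed)


if __name__ == "__main__":
    print(frame_time_serial(12.0, 4.0))       # 16.0
    print(frame_time_async(12.0, 4.0, 0.25))  # 13.0
    print(frame_time_async(12.0, 4.0, 0.5))   # 12.0
```

With `idle_fraction=0.0` the model collapses back to the serial case, which is one rough way to picture hardware or a driver that ends up serializing the two queues, so async buys nothing.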

It has yet to be proven that async compute is drastically important for something like the Maxwell 2 architecture, or even that Maxwell 2 does not support it. Even the supposed "dev" does not know. We KNOW async compute helps GCN in a significant fashion, but who is to say async compute would help Maxwell 2 by the same amount (assuming it does not have it)? This thread has too much chicken-with-its-head-cut-off syndrome going on already; let's lessen it a bit.

And I do not think one can scoff at the DX12 hardware features NV has... just so sweepingly. Just like one cannot scoff at how much better NV's tessellation is.

Pardon my ignorance but what main feature are you talking about? I thought G-Sync monitors just eliminated screen-tearing by syncing your GPU to the monitor's refresh rate?

The author in the OP does try to explain how he tries to remove any bias from his findings. Not that anyone or anything can be unbiased, but it does sound like he makes a reasonable effort.

This pic should be on OP, imo.

OP can't use G-Sync yet since he still has an AMD GPU. But it seems like it's a pretty good monitor anyway.

And then, we are talking about how Nvidia lacks Asynchronous Compute when that's the commercial name AMD gives to that feature. For your info, Nvidia calls it Dynamic Parallelism, and it has been around for several generations with CUDA.

What does this prove, exactly?

This actually sounds like a terrible situation for AMD. With DX12, it seems like the company that is willing to spend the most money and manpower on dev support will have far and away the best performance on its cards. We've seen how this played out with DX10/11, and it should be obvious how it will play out with DX12. Nvidia will create middleware tuned for their cards and spend a lot of time assisting devs, their cards will have by far the best-optimized versions of these games, and AMD will not be able to correct the situation through driver updates. AMD just isn't going to spend the money and resources to provide the dev support Nvidia routinely does. Project Cars is a recent example of this going completely wrong for AMD.

This is before we even get into claims that one or the other company is "sabotaging" its rival through "help" provided to a dev.

People saying the developer is biased

Did anyone read the OP?

That we are talking about some biased developer trying too hard to favor one brand over the other. They are openly admitting that they are using one resource on AMD that they have completely shut down on Nvidia, because the latter doesn't even have the driver support unlocked for them. And even then, they can't surpass GTX performance.

Fud sprinkler.

Certainly I could see how one might see that we are working closer with one hardware vendor than the other, but the numbers don't really bear that out. Since we've started, I think we've had about 3 site visits from Nvidia, 3 from AMD, and 2 from Intel (and 0 from Microsoft, but they never come visit anyone ;( ). Nvidia was actually a far more active collaborator over the summer than AMD was. If you judged from email traffic and code check-ins, you'd draw the conclusion we were working closer with Nvidia rather than AMD ;) As you've pointed out, there does exist a marketing agreement between Stardock (our publisher) and AMD for Ashes.

So they worked with Nvidia, having as many site visits as AMD, and if you can trust his word they've been communicating more with Nvidia. That wouldn't surprise me, seeing as they were surprised at the performance drop under DX12 and would want to work out what they were breaking, or give Nvidia a chance to fix whatever was going wrong.

The publisher has a deal not the dev...

i think history allows ample scoffing. tessellation has been completely useless. and what vendor-specific features that don't work on consoles have ever not been useless?

From what I read on B3D, all DX12 cards will "support" it by executing the same work and producing the same result. Apparently Maxwell 2 does support this feature, but Nvidia has asked Oxide to turn it off because on those cards it made the benchmark run slower for some reason.

Wait, so Maxwell is fully DX12 compliant but does not have async compute like AMD cards have? Does this mean that PS4 is almost DX13 levels then due to having this feature as well as hUMA and a supercharged PC architecture which DX12 does not have? If so I can easily see PS4 competing with the next gen Xbox which will assumedly be based on DX13 further delaying the need for Sony to launch a successor. Woah. If this is true I can easily see PS4 lasting a full ten years. Highly interesting development, I can't wait to see what Naughty Dog and co do with this new found power.

Tessellation has never been useless; numerous games use and have used it. Every time it is used (even for simple terrain generation), it means less perf degradation on NV and basically comes "free".

From what I read on B3D, all DX12 cards will "support" it by executing the same work and producing the same result.

The question is whether there is actual native hardware implementation.

Tessellation has never been useless; numerous games use and have used it. Every time it is used (even for simple terrain generation), it means less perf degradation on NV and basically comes "free".

Likewise, consoles never supported a number of DX10 and DX11 features, and yet even PC ports grabbed and used these features for years. Some even had hugely different PC versions (Battlefield 3, the Crysis games, the Metro games). Even less-popular ports took advantage of non-console features (the Batman games, Red Faction: Guerrilla).

You are overreacting IMO and downplaying a lot of things.

WFT.

Look at you, trying to create a meme.

mind listing some particular examples where you think tessellation made a worthwhile improvement to the visuals?

What I get from this article is to not buy any Nvidia GPUs for a while. I mean, there was that DX12 benchmark article before that said about the same thing.

Look at this post:

The Metro games, Crysis 2 and 3, the Batman games, COD: Ghosts, Dragon Age: Inquisition, Lost Planet 2, Ryse, the HAWX games, Total War: Shogun 2, Watch Dogs (water).

And those are just the ones I can think of off the top of my head. It is used in tons of rendering pipelines, and in the ones above, significantly.

Nice to see my 7850 still has some life left in it; it will be interesting to see how this turns out.

This is all so interesting to read. I know current gen consoles are barely 2 years old but when do we typically start speculating/start hearing rumors of the power of next gen consoles?

What I get from this article is to not buy any Nvidia GPUs for a while. I mean, there was that DX12 benchmark article before that said about the same thing.

Do you have shares in NV or something?

That DX12 benchmark was the benchmark from those same guys.

It went from this:

to this:

Please, keep advising people not to buy a brand with a higher DX12 feature level because of some alpha-state benchmark.

Colors and that.

You're ignoring the fact that costs to Nvidia are going to be higher. AMD can keep putting out the same card... As more people switch to DX12, AMD's costs don't rise as much as Nvidia's costs. And as far as we know, Pascal is the same thing as Maxwell, save for the fabrication process and VRAM.

maybe we have different ideas of what constitutes a worthwhile visual increase. where is tessellation used in Ryse and DA, out of curiosity? i've personally gone around trying to find tessellation in C3, and in the very rare cases that you see it used, it might as well not even be there. the difference is minuscule.

(4 screenshots, tessellation on)

Please, keep advising people not to buy a brand with a higher DX12 feature level because of some alpha-state benchmark.

Colors and that.

LOL

So, is this situation similar to the whole GeForce FX debacle? Anyone remember that one?

Not seeing the difference in these shots, to be quite honest; the curves don't seem massively impacted by tessellation. Are there specific points in any of those images that look substantially worse? I mean, the usual candidates (perfect circles) like the weapon sights or the curves on that big rock seem identical. Crysis 3 is a bit of a pain in the arse for showing this anyway, as screen-space effects like CA are completely washing out details.

Hundreds if not thousands of surfaces in Crysis 3 use tessellation, and tessellation enables the vegetation to interact and look the way it does, the particles to look the way they do, the shadows to look the way they do, the water to look the way it does. It's on any and every rock in the game, and entire levels are made of rocks (the last level is basically all rocks and tessellation-rounded alien geometry, as is the second-to-last level and tons of other parts of other levels). I would say it is a big deal.

(4 screenshot pairs, tessellation off/on)

Not sure what you mean?

Seems like the best summation thus far:

All it really means is that those who have slower upgrade cycles are likely to be able to hang on to their AMD cards longer than those with NV equivalents.

This is more that NV tends to produce cards for the now, while AMD produces more future-proof products.