How Should a System Be Optimized?

In the "good old days" developers expended a lot of effort devising the most efficient ways to perform specific tasks on expensive hardware. The software engineering departments of many universities had teams of researchers constructing mathematical proofs about the latest algorithms to establish how quickly they would run. (These were the days of Tony Hoare and Donald Knuth, famed for their work on searching and sorting data.) This was, and still is, valuable work, and its results are applied in many pieces of commercial software. However, the performance of modern hardware far exceeds what was available in even the recent past (Moore's Law still applies), and costs continue to drop.

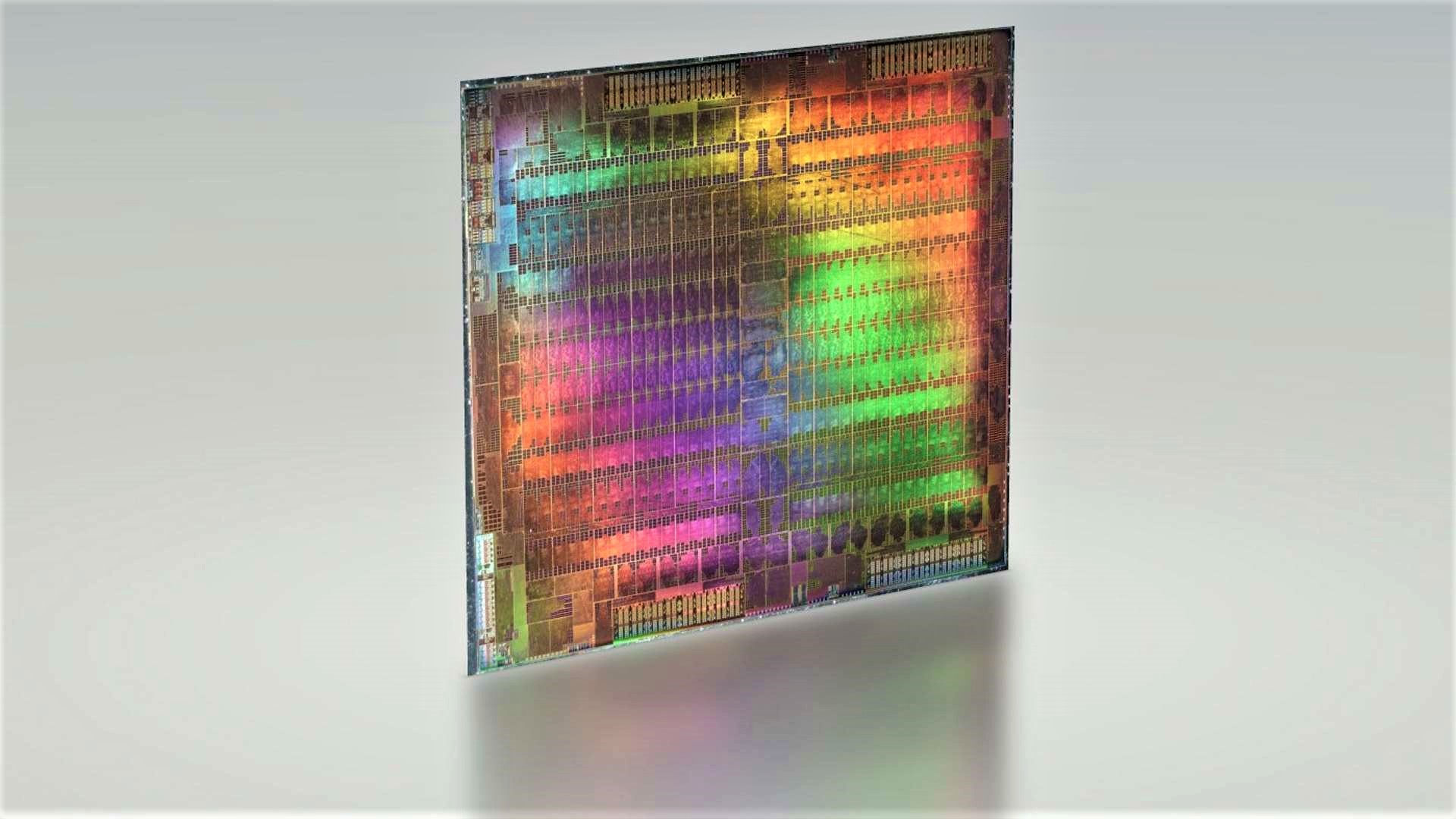

These days, optimization is much more likely to involve identifying the appropriate hardware to use as a platform, and then tuning the system for that hardware. It is far more cost-effective to add another 512 MB of memory to a computer running an application server than to pay a consultant or developer to rewrite part of the system. With the commoditization of software (how many sites write their own database server software these days rather than using a commercial package?), hardware selection is critical because the customer often does not have access to the underlying source code of the system.

That said, fast hardware is not an excuse for poor design and coding practice; if an application stalls while waiting for user input, or while data is locked in a database, it does not matter how fast the processor is. Software tools such as the Intel VTune™ Performance Analyzer should be used to identify, isolate, and rectify bottlenecks in in-house code, and software vendors often supply their own tools for monitoring and maintaining the performance of their systems.
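The point about stalled applications can be illustrated with a minimal Python sketch that compares wall-clock time with CPU time for two tasks. The names `measure`, `blocked_task`, and `busy_task` are hypothetical, and `time.sleep` is only a stand-in for waiting on a database lock or user input; this is not how a profiler such as VTune works, merely a rough way to see whether elapsed time is being spent computing or waiting.

```python
import time


def measure(task):
    """Run task() and return (wall_seconds, cpu_seconds) it consumed."""
    wall_start = time.perf_counter()   # elapsed real time
    cpu_start = time.process_time()    # CPU time used by this process
    task()
    wall = time.perf_counter() - wall_start
    cpu = time.process_time() - cpu_start
    return wall, cpu


def blocked_task():
    # Assumption: sleeping stands in for waiting on a lock or on user input.
    time.sleep(0.2)


def busy_task():
    # Stand-in for CPU-bound work that a faster processor would speed up.
    total = 0
    for i in range(200_000):
        total += i * i
    return total


wall_blocked, cpu_blocked = measure(blocked_task)
wall_busy, cpu_busy = measure(busy_task)

print(f"blocked: wall={wall_blocked:.3f}s cpu={cpu_blocked:.3f}s")
print(f"busy:    wall={wall_busy:.3f}s cpu={cpu_busy:.3f}s")
```

When wall-clock time is much larger than CPU time, as it should be for `blocked_task`, the process is mostly waiting, and a faster processor would do little to shorten the delay.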